How Image Quality Tuning Improves Machine Vision Results

Key Highlights

- Inconsistent image quality due to hardware issues like uneven lighting and improper gain settings can cause detection failures in vision systems.

- Flat-field calibration is a simple yet crucial process to correct pixel nonuniformity, significantly reducing false positives and missed defects.

- Thermal effects impact image quality and focus; testing and calibrating at operating temperatures ensures system stability and accuracy.

- Camera internal processing settings, such as noise reduction and sharpening, should be reviewed and adjusted to prevent data distortion that hampers detection.

- Early calibration and hardware checks are more effective than software tweaks after deployment, ensuring the system learns from accurate, consistent images.

Let me tell you about a project I worked on two years ago. A food manufacturer had a vision system checking for label defects on bottles. It worked okay for the first few weeks, then started missing defects and flagging good product at the same time. The team spent about six weeks tweaking the detection model. New training data, different thresholds, adjusted preprocessing. Nothing really worked.

When I got involved, the first thing I did was pull up the raw camera feed and just watch it for a while. The image quality was inconsistent. Some frames looked sharp and well-lit. Others were slightly soft, a bit dim in the corners. Not dramatically different, just enough that you noticed if you were looking for it.

Turned out, the LED ring light had some units that were slightly dimmer than others, and the camera gain was set too high trying to compensate. On top of that, nobody had run flat-field calibration. The six weeks of algorithm work was trying to solve a hardware and setup problem with software. Once we sorted the imaging side out, the original model worked fine with minor threshold adjustments.

I have seen this pattern enough times now that it is one of the first things I check. If a vision system is struggling, look at the images before you look at the code.

The Way Most Camera Setups Actually Happen

Here is how camera setup usually goes on a new project. You mount the camera, turn it on, adjust the exposure until the image does not look too dark or too bright, aim the light at the product, and then move on to building the detection logic. Maybe it takes an hour, maybe half a day if you are thorough.

That is totally normal. Projects have deadlines and the image looks good enough to start working with. But good enough for human eyes and good enough for a machine vision algorithm are pretty different things.

Your eyes are very forgiving. They adjust brightness differences automatically, ignore consistent background patterns, and fill in detail that is not there. An algorithm does none of that. It sees every pixel exactly as it is. A corner of the frame that is 15 percent dimmer than the center looks fine to you and causes systematic errors for the algorithm, every single frame.

Most of these problems stay hidden during lab testing because you are usually testing with the best samples, under controlled conditions, at a comfortable room temperature. They show up in production, after you have already built everything around the assumption that the imaging is fine.

Correcting Uneven Brightness Across the Frame

Every lens loses some light toward the edges. It is just physics. On top of that, the sensor itself has pixels that respond slightly differently from each other, which adds another layer of nonuniformity. Put them together and you almost always end up with an image where the middle is brighter than the sides, even when your lighting looks perfectly even.

On a well-designed system with good optics, this might be a few percent. On a system with a cheaper lens or uneven illumination, it can be 15 to 20 percent or more. That is a big deal if your inspection is comparing pixel values to decide whether something is a defect.
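If you want a number instead of an impression, one rough way to get it is to image a uniform target and compare the dimmest corner to the center. The sketch below assumes NumPy and a single-channel image; the function name, the patch size, and the synthetic test image are all made up for illustration, and a real measurement would use an actual capture of your flat target.

```python
import numpy as np

def corner_to_center_ratio(flat, patch=32):
    """Mean of the dimmest corner patch divided by the mean of the center patch."""
    flat = np.asarray(flat, dtype=np.float64)
    h, w = flat.shape
    cy, cx = h // 2, w // 2
    half = patch // 2
    center = flat[cy - half:cy + half, cx - half:cx + half].mean()
    corners = [
        flat[:patch, :patch].mean(),
        flat[:patch, -patch:].mean(),
        flat[-patch:, :patch].mean(),
        flat[-patch:, -patch:].mean(),
    ]
    return min(corners) / center

# Synthetic stand-in for a capture of a uniform target:
# brightness falls off by 20 percent at the extreme corners.
h, w = 480, 640
yy, xx = np.mgrid[0:h, 0:w]
r = np.hypot((yy - h / 2) / (h / 2), (xx - w / 2) / (w / 2)) / np.sqrt(2)
flat = 200.0 * (1.0 - 0.2 * r**2)

ratio = corner_to_center_ratio(flat)
print(f"corner/center = {ratio:.3f}, falloff = {(1 - ratio) * 100:.1f} percent")
```

A fixed gray-level threshold tuned at the center of the frame is effectively shifted by that falloff in the corners, which is where the systematic errors come from.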

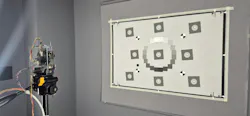

Flat-field calibration is the standard way to deal with this. You image a plain white or gray surface that you know is uniform, measure how the camera responds across every pixel, and use that to build a correction. After that, the camera output is much more consistent from center to edge. It takes maybe 30 minutes to do properly, and it removes a whole category of false positives and missed detections.
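The arithmetic behind that correction is short. Here is a minimal sketch, assuming NumPy, single-channel 8-bit frames, and that you also capture dark frames with the lens capped (the dark-frame step is a common addition, not something specific to any one camera). The function names are hypothetical, and many industrial cameras can do this correction on board instead.

```python
import numpy as np

def build_gain_map(flat_frames, dark_frames, eps=1e-6):
    """Average the calibration frames and derive a per-pixel gain map."""
    flat = np.mean(flat_frames, axis=0, dtype=np.float64)
    dark = np.mean(dark_frames, axis=0, dtype=np.float64)
    signal = np.clip(flat - dark, eps, None)  # avoid division by zero
    gain = signal.mean() / signal             # >1 where the image is dim
    return gain, dark

def apply_flat_field(raw, gain, dark, max_value=255):
    """Subtract the dark offset, then scale each pixel by its gain."""
    corrected = (np.asarray(raw, dtype=np.float64) - dark) * gain
    return np.clip(corrected, 0, max_value)

# Synthetic demo: a uniform gray scene seen through 20 percent corner falloff.
rng = np.random.default_rng(0)
h, w = 120, 160
yy, xx = np.mgrid[0:h, 0:w]
r2 = ((yy - h / 2) / (h / 2)) ** 2 + ((xx - w / 2) / (w / 2)) ** 2
vignette = 1.0 - 0.1 * r2
dark_level = 4.0

flat_frames = [200 * vignette + dark_level + rng.normal(0, 1, (h, w)) for _ in range(16)]
dark_frames = [dark_level + rng.normal(0, 1, (h, w)) for _ in range(16)]
gain, dark = build_gain_map(flat_frames, dark_frames)

raw = 120 * vignette + dark_level
corrected = apply_flat_field(raw, gain, dark)

print("relative spread before:", raw.std() / raw.mean())
print("relative spread after: ", corrected.std() / corrected.mean())
```

Averaging several flat and dark frames matters here: any noise left in the calibration frames gets baked into every corrected image afterward.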

The catch is you must redo it if your lighting changes. If you swap a bulb, reposition a light, or even if the lights age and dim slightly over time, your calibration is no longer accurate. So, document when and how you did it. Treat it as a maintenance item, not a one-time setup step.

Temperature Changes More Than You Think

Most machine vision systems get tested at room temperature and then installed on a production floor that can get significantly warmer. Enclosures heat up. Equipment nearby radiates heat. A line running all day in summer in a non-air-conditioned building is a different thermal environment than your lab bench.

Sensors get noisier as they warm up. The effect is not dramatic, but it is real. A clean image at 22 degrees Celsius starts showing more random bright pixels at 38 degrees Celsius. Lens barrels also expand with heat, which shifts the focus slightly. On a high-resolution system trying to detect a 0.1 mm scratch, even a small focus shift makes the image visibly softer.

The practical advice here is to test your system at its actual operating temperature, not just when you first turn it on. Run the line for a few hours, let everything stabilize, then check your image quality. If it has drifted, figure out whether you need better thermal management, a recalibration at operating temperature, or some kind of periodic focus check during the shift.
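A periodic focus check does not have to be elaborate. One common proxy for sharpness is the variance of the Laplacian of a fixed reference target: it drops as the image gets softer. The sketch below assumes NumPy and a single-channel image; the box blur only stands in for thermal defocus, and the 0.8 alert fraction is an arbitrary placeholder that you would set from your own measurements.

```python
import numpy as np

def laplacian_variance(image):
    """Variance of a 4-neighbor Laplacian; higher means sharper."""
    img = np.asarray(image, dtype=np.float64)
    lap = (img[:-2, 1:-1] + img[2:, 1:-1] +
           img[1:-1, :-2] + img[1:-1, 2:] -
           4.0 * img[1:-1, 1:-1])
    return float(lap.var())

def box_blur(image, passes=1):
    """3x3 box blur, used here only to simulate a soft, defocused image."""
    img = np.asarray(image, dtype=np.float64)
    rows, cols = img.shape
    for _ in range(passes):
        padded = np.pad(img, 1, mode="edge")
        img = sum(padded[dy:dy + rows, dx:dx + cols]
                  for dy in range(3) for dx in range(3)) / 9.0
    return img

# Checkerboard as a stand-in for a fixed reference target in the field of view.
yy, xx = np.mgrid[0:128, 0:128]
target = (((yy // 8) + (xx // 8)) % 2) * 255.0

baseline_score = laplacian_variance(target)                    # cold start
warm_score = laplacian_variance(box_blur(target, passes=2))    # after warm-up

ALERT_FRACTION = 0.8  # hypothetical: flag if sharpness falls below 80 percent of baseline
needs_focus_check = warm_score < ALERT_FRACTION * baseline_score
print(baseline_score, warm_score, needs_focus_check)
```

The score only means something when it is compared against the same target under the same lighting, so record the baseline once the system has reached operating temperature.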

What Professional Image Tuning Services Actually Change

When camera setup is done as part of proper image tuning services, the difference is that decisions are based on measurements instead of visual judgment. You capture test targets and measure signal-to-noise ratio at the gain settings you plan to use. You run spatial frequency response (SFR) tests at multiple points across the frame to see where resolution drops off. You quantify the brightness nonuniformity before and after calibration.
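As an example of what one of those measurements can look like, here is a rough sketch of a temporal signal-to-noise estimate from a stack of frames of a static, evenly lit target. It assumes NumPy; the function name is hypothetical, and the two simulated "gain settings" are just Gaussian noise at different levels, not data from a real camera.

```python
import numpy as np

def temporal_snr_db(frames):
    """SNR in dB: mean signal over mean per-pixel temporal noise."""
    stack = np.asarray(frames, dtype=np.float64)
    mean_signal = stack.mean(axis=0).mean()
    noise = stack.std(axis=0, ddof=1).mean()  # frame-to-frame variation per pixel
    return float(20.0 * np.log10(mean_signal / noise))

# Simulated captures: same brightness, but the high-gain setting is noisier.
rng = np.random.default_rng(1)
shape = (32, 64, 64)  # 32 frames of 64x64 pixels
low_gain_frames = 128.0 + rng.normal(0.0, 1.5, shape)
high_gain_frames = 128.0 + rng.normal(0.0, 6.0, shape)

snr_low = temporal_snr_db(low_gain_frames)
snr_high = temporal_snr_db(high_gain_frames)
print(f"low gain:  {snr_low:.1f} dB")
print(f"high gain: {snr_high:.1f} dB")
```

Using the frame-to-frame variation at each pixel keeps fixed patterns such as lens falloff out of the noise figure, so the number reflects the gain setting rather than the optics.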

There is also a step that surprises a lot of people, which is reviewing the camera's internal processing settings. Most industrial cameras still ship with consumer-style image processing turned on by default. Noise smoothing that blurs fine edges. Sharpening that adds false contrast around transitions. These things make the image look nicer on a screen, but they are bad for machine vision because they change the actual pixel data in ways that are hard to account for in your algorithm. Turning them off or reducing them significantly usually improves results.
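To see why sharpening is a problem, it helps to look at what it does to a clean edge. The sketch below assumes NumPy and uses a basic one-dimensional unsharp mask as a stand-in for in-camera sharpening. Real cameras use their own proprietary filters, so treat this as an illustration of the effect, not of any particular camera.

```python
import numpy as np

def unsharp_mask_1d(signal, amount=1.0):
    """Basic unsharp mask: add back the difference from a 3-tap blur."""
    sig = np.asarray(signal, dtype=np.float64)
    padded = np.pad(sig, 1, mode="edge")
    blurred = np.convolve(padded, np.ones(3) / 3.0, mode="valid")
    return sig + amount * (sig - blurred)

# One row of pixels crossing a clean edge from gray level 50 to 200.
edge = np.array([50.0] * 6 + [200.0] * 6)
sharpened = unsharp_mask_1d(edge)

print(np.round(edge).astype(int))
print(np.round(sharpened).astype(int))
# The pixels next to the edge undershoot to about 0 and overshoot to about 250,
# values that were never in the scene.
```

An algorithm comparing pixel values near that edge now sees a dark line and a bright line that do not exist on the product.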

And when all of this is written down with the actual measured values, you have something you can use later. When the system on line 3 starts behaving differently from lines 1 and 2, you can compare the current camera configuration against the baseline. When you add a fourth line six months later, you can replicate the setup from documentation rather than doing it by eye again and hoping it matches. That is exactly why camera image quality (IQ) tuning isn’t optional.
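Once the baseline is stored as data, that comparison can be a few lines of code. This is only a sketch in plain Python: every key name, value, and tolerance below is a hypothetical placeholder, not a setting from any real camera SDK.

```python
def is_number(value):
    """True for ints and floats, but not booleans."""
    return isinstance(value, (int, float)) and not isinstance(value, bool)

def compare_to_baseline(baseline, current, tolerances):
    """Return {key: (expected, actual)} for every setting that has drifted."""
    drift = {}
    for key, expected in baseline.items():
        actual = current.get(key)
        if is_number(expected) and is_number(actual):
            if abs(actual - expected) > tolerances.get(key, 0.0):
                drift[key] = (expected, actual)
        elif actual != expected:
            drift[key] = (expected, actual)
    return drift

# Hypothetical documented baseline from commissioning.
baseline = {
    "gain_db": 6.0,
    "exposure_us": 800,
    "sharpening_enabled": False,
    "snr_db": 38.0,
    "corner_falloff_pct": 3.0,
}
# Hypothetical readings taken today on the line that is misbehaving.
line_3 = {
    "gain_db": 12.0,
    "exposure_us": 800,
    "sharpening_enabled": True,
    "snr_db": 31.5,
    "corner_falloff_pct": 3.4,
}
tolerances = {"gain_db": 0.5, "snr_db": 2.0, "corner_falloff_pct": 1.0}

drift = compare_to_baseline(baseline, line_3, tolerances)
for key, (expected, actual) in drift.items():
    print(f"{key}: baseline {expected}, now {actual}")
```

Keeping measured values such as SNR and corner falloff in the same record as the settings means a drift report can show both what changed and what it did to the image.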

Do This Early, Not at the End

The best time to sort out your imaging is before you collect training data and before you start tuning your algorithm. If your training images come from a poorly calibrated camera, your model learns the wrong thing. It learns to work around the imaging problems rather than actually detecting the features you care about. Then when the imaging changes slightly, the model breaks in ways that are hard to diagnose.

Good imaging does not guarantee a good vision system. But bad imaging makes everything else harder. Fix the camera first, then build the rest of the system on top of images you can trust. That is still the most reliable approach I know.

About the Author

Rutvij Trivedi

Co-founder and Director

Rutvij Trivedi is co-founder and director at Silicon Signals Pvt. Ltd. He is an embedded systems architect with more than 20 years of expertise in embedded product engineering and system development. Trivedi has led Fortune 500 programs across multiple industries and contributed upstream to the Linux kernel and Zephyr OS multimedia projects.