Finding the optimal hardware for deep learning inference in machine vision

By Mike Fussell

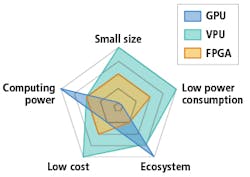

When deploying deep learning techniques in machine vision applications, hardware is required for inference. Choices include graphics processing units (GPUs), field programmable gate arrays (FPGAs), and vision processing units (VPUs). Each has advantages and limitations, making hardware selection a key consideration in planning a project.

Thanks to their massively parallel architecture, GPUs are well suited to accelerating deep learning inference. Companies like NVIDIA (Santa Clara, CA, USA; www.nvidia.com) have invested heavily in the development of tools for enabling deep learning and inference processes to run on the company’s CUDA (Compute Unified Device Architecture) cores. Google’s (Mountain View, CA, USA; www.google.com) popular TensorFlow software offers GPU support targeting NVIDIA’s CUDA-enabled GPUs.

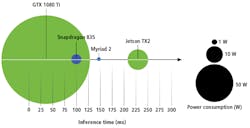

Some GPUs have thousands of processor cores and target computationally demanding tasks such as guidance for autonomous vehicles and training networks for deployment on less powerful hardware. GPUs typically draw considerable power, however. NVIDIA’s GeForce RTX 2080, for example, requires 225W, while the Jetson TX2 module consumes up to 15W. GPUs can also be expensive: the RTX 2080 costs $800 USD.

Widely deployed in machine vision applications, and the basis of many machine vision cameras and frame grabbers, FPGAs offer a middle ground between the flexibility and programmability of software running on a general-purpose CPU and the speed and efficiency of a custom-designed application-specific integrated circuit (ASIC). An Intel (Santa Clara, CA, USA; www.intel.com) Arria 10 FPGA-based PCIe Vision Accelerator card consumes up to 60W of power and costs $1500 USD.

One limitation of using FPGAs in machine vision is that FPGA programming is a highly specialized skill. Developing neural networks for FPGAs is also complex and time-consuming. While developers can use third-party tools to simplify these tasks, such tools are often expensive and lock users into closed ecosystems of proprietary technology.

A newer technology, VPUs are a type of System-on-Chip (SoC) designed for the acquisition and interpretation of visual information. VPUs target mobile applications and are optimized for small size and power efficiency. Intel’s Movidius Myriad 2 VPU, for example, can interface with a CMOS image sensor, pre-process captured image data, pass the resulting images through a pre-trained neural network, and output a result while consuming less than 1W of power. Intel’s VPUs do this by combining traditional CPU cores and vector processing cores to accelerate the highly branching logic typical of deep neural networks.

Due to a power-efficient design (nominal 1W power envelope), VPUs are particularly suited to embedded applications, such as handheld, mobile, or drone-mounted devices requiring long battery life. Though less powerful than GPUs, VPUs are small enough to be designed into extremely compact packages. For example, FLIR Machine Vision’s (Richmond, BC, Canada; www.flir.com/mv) Firefly DL camera offers an embedded Myriad 2 VPU and is less than half the volume of a standard ‘ice cube’ machine vision camera.

Intel’s open ecosystem for Myriad VPUs enables developers to use the deep learning framework and toolchain of their choice. Additionally, Intel’s Neural Compute Stick, which is based on the company’s latest VPU, the Intel Movidius Myriad X, offers a USB 3.0 interface and costs less than $80.

Differences in architecture between GPUs, FPGAs, and VPUs make performance comparisons in terms of floating-point operations per second (FLOPS) of little practical value. Comparing published inference time data is a useful starting point, but inference time alone can be misleading. While the inference time on a single frame may be shorter on the Myriad 2 than on the Jetson TX2, the TX2 processes multiple frames at once, yielding greater throughput. Additionally, the TX2 can perform other computing tasks simultaneously, while the Myriad 2 cannot.
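The gap between single-frame inference time and throughput can be made concrete with a little arithmetic. The Python sketch below is illustrative only: the function name `throughput_fps` and the latency and batch-size figures are assumptions chosen for the example, not measurements of the Myriad 2, the Jetson TX2, or any other device.

```python
def throughput_fps(latency_s: float, batch_size: int = 1) -> float:
    """Frames per second when `batch_size` frames complete every `latency_s` seconds."""
    if latency_s <= 0 or batch_size < 1:
        raise ValueError("latency_s must be positive and batch_size at least 1")
    return batch_size / latency_s


# Hypothetical figures, for illustration only (not benchmark results).
# Device A returns a single frame quickly but handles one frame at a time.
device_a_fps = throughput_fps(latency_s=0.040, batch_size=1)
# Device B takes longer per inference pass but processes eight frames at once.
device_b_fps = throughput_fps(latency_s=0.100, batch_size=8)

print(f"Device A: {device_a_fps:.1f} frames/s")
print(f"Device B: {device_b_fps:.1f} frames/s")
```

With these assumed numbers, the device with the longer inference time delivers roughly three times the throughput, which is why a single published latency figure rarely settles a hardware choice.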

Ultimately, there is no easy method for comparing available options aside from testing. Prior to selecting the hardware for an inference-enabled machine vision system, designers should conduct tests to determine the accuracy and speed required for their application. These parameters determine the characteristics of the neural network that is required and the hardware onto which it can be deployed.
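One way to structure such a speed test is a small timing harness that is run unchanged against each candidate device. The sketch below is a minimal example under stated assumptions: `benchmark` and `fake_infer` are hypothetical names, and `fake_infer` is a stand-in for whatever inference call a given vendor's toolchain provides. It measures speed only; accuracy would need a separate check against labeled images.

```python
import statistics
import time
from typing import Callable, Sequence


def benchmark(infer: Callable[[object], object],
              frames: Sequence[object],
              warmup: int = 3) -> dict:
    """Time `infer` over `frames` and report latency and throughput."""
    if not frames:
        raise ValueError("frames must not be empty")

    # Warm-up passes so one-time setup costs do not skew the timings.
    for frame in frames[:warmup]:
        infer(frame)

    latencies = []
    start = time.perf_counter()
    for frame in frames:
        t0 = time.perf_counter()
        infer(frame)
        latencies.append(time.perf_counter() - t0)
    elapsed = time.perf_counter() - start

    return {
        "median_latency_s": statistics.median(latencies),
        "max_latency_s": max(latencies),
        "throughput_fps": len(frames) / elapsed,
    }


def fake_infer(frame):
    """Placeholder workload (assumption): replace with the real inference call."""
    return sum(frame)


if __name__ == "__main__":
    test_frames = [list(range(1000)) for _ in range(50)]
    print(benchmark(fake_infer, test_frames))
```

Reporting the median and maximum latency alongside throughput helps show whether a device meets a per-frame deadline as well as an overall frame rate.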

Mike Fussell is a Product Marketing Manager at FLIR Machine Vision.