System embeds laser, camera, and computer

Andrew Wilson, Editor

For a number of years, structured-light techniques have been used to extract depth information from scenes. To build such systems, developers project a laser line onto the scene. Light reflected from the object is captured by a solid-state camera, and depth is computed using simple triangulation. In systems that digitize stationary 3-D objects, the structured light is moved across the object within the camera's field of view (FOV) while the camera remains stationary. Where the objects are in motion, such as on a conveyor belt, the laser line is projected across the object to illuminate a cross section. As the object moves through the line, the reflected laser light can be used to analyze its surface and volume characteristics. This technique has found wide acceptance in applications ranging from inspecting the surface of automobile brake pads to determining how fish-slicing machines can be optimized to make the best cut of filleted fish before vacuum-packing (see Vision Systems Design, February 2005, p. 13).
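The triangulation step can be illustrated with a minimal Python sketch. It assumes a simplified geometry that the article does not specify: the camera looks straight down, the laser is inclined at a known angle from the camera's optical axis, and a raised surface shifts the laser line toward lower row numbers in the image. The function names, the `baseline_row` calibration value, and the `mm_per_pixel` scale are all hypothetical.

```python
import math


def laser_row_per_column(image):
    """For each image column, return the row index of the brightest pixel.

    `image` is a list of rows, each row a list of gray-scale intensities.
    The brightest pixel in a column is taken as the laser-line position.
    """
    rows = len(image)
    cols = len(image[0])
    return [max(range(rows), key=lambda r: image[r][c]) for c in range(cols)]


def height_profile(image, baseline_row, mm_per_pixel, laser_angle_deg):
    """Convert laser-line displacement into one height profile (in mm).

    Assumed geometry (not from the article): a surface of height h shifts
    the line by h * tan(angle) on the object, so h = shift / tan(angle).
    `baseline_row` is the row where the line falls on the empty conveyor.
    """
    tan_angle = math.tan(math.radians(laser_angle_deg))
    return [
        (baseline_row - row) * mm_per_pixel / tan_angle
        for row in laser_row_per_column(image)
    ]


# Tiny synthetic frame: the line sits on row 4 except in the middle
# column, where a raised object moves it up to row 2.
frame = [
    [0, 0, 0],
    [0, 0, 0],
    [0, 255, 0],
    [0, 0, 0],
    [255, 0, 255],
]
print(height_profile(frame, baseline_row=4, mm_per_pixel=0.5, laser_angle_deg=45.0))
```

Stacking one such profile per frame as the object moves through the line yields the surface map from which volume and shape features are measured. A production system would typically locate the line with subpixel accuracy instead of taking a simple per-column maximum.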

“In the past,” says Karl Gunnarsson, business development manager at SICK (Minneapolis, MN, USA; www.sickusa.com), “system integrators needed to select the correct structured-light system, camera, computer, and I/O systems from OEM components. Not only was this time-consuming, it required an intimate knowledge of the 3-D resolution needed.”

Resolution along the conveyor will depend on the velocity of the conveyor belt and the frame rate of the camera. “For a conveyor moving at 1 m/s and a camera capable of 5000 profiles/s, 5 samples/mm can be achieved.” This corresponds to one profile for every 0.2 mm of belt travel. To compute height or depth resolution, the FOV of the camera must be known. This will depend on the imager format, the focal length, and the focus distance of the camera. If a camera is placed above the conveyor and has a 30-in. FOV, for example, it will be capable of resolving height differences down to 0.01 in.
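The along-conveyor figure follows directly from dividing the profile rate by the belt speed. The short Python sketch below reproduces the arithmetic for the quoted example; the function names are illustrative only.

```python
def profiles_per_mm(profile_rate_hz, belt_speed_m_per_s):
    """Profiles captured per millimeter of belt travel."""
    belt_speed_mm_per_s = belt_speed_m_per_s * 1000.0
    return profile_rate_hz / belt_speed_mm_per_s


def profile_spacing_mm(profile_rate_hz, belt_speed_m_per_s):
    """Distance the belt moves between two consecutive profiles, in mm."""
    return 1.0 / profiles_per_mm(profile_rate_hz, belt_speed_m_per_s)


# Values quoted in the article: 5000 profiles/s at 1 m/s.
density = profiles_per_mm(5000.0, 1.0)
spacing = profile_spacing_mm(5000.0, 1.0)
print(f"{density:.1f} profiles/mm, one every {spacing:.2f} mm")
```

Doubling the belt speed at the same profile rate halves the sampling density, so the required resolution along the direction of travel sets an upper limit on conveyor speed for a given camera.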

Once images are captured, system integrators are still faced with deciding how to process them. In many systems, low-cost smart cameras are used. However, because many of these contain FPGA- or DSP-based processors, specialized code must be written to perform image analysis. Alternatively, PC-based frame grabbers can be used and images processed using off-the-shelf software-development kits. In either case, the developer is forced to produce specialized code for image analysis and process control.

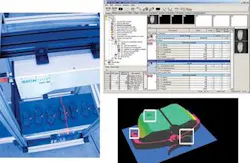

To alleviate the integration problem faced when building 3-D measurement and control systems, SICK|IVP has developed the IVC-3D, a system that the company claims is the world’s first 3-D smart camera. “By combining lighting, camera, and computer into one, the IVC-3D can detect and compute 3-D geometrical features of objects, as well as control an external machine, robot, or conveyor without the use of an external PC,” says Gunnarsson.

At present, the IVC-3D is available in two versions that can image 150 × 50- or 600 × 200-mm typical measurement areas. To image the 150 × 50-mm area, a structured laser light with a 38° fan angle from StockerYale (Salem, NH, USA; www.stockeryale.com) is incorporated into the system. For 600 × 200 mm, a similar laser with a 68° fan angle is used. Because the FOV is a trapezoid, each version can also image larger objects of smaller width, depending on the fan angle. To capture images, the system incorporates a camera based on a CMOS imager originally developed by Integrated Vision Products (IVP; Linköping, Sweden), now part of SICK. To control both the illumination and camera, the system incorporates an embedded XScale-based CPU that provides I/O, serial, and Ethernet interfaces to the 3-D camera system.
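The relationship between fan angle and line width can be sketched with basic trigonometry. The Python example below assumes an idealized laser whose line spreads symmetrically from a point source, so that the line length at a given distance is 2 × distance × tan(fan angle / 2). The standoff distances it prints are purely geometric estimates under that assumption, not IVC-3D specifications, and the function names are hypothetical.

```python
import math


def line_width_mm(distance_mm, fan_angle_deg):
    """Length of the projected laser line at a given distance from the source."""
    half_angle = math.radians(fan_angle_deg) / 2.0
    return 2.0 * distance_mm * math.tan(half_angle)


def standoff_for_width_mm(width_mm, fan_angle_deg):
    """Distance at which an idealized fan produces a line of the given length."""
    half_angle = math.radians(fan_angle_deg) / 2.0
    return width_mm / (2.0 * math.tan(half_angle))


# Widths and fan angles quoted in the article; the distances are
# estimates from the idealized model only.
for width_mm, fan_deg in [(150.0, 38.0), (600.0, 68.0)]:
    distance = standoff_for_width_mm(width_mm, fan_deg)
    print(f"{fan_deg:.0f} deg fan covers {width_mm:.0f} mm at about {distance:.0f} mm")
```

Because the line grows wider with distance from the laser, the usable width is greater at the conveyor surface than at the top of a tall object, which is one way to picture the trapezoidal measurement area.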

To program the unit, SICK has developed a menu-driven image-processing software package called IVC Studio that runs in a browser window on the IVC-3D. This software is accessed from a PC over the camera’s Ethernet interface. Camera set-up and inspection tasks can then be arranged by stringing together a number of different tools in a set-up window (see figure). Parameters for each tool are then displayed in another window and can be set either by movement of the mouse or by entering values in parameter fields. After programming, the IVC-3D can operate in a stand-alone mode without the use of a PC.

“Because the IVC-3D toolbox contains tools for image processing on standard gray-scale imaging and special tools used in 3-D measurements, systems that require 3-D measurement and control can be set up rapidly,” says Gunnarsson. “With a price of $14,000 in single quantities, the IVC-3D may at first appear to be more expensive than systems based on off-the-shelf components. However, because of the several months of development time required to build systems using stand-alone components, the integrated solution provided by the IVC-3D is, in fact, more reliable, accurate, and cost-effective.”