Virtual cameras provide multiprocessing capabilities

One of the advantages of CMOS imagers is the ability to address regions of interest (ROIs) within the sensor. In high-speed image processing, this capability has led to cameras that can operate at different resolutions and frame rates. In low-light-level applications, varying the integration time of such imagers has allowed cameras to offer very large dynamic ranges, a factor that is especially important in embedded automotive applications.
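Varying the integration time extends dynamic range because a short exposure records bright areas that saturate a long one. One common way to exploit this is to merge a long and a short exposure of the same pixels, as in the minimal Python sketch here. It illustrates the general technique only and does not describe how any particular camera does it; the 10-bit full-scale value of 1023 and the exposure times are illustrative assumptions.

```python
import math

FULL_SCALE = 1023  # assumed 10-bit output; illustrative only


def dr_extension_db(t_long: float, t_short: float) -> float:
    """Extra dynamic range, in dB, gained by pairing a long and a short exposure."""
    return 20.0 * math.log10(t_long / t_short)


def merge_exposures(long_px, short_px, t_long, t_short, full_scale=FULL_SCALE):
    """Merge two exposures of the same pixels into one linear radiance estimate.

    Unsaturated long-exposure values are kept as-is; where the long exposure
    has clipped, the short-exposure value is scaled up by the exposure ratio.
    """
    ratio = t_long / t_short
    merged = []
    for lp, sp in zip(long_px, short_px):
        merged.append(sp * ratio if lp >= full_scale else float(lp))
    return merged


if __name__ == "__main__":
    # 10 ms vs 0.1 ms: a 100x ratio, roughly 40 dB of added range
    print(dr_extension_db(10e-3, 0.1e-3))
    # The second pixel clips in the long exposure and is rebuilt from the short one
    print(merge_exposures([200, 1023], [2, 500], 10e-3, 0.1e-3))
```

A 100:1 exposure ratio adds about 40 dB, which is why modest changes in integration time can stretch the usable range considerably.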

At Vision 2004 (Stuttgart, Germany), Kamera Werk Dresden (Dresden, Germany; www.kamera-werk-dresden.de) introduced a new concept that exploits these advantages in what the company calls Virtual Camera. According to Peter Hoffmeister, managing director, Virtual Camera technology allows different regions of the full frame of a single camera to be captured simultaneously, with the exposure time, ROI position, and trigger rate of each region controlled independently. Positioning ROIs of different sizes, or overlapping ROIs, within the captured image allows different operations to be performed on data from different parts of the image.
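The per-region settings described here map naturally onto a small record per virtual camera. The Python sketch that follows is hypothetical: the `VirtualCamera` class, its field names, and the helper functions are illustrative assumptions, not Kamera Werk Dresden's Windows API. It checks that each ROI fits inside the 1280 × 1024 frame and reports which ROIs overlap, since overlap is permitted but affects which pixels feed which operation.

```python
from dataclasses import dataclass
from typing import List, Tuple

SENSOR_WIDTH, SENSOR_HEIGHT = 1280, 1024  # full frame of the Ibis 5 sensor


@dataclass(frozen=True)
class VirtualCamera:
    """Hypothetical per-ROI settings; not the Kamera Werk Dresden API."""
    name: str
    x: int              # left edge of the ROI, in pixels
    y: int              # top edge of the ROI, in pixels
    width: int
    height: int
    exposure_us: float  # exposure time for this ROI only
    trigger_hz: float   # trigger rate for this ROI only

    def fits_sensor(self) -> bool:
        return (self.x >= 0 and self.y >= 0 and self.width > 0 and self.height > 0
                and self.x + self.width <= SENSOR_WIDTH
                and self.y + self.height <= SENSOR_HEIGHT)

    def overlaps(self, other: "VirtualCamera") -> bool:
        # Two axis-aligned rectangles overlap unless one lies wholly beside or above the other
        return not (self.x + self.width <= other.x or other.x + other.width <= self.x
                    or self.y + self.height <= other.y or other.y + other.height <= self.y)


def out_of_bounds(cams: List[VirtualCamera]) -> List[str]:
    """Names of ROIs that do not fit inside the sensor."""
    return [c.name for c in cams if not c.fits_sensor()]


def overlapping_pairs(cams: List[VirtualCamera]) -> List[Tuple[str, str]]:
    """Pairs of ROIs that share pixels (allowed, but worth knowing about)."""
    return [(a.name, b.name)
            for i, a in enumerate(cams) for b in cams[i + 1:] if a.overlaps(b)]


if __name__ == "__main__":
    barcode = VirtualCamera("barcode", 0, 0, 400, 200, exposure_us=500, trigger_hz=50)
    defects = VirtualCamera("defects", 300, 100, 800, 600, exposure_us=5000, trigger_hz=10)
    print(out_of_bounds([barcode, defects]))      # []
    print(overlapping_pairs([barcode, defects]))  # [('barcode', 'defects')]
```

Keeping exposure and trigger rate inside each record, rather than as global camera settings, mirrors the idea that every region behaves as its own camera.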

By controlling the exposure time of each region individually, the camera acts as a set of independent “virtual cameras,” each of which can have its own linear or logarithmic response characteristic. This is especially useful in machine-vision inspection systems, where image quality may vary dramatically across a single frame.
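To see why a per-region choice of exposure and response helps when brightness varies across a frame, consider the toy pixel models in the following Python sketch. Both curves, their parameters, and the irradiance units are arbitrary assumptions for illustration; they are not the Loglux i5's actual response.

```python
import math

FULL_SCALE = 1023.0  # assumed 10-bit output range


def linear_response(irradiance: float, exposure_s: float, gain: float = 1.0) -> float:
    """Toy linear pixel: output proportional to irradiance x exposure, clipped at full scale."""
    return min(FULL_SCALE, gain * irradiance * exposure_s)


def log_response(irradiance: float, scale: float = 100.0, i0: float = 1.0) -> float:
    """Toy logarithmic pixel: output grows with log10 of irradiance (no exposure term)."""
    return min(FULL_SCALE, scale * math.log10(1.0 + irradiance / i0))


def region_output(mode: str, irradiance: float, exposure_s: float) -> float:
    """Evaluate one virtual camera's response; mode is 'linear' or 'log'."""
    if mode == "linear":
        return linear_response(irradiance, exposure_s)
    if mode == "log":
        return log_response(irradiance)
    raise ValueError(f"unknown mode: {mode}")


if __name__ == "__main__":
    # A bright region (arbitrary units) clips with a long linear exposure...
    print(region_output("linear", 1e6, 10e-3))
    # ...but stays in range with a short linear exposure or a log response
    print(region_output("linear", 1e6, 0.1e-3))
    print(region_output("log", 1e6, 10e-3))
```

In a single-camera setup, one exposure has to serve the whole frame; with independent regions, a bright area can use a short exposure or a logarithmic curve while a dim area keeps a long linear exposure.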

To show the effectiveness of the approach, Kamera Werk Dresden ran its Virtual Camera technology on its Loglux i5 Camera Link camera. This camera incorporates an Ibis 5 1280 × 1024 sensor from FillFactory (Mechelen, Belgium; www.fillfactory.com), a Spartan IIe FPGA from Xilinx (San Jose, CA, USA; www.xilinx.com), and a microcontroller for booting and controlling camera parameters.

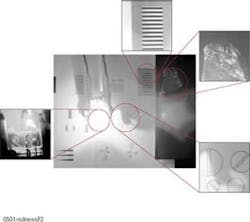

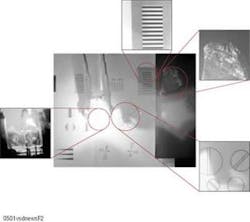

At the Stuttgart show, Kamera Werk Dresden showed how, by using its Windows-based API, four independent regions of the 1280 × 1024 image could be individually addressed. Using a PCI-1428 Camera Link frame grabber from National Instruments (NI; Austin, TX, USA; www.ni.com), four separate images from the Loglux were displayed on a PC monitor.

“Because most frame grabbers, including the NI PCI-1428, define only a single sync signal,” says Hoffmeister, “they cannot be used to set individual sync signals and thus cannot be used to set different ROIs within a single frame.” For this reason, the system is currently limited to window quadrants within the 1280 × 1024 frame of the camera. Hoffmeister admits that processing such image data is also a bottleneck at present. “After images are captured from the different regions,” he says, “they may need to be processed using different algorithms.”
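With a single sync signal, the host receives one full frame, so fixed quadrants are the simplest windows it can recover. The Python sketch that follows assumes, for illustration, that host software splits each frame into four equal quadrants after capture; the article does not describe the host-side code, and the function names are hypothetical. Frames are stored as lists of rows to keep the example dependency-free.

```python
from typing import Dict, List, Tuple

Frame = List[List[int]]


def quadrant_rois(width: int = 1280, height: int = 1024) -> List[Tuple[str, int, int, int, int]]:
    """Return the four quadrant windows (name, x, y, w, h) of a width x height frame."""
    hw, hh = width // 2, height // 2
    return [
        ("top_left", 0, 0, hw, hh),
        ("top_right", hw, 0, width - hw, hh),
        ("bottom_left", 0, hh, hw, height - hh),
        ("bottom_right", hw, hh, width - hw, height - hh),
    ]


def crop(frame: Frame, x: int, y: int, w: int, h: int) -> Frame:
    """Cut a w x h window with top-left corner (x, y) out of a frame."""
    return [row[x:x + w] for row in frame[y:y + h]]


def split_quadrants(frame: Frame) -> Dict[str, Frame]:
    """Split one full frame into its four quadrant sub-images."""
    height, width = len(frame), len(frame[0])
    return {name: crop(frame, x, y, w, h) for name, x, y, w, h in quadrant_rois(width, height)}


if __name__ == "__main__":
    print(quadrant_rois())  # four 640 x 512 windows for the 1280 x 1024 frame
    tiny = [[r * 4 + c for c in range(4)] for r in range(4)]
    print(split_quadrants(tiny)["top_right"])  # [[2, 3], [6, 7]]
```

Arbitrary, independently triggered ROIs would need per-region sync information from the grabber; fixed quadrants sidestep that because their geometry is known in advance.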

In a machine-vision system, for example, one virtual camera may be used to read a barcode within an image, while another looks for the presence or absence of defects. At present, the FPGA within the camera drives the sensor to capture image data as frames and outputs the data over the Camera Link interface. Once the images reach the PC, they must be processed in sequence.
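Sequential processing means the host runs each region's algorithm one after another. The Python sketch that follows illustrates that dispatch pattern with two deliberately simple stand-in algorithms: counting bar edges along the middle row (a hypothetical barcode pre-check, not a real decoder) and counting dark pixels (a hypothetical defect check). The thresholds and function names are assumptions.

```python
from typing import Callable, Dict, List

Image = List[List[int]]


def count_bar_edges(img: Image, threshold: int = 128) -> int:
    """Hypothetical barcode pre-check: count dark/light transitions along the middle row."""
    row = img[len(img) // 2]
    bits = [px >= threshold for px in row]
    return sum(1 for a, b in zip(bits, bits[1:]) if a != b)


def count_dark_pixels(img: Image, threshold: int = 50) -> int:
    """Hypothetical defect check: count pixels darker than a threshold."""
    return sum(1 for row in img for px in row if px < threshold)


def process_in_sequence(rois: Dict[str, Image],
                        algorithms: Dict[str, Callable[[Image], int]]) -> Dict[str, int]:
    """Run each ROI's own algorithm one after another on a single host thread."""
    results = {}
    for name, img in rois.items():
        results[name] = algorithms[name](img)
    return results


if __name__ == "__main__":
    rois = {
        "barcode": [[0, 255, 0, 255]] * 3,
        "defects": [[200, 200], [10, 200]],
    }
    algorithms = {"barcode": count_bar_edges, "defects": count_dark_pixels}
    print(process_in_sequence(rois, algorithms))  # {'barcode': 3, 'defects': 1}
```

Because the loop is serial, total processing time per frame is the sum of every region's algorithm time, which is one reason host processing becomes the bottleneck as more virtual cameras are added.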

Although the demonstration at Stuttgart showed four separate images processed at 3 to 4 frames/s/ROI window, the company has used the Loglux camera to develop a virtual two-camera system that uses two 100 × 100 windows of the 1280 × 1024 camera running at 250 frames/s/ROI window. According to Hoffmeister, several companies have expressed interest in the technology.
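A quick pixel-rate comparison puts the two configurations side by side. The Python sketch that follows assumes each Stuttgart quadrant is 640 × 512 pixels (half of each frame dimension) and counts active pixels only, ignoring blanking, Camera Link overhead, and host processing time; the `pixel_rate` helper is hypothetical.

```python
def pixel_rate(width: int, height: int, windows: int, fps_per_window: int) -> int:
    """Pixels per second delivered by `windows` ROIs of width x height at fps_per_window each."""
    return width * height * windows * fps_per_window


if __name__ == "__main__":
    # Stuttgart demo: four quadrants (assumed 640 x 512 each) at 3 to 4 frames/s per window
    print(pixel_rate(640, 512, 4, 3))   # 3932160
    print(pixel_rate(640, 512, 4, 4))   # 5242880
    # Two-camera system: two 100 x 100 windows at 250 frames/s per window
    print(pixel_rate(100, 100, 2, 250))  # 5000000
```

Under these assumptions, both setups move roughly 4 to 5 Mpixel/s in total, so the small windows trade area for frame rate rather than increasing the overall data volume.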