Algorithm assesses image and video quality

Andrew Wilson, Editor, [email protected]

What determines the quality of a digital image? The ultimate test is subjective judgment by the human eye. But how can this be determined by an algorithm? And how can it be quantified? These were just a few of the questions that preceded a keynote presentation entitled “New Measures for Image and Video Quality” by Professor Alan Bovik, director of the Laboratory for Image and Video Engineering at the University of Texas at Austin (Austin, TX, USA; live.ece.utexas.edu), at NIWeek (August 2006).

“When a distorted image is compared to a readily available ideal reference image,” Bovik says, “a full-reference quality assessment can be done. However, if no such reference image is available, a blind image-quality assessment must be performed, which is much more difficult. Should some reference information (such as known edges) be available, then a reduced-reference assessment can be made. However, such reduced-reference tests are by their nature application-specific.”

Much progress has recently been made in full-reference image-quality assessment. These assessment algorithms can provide beneficial results in benchmarking image-processing and communications algorithms.

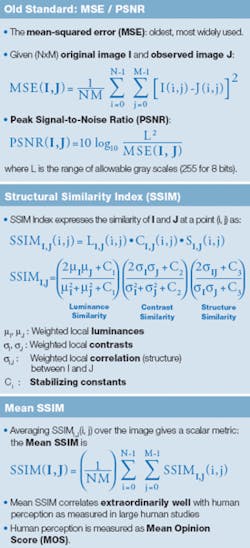

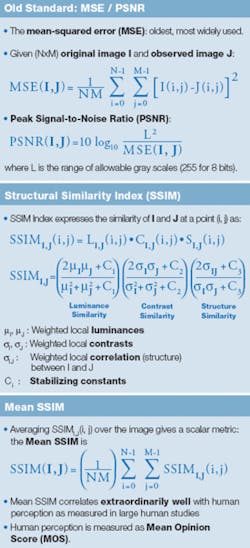

“In the past,” says Bovik, “the mean squared error (MSE), or the log of its reciprocal, the peak signal-to-noise ratio (PSNR) between the original image and an observed image, has traditionally been used to compare images” (see box). “Although the MSE is computationally and analytically simple and can easily be optimized algorithmically, it correlates very poorly with human perception” (see Fig. 1). In the figure below, an original image of Albert Einstein has been distorted in a number of different ways. Although the processed images have nearly identical MSEs, they possess very different visual qualities.
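The relationship between MSE and PSNR, and the reason equal MSEs can hide very different distortions, can be illustrated with a minimal sketch. It assumes 8-bit grayscale images stored as lists of rows; the function names and the tiny test images are made up for illustration and are not taken from Bovik's work.

```python
import math

def mse(reference, test):
    """Mean squared error between two equally sized grayscale images."""
    ref_pixels = [p for row in reference for p in row]
    test_pixels = [p for row in test for p in row]
    if len(ref_pixels) != len(test_pixels):
        raise ValueError("images must contain the same number of pixels")
    return sum((r - t) ** 2 for r, t in zip(ref_pixels, test_pixels)) / len(ref_pixels)

def psnr(reference, test, peak=255.0):
    """Peak signal-to-noise ratio in dB; peak=255 assumes 8-bit pixels."""
    err = mse(reference, test)
    if err == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(peak ** 2 / err)

# Hypothetical 2 x 4 images: a flat gray patch and two distorted versions.
reference = [[100, 100, 100, 100], [100, 100, 100, 100]]
brighter = [[110, 110, 110, 110], [110, 110, 110, 110]]  # uniform +10 shift
noisy = [[110, 90, 110, 90], [90, 110, 90, 110]]          # alternating +/-10

print(mse(reference, brighter), mse(reference, noisy))
print(psnr(reference, brighter), psnr(reference, noisy))
```

Both distorted patches differ from the reference by exactly 10 gray levels at every pixel, so their MSE and PSNR are identical, even though one is a smooth brightness shift and the other is a visible checker pattern.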

“Human opinion is the ultimate gauge of image quality,” says Bovik, “and the way to measure this image quality requires asking many subjects to view images under calibrated test conditions. The resulting mean opinion scores (MOSs) can then be correlated with quality-assessment algorithm performance.”

For a computer-based system to determine the quality of a digital image, the loss of structure in the distorted image must be measured. This can be done by combining local measurements of the similarities of luminance, contrast, and structure between the test and reference images. A weighted average of these local measurements then provides a scalar metric that correlates extraordinarily well with human perception as measured in MOS studies.

To demonstrate this, Bovik has developed what is called the structural similarity index (SSIM). As can be seen, this algorithm takes into account weighted local luminances, contrasts, and correlation between the test and reference images.

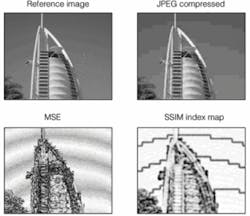
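For readers without access to the figure, the commonly published form of the index is reproduced here as a reference. This is an assumption about what the figure shows: the symbols below follow the usual convention in the SSIM literature, where $\mu$ is a local mean, $\sigma^2$ a local variance, $\sigma_{xy}$ the local covariance between reference patch $x$ and test patch $y$, and $C_1$, $C_2$, $C_3$ are small stabilizing constants.

```latex
\begin{aligned}
l(x,y) &= \frac{2\mu_x\mu_y + C_1}{\mu_x^2 + \mu_y^2 + C_1}
  && \text{(luminance)} \\
c(x,y) &= \frac{2\sigma_x\sigma_y + C_2}{\sigma_x^2 + \sigma_y^2 + C_2}
  && \text{(contrast)} \\
s(x,y) &= \frac{\sigma_{xy} + C_3}{\sigma_x\sigma_y + C_3}
  && \text{(structure)} \\
\mathrm{SSIM}(x,y) &= l(x,y)\,c(x,y)\,s(x,y)
  = \frac{(2\mu_x\mu_y + C_1)(2\sigma_{xy} + C_2)}
         {(\mu_x^2 + \mu_y^2 + C_1)(\sigma_x^2 + \sigma_y^2 + C_2)}
  && \text{when } C_3 = C_2/2
\end{aligned}
```

With $C_3 = C_2/2$, the contrast and structure terms share the factor $2\sigma_x\sigma_y + C_2$, which cancels and leaves the single-fraction form on the last line.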

To visualize whether the resulting image quality is good or poor, SSIM can be displayed as an SSIM Index map (see Fig. 2). For a compressed JPEG image, for example, the SSIM map easily highlights the blocky artifacts introduced by the JPEG DCT algorithm. To illustrate the effectiveness of the SSIM algorithm, the processed images of Einstein were compared using both the MSE and SSIM. As can be seen, the SSIM algorithm correlates well with human perception and, says Bovik, appears to be an ideal fidelity metric, as well as a quality metric.
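How an SSIM map and a mean SSIM value might be computed can be sketched as follows. This is a simplified illustration, not the LabVIEW implementation mentioned in the article: it assumes the widely published single-fraction form of SSIM, uses plain (unweighted) square windows rather than a weighted window, and takes the constants K1 = 0.01 and K2 = 0.03 that are commonly quoted for 8-bit images. The function names and the 8 x 8 test image are hypothetical.

```python
def ssim_window(x, y, peak=255.0, k1=0.01, k2=0.03):
    """SSIM of one local window; x and y are equally long pixel lists."""
    n = len(x)
    c1 = (k1 * peak) ** 2  # stabilizes the luminance term
    c2 = (k2 * peak) ** 2  # stabilizes the contrast/structure term
    mu_x = sum(x) / n
    mu_y = sum(y) / n
    var_x = sum((a - mu_x) ** 2 for a in x) / n
    var_y = sum((b - mu_y) ** 2 for b in y) / n
    cov = sum((a - mu_x) * (b - mu_y) for a, b in zip(x, y)) / n
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x * mu_x + mu_y * mu_y + c1) * (var_x + var_y + c2)
    return numerator / denominator

def ssim_map(reference, test, size=4):
    """Slide a size x size window over both images, one SSIM value per position."""
    rows, cols = len(reference), len(reference[0])
    result = []
    for r in range(rows - size + 1):
        out_row = []
        for c in range(cols - size + 1):
            ref_win = [reference[r + i][c + j] for i in range(size) for j in range(size)]
            test_win = [test[r + i][c + j] for i in range(size) for j in range(size)]
            out_row.append(ssim_window(ref_win, test_win))
        result.append(out_row)
    return result

def mean_ssim(reference, test, size=4):
    """Average of the SSIM map: a single scalar quality score."""
    values = [v for row in ssim_map(reference, test, size) for v in row]
    return sum(values) / len(values)

# Hypothetical 8 x 8 diagonal ramp; the distorted copy has its top-left
# 4 x 4 block flattened to the block mean, loosely imitating a blocky artifact.
reference = [[16 * (r + c) for c in range(8)] for r in range(8)]
distorted = [row[:] for row in reference]
block_mean = sum(reference[r][c] for r in range(4) for c in range(4)) / 16
for r in range(4):
    for c in range(4):
        distorted[r][c] = block_mean

quality_map = ssim_map(reference, distorted)
print(quality_map[0][0], quality_map[4][4], mean_ssim(reference, distorted))
```

In this toy case the map value is low where the window covers the flattened block and equals 1 where the window covers only untouched pixels, which is the kind of localization the SSIM Index map provides for blocky compression artifacts.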

Of course, other methods for image-quality assessment do exist. These include the JNDMetrix Technology from Sarnoff (www.sarnoff.com/products_services/video_vision/jndmetrix/index.asp) and NASA’s DCTune algorithm (vision.arc.nasa.gov/dctune/). To provide a benchmark for these and other quality-assessment algorithms, including PSNR, picture quality scale, and noise quality measure, Bovik and his colleagues computed many statistical yardsticks, such as the Spearman rank-order correlation coefficient (SROCC) relative to MOS across a number of compressed, blurred, and noisy images.
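The SROCC used for this kind of benchmark can be sketched in a few lines: rank the metric scores and the MOS values separately, then take the Pearson correlation of the two rank lists. The scores below are invented purely for illustration and are not data from Bovik's study; the helper names are likewise hypothetical, and the sketch assumes neither list is constant.

```python
import math

def average_ranks(values):
    """Rank values from 1 to n; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2.0 + 1.0  # average of positions i..j, 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def pearson(a, b):
    """Pearson correlation of two equally long sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def srocc(metric_scores, mos):
    """Spearman rank-order correlation between metric scores and MOS."""
    return pearson(average_ranks(metric_scores), average_ranks(mos))

# Made-up scores for five distorted images (not measured data).
mos = [4.5, 3.8, 2.1, 3.0, 1.2]            # mean opinion scores
metric_a = [0.95, 0.90, 0.60, 0.75, 0.40]  # orders the images exactly as MOS does
metric_b = [30.1, 27.0, 28.5, 29.0, 25.0]  # misorders some of the images

print(srocc(metric_a, mos), srocc(metric_b, mos))
```

Because SROCC depends only on rank order, a metric is rewarded for ordering images the way human viewers do, regardless of the scale or units of its raw scores.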

“With the SSIM algorithm, a gain of 2%-3% in SROCC was achieved over the nearest competitor, the Sarnoff Emmy-award-winning JNDMetrix, which is no longer being sold as a software package. This,” says Bovik, “is significant perceptually and almost as large a gain as had been achieved in the last 30 years of research.”

Two major applications exist for this technology. The first, and probably most important, will be for benchmarking image-processing algorithms such as deblurring and reconstruction. “At present,” says Bovik, “there is almost no agreement on which image filter may be best to perform a specific operation. Using SSIM optimization methods, such deblurring algorithms could use SSIM as a metric to provide benchmarks of algorithm performance.”

Furthermore, SSIM-based inspection methods could be used to compare known model images with acquired images. By generating an SSIM map of the output, these two images could be readily compared. To speed the development of such systems, Bovik and his colleagues have developed LabVIEW-based Virtual Instruments that perform SSIM analysis, present SSIM maps, and generate mean SSIM values. These public-domain algorithms can be obtained by contacting Bovik at [email protected].