Compiler maps imaging software to reconfigurable processors

Researchers are developing compiler technology that can automatically map signal- and image-processing algorithms to configurable computing environments.

By Andrew Wilson, Editor at Large

The satellite flagship of NASA's Earth Observing System, Terra (formerly called EOS AM-1), was launched last December to hyperspectrally map the Earth. Previously, such observation satellites used on-board preprocessing algorithms that were often prototyped in high-level languages.

Now, as a means to increase the speed of signal- and image-processing algorithms, NASA is studying reconfigurable computing systems as low-cost ways to increase performance over general-purpose CPUs and DSPs. Moreover, in situ reprogrammability means that such systems can be reconfigured on-the-fly for easy modifications, changes, and upgrades.

Nevertheless, embedding image-processing algorithms into field-programmable gate arrays (FPGAs) still requires expensive hand-coding. To overcome this difficulty, researchers at Northwestern University (Evanston, IL) are developing compiler technology that can automatically map signal- and image-processing algorithms to configurable computing environments.

In this effort, professor Prith Banerjee, chairman of electrical and computer engineering and director of the Center for Parallel and Distributed Computing at Northwestern University, and his colleagues (professors Alok Choudhary, Scott Hauck, and Nagaraj Shenoy) have developed a compiler that takes code from the MATLAB development environment (The MathWorks; Natick, MA) and automatically maps it onto a configurable computer. Known as the MATLAB compiler for heterogeneous computing systems (MATCH), the software maps MATLAB descriptions of various embedded system applications onto a system of FPGAs, embedded processors, and digital-signal processors (DSPs) built from commercial off-the-shelf components.

FIGURE 1. To build a heterogeneous computer, researchers at Northwestern University used a combination of general-purpose CPU, DSP, and FPGA boards. As the main CPU controller, a Force 5V MicroSPARC-II processor running Solaris is interfaced to a WildChild FPGA board from Annapolis Micro Systems and a DSP board from Transtech DSP. For general-purpose processing, the system also includes two MVME-2604 boards from Motorola running the Microware OS-9 operating system.

"Purely FPGA-based systems are usually unsuitable for complete algorithm implementation," says Banerjee. "In most computations a large amount of code is rarely executed, and attempting to map all of these functions into reprogrammable logic is inefficient. Reconfigurable logic is also slower than a floating-point CPU and complex integer arithmetic units. The solution is to combine the advantages of microprocessor-based embedded systems, DSPs, and FPGAs into a single system," he adds. While the microprocessors implement most of the signal- or image-processing algorithms, reconfigurable logic accelerates only the most critical computation kernels.

Heterogeneous computing

To configure a heterogeneous computer system designed to accommodate the MATCH compiler, Banerjee and his colleagues turned to off-the-shelf boards including several general-purpose CPU boards coupled to both FPGA- and DSP-based accelerators. Serving as a general-purpose CPU controller board, the Force 5V from Force Computers (San Jose, CA) features a Sun MicroSPARC-II processor running the Solaris operating system from Sun Microsystems (Palo Alto, CA). This board is used as the main CPU controller in the system and communicates with the other system boards via the VME bus or an Ethernet interface (see Fig. 1).

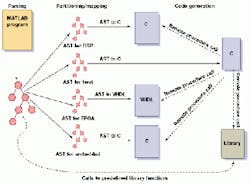

FIGURE 2. To produce parallel code from a MATLAB program, the original MATLAB program is parsed by the compiler into an abstract syntax tree (AST). After the AST is constructed by the compiler, it is partitioned into one or more sub-trees. Nodes correspond to predefined library functions that map onto different parts of the VME hardware.

To accelerate the critical parts of the signal- and image-processing routines, the Force 5V host CPU is interfaced to a WildChild board from Annapolis Micro Systems (Annapolis, MD). In addition to eight on-board 4010 FPGAs from Xilinx (San Jose, CA), each with 400 configurable logic blocks (CLBs), the board features a Xilinx 4028 FPGA with 1024 CLBs and 1-Mbyte local memory. In operation, all the FPGAs communicate using either an on-board 36-bit-wide systolic bus or a crossbar switch.

To perform DSP-intensive tasks, the VME-based system uses a TDM-428 board from Transtech DSP (Ithaca, NY). On this board, four 60-MHz TMS320C40 processors from Texas Instruments (Dallas, TX) are each coupled to 8-Mbyte RAM. The processors are connected by an on-board 8-bit-wide, 20-Mbyte/s communication bus, and one of them is used to communicate with the Force 5V host processor via the VME bus interface.

General-purpose CPUs in the system also include two MVME-2604 boards from the Motorola Computer Group (Tempe, AZ). Each of these boards hosts a 200-MHz PowerPC-604 processor with 64 Mbytes of local memory and runs the OS-9 operating system from Microware Systems (Des Moines, IA). These processors communicate via a 100BaseT Ethernet interface.

Compiler technology

Because MATLAB is structured as a function-oriented language, most MATLAB programs are written using predefined functions implemented as sequential codes running on conventional processors. However, to increase the performance of these functions, they must be mapped to different types of processors including DSPs and reconfigurable processors. Conventional programs using loops and vectors in MATLAB must also be translated by the MATCH compiler into sequential or parallel codes.

"Being a dynamically typed language," says Banerjee, "MATLAB poses several problems. The compiler must discover whether variables are floating point and the number and extent of dimensions if the variable is an array." To automatically perform such inferencing, the MATCH compiler maps various parts of the MATLAB programs onto the appropriate parts of the target architecture. "To fine-tune this mapping," says Banerjee, "the compiler provides methods so that an experienced programmer can guide the compilation process."
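The kind of inference Banerjee describes can be illustrated with a minimal Python sketch. It is not the MATCH implementation: the `VarInfo` record, the `infer` function, and the tuple-based expression format are all hypothetical names chosen for illustration, and only `+` and `*` on two-dimensional values are handled.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class VarInfo:
    """Inferred static facts about a value (hypothetical record)."""
    dtype: str    # "int" or "float"
    shape: tuple  # (rows, cols); a scalar is (1, 1)

SCALAR = (1, 1)

def promote(a, b):
    # Mixing an integer with a floating-point operand yields floating point.
    return "float" if "float" in (a, b) else "int"

def infer(expr, env):
    """Infer dtype and shape of a tiny expression tree.

    expr is a variable name, a numeric literal, or a tuple
    (op, left, right) with op being "+" or "*".
    """
    if isinstance(expr, str):
        return env[expr]
    if isinstance(expr, int):
        return VarInfo("int", SCALAR)
    if isinstance(expr, float):
        return VarInfo("float", SCALAR)
    op, left, right = expr
    l, r = infer(left, env), infer(right, env)
    dtype = promote(l.dtype, r.dtype)
    # A scalar operand takes on the shape of the other operand.
    if l.shape == SCALAR:
        return VarInfo(dtype, r.shape)
    if r.shape == SCALAR:
        return VarInfo(dtype, l.shape)
    if op == "+":
        if l.shape != r.shape:
            raise ValueError("shape mismatch in addition")
        return VarInfo(dtype, l.shape)
    if op == "*":
        if l.shape[1] != r.shape[0]:
            raise ValueError("inner dimensions disagree")
        return VarInfo(dtype, (l.shape[0], r.shape[1]))
    raise ValueError("unsupported operator: " + op)
```

Given an environment in which `A` is a 64 x 32 integer array and `B` is a 32 x 8 floating-point array, `infer(("*", "A", "B"), env)` reports a 64 x 8 floating-point result, which is the information a back end would need to allocate storage on a DSP or size a datapath on an FPGA.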

To produce parallel code from a MATLAB program, the original MATLAB program is parsed by the compiler into an abstract syntax tree (AST). After the AST is constructed, it is modified or annotated with more information and partitioned into one or more sub-trees. Nodes correspond to predefined library functions that map onto different parts of the target hardware (see Fig. 2).
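The annotate-then-partition step can be pictured with a short Python sketch. Everything here is an assumption made for illustration: the `Node` class, the `LIBRARY_TARGETS` table, and the rule that a new sub-tree begins wherever a node's target differs from its parent's are not taken from the MATCH compiler itself.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    """One AST node (hypothetical structure)."""
    op: str
    children: list = field(default_factory=list)
    target: str = ""

# Hypothetical table saying which board hosts each library function.
LIBRARY_TARGETS = {"fft": "DSP", "filter": "FPGA", "matmul": "FPGA"}

def annotate(node, default="HOST"):
    """Tag every node with a target; unknown operations stay on the host."""
    node.target = LIBRARY_TARGETS.get(node.op, default)
    for child in node.children:
        annotate(child, default)
    return node

def partition(root):
    """Split an annotated tree into sub-trees that share a single target.

    Returns a list of (target, [ops]) pairs in pre-order.
    """
    subtrees = []

    def walk(node, current):
        # Start a new sub-tree whenever the target changes.
        if current is None or node.target != current[0]:
            current = (node.target, [])
            subtrees.append(current)
        current[1].append(node.op)
        for child in node.children:
            walk(child, current)

    walk(root, None)
    return subtrees
```

In this sketch, a `filter` node whose operand is a `matmul` node ends up in a single FPGA sub-tree, while an enclosing `fft` node forms its own DSP sub-tree.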

After generating ASTs for the individual parts of the original MATLAB program, the ASTs are translated for appropriate target processors. Depending on the mapping, the ASTs corresponding to FPGAs are translated to register transfer level (RTL) VHDL, and those corresponding to host/DSP/embedded processors are translated into equivalent C-language programs. These generated programs are compiled using the respective target compilers to generate the executable/configuration bit streams.

Functions for the WildChild FPGA board are developed at RTL VHDL using the Synplify logic synthesis tool from Synplicity (Sunnyvale, CA). These functions include matrix addition, matrix multiplication, one-dimensional fast Fourier transform (FFT), and finite impulse response and infinite impulse response filters. In each case, C-program interfaces to the MATCH compiler allow these functions to be called from the Force CPU board. Similarly, DSP library functions generate object code for the TMS320C40 processors on the Transtech DSP board using the PACE C compiler from Texas Instruments. These DSP functions include real and complex matrix addition, real and complex matrix multiplication, and one- and two-dimensional FFTs.

Because MATLAB allows a procedural style of programming using constructs such as loops and control flow, parts of MATLAB programs may not map to predefined library functions. All such fragments of the program need to be translated to the target hardware to which they are mapped. "In most cases," says Banerjee, "parallel versions that can exploit data parallelism must be developed. To do so, the compiler must decide on which processors to perform the compute operations." In the MATCH compiler, an owner-computes rule only permits operations on a particular data element to be executed by processors that own the data element; ownership is determined by the alignments and distributions that the data are subjected to.
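The owner-computes rule can be made concrete with a small Python simulation. It assumes a simple block distribution of a one-dimensional array; the function names are hypothetical, and the article does not say which distributions MATCH actually supports.

```python
def block_owner(index, n, num_procs):
    """Processor that owns element `index` of an n-element array
    under a block distribution (assumed here for illustration)."""
    block = -(-n // num_procs)  # ceiling division
    return index // block

def owner_computes_scale(data, factor, num_procs):
    """Simulate a[i] = factor * a[i] under the owner-computes rule.

    Each simulated processor scans the index space but updates only
    the elements it owns. Returns the result and the per-processor work.
    """
    n = len(data)
    result = list(data)
    work = {p: [] for p in range(num_procs)}
    for p in range(num_procs):
        for i in range(n):
            if block_owner(i, n, num_procs) == p:
                result[i] = factor * data[i]
                work[p].append(i)
    return result, work
```

With ten elements spread over four processors, the first three processors each own three elements and the last owns one, so no element is ever written by a processor that does not hold it.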

FIGURE 3. MATLAB code was mapped onto five FPGAs on the WildChild VME FPGA board to increase the throughput of hyperspectral mapping more than five times over conventional techniques (left). Sample outputs of the raw image (middle) and the processed and classified image (right) from the hyperspectral image classification application are also shown.

When possible, the MATCH compiler automatically maps the user program onto the target machine. Automatic mapping requires optimizing the types and numbers of processors under both performance and resource constraints. Performance characterization of the predefined library functions and user-defined procedures guides this automatic mapping.
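One way to picture mapping under performance and resource constraints is an exhaustive search over a table of profiled costs. The Python sketch below is an illustration only: the article does not describe the optimization method MATCH uses, the function name is hypothetical, any timing and CLB figures supplied to it are made up by the caller, and execution times are assumed to add up sequentially.

```python
from itertools import product

def best_mapping(tasks, clb_budget):
    """Pick one target per task to minimize total time within a CLB budget.

    tasks: {task_name: {target: (time_ms, clbs_used)}}
    Returns (total_time_ms, {task_name: target}), or None if nothing fits.
    """
    names = sorted(tasks)
    best = None
    # Try every combination of targets; fine for a handful of tasks.
    for choice in product(*(sorted(tasks[n]) for n in names)):
        time = sum(tasks[n][t][0] for n, t in zip(names, choice))
        clbs = sum(tasks[n][t][1] for n, t in zip(names, choice))
        if clbs <= clb_budget and (best is None or time < best[0]):
            best = (time, dict(zip(names, choice)))
    return best
```

The search space grows exponentially with the number of tasks, so a production compiler would need a smarter strategy; the sketch only shows how profiled execution times and a fixed logic budget together determine where each function runs.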

Image classification

Image-processing tasks such as hyperspectral image classification naturally exhibit a large degree of parallelism. To speed up such computational tasks, Banerjee and his colleagues compiled the NASA probabilistic neural-network algorithm using the MATCH compiler and the reconfigurable architecture. To distinguish land, swamp, or ocean terrain, the neural-network algorithm sorts captured image data into different classes.
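The parallelism is easy to see in a textbook probabilistic neural network, sketched below in Python. This is the generic Gaussian-kernel form of the classifier, not necessarily the exact NASA algorithm; the function names, the training data format, and the `sigma` smoothing parameter are assumptions made for illustration.

```python
import math

def pnn_classify(pixel, training, sigma=1.0):
    """Return the class whose training samples give the largest
    average Gaussian kernel response to `pixel`.

    pixel: sequence of band values
    training: {class_name: [sample_vector, ...]}
    """
    best_class, best_score = None, -1.0
    for name, samples in training.items():
        score = 0.0
        for w in samples:
            dist2 = sum((p - q) ** 2 for p, q in zip(pixel, w))
            score += math.exp(-dist2 / (2.0 * sigma ** 2))
        score /= len(samples)
        if score > best_score:
            best_class, best_score = name, score
    return best_class

def classify_image(pixels, training, sigma=1.0):
    # Every pixel is classified independently of the others,
    # which is why the work spreads naturally over several processors.
    return [pnn_classify(p, training, sigma) for p in pixels]
```

Because no pixel depends on the result for any other pixel, the outer loop in `classify_image` can be divided among processing elements without communication between them.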

Written in MATLAB and tested on a Hewlett-Packard (Palo Alto, CA) C-180 workstation with a 180-MHz PA-8000 CPU and 128 Mbytes of memory, the algorithm can process 1.6 pixels/s when coded in an iterative manner. While vectorization improves this speed to 35.4 pixels/s, coding in Java and C raises it further to 149 and 364 pixels/s, respectively. To dramatically increase processing speed, Banerjee and his colleagues mapped the MATLAB code onto a set of five FPGAs on the WildChild board. The result was a processing speed of 1942 pixels/s (see Fig. 3).
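The relative gains follow directly from the reported rates. The short Python sketch below uses only the figures quoted above; the dictionary labels and the `speedups` helper are names introduced here for illustration.

```python
# Throughput figures reported in the article, in pixels per second.
RATES = {
    "MATLAB iterative": 1.6,
    "MATLAB vectorized": 35.4,
    "Java": 149.0,
    "C": 364.0,
    "MATCH on five FPGAs": 1942.0,
}

def speedups(rates, baseline):
    """Express every rate as a multiple of the chosen baseline."""
    base = rates[baseline]
    return {name: rate / base for name, rate in rates.items()}
```

Taking the C version as the baseline, the FPGA mapping works out to roughly 5.3 times faster, consistent with the "more than five times" figure in the caption to Fig. 3; against the iterative MATLAB code the ratio is about 1,200.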

"Although there have been several commercial efforts to generate programmable logic from high-level languages," says Banerjee, "the MATCH compiler generates code for DSPs, embedded processors, and FPGAs using both a library-based approach and the automatic generation of C-code for DSPs and RTL VHDL code for FPGAs." With this approach, high-level languages such as MATLAB can take advantage of the increased computational power of DSPs, embedded processors, and FPGAs. If such approaches are embraced by embedded, DSP, and FPGA board vendors, the reconfigurable image-processing demands on systems programmers will ease.

Company Information

Annapolis Micro Systems

Annapolis, MD 21401

Web: www.annapmicro.com/

Force Computers

San Jose, CA 95138

Web: www.forcecomputers.com

Hewlett-Packard Co.

Palo Alto, CA 94303

Web: www.hp.com

Microware Systems

Des Moines, IA 50325

Web: www.microware.com

Motorola Computer Group

Tempe, AZ 85282

Web: www.mcg.mot.com/

NASA Goddard Space Flight Center

Greenbelt, MD 20771

Web: pao.gsfc.nasa.gov

Northwestern University

Evanston, IL 60208

Web: www.ece.nwu.edu/

Sun Microsystems

Palo Alto, CA 94303

Web: www.sun.com

Synplicity

Sunnyvale, CA 94086

Web: www.synplicity.com

Texas Instruments

Dallas, TX 75266

Web: www.ti.com

The MathWorks

Natick, MA 01760

Web: www.mathworks.com/

Xilinx

San Jose, CA 95124

Web: www.xilinx.com