Imaging technology enhances the study of art

Combining the rigor and objectivity of computer vision with historical and subjective knowledge will change our understanding of art.

By David G. Stork

We may be entering a new era in the study of fine art, one in which computer-vision algorithms will build upon the science and technology of imaging to help answer old questions, open up new vistas, and expand our understanding of art. In the past several decades, advances in imaging (sophisticated cameras and imaging systems with ever-increasing spatial and spectral resolution) have allowed art scholars to see into artwork as never before.

For example, infrared reflectography and x-radiography reveal underdrawings, the earlier versions of some paintings that are otherwise invisible to the human eye. Close study of the changes an artist makes during execution of a painting, the pentimenti (from the Italian for “repentance”), shows how the artist struggled to solve problems as he developed his composition.

Infrared reflectograms of Jan van Eyck’s Portrait of Giovanni Arnolfini and His Wife (1434), for instance, show how the artist adjusted the relative heights of the two figures and changed their eye contact, the positions of their feet, and more throughout the development of this complex psychological study of the relationship between a wealthy Bruges patron and his wife. Each version explored a different formal composition and a different psychological relationship between the two figures and the symbol-laden room around them.

Perhaps the most important success of multispectral imaging in art has been the analysis of the Archimedes Palimpsest. This manuscript contained copies of Archimedes’ The Method and Stomachion and was the only source for the Greek text of his On Floating Bodies (which dealt with specific gravity and is known to schoolchildren around the world through the famous bathtub Eureka! story). In the 13th century, scribes scraped off this text, wrote over its parchment, and rebound it as a prayer book, all but obliterating Archimedes’ text. Painstaking multispectral and x-ray fluorescence imaging, together with image processing, has recovered most of the original marks, hidden for centuries, and enabled classics scholars to read the original text [1].

Another triumph of imaging science applied to art has been the reverse aging of artworks to restore their original color schemes. One of the greatest masterpieces of French neo-impressionism is Georges Seurat’s Un dimanche après-midi à l’Île de la Grande Jatte – 1884 (1884-1886) in the Art Institute of Chicago. This large canvas was executed in the technique of pointillism (many tiny dots of color) and was deeply influenced by Seurat’s study of color theory.

If we are to see this work as the artist intended and then fully understand his achievement, it is essential that we see the colors as the artist applied them. Even within a few years of the end of Seurat’s short life, however, critics noted that some of his pigments were fugitive, that is, subject to fading. Recently, image scientist Roy S. Berns mathematically modeled the spectra and the fading of different pigments in Grande Jatte [2]. He then ran his computer model of such fading “backward in time” to “un-age” the current painting and thereby produce a digital image that likely matches the painting as Seurat painted it. This new rendering of Seurat’s original painting is brighter, and its color contrasts are more pronounced, than the painting as we see it today; it better reveals the deep influence of Seurat’s study of the color science of his day.

The new era

In the past, image processing of art has relied heavily upon the human eye and the judgment of art scholars and connoisseurs. In the future, however, some of the analysis will be done by computer vision and pattern-recognition algorithms, often relying on methods developed for other applications, such as robotics.

For example, Antonio Criminisi has pioneered the use of “uncalibrated” methods from computer vision for reconstructing three-dimensional (3-D) spaces from the images depicted in realist paintings. Although the construction of 3-D space from a two-dimensional (2-D) projection is formally “ill-posed” (that is, there are an infinite number of 3-D spaces consistent with a given 2-D projection), Criminisi used natural assumptions to constrain his reconstructions. For instance, he could often assume that certain angles were right or certain planes were parallel in the space depicted in a painting. Other simple geometrical derivations involved computing the relative heights of figures standing on the same floor, regardless of their distance from the painter or viewer.
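The relative-height computation mentioned above can be illustrated with a small sketch. It is not Criminisi’s implementation; it assumes a simplified setup in which the picture plane is vertical, both figures stand upright on the same floor, and the horizon line of the painting has already been located. Under those assumptions, a figure’s height in units of the viewer’s eye height is (foot - head) / (foot - horizon), measured in image coordinates. The function name and the pixel values are hypothetical.

```python
def relative_height(y_foot_a, y_head_a, y_foot_b, y_head_b, y_horizon):
    """Return the height of figure A divided by the height of figure B.

    All arguments are image rows in pixels, increasing downward.
    Assumptions (not from the article): vertical picture plane, upright
    figures on one common floor, and a known horizon row.
    """
    if y_foot_a == y_horizon or y_foot_b == y_horizon:
        raise ValueError("feet on the horizon: depth is undefined")
    # Height of each figure in units of the viewer's eye height.
    # The distance from the viewer cancels out of each fraction.
    height_a = (y_foot_a - y_head_a) / (y_foot_a - y_horizon)
    height_b = (y_foot_b - y_head_b) / (y_foot_b - y_horizon)
    return height_a / height_b


# Hypothetical measurements: a nearby figure A and a more distant figure B.
ratio = relative_height(y_foot_a=260, y_head_a=80,
                        y_foot_b=180, y_head_b=105,
                        y_horizon=100)
print(f"{ratio:.2f}")  # prints 1.20
```

Although figure B appears much smaller in the image, the ratio shows that A is only 20 percent taller in the depicted space, because the comparison against the horizon removes the effect of distance.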

The most impressive and important example of Criminisi’s work is the construction of a virtual space from Masaccio’s Trinità (1427-1428) in Florence, Italy, a fresco widely believed to be the earliest surviving work constructed by the then newly developed principles of rigorous geometrical perspective [3]. Criminisi’s algorithms created a virtual computer model of the fictive space of the fresco, a space that a user can fly through and art historians can examine in detail to understand which passages Masaccio rendered in correct perspective and which he did not.

Dan Rockmore and his colleagues have used multiscale representations of brush strokes for authentication of works such as drawings by Pieter Bruegel the Elder and the large painting Madonna with Child attributed to Pietro Vannucci, known as Perugino [4]. The central idea is that the computer algorithm can analyze brush strokes much as a graphologist analyzes handwritten signatures, and such strokes may be distinct enough to distinguish one artist from another, including forgers or students. Some art historians are quick to point out the limitations of this approach as it stands, but all interested parties recommend continued research, experiment, and testing.

Investigating problems

Kimo Johnson and I have also applied techniques from computer vision to problems in art. One question of interest to art scholars centers on the working methods of painters, specifically where they place the illumination within a tableau and how that position affects the final painting.

Such a question was brought to the fore by a revisionist theory that claimed that the source of illumination in one painting by the Lorrainese Baroque master Georges de la Tour, Christ in the Carpenter’s Studio (1645), was not at its apparent position, the candle depicted in the scene. Cast-shadow analysis is a straightforward approach to this problem and works quite well if there are several clear, sharp shadows cast by objects onto other surfaces. In this painting, cast-shadow analysis showed that the illuminant was in fact in the position of the candle [5].
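The geometry behind cast-shadow analysis can be sketched in a few lines. In the image, the line from a point on a cast shadow through the point on the object that casts it passes through the image of the light source, so several such lines should meet near the illuminant. The sketch below finds the point closest to all the lines in the least-squares sense. It is an illustration only, not the procedure used in the cited study; the function name and the coordinates are hypothetical.

```python
import math


def estimate_light_position(pairs):
    """Least-squares intersection of shadow-to-occluder lines in the image.

    pairs: list of ((sx, sy), (ox, oy)), where (sx, sy) is a point on a
    cast shadow and (ox, oy) is the point on the object that casts it.
    Returns the (x, y) point minimizing the summed squared distance to
    all the lines.
    """
    a11 = a12 = a22 = b1 = b2 = 0.0
    for (sx, sy), (ox, oy) in pairs:
        dx, dy = ox - sx, oy - sy
        length = math.hypot(dx, dy)
        if length == 0.0:
            raise ValueError("shadow and occluder points coincide")
        # Unit normal to the line; the line is nx*x + ny*y = c.
        nx, ny = -dy / length, dx / length
        c = nx * sx + ny * sy
        # Accumulate the 2x2 normal equations.
        a11 += nx * nx
        a12 += nx * ny
        a22 += ny * ny
        b1 += nx * c
        b2 += ny * c
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        raise ValueError("lines are parallel; need shadows in different directions")
    x = (a22 * b1 - a12 * b2) / det
    y = (a11 * b2 - a12 * b1) / det
    return x, y


# Hypothetical image measurements: three shadows cast by a light at (5, 2).
measurements = [((1, 6), (3, 4)), ((9, 8), (7, 5)), ((5, 10), (5, 6))]
print(estimate_light_position(measurements))
```

With blurred or short shadows the lines become poorly constrained, which is one way to see why the method needs several clear, sharp shadows.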

Johnson and I tried another method for inferring the location of the source of the illumination: the occluding-contour algorithm. This algorithm takes as input the lightness distribution along a boundary or occluding contour of an object and estimates the direction of illumination most consistent with this distribution.

There are several constraints that must be met for the algorithm to give meaningful results. For instance, the material must be diffusely reflecting, or Lambertian (like cloth or skin, not like glass or metal); it must also be of uniform reflectance or albedo (the method will not work well on a zebra); the source of illumination should be small and somewhat distant from the contour; and so forth. Our occluding-contour analyses of Christ in the Carpenter’s Studio confirmed the conclusion of the earlier cast-shadow analyses: the illuminant was indeed at the position of the candle [6].
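The article does not give the equations of the occluding-contour algorithm, so the following is only a sketch of one common formulation. Along an occluding contour the surface normal lies in the image plane, so for a Lambertian surface of uniform albedo lit by a distant source, the lightness at each contour point can be modeled as nx*Lx + ny*Ly + ambient. Fitting Lx, Ly, and the ambient term by least squares gives an estimate of the in-image direction to the light. The constant ambient term, the function names, and the test values are assumptions made for illustration.

```python
import math


def _det3(m):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def estimate_light_direction(normals, lightness):
    """Fit lightness = nx*Lx + ny*Ly + ambient along an occluding contour.

    normals: unit 2-D contour normals (nx, ny), y axis pointing up.
    lightness: measured lightness at the same contour points. Only lit
    points should be supplied, since the model is linear only there.
    Returns (angle_in_degrees, ambient).
    """
    ata = [[0.0] * 3 for _ in range(3)]
    atb = [0.0] * 3
    for (nx, ny), value in zip(normals, lightness):
        row = (nx, ny, 1.0)
        for i in range(3):
            atb[i] += row[i] * value
            for j in range(3):
                ata[i][j] += row[i] * row[j]
    det = _det3(ata)
    if abs(det) < 1e-12:
        raise ValueError("contour normals do not vary enough to fit a direction")
    solution = []
    for k in range(3):  # Cramer's rule on the 3x3 normal equations
        m = [r[:] for r in ata]
        for i in range(3):
            m[i][k] = atb[i]
        solution.append(_det3(m) / det)
    lx, ly, ambient = solution
    return math.degrees(math.atan2(ly, lx)), ambient


# Synthetic contour lit from 30 degrees, with a small ambient term.
angles = [-40, -10, 20, 50, 80, 100]
normals = [(math.cos(math.radians(a)), math.sin(math.radians(a))) for a in angles]
light = (0.8 * math.cos(math.radians(30)), 0.8 * math.sin(math.radians(30)))
values = [light[0] * nx + light[1] * ny + 0.1 for nx, ny in normals]
print(estimate_light_direction(normals, values))
```

The constraints listed above map directly onto this model: a glossy surface or a varying albedo breaks the linear relation between normal and lightness, and a large or nearby source means that no single direction fits the whole contour.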

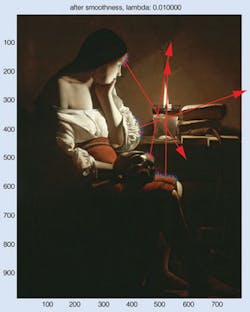

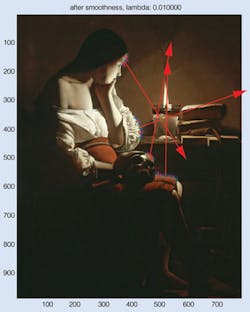

Applying the occluding-contour algorithm to another nocturne, or nighttime painting, by de la Tour, one whose illumination also seems to be due to a single candle, Johnson and I chose four occluding contours that respected the constraints of the algorithm (see figure). The four illuminant directions estimated by the algorithm all agree quite well with the location of the candle. In one sense, this is to be expected: clearly the painter worked from the candle as illuminant and wished to depict it as faithfully as possible. But in another sense, our result is surprising: it not only validates the algorithm itself but serves as a testament to de la Tour’s mastery in rendering accurately and realistically both the shape of these contours and the pattern of lightness along each.

Johnson and I performed a sensitivity analysis of the algorithm and tested the painterly ability of de la Tour using his Christ in the Carpenter’s Studio. We artificially altered the lightness along the contours in principled ways, reran the algorithm, and observed the resulting estimated direction to the illuminant. If the lightness along each contour was set to be constant, the algorithm yielded directions that were chaotic and wildly inconsistent. If the lightness was set to have the proper mean and standard deviation, the directions were somewhat more coherent. If the lightness was set to a cubic-spline approximation of the actual painted lightnesses, then the directions agreed quite well with the location of the candle, but not quite as well as with the lightnesses in the actual painting. In short, our sensitivity analyses showed that de la Tour was so expert, so precise, in his rendering of lightness that he was better than a cubic fit.

Another technique is to use computer graphics to analyze paintings. James Schoenberg and I have built computer-graphics models of the tableau in Jan van Eyck’s Portrait of Giovanni Arnolfini and His Wife to test how closely the artist adhered to the rules of perspective. This, in turn, has shed light on the controversial claim that he secretly built an optical projector and traced images during the execution of his works. Another colleague and I have built a computer-graphics model of the tableau in Georges de la Tour’s Christ in the Carpenter’s Studio to explore hypothetical locations of the illuminant within the scene and so better understand his working methods.

This new era will see the combination of the rigor and objectivity of computer vision with the historical and subjective knowledge of the art scholar and connoisseur. It will be interesting to see how our understanding of art changes as a result.

A symposium on this topic, “Computer image analysis in the study of art,” will be held at the SPIE/IS&T Electronic Imaging conference in San Jose, CA, USA, on January 28, 2008. It will examine the range of computer methods applied to art, from multispectral imaging to multiscale analysis of brush strokes to fractal analysis of Jackson Pollock’s drip paintings to computer-graphics reconstructions of famous paintings.

References

1. R. Netz and W. Noel, The Archimedes Codex: How a Medieval Prayer Book Is Revealing the True Genius of Antiquity’s Greatest Scientist, Da Capo Press (2007).

2. R. S. Berns, “Rejuvenating Seurat’s palette using color and imaging science: A simulation,” in Seurat and the making of La Grande Jatte, R. L. Herbert, ed., The Art Institute of Chicago, 214 (2004).

3. A. Criminisi, M. Kemp, and A. Zisserman, “Bringing pictorial space to life: Computer techniques for the analysis of paintings,” in Digital art history: A subject in transition, A. Bentkowska-Kafel, T. Cashen, and H. Gardner, eds., Intellect Books (2005).

4. S. Lyu, D. Rockmore, and H. Farid, “A digital technique for art authentication,” Proc. National Academy of Sciences 101(49), 17006 (2004).

5. D. G. Stork and M. K. Johnson, “Computer vision, image analysis and master art, Part II,” IEEE Multimedia 13(4), 12 (2006).

6. D. G. Stork and M. K. Johnson, “Estimating the location of illuminants in realist master paintings: Computer image analysis addresses a debate in art history of the Baroque,” Proc. 18th Intl Conf. on Pattern Recognition, I, 255 (2006).

David G. Stork is chief scientist at Ricoh Innovations, Menlo Park, CA, USA; rii.ricoh.com.