Developers look to neural networks to solve complex imaging tasks

By Andrew Wilson, Editor at Large

To implement feature and pattern recognition in many machine-vision, medical, and scientific applications, image-processing techniques such as normalized correlation are used to match specific features in an image with well-known templates. In addition, these imaging applications generally require multiple, complex, and irregular features within images to be analyzed simultaneously. For example, in semiconductor-wafer inspection, imaging defects are often complex and irregular and require more human-like inspection techniques. By using neural networks and associated devices, however, developers can train such systems with hundreds or thousands of images so that the system eventually "learns" to recognize any detected irregularity.

Unlike conventional image-processing systems, neural networks are based on models of human brain cells and comprise a large number of simple elements that operate in parallel. In the human brain, more than a billion of these elements, called neurons, are individually connected to more than 10,000 other neurons. Although each brain cell operates like a simple processor, the massive interaction among them makes human vision, memory, and thinking possible.

Supervised or not

To train a neural network in pattern recognition, either supervised or unsupervised learning methods can be used. In supervised learning mode, it is assumed that the correct output of the neural network is already known. In such cases, a target image may be presented to the neural network in the form of gray-scale or binary inputs, and an output or classification-output pattern is generated. This output pattern is then compared to the known correct output. If the two do not match, the weights within the network are adjusted accordingly. After training, when such images are presented to the network, the network should classify them correctly. Supervised learning algorithms are used in both feed-forward and back-propagation networks to change the weights in the network's hidden layer.
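
The compare-and-adjust loop described above can be sketched with the simplest supervised model, a single-layer perceptron. This is only an illustration: it has no hidden layer, and the function names, the four-pixel toy "images," and the learning rate are all assumptions made for the example.

```python
def train_perceptron(samples, labels, epochs=20, rate=0.1):
    """Adjust weights whenever the output disagrees with the known target."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(epochs):
        for x, target in zip(samples, labels):
            activation = sum(w * xi for w, xi in zip(weights, x)) + bias
            output = 1 if activation > 0 else 0
            error = target - output  # zero when the output already matches
            if error:
                weights = [w + rate * error * xi for w, xi in zip(weights, x)]
                bias += rate * error
    return weights, bias


def classify(weights, bias, x):
    """Apply the trained weights to a new input pattern."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation > 0 else 0


# Hypothetical binary inputs: class 1 when the left half is bright.
samples = [[1, 1, 0, 0], [1, 0, 0, 0], [0, 0, 1, 1], [0, 0, 0, 1]]
labels = [1, 1, 0, 0]
weights, bias = train_perceptron(samples, labels)
predictions = [classify(weights, bias, x) for x in samples]
```

Networks with hidden layers follow the same idea, but the error has to be propagated backward through each layer before the weights are changed.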

In unsupervised learning mode, the correct output pattern of the neural network is not known. In such topologies as the Kohonen feature map, output representations are created in a feature map in which similar inputs activate output units that are close to one another. Using such unsupervised learning modes to classify different images, for example, will result in a neural network where neurons have been weighted to classify images by type (see Fig. 1).
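
A minimal sketch of this kind of self-organization, assuming a one-dimensional map and a Gaussian neighborhood, is shown here. The function names, decay schedule, and toy data are illustrative choices rather than part of any system described in this article.

```python
import math


def best_matching_unit(weights, x):
    """Index of the map unit whose weight vector is nearest to input x."""
    dists = [sum((wi - xi) ** 2 for wi, xi in zip(w, x)) for w in weights]
    return dists.index(min(dists))


def som_update(weights, x, rate, radius):
    """One unsupervised step: pull the winner and its map neighbors toward x."""
    winner = best_matching_unit(weights, x)
    for j, w in enumerate(weights):
        # Units close to the winner on the map move more than distant ones.
        influence = math.exp(-((j - winner) ** 2) / (2.0 * radius ** 2))
        weights[j] = [wi + rate * influence * (xi - wi) for wi, xi in zip(w, x)]
    return winner


def train_som(weights, samples, epochs=50, rate=0.5, radius=2.0):
    """Present the samples repeatedly while shrinking rate and radius."""
    for epoch in range(epochs):
        decay = 1.0 - epoch / epochs
        for x in samples:
            som_update(weights, x, rate * decay, max(radius * decay, 0.5))
    return weights


# Hypothetical two-component feature vectors; no class labels are supplied.
weights = [[0.1 * j, 0.5] for j in range(5)]
samples = [[0.0, 0.0], [0.1, 0.1], [0.9, 1.0], [1.0, 0.9]]
train_som(weights, samples)
units = [best_matching_unit(weights, x) for x in samples]
```

Because neighbors of the winning unit are also updated, inputs that resemble one another tend to end up activating units that sit close together on the map.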

Whereas unsupervised learning organizes image features in terms of a feature space, supervised learning can be used to further process such image classes. Because of this, large data sets can be reduced by using a combination of unsupervised and supervised learning methods. In the radial basis function (RBF) model, for example, classification of data is performed by analyzing input vector and stored prototype data represented as vectors in n-dimensional space. Once classification is complete, the system can learn by adjusting the weights in the network as a function of the new input and the weighted vector map already present.
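
One way to picture RBF classification is as a comparison between the input vector and each stored prototype, with closer prototypes responding more strongly. The sketch below assumes Gaussian units of a fixed width and hypothetical labels; it shows only the classification step, not the subsequent weight adjustment.

```python
import math


def rbf_classify(x, prototypes, width=1.0):
    """Return (label, scores) from Gaussian units centered on stored prototypes."""
    scores = {}
    for vector, label in prototypes:
        dist2 = sum((a - b) ** 2 for a, b in zip(x, vector))
        activation = math.exp(-dist2 / (2.0 * width ** 2))
        scores[label] = scores.get(label, 0.0) + activation
    return max(scores, key=scores.get), scores


# Hypothetical prototypes in a two-dimensional feature space.
prototypes = [([0.0, 0.0], "defect"), ([1.0, 1.0], "good")]
label, scores = rbf_classify([0.9, 0.8], prototypes)
```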

Analog goes digital

To accelerate image-pattern recognition and classification operations, many researchers turned to silicon devices to speed up neural-network processing capabilities. At first, such circuits were purely analog, allowing true neural capability to be mapped in silicon. Recently, however, designers have abandoned these often noisy, imprecise analog circuits in favor of digital circuits that allow arbitrary precision, noise immunity, and ease of system integration.

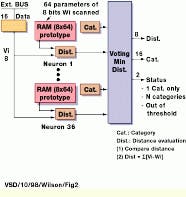

For example, at the IBM Essonnes Laboratory (Essonnes, France), researchers have developed the zero-instruction-set computer (ZISC), a 36-neuron single-chip neural network device for recognition and classification applications (see Fig. 2). Capable of performing RBF functions, this integrated circuit (IC) operates without any programming instructions. Instead, applications are developed only by reading or writing to the global registers common to all neurons and the registers specific to each neuron.

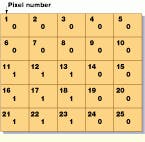

To perform edge detection using this IC, each ZISC neuron stores a typical subimage that falls in either the contour or noncontour category. Each neuron can store a subimage of up to 64 8-bit components, with each component corresponding to the gray level of one pixel. In the learning phase, subimage units of 7 × 7 pixels can be used to characterize a central pixel. This central pixel is then characterized as black or white and as a contour or not. In the classification phase, for each pixel in the original image, the ZISC compares the surrounding subimage with the stored subimages. The nearest subimage match permits the IC to highlight the pixel as either a contour or not.
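
The classification phase can be imitated in software as a nearest-prototype search over 7 × 7 neighborhoods (49 components, within the 64-component limit). The sketch below is not the ZISC register interface; the sum-of-absolute-differences distance, the function names, and the four hand-made prototypes are assumptions chosen to keep the example small.

```python
def patch_at(image, row, col, size=7):
    """Flatten the size x size neighborhood centered on (row, col)."""
    half = size // 2
    return [image[r][c]
            for r in range(row - half, row + half + 1)
            for c in range(col - half, col + half + 1)]


def l1_distance(a, b):
    """Sum of absolute gray-level differences between two patches."""
    return sum(abs(x - y) for x, y in zip(a, b))


def classify_pixel(patch, neurons):
    """Return the category of the stored subimage nearest to the patch."""
    return min(neurons, key=lambda n: l1_distance(patch, n[0]))[1]


def edge_map(image, neurons, size=7):
    """Mark each interior pixel whose nearest stored subimage is a contour."""
    half = size // 2
    height, width = len(image), len(image[0])
    out = [[0] * width for _ in range(height)]
    for r in range(half, height - half):
        for c in range(half, width - half):
            if classify_pixel(patch_at(image, r, c, size), neurons) == "contour":
                out[r][c] = 1
    return out


def step_patch(dark_cols, size=7):
    """Hypothetical training patch: dark columns on the left, bright on the right."""
    return [0 if c < dark_cols else 255 for _ in range(size) for c in range(size)]


# Each "neuron" is a (stored subimage, category) pair.
neurons = [
    (step_patch(7), "noncontour"),  # uniformly dark
    (step_patch(0), "noncontour"),  # uniformly bright
    (step_patch(3), "contour"),     # vertical step near the center
    (step_patch(4), "contour"),
]
image = [[0 if c < 6 else 255 for c in range(13)] for _ in range(7)]
edges = edge_map(image, neurons)
```

In practice the stored subimages would come from a learning phase on real image data rather than from synthetic step patterns.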

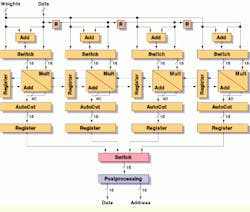

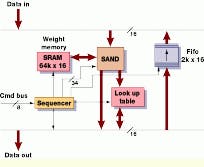

Like the ZISC, the simply applicable neural device (SAND) from the Microelectronics Institute (IMS; Stuttgart, Germany) can be used to model RBF networks; it can also model multilayer perceptrons and Kohonen feature maps. At the center of the IC are four arrays. Each array contains a multiplier and four adders, one of which serves as an accumulator. To implement a neural-network processor, a synchronous-RAM weight store, a first-in, first-out (FIFO) buffer, and a look-up table (LUT) are required (see Fig. 3). To control the memory management and the SAND chip, a field-programmable gate array (FPGA) is used to provide a simple macro command set.

In operation, input data consisting of weights and data are processed by the SAND IC. Depending on the operating mode, a nonlinear transfer function can be implemented as an external LUT. Outputs of the hidden neural-network layers are temporarily stored in the FIFO before again being processed by the SAND IC. According to researchers, the SAND architecture is already speeding the automated diagnosis of mammograms. A PCI board containing as many as four SAND devices is also available from datafactory GmbH (Leipzig, Germany).
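
The data flow just described (multiply-accumulate, table look-up, then buffering of hidden-layer outputs) can be modeled loosely in software. The sketch below is not the SAND command set; the 256-entry sigmoid table, its input range, and all function names are assumptions made for illustration.

```python
import math
from collections import deque

# Hypothetical look-up table sampling a sigmoid over the range [-8, 8).
LUT_SIZE = 256
LUT_RANGE = 8.0
SIGMOID_LUT = [
    1.0 / (1.0 + math.exp(-(-LUT_RANGE + 2.0 * LUT_RANGE * i / LUT_SIZE)))
    for i in range(LUT_SIZE)
]


def lut_transfer(value):
    """Nonlinear transfer function read from the table, clamped at the ends."""
    index = int((value + LUT_RANGE) * LUT_SIZE / (2.0 * LUT_RANGE))
    return SIGMOID_LUT[max(0, min(LUT_SIZE - 1, index))]


def mac_layer(inputs, weight_rows):
    """One layer: a multiply-accumulate per neuron, then the table look-up."""
    outputs = []
    for row in weight_rows:
        acc = 0.0
        for w, x in zip(row, inputs):
            acc += w * x
        outputs.append(lut_transfer(acc))
    return outputs


def run_network(inputs, layers):
    """Pass each layer's outputs through a FIFO before the next layer reads them."""
    fifo = deque(inputs)
    for weight_rows in layers:
        current = [fifo.popleft() for _ in range(len(fifo))]
        fifo.extend(mac_layer(current, weight_rows))
    return list(fifo)


# Hypothetical weights: two hidden neurons feeding one output neuron.
layers = [[[1.0, -1.0], [0.5, 0.5]], [[1.0, 1.0]]]
result = run_network([1.0, 0.0], layers)
```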

RAM-based nets

While many IC vendors are taking a formal approach to neural-network ICs, others are exploring different approaches for building pattern-recognition systems. In a patent granted last June, Arthur Gaffin and Brad Smallridge of SIGHTech Vision Systems (San Jose, CA) describe a novel RAM-based neural network that does not employ a feed-forward, recurrent, or laterally connected network. To classify and thus inspect complex images, image data are initially captured by a 720 × 480-pixel-resolution CCD camera in the company's Eyebot system.

To differentiate between good and bad images, binary image data from the camera are isolated as a series of overlapping 5 × 5-bit subregions. Binary values represent the pixel values of the image within the subregion (see Fig. 4). To reduce the large amount of data, a mathematical hashing or compression function such as an exclusive OR (XOR) gate or an invert (INV) gate is used on different pixels to reduce 25 bits to 19 bits. These 19 bits are then used to directly address a memory location in the Eyebot system.

If the system is in learn mode, the address is used to write a "1" into that memory location. If the system is in forget mode, then a "0" is placed in the memory location. By moving the 5 × 5 "kernel" over the complete 720 × 480 image, the Eyebot retains a representation of the image in the form of thousands of small shapes. After training, the count of recurring 1s and 0s represents how close the current image is to a trained image and allows the Eyebot to be deployed as a low-cost inspection or recognition machine-vision system.
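
The learn, forget, and scoring steps can be sketched as a bit-addressed memory. The actual pixel pairing used to fold 25 bits into 19 is not given here, so the XOR of six adjacent bit pairs below is purely an assumed stand-in, as are the function names and the small test images.

```python
RAM_BITS = 19  # address width after hashing


def hash_window(bits):
    """Fold 25 window bits into a 19-bit address (illustrative pairing only)."""
    folded = [bits[i] ^ bits[i + 1] for i in range(0, 12, 2)]  # 12 bits -> 6
    kept = bits[12:]                                           # 13 bits unchanged
    address = 0
    for b in folded + kept:
        address = (address << 1) | b
    return address


def windows(image, size=5):
    """Yield every overlapping size x size subregion as a flat list of bits."""
    height, width = len(image), len(image[0])
    for r in range(height - size + 1):
        for c in range(width - size + 1):
            yield [image[r + dr][c + dc] for dr in range(size) for dc in range(size)]


def train(ram, image, learn=True):
    """Learn mode writes 1 at each hashed address; forget mode writes 0."""
    for bits in windows(image):
        ram[hash_window(bits)] = 1 if learn else 0


def score(ram, image):
    """Fraction of subregions whose hashed address holds a 1."""
    hits = total = 0
    for bits in windows(image):
        hits += ram[hash_window(bits)]
        total += 1
    return hits / total


# Hypothetical 8 x 8 binary images standing in for camera frames.
ram = bytearray(1 << RAM_BITS)
good = [[1 if 2 <= r <= 4 and 2 <= c <= 4 else 0 for c in range(8)] for r in range(8)]
bad = [[1] * 8 for _ in range(8)]
train(ram, good)
good_score = score(ram, good)
bad_score = score(ram, bad)
```

A pass or fail decision would then come from comparing the score against a threshold chosen for the application.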

In a medical application for the Eyebot, Labcon (Marin, CA) has installed such a system for inspecting pipette tips. In operation, the system determines whether a filter has been placed inside the pipette, and, if not, it activates a reject mechanism to eject the pipette from the production process.

Like other areas of image processing, neural networks do not necessarily present a panacea to developers of medical, military, or machine-vision applications. However, as a useful pattern-matching and classification tool, the processing capabilities of neural networks must be considered. Indeed, when used with more commonplace image-processing functions such as edge detection, histogram equalization, and statistical analysis, improvements in neural networks are steadily working their way toward an accurate representation of the human-vision system.

FIGURE 1. Laterally connected neural networks, such as the Teuvo Kohonen feature map, consist of feed-forward inputs and a lateral layer or feature map where neurons organize themselves according to specific input values. Such networks can learn in unsupervised mode, creating output representations in a feature map in which similar inputs activate output units that are close to one another.

FIGURE 2. The IBM zero-instruction-set computer (ZISC) is a 36-neuron single-chip neural network device developed for recognition and classification applications (left). To perform edge detection using this IC, each ZISC neuron stores a typical subimage that falls in the contour or noncontour category. Subimage units of 7 × 7 pixels are used to characterize a central pixel as part of a contour or not (bottom).

FIGURE 3. The simply applicable neural device (SAND) IC can model RBF networks, multilayer perceptrons, and Kohonen feature maps. At the heart of the IC, four arrays each feature a multiplier and four adders, one of which serves as an accumulator (right). To implement a neural network processor, an external SRAM weight store, a FIFO buffer, a look-up table (LUT), and an FPGA are required (bottom).