Enabling deep learning for the everyday design engineer

Johanna Pingel, Product Marketing Manager, MathWorks

As deep learning techniques are increasingly adopted by technologies like speech recognition devices and document management systems, engineers and researchers are realizing that there is a substantial knowledge gap between deep learning experts and the broader population of design engineers. There is a growing need for engineers trained in deep learning, according to Johanna Pingel, Product Marketing Manager, MathWorks, but to successfully train these individuals, a scalable and intuitive modeling system is required.

In this Q&A, Pingel elaborates on current deep learning challenges and opportunities, and how MathWorks is adding new and important deep learning capabilities that simplify how engineers, researchers, and other domain experts design, train, and deploy models.

In recent years we have experienced exponential growth in the capabilities of deep learning technologies. In your opinion, what have been the main factors driving the development of this technology?

Johanna Pingel: According to Gartner’s 2017 Hype Cycle for Emerging Technologies, deep learning reached megatrend status this past year thanks to a few contributing factors. While deep learning has been around for at least 20 years, the availability of massive data sets in the form of Internet images and videos, the exponential increase in the computing power of graphics processing units (GPUs), and the prevalence of deep learning networks have led to the maturation of deep learning systems.

One point that is perhaps not discussed enough is how difficult it can be for people who are not deep learning experts to integrate the technology into their own designs. What do you feel are the biggest challenges for non-experts?

Deep learning poses many challenges for experts and non-experts alike. In today’s landscape, some of the most common challenges result from the massive amounts of data made available by the Internet. While the Internet holds a vast collection of still and moving images, the sheer volume of this data can be a significant obstacle for anyone who needs to capture and label the thousands, or even millions, of images required for image classification, object detection, or semantic segmentation.

A lack of familiarity with deep learning frameworks, such as Caffe and TensorFlow, is another potential stumbling block for scientists and engineers. Not only can it take time to learn a new programming language, but the process also often demands specialized programming skills that are outside the domain of the non-expert.

Are there resources that engineers and users can access to acquire necessary deep learning knowledge?

We are increasingly realizing that there is a shortage of engineers trained in deep learning, making it imperative for non-expert engineers and scientists to acquire deep learning knowledge quickly. Unfortunately, too little instructional material is available to meet this need, an absence that creates another barrier for engineers as they seek to tap the power of deep learning. Couple this with the lack of a comprehensive workflow that is shareable and easy to use, and engineers are left at a dead end as they struggle to adapt to this new technological language.

In looking at these problems, there seems to be a discrepancy between where engineers currently stand with deep learning and where they need to be. What are companies, including yours, doing to remove these hurdles?

For years, vendors like MathWorks have been dedicated to developing scalable yet familiar tools to help engineers address the steep learning curve associated with emerging technologies. Deep learning is one of the newest and most in-demand technologies, but very few engineers understand how to properly integrate it into their designs. MathWorks’ Release 2017b addresses this industry need with deep learning capabilities that simplify how engineers, researchers, and other domain experts design, train, and deploy models.

Can you tell our readers a bit more about these tools and how they help with adopting deep learning?

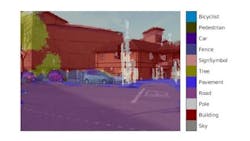

One of the major tools made available in Release 2017b is the new Image Labeler app in our Computer Vision System Toolbox. This tool provides a convenient and interactive way to label ground-truth data pulled from a sequence of images. It also supports semantic segmentation using deep learning to classify pixel regions in images and evaluate and visualize segmentation results.

Release 2017b has also added functionality to our Neural Network Toolbox, which now supports complex deep learning architectures, including directed acyclic graph (DAG) and long short-term memory (LSTM) networks, and provides access to popular pretrained models such as GoogLeNet.

These additions let engineers label data quickly and accurately, a task that previously was incredibly tedious and expensive, while working in an environment that is familiar to them.

Do the capabilities of the latest release address other issues commonly associated with training a deep learning model?

Yes. For example, we have built GPU model training into Release 2017b, which eliminates the need for manual coding when setting up single-GPU or even multi-GPU machines. We’ve also included GPU Coder in 2017b, which explicitly targets GPUs for code optimization. This allows engineers and scientists working in MATLAB to automate the task of converting deep learning models to CUDA code for NVIDIA GPUs.

We’ve also taken further steps to address final model deployment. New capabilities provide the flexibility to target a variety of deployment solutions, such as enterprise solutions, web applications, and embedded GPU applications.

How, in your opinion, does MATLAB differentiate itself from the competition?

When faced with the challenge of creating more intelligent products and applications, engineers are forced either to develop deep learning skills themselves or to outsource to other teams that may have the expertise but lack the application context. This is where MATLAB is perhaps most differentiated: we enable domain experts to use deep learning in their systems without the need for a deep learning expert.

We also provide training guides, videos, use cases, and online tutorials like Learn to Use MATLAB for Deep Learning in 2 Hours. These are readily available to engineers and scientists and provide the background they need to master deep learning tools without becoming deep learning experts.

Pictured: Semantic segmentation using deep learning. Copyright: 1984–2018 The MathWorks, Inc.

Share your vision-related news by contacting James Carroll, Senior Web Editor, Vision Systems Design
