Census transform brings stereo to embedded systems

Andrew Wilson, Editor

In applications such as tracking people within industrial workspaces, mobile robot navigation, and targeting, 3-D representations of image data must be captured accurately and at high resolution. While classic correlation approaches such as normalized cross correlation (NCC), sum of absolute differences (SAD), and sum of squared differences (SSD) algorithms can be used, the computational demands of such algorithms necessitate FPGA, DSP, or combined DSP/FPGA implementations.
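As a rough illustration of what these three matching costs compute, the following Python sketch scores two small image patches given as flat lists of intensities. The function names are hypothetical, and the NCC shown is the zero-mean variant, which is an assumption; neither is taken from any of the products described here.

```python
import math


def sad(patch_a, patch_b):
    """Sum of absolute differences: lower means more similar."""
    return sum(abs(a - b) for a, b in zip(patch_a, patch_b))


def ssd(patch_a, patch_b):
    """Sum of squared differences: lower means more similar."""
    return sum((a - b) ** 2 for a, b in zip(patch_a, patch_b))


def ncc(patch_a, patch_b):
    """Zero-mean normalized cross correlation: values near 1.0 mean similar."""
    mean_a = sum(patch_a) / len(patch_a)
    mean_b = sum(patch_b) / len(patch_b)
    dev_a = [a - mean_a for a in patch_a]
    dev_b = [b - mean_b for b in patch_b]
    denom = math.sqrt(sum(a * a for a in dev_a) * sum(b * b for b in dev_b))
    if denom == 0:
        return 0.0  # flat patch: correlation is undefined, report 0
    return sum(a * b for a, b in zip(dev_a, dev_b)) / denom
```

In a stereo matcher, a cost like this is evaluated for every pixel at every candidate disparity, which is where the heavy computational load comes from.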

Last year, for example, Focus Robotics (Hudson, NH, USA; www.focusrobotics.com) introduced its nDepth Vision processor, an OEM subassembly featuring two off-the-shelf CMOS imagers interfaced to an FPGA development board from Xilinx that uses the SAD algorithm to compute a depth map. While the same algorithm running on a general-purpose processor can only achieve a few frames per second on 256 × 256 images, the nDepth processor can run at 30 frames/s on 752 × 480 images (see Vision Systems Design, August 2005, p. 23).

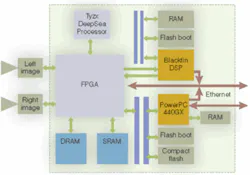

This month, Tyzx (Menlo Park, CA, USA) expects to introduce an embedded stereo camera, the G2, that incorporates two CMOS imagers, a PowerPC, a Blackfin DSP, and an FPGA. Rather than use algorithms such as NCC, SAD, or SSD, the camera incorporates a Tyzx Census transform correlation-based ASIC that the company claims performs better than traditional approaches.

“Census transform correlation is an efficient, robust algorithm for visual correspondence,” says Ron Buck, CEO of Tyzx. “The algorithm consists of a transform and a correlation step. In the transform step, the intensity of a pixel within a region of interest is compared with the intensity of pixel values in its neighborhood. If the intensity of the neighbors is less than the original pixel, a ‘1’ is stored in a bit string, otherwise a ‘0’ is stored. This is done for images taken with both cameras. After the bit strings are computed, correlation compares the individual bit strings using the Hamming distance. This provides a measure of the number of errors that transform one string into the other and thus the degree of correlation between the two images.”
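The two steps Buck describes can be sketched in a few lines of Python. This is a minimal illustration, not the Tyzx ASIC design: the 3 × 3 neighborhood, the row-major bit ordering, the single-pixel comparison along a scanline, and all function names are assumptions made for the example.

```python
def census_transform(image, radius=1):
    """Return a per-pixel bit string (as an int) for a 2-D list of intensities.

    Each neighbor contributes a 1 if it is darker than the center pixel,
    otherwise a 0. Border pixels without a full neighborhood are left at 0.
    """
    height, width = len(image), len(image[0])
    codes = [[0] * width for _ in range(height)]
    for y in range(radius, height - radius):
        for x in range(radius, width - radius):
            center = image[y][x]
            bits = 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    if dy == 0 and dx == 0:
                        continue  # the center is not compared with itself
                    darker = image[y + dy][x + dx] < center
                    bits = (bits << 1) | (1 if darker else 0)
            codes[y][x] = bits
    return codes


def hamming_distance(code_a, code_b):
    """Number of bit positions in which two census codes differ."""
    return bin(code_a ^ code_b).count("1")


def best_disparity(left_codes, right_codes, y, x, max_disparity):
    """Search along one scanline for the shift with the lowest Hamming cost.

    Assumes rectified images, so the match for left pixel (y, x) lies at
    (y, x - d) in the right image. Returns (disparity, cost).
    """
    best_d, best_cost = 0, None
    for d in range(max_disparity + 1):
        if x - d < 0:
            break  # candidate would fall outside the right image
        cost = hamming_distance(left_codes[y][x], right_codes[y][x - d])
        if best_cost is None or cost < best_cost:
            best_d, best_cost = d, cost
    return best_d, best_cost
```

Because the comparison reduces to an XOR followed by a bit count, it maps naturally onto simple logic, which is consistent with the claim that the algorithm suits hardware implementation. A practical matcher would typically sum the Hamming distances over a window rather than compare single pixels as this sketch does.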

Three properties

“Census transform correlation exhibits three key properties,” says Buck. “It performs well near discontinuities and is an efficient algorithm that can readily be implemented in hardware. Perhaps the most important property of the algorithm, however, is that it does not assume or require that corresponding points have constant brightness. In 3-D systems this is very important, since such systems use two cameras that will have different internal properties. Other factors may also cause corresponding points to have different intensities, such as changes in scene illumination and viewing angle.”

Census transform correlation does not need corresponding points to have constant brightness because it uses only the ordering of intensities within a neighborhood, which is not affected by camera parameters. When objects have sufficient texture, the ordering of intensities is only slightly influenced by changes in illumination or viewing angle.
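A small numerical example makes the point concrete. In the Python sketch below, the second camera is modeled as applying a gain of 1.2 and an offset of 10 to the same 3 × 3 patch; this camera model, the patch values, and the function names are all assumptions chosen for illustration. The census code is unchanged because the ordering of intensities is preserved, while an intensity-based cost such as SAD reports a large difference.

```python
def census_code(patch):
    """Census bit string for a flat, odd-length patch; the center is the middle element."""
    center_index = len(patch) // 2
    center = patch[center_index]
    bits = 0
    for i, value in enumerate(patch):
        if i == center_index:
            continue
        bits = (bits << 1) | (1 if value < center else 0)
    return bits


def sad(patch_a, patch_b):
    """Sum of absolute differences between two patches."""
    return sum(abs(a - b) for a, b in zip(patch_a, patch_b))


# The same scene patch as seen by two cameras (hypothetical values).
left_patch = [52, 60, 71, 48, 65, 80, 40, 66, 90]
# Assumed second camera: gain 1.2 and offset 10, in integer arithmetic.
right_patch = [(12 * v) // 10 + 10 for v in left_patch]

same_code = census_code(left_patch) == census_code(right_patch)
intensity_cost = sad(left_patch, right_patch)
print("census codes match:", same_code)
print("SAD between patches:", intensity_cost)
```

Any change that keeps darker pixels darker than the center leaves the code untouched; a change that reorders intensities, such as a strong specular highlight, would not.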

To generate 2- and 3-D data sets where such functions as clipping can be performed in x, y, and z axes, the G2 supports what Tyzx calls visual primitives similar in concept to the graphics primitives used in OpenGL. The first of these, Stereo Correlation, takes the right and left images from the camera and generates an output range image using the Census transform. Range and intensity data can then be fed to a background modeling primitive that generates a foreground/background map. The 3-D data are then projected into a Euclidean volume that can be manipulated and transformed as a graphical map.

“At 30 frames/s, 512 × 480 images are fed into the range processor; range results and rectified images are then processed by background modeling, and the results are fed to the projection algorithm. Finally, color, range, and projection results are DMA’d into the PowerPC,” says Buck.

To further develop the technology, Tyzx has recently received a $4 million investment from Takata (Tokyo, Japan; www.takata.com), a manufacturer of automotive safety systems. This follows an earlier investment by Microsoft cofounder Paul Allen.