June 2014 Snapshots: Critter cams, IR imaging for F1 racing, document scanning, web inspection, activity recognition algorithm

Founded in 1915, the Cornell Lab of Ornithology (Ithaca, NY, USA;www.birds.cornell.edu) is dedicated to the study and conservation of birds. Since 2012, the organization has been expanding its web streaming offerings to enable users to study various species of birds.

The lab has a number of camera sites installed throughout the world. In Ithaca, New York, for example, Cornell has installed a P3364-LVE fixed dome network camera from Axis Communications (Lund, Sweden; www.axis.com) at a great blue heron nest. The 1 MPixel AXIS P3364-LVE PoE camera features a 1/3in progressive scan RGB CMOS image sensor, runs at 25-30 fps, and supports multiple individually configurable video streams in H.264 and Motion JPEG with controllable frame rate and bandwidth. Since its installation, the camera has enabled more than 1.4 million people to watch herons return in spring and raise their young (http://bit.ly/1rtQvpH).

Cornell researchers have also installed an AXIS Q6044 PTZ network camera at an albatross nest in Kauai, Hawaii. Similar in features to the AXIS P3364-LVE PoE camera, the AXIS Q6044 PTZ camera also offers motion detection, electronic image stabilization, automated defog, and a removable infrared-cut filter. In addition to these two installations, Cornell has installed cameras for live viewing of barn owls in Texas and Indiana, red-tailed hawks in Ithaca, New York, and ospreys in Missoula, Montana.

FLIR Systems to provide IR technologies to racing team

FLIR Systems (Wilsonville, OR, USA; www.flir.com) has been named an innovation partner of the Infiniti Red Bull Racing team (Buckinghamshire, United Kingdom; www.infiniti-redbullracing.com), the winner of four consecutive Formula One World Championships in both the Constructors' and Drivers' categories. The racing team will use FLIR Systems' IR technologies to gather temperature data on its 2014 challenger, the RB10 race car.

FLIR will use its Lepton miniature thermal imaging cameras to provide the Infiniti Red Bull Racing team with insights into the thermal characteristics of its car's components and operations. With the introduction of 1.6-liter V6 turbocharged engines with dual energy recovery systems, Formula One teams face new challenges in cooling engines and managing engine temperature.

Of the new collaboration and cooling challenges, Christian Horner, Team Principal of Infiniti Red Bull Racing, said, "This year sees the most fundamental changes to Formula One in well over a decade. The team most efficient in gathering relevant data and adapting accordingly will be the one which triumphs."

Lepton cameras are about the size and weight of a conventional CMOS cell phone camera module and feature an 80 x 60 pixel uncooled VOx microbolometer array with a pixel size of 17 x 17 µm and a frame rate of 9 Hz. The 8.5 x 8.5 x 5.9 mm fixed-focus camera features a spectral range of 8-14 µm, a 50° horizontal field of view, and a thermal sensitivity of <50 mK. Lepton utilizes wafer-level detector packaging, wafer-level micro-optics, and a custom integrated circuit that supports all camera functions on a single IC.

Vision system scans stuffed envelopes

A manufacturer of inserting and mailing equipment recently found itself with a potential customer that needed to capture images of the front of stuffed envelopes and perform optical character recognition (OCR) on the address blocks. To develop this system, the company sought the expertise of Dan Houseman, owner of Savvy Engineering (St Louis, MO, USA; www.savvyengineering.com).

In the document scanning application, images of envelopes of different sizes with address windows in varying locations needed to be captured. Houseman decided to use TCE-1024-U line scan cameras from Mightex (Toronto, ON, Canada; www.mightexsystems.com). These 1024-pixel USB 2.0 cameras feature CCD image sensors with a 14 x 14 µm pixel size and are capable of line rates of 25,000 Hz in 8-bit normal mode and 10,000 Hz in 12-bit normal mode, as well as 11,000 Hz in both 8- and 12-bit trigger modes. The cameras are supplied with a software development kit to allow users to develop proprietary applications.

The cameras are mounted inside a custom housing along with custom-fabricated LED strip lighting powered by the PC's internal 12V power supply. After the envelopes are stuffed and travel through postage metering, they move through the scanner at a speed of 1300 mm/s.

"The camera scan rate is slightly more than 10,000 lines per second and the achievable resolution is about 180 DPI," Houseman says.
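The scan rate, transport speed, and resolution quoted above are linked by simple arithmetic. The sketch below is only a back-of-the-envelope check, not Houseman's calculation: it assumes a constant transport speed and considers only the sampling density along the direction of travel. The function names are hypothetical.

```python
MM_PER_INCH = 25.4


def along_transport_dpi(line_rate_hz: float, transport_speed_mm_s: float) -> float:
    """Scan lines captured per inch of envelope travel."""
    mm_per_line = transport_speed_mm_s / line_rate_hz
    return MM_PER_INCH / mm_per_line


def required_line_rate_hz(target_dpi: float, transport_speed_mm_s: float) -> float:
    """Line rate needed to reach a target DPI at a given transport speed."""
    return target_dpi * transport_speed_mm_s / MM_PER_INCH


# Figures from the article: 10,000 lines/s and 1300 mm/s.
dpi = along_transport_dpi(10_000, 1300)
rate = required_line_rate_hz(180, 1300)
print(f"{dpi:.1f} lines per inch along the transport direction")
print(f"{rate:.0f} lines/s needed for 180 DPI")
```

At these figures the along-transport sampling works out to a little under 200 lines per inch, in the same range as the quoted 180 DPI. The difference may reflect the cross-direction optics or other factors the article does not describe.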

As the documents are scanned, the PC runs two custom applications—one for the acquisition of images and the other for OCR.

The image acquisition application transfers images as JPEG files into a "hot folder," and the OCR application monitors the folder for new images, stores the OCR data in a database, and moves the image into a selected storage folder. The OCR application runs at a lower priority level than the image acquisition app so that images are not at risk of being missed, according to Houseman.
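The hot-folder handoff described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the custom applications Houseman wrote: the table layout, the function names, and the `ocr` callable are all hypothetical, and the demo substitutes a stand-in for a real OCR engine.

```python
import shutil
import sqlite3
import tempfile
from pathlib import Path
from typing import Callable


def open_database(db_path: str) -> sqlite3.Connection:
    """Open (or create) the store for OCR results. Schema is an assumption."""
    conn = sqlite3.connect(db_path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS mailings "
        "(filename TEXT PRIMARY KEY, address_text TEXT)"
    )
    return conn


def process_hot_folder(hot: Path, archive: Path, conn: sqlite3.Connection,
                       ocr: Callable[[Path], str]) -> int:
    """Run one pass: OCR each new JPEG, store the text, move the image."""
    archive.mkdir(parents=True, exist_ok=True)
    handled = 0
    for image in sorted(hot.glob("*.jpg")):
        text = ocr(image)  # placeholder for a real OCR call
        conn.execute(
            "INSERT OR REPLACE INTO mailings VALUES (?, ?)", (image.name, text)
        )
        conn.commit()
        # Move the image only after its OCR data is safely stored.
        shutil.move(str(image), str(archive / image.name))
        handled += 1
    return handled


# Demo with temporary folders and a stand-in OCR function.
with tempfile.TemporaryDirectory() as tmp:
    hot, archive = Path(tmp, "hot"), Path(tmp, "archive")
    hot.mkdir()
    (hot / "envelope_0001.jpg").write_bytes(b"not a real JPEG")
    conn = open_database(":memory:")
    handled = process_hot_folder(hot, archive, conn, ocr=lambda p: "123 MAIN ST")
    rows = conn.execute("SELECT filename, address_text FROM mailings").fetchall()
    remaining = list(hot.glob("*.jpg"))
    conn.close()
```

In a running system this pass would be repeated on a timer or triggered by file-system notifications, and it would need to guard against picking up a file that the acquisition application is still writing.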

The images and data captured by the system serve as a "proof of mailing" that the manufacturer may reference at a later date.

Machine vision system identifies web defects

Manufacturers of materials such as plastics, papers, foils, films, metals and non-wovens require 100% surface inspection, which enables them to remain competitive and meet regulatory requirements by identifying and resolving problems early in the production process so that defective goods do not reach the market. Realizing this, Active Inspection (Grand Rapids, MI, USA;www.activeinspection.com) has developed its AI Surface vision system to perform web inspection on a number of materials including glossy, matte, and textured surfaces. Defects identified by the AI Surface inspection system include gels, skips, pinholes, roll-marks, holes, and scratches.

Depending on the complexity of a particular application, AI Surface includes up to eight 4k or 8k line scan cameras. For lower-end applications, the system uses Teledyne DALSA (Waterloo, ON, Canada;www.teledynedalsa.com) Piranha2 4k cameras connected to a Solios eV-CL PCIe Camera Link frame grabber from Matrox Imaging (Dorval, QC, Canada; www.matrox.com/imaging). Piranha2 4k monochrome cameras feature a CMOS 4096 x 1 pixel sensor with a 7 x 7 µm pixel size. These cameras, which feature base Camera Link output format, achieve a maximum line rate of 18 kHz.

For higher-end applications, the AI Surface system uses four Teledyne DALSA Piranha3 8k monochrome cameras connected to a Matrox Radient eCL PCIe Camera Link frame grabber. Piranha3 8k cameras feature an 8192 x 1 pixel CMOS image sensor with a 7 x 7 µm pixel size. These cameras, which feature Camera Link medium or full output format, achieve a maximum line rate of 33 kHz.

In addition to the cameras, the AI Surface inspection system employs Lotus LED line lights from ProPhotonix (Salem, NH, USA;www.prophotonix.com) for illumination, a multi-CPU Windows-based workstation, a National Instruments (Austin, TX, USA; www.ni.com) DAQ for data acquisition, a marker/tagger, an encoder, and a Microsoft SQL database server.

Images captured by the Piranha cameras are processed using the Matrox Imaging Library (MIL). Processing operations used include convolution, binarization, morphology (dilate and erode), flat-field correction, and projection. AI Surface inspects a web that is 130 in wide at a rate of 670 ft/min. The inspection system can detect defects as small as 0.008 x 0.008 in.
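To give a feel for how several of the listed operations fit together, the following sketch chains flat-field correction, binarization, an erode-then-dilate pass, and a column projection on a synthetic frame. It uses plain NumPy rather than the Matrox library, and the thresholds, function names, and test image are assumptions made for illustration; it is not Active Inspection's processing chain.

```python
import numpy as np


def flat_field_correct(raw, dark, flat):
    """Normalize out uneven illumination; 1.0 means nominal web brightness."""
    gain = flat.astype(float) - dark
    gain = np.where(gain <= 0, 1.0, gain)  # avoid division by zero
    return (raw.astype(float) - dark) / gain


def binarize(img, low=0.8, high=1.2):
    """Flag pixels that are much darker or brighter than nominal."""
    return (img < low) | (img > high)


def _neighborhood_stack(mask, pad_value):
    """Stack the nine 3 x 3 neighborhood shifts of a boolean mask."""
    padded = np.pad(mask, 1, constant_values=pad_value)
    h, w = mask.shape
    return np.stack([padded[r:r + h, c:c + w] for r in range(3) for c in range(3)])


def erode(mask):
    return _neighborhood_stack(mask, True).all(axis=0)


def dilate(mask):
    return _neighborhood_stack(mask, False).any(axis=0)


def column_projection(mask):
    """Count flagged pixels in each cross-web column."""
    return mask.sum(axis=0)


# Synthetic frame: illumination ramps across the web, constant dark level.
flat = np.tile(np.linspace(150.0, 250.0, 64), (32, 1))
dark = np.full((32, 64), 10.0)
raw = flat.copy()
raw[10:13, 20:23] = dark[10:13, 20:23] + 0.3 * (flat - dark)[10:13, 20:23]  # dark defect
raw[5, 50] = dark[5, 50] + 1.5 * (flat - dark)[5, 50]  # single-pixel noise

mask = binarize(flat_field_correct(raw, dark, flat))
cleaned = dilate(erode(mask))  # erode then dilate removes isolated pixels
projection = column_projection(cleaned)
defect_columns = np.flatnonzero(projection).tolist()
```

Here the erode-then-dilate step discards the isolated noisy pixel while keeping the 3 x 3 defect, and the projection localizes the defect to a few cross-web columns.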

The system, which features a touchscreen HMI that provides real-time feedback and tuning, supports alarms, flagging, machine control, and waste removal actions. When a serious class of defect occurs, the operator sees the defect on the screen and a designated action sequence is activated, such as driving a downstream reject device.

Knowlton Technologies (Watertown, NY, USA;www.knowlton-co.com), a manufacturer of nonwoven materials, has recently installed the AI Surface inspection system. "AI Surface let us remove three workers from a 100% manual inspection procedure and allowed us to realize a quick return on our investment," said Richard F. Barlow, Advanced Materials Engineer at Knowlton Technologies. "Also, the user interface is configured to allow the creation of multiple custom classifiers for various customer requirements."

Researchers develop activity recognition algorithm

Hamed Pirsiavash, a postdoc at MIT (Cambridge, MA, USA; www.mit.edu), and his former thesis advisor, Deva Ramanan of the University of California at Irvine (Irvine, CA, USA; www.uci.edu), have developed an activity recognition algorithm that uses techniques from natural language processing to enable computers to more efficiently search videos. The algorithm analyzes a partially completed action and issues a probability that the action is of the type that it is looking for. It may revise this probability as the video continues, but does not have to wait until the action is complete to assess it.
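The idea of issuing a probability early and revising it as more video arrives can be illustrated with a generic recursive Bayesian update. This is not Pirsiavash and Ramanan's method, whose details are not given here; it is a simplified sketch that assumes some hypothetical per-frame scorer supplies the likelihood of each observation under "target action" and "any other action".

```python
def update_posterior(prior: float, lik_target: float, lik_other: float) -> float:
    """One Bayes step: fold a new frame's evidence into the running probability."""
    numerator = prior * lik_target
    return numerator / (numerator + (1.0 - prior) * lik_other)


def running_probabilities(prior, frame_likelihoods):
    """Return the probability of the target action after each frame."""
    probabilities = []
    p = prior
    for lik_target, lik_other in frame_likelihoods:
        p = update_posterior(p, lik_target, lik_other)
        probabilities.append(p)
    return probabilities


# Made-up likelihood pairs (target, other) for four consecutive frames.
frames = [(0.6, 0.4), (0.7, 0.3), (0.4, 0.6), (0.8, 0.2)]
probs = running_probabilities(0.5, frames)
for i, p in enumerate(probs, start=1):
    print(f"after frame {i}: P(target action) = {p:.3f}")
```

An estimate is available after the first frame, it dips when a frame looks unlike the target action, and it recovers as supporting evidence accumulates.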

Pirsiavash and Ramanan's algorithm uses aspects of natural language processing. "One of the challenging problems natural language algorithms attempt to solve is what the subject, verb and adverb used in a sentence might be," Pirsiavash says. "Similarly, a complex action, like making tea or making coffee, has a number of sub-actions." For the algorithm to work, a library of training videos depicting a particular action must be provided and its sub-actions specified.

The researchers tested their algorithm on eight different types of athletic endeavors with videos from YouTube and found that it identified new instances of the same activities more accurately than existing algorithms. Pirsiavash suggests the algorithm could be used for medical purposes to determine whether elderly patients remembered to take medication.

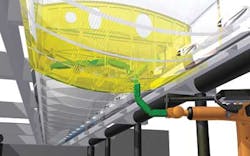

Robots automate aircraft wing assembly

While certain parts of the automated aircraft assembly process can be performed with relative ease, wing assembly remains a complex task. Hollow chambers within the wing are accessed through narrow 45 cm x 25 cm openings, making it difficult for workers to fit bolts and seal joints. For each individual wing box, approximately 3,000 drilling and sealing actions are required. As a result, researchers at the Fraunhofer Institute for Machine Tools and Forming Technology IWU (Chemnitz, Germany; www.iwu.fraunhofer.de/en) have developed an articulated robotic system to automate the process.

"The robot is equipped with articulated arms consisting of eight series-connected elements which allow them to be rotated or inclined within a very narrow radius to reach the furthest extremities of the wing box cavities," said IWU project manager Marco Breitfeld.

Each of the robot's eight limbs is fitted with either a tool for drilling and sealing or a camera. Each arm is 2.5 m long and capable of supporting tools weighing up to 15 kg in addition to its own weight. The robot is driven by an intricate gear system, and a small motor is integrated into each of the robot's arms. Used in conjunction with a cord-and-spindle drive system, each section of the robot arm can be moved independently and turned through an angle of up to 90°.

Fraunhofer researchers are currently testing the design and will demonstrate the robot at the Automatica trade show in Munich.

UAV features 14 MPixel camera

Designed for both indoor and outdoor flying operations of 12 min at a time, the Bebop Drone, an unmanned aerial vehicle (UAV) from Parrot (Paris, France; www.parrot.com), employs a 14 MPixel camera with a 1/2.3in CMOS image sensor running at 30 fps. The camera has a 180° fisheye lens, H.264 video encoding, and a 3-axis digital image-stabilization system.

Users control the Bebop via a Wi-Fi connection using the iOS and Android compatible FreeFlight3.0 app for tablets and smartphones. On-screen controls allow movement and vision functions to be adjusted while the drone beams back a live video feed to the tablet. Users can also plan flights on a smartphone or tablet by touching waypoints on the screen and letting the UAV autonomously navigate. Images can also be viewed using the Oculus Rift (Irvine, CA, USA;www.oculusvr.com) virtual reality headset.

With the integration of a 3-axis stabilization system and a 14 MPixel camera, Parrot has placed an emphasis on the Bebop's ability to capture high-resolution images. In addition, Bebop is the first UAV from Parrot that can stream live video to a phone or tablet. It will be interesting to see what types of applications the UAV is used for, especially considering the recent ruling that civilian UAVs in public airspace are not, in fact, illegal.
