Imaging Upgrades Border and Port Control

Visible and infrared subsystems combine to enable cost-efficient wide-area surveillance

Winn Hardin, Contributing Editor

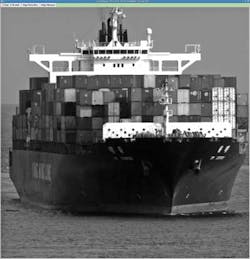

One of the most difficult challenges facing the United States in border and customs control is sheer scale. The Canadian/US border alone is 5500 miles long. The US/Mexico border is nearly 2000 miles long, and neither figure includes the Atlantic and Pacific coastlines, the Gulf of Mexico, or the thousands of miles of rivers that provide access to regions inside the country.

Posting border officials along some 15,000 miles is not feasible, nor is covering that distance with traditional CCTV video surveillance. However, the size of US borders is only one of the challenges facing border protection. Surveillance data must also be available in real time to multiple government agencies in an easy-to-use, intuitive format.

As part of a recent technology evaluation program sponsored by the US Department of Homeland Security (DHS) Science and Technology Directorate, Innovative Signal Analysis (ISA) has demonstrated a persistent surveillance system to help meet these challenges. ISA's daytime and nighttime WAVcam sensors are being evaluated in an operational testbed environment at Angel's Gate in California, the entrance to the ports of Long Beach and Los Angeles.

The system offers large-area (~7–50 square miles for human detection, depending on the selected sensor types and lenses), wide-angle (90°) surveillance with automated change detection and change-history display in day and night conditions. A real-time data server allows users in the US Coast Guard, the port authorities of both Long Beach and Los Angeles, and US Customs and Border Protection (CBP) to simultaneously access and control the surveillance video as part of the evaluation (see Fig. 1). To help officials identify potential threats across the 80-Mpixel panoramic video feed, the WAVcam system uses specialized image-processing algorithms to detect moving objects and maintain a history of their tracks over time.

To put the new product into perspective, one WAVcam sensor provides continuous surveillance coverage and high-resolution imagery that would otherwise require 40 or more conventional surveillance cameras, according to Wayne Tibbit, business development manager for ISA. Measured against the total investment in a surveillance system's deployment and infrastructure, that consolidation results in substantial cost efficiencies.

High-res surveillance

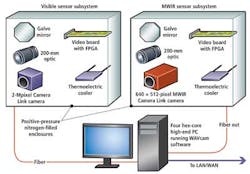

In its simplest form, the WAVcam system is essentially two boxes: a sensor subsystem and an image-processing unit/video archive/server. The third element is a client-side software program, WAVclient, that allows any user with access to the network to control the video feed as if they controlled the camera subsystem. The sensor subsystems can include visible cameras for daytime surveillance or midwave infrared (MWIR) cameras for nighttime surveillance.

At the Angel's Gate installation and technology demonstration, DHS asked ISA to supply two sensor subsystems to provide long-range daytime and nighttime surveillance: a WAVcam-VIS and a WAVcam-MIR. Both subsystems include a camera, camera optics, an astronomy-grade mirror with motion controls, and a processing board. The board handles the camera and optics interfaces and control functions, inertial sensing, and image processing; it also compresses the images and bundles video data with sensor data for TCP/IP communication to the local area network (LAN). Each subsystem also includes temperature sensors and control functions (see Fig. 2).

The daytime WAVcam sensor subsystem contains an Imperx Bobcat 2-Mpixel, visible CCD camera with Camera Link output. “The method for creating the high-resolution panoramic image is stepping the camera's instantaneous field of view across the subsystem's 90°-wide field of view in 2° increments every few milliseconds,” explains ISA's Tibbit. “The Bobcat runs at up to 41 frames/s, and we spread those frames across the entire 90° field of view. So it takes between 1 and 2 s to acquire a new panoramic image. The images are stitched together in our downstream processing algorithms into a single ‘Wide Area View,’ hence the name WAVcam.”
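The arithmetic behind Tibbit's update-rate figure can be sketched in a few lines. This is only a back-of-the-envelope illustration, not ISA's scan controller: the function name `panorama_timing` is hypothetical, and the calculation assumes one frame per 2° step with no overlap between steps and no mirror settling time.

```python
import math

def panorama_timing(total_fov_deg=90.0, step_deg=2.0, frame_rate_hz=41.0):
    """Return (frames per sweep, seconds per sweep) for a step-stare scan.

    Assumes one frame per step, no overlap between adjacent frames, and no
    mirror settling time; these are simplifications, not figures from ISA.
    """
    frames = math.ceil(total_fov_deg / step_deg)
    seconds = frames / frame_rate_hz
    return frames, seconds

frames, seconds = panorama_timing()
raw_mpixels = frames * 2.0  # nominal 2-Mpixel camera, before any overlap or cropping
print(f"{frames} frames per sweep, about {seconds:.2f} s at the full frame rate")
print(f"roughly {raw_mpixels:.0f} Mpixel of raw imagery per sweep")
```

Under these assumptions a sweep needs 45 frames and just over 1 s at the camera's maximum rate, which sits at the low end of the 1 to 2 s range Tibbit cites. Any settling time or slower frame rate would push the figure toward the upper end. The raw pixel count of about 90 Mpixel is of the same order as the 80-Mpixel panorama quoted earlier; the difference presumably reflects overlap or cropping, which the article does not detail.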

Field operations have shown that this update rate is fully effective over the ranges and wide areas the system is designed to observe. Tibbit says the initial WAVcam generation uses fixed-focal-length lenses, but subsequent models will incorporate active telephoto zoom technology in addition to the current digital zoom technology.

ISA's team used the US Army's Night Vision Thermal Imaging Systems Performance Model (NVTherm) for IR system performance modeling to ensure the camera/optics combination met the minimum requirement of placing 2–3 pixels on a human-sized target anywhere within the coverage range, which extends to 3 miles for the Angel's Gate installation of the WAVcam night sensor.
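The pixels-on-target requirement can be illustrated with simple geometry. The sketch below is not NVTherm, which also models optics, atmosphere, and sensor noise; it is a small-angle approximation only. The 1.8-m target height and 15-μm pixel pitch are assumptions chosen for illustration, and the function names are hypothetical.

```python
MILE_M = 1609.344  # metres per statute mile

def pixels_on_target(target_m, range_m, pixel_pitch_m, focal_length_m):
    """Geometric pixel count across a target (small-angle approximation)."""
    ifov_rad = pixel_pitch_m / focal_length_m   # angle subtended by one pixel
    target_angle_rad = target_m / range_m       # angle subtended by the target
    return target_angle_rad / ifov_rad

def min_focal_length(target_m, range_m, pixel_pitch_m, pixels_required):
    """Shortest focal length that puts the required pixels across the target."""
    return pixels_required * pixel_pitch_m * range_m / target_m

# Assumed values, not taken from ISA: 1.8-m person, 15-um pixel pitch.
target_height_m = 1.8
pitch_m = 15e-6
range_m = 3 * MILE_M

for n in (2, 3):
    f = min_focal_length(target_height_m, range_m, pitch_m, n)
    print(f"{n} pixels at 3 miles needs a focal length of about {f * 1000:.0f} mm")
```

With these assumed numbers, the required focal length comes out on the order of 80 to 120 mm. A real design study would then check whether contrast and noise at that range leave the 2–3 pixels actually detectable, which is the part a performance model such as NVTherm addresses.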

A proprietary processing board inside the enclosure includes an inertial measurement unit (IMU) chip that monitors movement of the enclosure due to external forces such as wind. It also hosts temperature and pressure sensors that monitor the environment inside the enclosure to verify that the Marlow Industries thermoelectric cooler is working properly and that the positive-pressure nitrogen fill and hermetic seals are keeping contaminants out.

The board is controlled by a Xilinx FPGA that implements an on-chip microprocessor. The FPGA controls the compression of incoming images using a lossless, wavelet-based JPEG 2000 algorithm, and collects sensor data for bundling with image data to send over TCP/IP across the fiberoptic output to the downstream processing unit.

“The HD Imperx camera offers a standard Camera Link interface, and the manufacturer provided all the information we needed to integrate it into the WAVcam system,” says Tibbit. “It offers beautiful imagery, a full set of controls to command the camera and control integration time, as well as other camera functions.” Controlling the integration time is critical to ensuring each part of the panoramic image offers the proper contrast for accurate downstream processing.

The nighttime WAVcam sensor subsystem includes a Lumitron 640CZ MWIR camera with a 640 × 512-pixel InSb sensor. “The [pixel] pitch for the MWIR camera is about twice that of the 7.4-μm Imperx camera, so it offers about a quarter of the resolution… but that's still an 8.2-Mpixel panoramic image,” explains Tibbit. “The camera is cryo-cooled and robust, and is currently in active use by US Army programs.”

Automated assist for security

WAVanalytics software runs on the downstream PC. Images enter the PC through either a fiber or copper Ethernet connection; ISA used fiber connectivity for the Angel's Gate demonstration. The WAVanalytics software stabilizes the field of view from frame to frame using IMU information, stitches the individual frames together, and then enhances the panoramic image's contrast for optimal viewing.

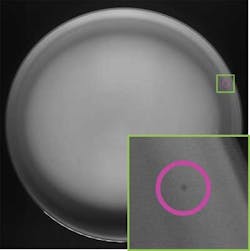

Because of the size of the image, WAVanalytics also applies “change detection,” using proprietary signal-processing algorithms to compare successive panoramic images and identify changes in the otherwise stationary scene. These changes represent moving objects, which are then color-highlighted in the panoramic image (see Fig. 3). At the same time, a sonar-like display shows the motion of the change-detection targets over time, generating easily recognized “target signatures.”
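ISA's change-detection algorithms are proprietary, so the following is only a minimal stand-in that uses plain frame differencing to show the general idea. Pixels that differ between successive images by more than a threshold are flagged, and each comparison is collapsed into one row of a time history, loosely analogous to the sonar-like display. The function names, the threshold, and the tiny synthetic frames are all assumptions made for illustration.

```python
def detect_changes(previous, current, threshold=25):
    """Return the set of (row, col) pixels whose brightness changed by more
    than `threshold` between two equally sized grayscale frames."""
    changed = set()
    for r, (prev_row, cur_row) in enumerate(zip(previous, current)):
        for c, (p, q) in enumerate(zip(prev_row, cur_row)):
            if abs(q - p) > threshold:
                changed.add((r, c))
    return changed

def waterfall_row(changed, width):
    """Collapse detections into one text row marking which columns changed."""
    cols = {c for _, c in changed}
    return "".join("#" if c in cols else "." for c in range(width))

def make_frame(obj_col, rows=3, cols=8, background=10, obj=200):
    """Synthetic frame: flat background with one bright pixel in the middle row."""
    frame = [[background] * cols for _ in range(rows)]
    frame[1][obj_col] = obj
    return frame

# A bright object moving one column to the right per frame.
frames = [make_frame(c) for c in (2, 3, 4)]
history = [waterfall_row(detect_changes(a, b), 8)
           for a, b in zip(frames, frames[1:])]
for line in history:
    print(line)  # one row per comparison; a moving object traces a diagonal
```

Stacking the rows over time is what makes a track readable at a glance: a steadily moving object draws a sloped line, and the slope reflects its speed across the field of view. A production system would also need to handle sensor noise, lighting changes, and water clutter, none of which this sketch attempts.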

All images are stored locally on the PC, which also acts as a video server for the WAVclient software. WAVclient can run on any number of terminals as long as the network bandwidth is not exceeded. WAVclient is written in JavaScript, so it can run on virtually any mobile or desktop computing platform. To perform the image processing, archive the images, and serve the data, ISA chose a high-end PC with four hex-core processors.

Because a video server delivers the video data to any number of WAVclients, each user can have full control of the video data, selecting regions of interest (ROIs) around identified targets or other suspicious objects, regardless of where the user is on the network. Clicking on an ROI switches WAVclient automatically to the full high-resolution image (see Fig. 3). Also, since the user controls for the video functions and archive functions are intuitively arranged together, any operator can step back in time through stored video by moving the slider at the bottom of the display or by entering a specific date/time to be reviewed.

“We didn't want to have a separate video recorder that would require the user to turn away from the live feed to access stored video,” explains Tibbit. “You drag the slider back in time and you see what happened; drag it forward to the present and you fast-forward right back to real time.”
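One way such a combined live/archive control could work is to index stored panoramas by capture time and map the slider position onto that index. The sketch below is an assumption about the general technique, not a description of WAVclient's internals; the class `PanoramaArchive` and its methods are hypothetical names.

```python
from bisect import bisect_right
from datetime import datetime, timedelta

class PanoramaArchive:
    """Time-indexed store of panoramas (hypothetical; not ISA's design)."""

    def __init__(self):
        self._times = []   # capture timestamps, kept in increasing order
        self._frames = []  # stored panoramas, parallel to _times

    def append(self, timestamp, frame):
        """Store a frame; assumes timestamps arrive in increasing order."""
        self._times.append(timestamp)
        self._frames.append(frame)

    def frame_at(self, requested):
        """Return the latest frame captured at or before `requested`, or None."""
        i = bisect_right(self._times, requested)
        return self._frames[i - 1] if i else None

    def frame_at_slider(self, fraction):
        """Map a slider position from 0.0 (oldest) to 1.0 (live) to a frame."""
        if not self._times:
            return None
        fraction = min(max(fraction, 0.0), 1.0)
        start, end = self._times[0], self._times[-1]
        return self.frame_at(start + (end - start) * fraction)

t0 = datetime(2012, 1, 1, 12, 0, 0)
archive = PanoramaArchive()
for i in range(3):
    archive.append(t0 + timedelta(seconds=1.5 * i), f"pan-{i}")

print(archive.frame_at_slider(1.0))                    # slider at "live"
print(archive.frame_at(t0 + timedelta(seconds=2)))     # specific date/time
```

Because the slider and the date/time entry both resolve to the same lookup, dragging the slider fully forward simply returns the newest stored frame, which matches the behavior Tibbit describes.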

The sonar-like histogram of change detection events simplifies change tracking and helps the operator determine whether a detected object is a bird or other uninteresting object, or a potentially threatening person, boat, or other vehicle. “Humans do pattern detection really well, and looking at the changes over time tells the user whether the object is moving too fast to be a boat—such as a nearby bird—or something worth closer investigation,” Tibbit says.

ISA's trial at Angel's Gate has been ongoing for several months, and DHS is expected to release a full report of the system's success in the coming months. In the meantime, WAVcam designers are anticipating new developments, including recent physical surveillance and protection standards. For example, the C2 Display Equipment Information Interchange standard (SEIWG ICD-0101A), coming out of the Department of Defense's Physical Security Equipment Action Group, will help security companies develop products that work with other sensor modalities in an open-source fashion.

Company Info

Cambridge Technology

Lexington, MA, USA

www.camtech.com

Imperx

Boca Raton, FL, USA

www.imperx.com

Innovative Signal Analysis

Richardson, TX, USA

www.signal-analysis.com

Lumitron

Louisville, KY, USA

www.lumitron-ir.com

Marlow Industries

Dallas, TX, USA

www.marlow.com

Xilinx

San Jose, CA, USA

www.xilinx.com
