System Spies Spectral Signatures

Hyperspectral-camera-based vision system reconstructs images in real time for intensive analysis applications

Andrew Wilson, Editor

In many vision applications, simply capturing and processing a grayscale image is sufficient. Given the position of each pixel in x,y space and its grayscale intensity value, functions such as edge detection and blob analysis can be used to measure features within the image.

However, in certain biomedical, industrial, military, and machine-vision applications, it is necessary to know the intensity of every pixel not just in x,y space but also as a function of wavelength. Applications such as biomedical image analysis, hydrocarbon gas analysis, and military imaging benefit from spectral image analysis; the data collected are used to identify fluorophores, quantify specific gases, or detect objects with known spectral signatures within an image.
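One common way to test a pixel against a known spectral signature is the spectral angle, which compares the shape of two spectra regardless of overall brightness. The sketch below is illustrative only: the article does not say which matching method any of the vendors use, and the function names, the 0.1-radian threshold, and the four-channel sample values are all assumptions.

```python
import math

def spectral_angle(spectrum, reference):
    """Angle in radians between two spectra treated as vectors."""
    dot = sum(s * r for s, r in zip(spectrum, reference))
    norm_s = math.sqrt(sum(s * s for s in spectrum))
    norm_r = math.sqrt(sum(r * r for r in reference))
    if norm_s == 0 or norm_r == 0:
        return math.pi / 2  # treat an all-zero spectrum as "no match"
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, dot / (norm_s * norm_r)))
    return math.acos(cos_angle)

def matches_signature(spectrum, reference, max_angle=0.1):
    """True if the pixel spectrum lies within max_angle of the reference."""
    return spectral_angle(spectrum, reference) <= max_angle

# Hypothetical 4-channel values, for illustration only.
reference = [0.10, 0.40, 0.80, 0.30]
bright_pixel = [0.20, 0.80, 1.60, 0.60]  # same shape, twice as bright
other_pixel = [0.80, 0.40, 0.10, 0.30]   # different spectral shape

print(matches_signature(bright_pixel, reference))
print(matches_signature(other_pixel, reference))
```

Because the angle ignores scale, a brighter or dimmer view of the same material still matches, which is why a grayscale intensity alone cannot make this distinction.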

Working toward a better system

A number of different methods have been employed to build hyperspectral imaging systems. Perhaps the simplest of these uses a color filter wheel in conjunction with a monochrome camera to capture multiple images of the same scene at different wavelengths. In the design of its VeriColor Spectro spectrophotometer, X-Rite uses a wheel with 31 specified interference filters that are rotated between the viewing optics and a calibrated optical sensor to capture multispectral images.

Resonon takes a different approach, placing a diffraction grating behind a narrow linear slit in the body of its Pika II digital FireWire imaging spectrometer so that a 1-D line along the object is imaged during each readout frame. Because the Pika II is operated in a push-broom fashion, objects moving along a conveyor belt can be imaged and digitized in real time. Images from both systems can then be viewed as a function of wavelength at any pixel (see "Imaging systems tackle color measurement," Vision Systems Design, August 2007).
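The push-broom idea can be sketched in a few lines: each readout frame holds one line of the object, spread by wavelength, and successive frames are stacked along the scan direction. This is a minimal illustration using nested lists and toy data; the function names and the `frame[x][band]` layout are assumptions, not Resonon's software interface, and a production pipeline would likely use an array library instead.

```python
def assemble_pushbroom_cube(line_frames):
    """Stack line frames into cube[y][x][band].

    Each frame is one slit readout, frame[x][band]: position along the
    slit by spectral band. Successive frames correspond to successive
    positions of the object along the scan (y) direction.
    """
    if not line_frames:
        return []
    width = len(line_frames[0])
    bands = len(line_frames[0][0])
    for frame in line_frames:
        if len(frame) != width or any(len(px) != bands for px in frame):
            raise ValueError("all line frames must share the same shape")
    # Copy so that later edits to the cube do not alter the input frames.
    return [[list(pixel) for pixel in frame] for frame in line_frames]

def spectrum_at(cube, x, y):
    """Intensity as a function of band at one spatial pixel."""
    return cube[y][x]

def band_image(cube, band):
    """2-D image of the scene at a single spectral band."""
    return [[pixel[band] for pixel in row] for row in cube]

# Toy data: 3 scan lines, 2 pixels along the slit, 4 bands.
frames = [[[y * 100 + x * 10 + b for b in range(4)]
           for x in range(2)] for y in range(3)]
cube = assemble_pushbroom_cube(frames)
print(spectrum_at(cube, 1, 2))
print(band_image(cube, 3))
```

A filter-wheel system fills the same cube in the other order, one full band image per filter position, so `band_image` and `spectrum_at` apply equally to both.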

Other approaches have been proposed to lower the cost of the components used in these systems while allowing hyperspectral images to be captured at faster rates. Recently, IMEC showed a way of producing hyperspectral imagers by replacing the dispersive elements and focusing lens with a fixed optical wedge structure that is post-processed onto the imager (see "Novel technology lowers the cost of hyperspectral imaging," Vision Systems Design, January 2011).

Cubist approach

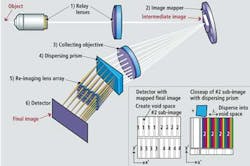

In a more recent development, Rebellion Photonics has designed a hyperspectral camera that uses multiaperture optical techniques (see Fig. 1) to greatly increase the light efficiency of spectral imaging devices and to allow large-format spectral images to be acquired in snapshot mode. The company's first product, the Arrow, is a complete $125,000 system that captures 500 × 500 × 40-pixel images at rates as fast as 7 frames/s.

Each of the 500 × 500 pixels in the datacube image consists of 40 different spectral channels that digitize the 400–800-nm visible spectrum at spectral bandwidths between 1 and 10 nm. Due to the nonlinear dispersion of the prism, the spectral spread at the blue end of the spectrum is much larger than at the red end. Sampling the spectrum at these 40 different channels results in a datacube of 500 (x) × 500 (y) × 40 (z), with the depth, or z coordinate, in the image representing the wavelength of the corresponding pixel.
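To make the x, y, z addressing concrete, the sketch below stores a datacube in a single flat buffer and pulls out the spectrum at one pixel. The memory layout (channel varying fastest) and the function names are assumptions chosen for illustration; the article does not describe how the Arrow stores its data.

```python
WIDTH, HEIGHT, CHANNELS = 500, 500, 40  # datacube size given in the article

def voxel_index(x, y, z, width=WIDTH, channels=CHANNELS):
    """Flat index of voxel (x, y, z), with the channel varying fastest."""
    return (y * width + x) * channels + z

def pixel_spectrum(buffer, x, y, width=WIDTH, channels=CHANNELS):
    """All channel values for the pixel at (x, y), in channel order."""
    start = voxel_index(x, y, 0, width, channels)
    return buffer[start:start + channels]

# Small 2 x 2 x 3 buffer so the result is easy to check by hand.
small = list(range(2 * 2 * 3))
print(pixel_spectrum(small, 1, 1, width=2, channels=3))

# Index of the last voxel in a full-size cube.
print(voxel_index(WIDTH - 1, HEIGHT - 1, CHANNELS - 1))
```

With this layout, the 40 values for any pixel sit next to each other in memory, which suits per-pixel spectral analysis; a band-major layout would favor viewing one wavelength at a time.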

In the design of the system, the image is first relayed onto an image mapper. This image mapper consists of hundreds of micromirror facets that redirect the light from each x,y position in the original image into an array of 40 sub-imagers (see Fig. 2). Splitting the image into narrow lines and splaying these out essentially cuts each sub-image field into strips such that blank regions exist between each of the lines in the sub-images. By re-imaging the object, which now consists of a set of narrow, separated lines, through a dispersing prism, the spectral information of each line within the object can be captured on a 2-D detector.
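Since every detector pixel then corresponds to one known (x, y, wavelength) sample, reconstruction can be thought of as a table lookup. The sketch below assumes a precomputed calibration table; the table format, the function name, and the toy geometry are hypothetical, and Rebellion Photonics' actual reconstruction software is proprietary and not described here.

```python
def reconstruct_cube(raw, lookup, width, height, channels):
    """Scatter detector pixels into cube[y][x][z] using a calibration table.

    raw    -- 2-D list of detector readings, raw[row][col]
    lookup -- dict mapping a detector position (row, col) to a voxel
              (x, y, z); detector pixels absent from the table, such as
              unused gaps, are ignored
    """
    cube = [[[0] * channels for _ in range(width)] for _ in range(height)]
    for (row, col), (x, y, z) in lookup.items():
        cube[y][x][z] = raw[row][col]
    return cube

# Toy example: a 2 x 1 scene with 3 channels on a 2 x 4 detector.
# The spectrum of each scene pixel is spread along one detector row.
raw = [[11, 12, 13, 0],
       [0, 21, 22, 23]]
lookup = {
    (0, 0): (0, 0, 0), (0, 1): (0, 0, 1), (0, 2): (0, 0, 2),
    (1, 1): (1, 0, 0), (1, 2): (1, 0, 1), (1, 3): (1, 0, 2),
}
cube = reconstruct_cube(raw, lookup, width=2, height=1, channels=3)
print(cube)
```

Because each output voxel depends on exactly one input pixel, a remapping of this kind parallelizes naturally.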

These data are then captured using a Bobcat 16-Mpixel Camera Link camera from Imperx that was supplied to Rebellion Photonics by Saber1 Technologies. By over-clocking the Kodak KAI-16000 interline transfer CCD image sensor used in the camera, 500 × 500 × 40-pixel datacube images can be recorded at rates up to 7 frames/s.
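A quick calculation shows why a 16-Mpixel sensor is a sensible match for this datacube. The sketch assumes one detector pixel per datacube voxel and uses the nominal 16 million pixel count rather than the sensor's exact resolution; both are simplifying assumptions, and the function name is illustrative.

```python
def datacube_budget(width, height, channels, frames_per_s, sensor_pixels):
    """Voxel count per cube, fraction of the sensor used, and throughput."""
    voxels = width * height * channels
    return {
        "voxels_per_cube": voxels,
        "sensor_fill": voxels / sensor_pixels,
        "voxels_per_s": voxels * frames_per_s,
    }

# 500 x 500 x 40 cube at 7 frames/s on a nominal 16-Mpixel sensor.
budget = datacube_budget(500, 500, 40, 7, 16_000_000)
for name, value in budget.items():
    print(name, value)
```

Under these assumptions, each cube needs 10 million samples, or about 62.5% of the nominal pixel count, and the system must move 70 million samples every second at the maximum frame rate.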

After images are captured, they are transferred over the Camera Link interface. To reconstruct hyperspectral datacube images in real time, the captured image data are processed and rendered using an Nvidia graphics processor and proprietary software developed by Rebellion Photonics (see Fig. 3).

To image different parts of the electromagnetic spectrum at different spectral bandwidths, different prism arrays can be inserted into the hyperspectral camera. For visible imaging applications, for example, Rebellion Photonics' Arrow allows 40 spectral channels to be sampled across the 400–800-nm spectrum, for a mean bandwidth of 10 nm per channel. An alternative prism array allows the same instrument to be used with a mean bandwidth of 5 nm across the 450–650-nm spectrum.
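The mean bandwidth figures follow directly from dividing the spectral range by the number of channels. The short sketch below reproduces them; it assumes the alternative prism array also yields 40 channels, which the quoted 5-nm figure implies but the article does not state outright. The function name is illustrative.

```python
def mean_bandwidth_nm(start_nm, stop_nm, channels):
    """Average spectral width per channel over a wavelength range."""
    if channels <= 0:
        raise ValueError("channels must be positive")
    return (stop_nm - start_nm) / channels

visible = mean_bandwidth_nm(400, 800, 40)  # standard visible prism array
narrow = mean_bandwidth_nm(450, 650, 40)   # alternative array, 40 channels assumed
print(visible, narrow)
```

These are averages only; with a dispersing prism, the width of individual channels varies across the range.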

Because of its snapshot mode of operation, the Arrow has demonstrated unprecedented levels of light collection efficiency; while this is an important advantage in biomedical imaging, it can be used to even greater effect in remote sensing applications. Thus, Rebellion has also begun developing snapshot spectral-imager designs for use in the infrared spectrum, intended for hydrocarbon gas cloud monitoring, surveillance, and military imaging applications.

According to Rebellion's Dr. Robert Kester, the company has already found interest for the camera system from operators of oil and gas rigs. "Explosive hydrocarbon leaks are a constant, immediate threat at refineries and oil rigs. Leaks, when not detected early, accumulate into dangerous clouds that can ignite when they reach a certain concentration," he says.

"Current leak detection methods focus on installing point detectors at various locations that measure pressure or particular chemical concentrations. These traditional point detectors can be ineffectual because they do not give a full and accurate picture of the chemical environment within the plant or rig," says Kester. "With the Arrow system, one camera can cover large areas of the facility and is equivalent to thousands of line detectors working in unison. Since area coverage is inherently more reliable than any single-point detection or sensor network, false positive rates are reduced."

Company Info

IMEC

Leuven, Belgium

www.imec.be

Imperx

Boca Raton, FL, USA

www.imperx.com

Nvidia

Santa Clara, CA, USA

www.nvidia.com

Rebellion Photonics

Houston, TX, USA

http://rebellionphotonics.com

Resonon

Bozeman, MT, USA

www.resonon.com

Saber1 Technologies

North Chelmsford, MA, USA

www.saber1.com

X-Rite

Grand Rapids, MI, USA

www.xrite.com