Frame grabber helps record air-simulation scenarios

Andrew Wilson, Editor

The primary activity at the Air Combat Simulation Division of Lockheed Martin (Marietta, GA; www.lmaeronautics.com) is F-22 weapons-system-effectiveness validation. The company provides the US Air Force Operational Test and Evaluation Center (AFOTEC; Kirtland AFB, NM; www.afotec.af.mil) with a simulation system to verify that the F-22 aircraft meets its performance objectives.

AFOTEC and USAF Air Combat Command pilots fly F-22 simulators against various air-to-air and surface-to-air threats across a range of combat scenarios. After completing a mission, pilots review their performance using an operational debrief system (ODS). The ODS computer synchronizes the playback of video and audio files recorded from the simulated F-22 cockpit during a mission. The cockpit has six computer displays. The left, right, primary, and center multifunction displays, which contain most of the critical data, are recorded continuously in real time. Audio from the pilot's communications panel and any aural cues generated by the mission computer are also recorded. In addition, a single video track of the combined head-up display (HUD)/gun-camera display is digitized. In total, one audio and five video tracks are recorded for each cockpit, with up to four F-22s participating simultaneously in any given simulation exercise.

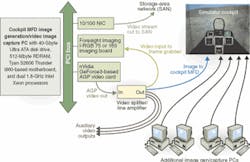

In the simulator, the F-22 display software runs on Microsoft Windows 2000-based PCs. For image display, a GeForce3-based accelerated-graphics-port video card from nVidia (Santa Clara, CA; www.nvidia.com) produces a resolution of 1024 × 768 pixels with 32-bit color at 60 Hz. After the graphics images are generated, RGB signals from the board are split into five RGB channels by an IN3264 VGA distribution amplifier from Inline (Orange, CA; www.inlineinc.com). One line from the splitter returns to the computer and re-enters through an I-RGB 75 image-digitizer board from Foresight Imaging (Lowell, MA; www.foresightimaging.com). The remaining RGB signals drive the cockpit display and the monitors in the simulation control room.

The I-RGB 75 frame-grabber card records the color cockpit displays at a resolution of 1024 × 768 pixels at 60 Hz. A similar PC-based system uses Foresight's I-RGB 165 board to record the combined head-up/gun-camera display at 1280 × 1024 pixels and 60 Hz.

Typical exercises last approximately 30 minutes. Videos are recorded at 10 frames/s with an average data rate of 300 to 400 kbits/s per track. For video compression, Motion JPEG (MJPEG) software running on a host S2603 motherboard from Tyan (Fremont, CA; www.tyan.de) compresses data for the HUD/gun-camera view. For the cockpit displays, which mostly contain colored symbology on a black background, Microsoft's Run Length Encoding (RLE) algorithm is used. This algorithm provides a lossless reproduction of the display screens that contain only primary and secondary colors.
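To illustrate why run-length encoding suits symbology on a black background, here is a minimal sketch in Python. It uses a simplified (count, value) byte-pair scheme, which is an assumption made for illustration; it is not Microsoft's actual RLE format, and the function names and the sample row are hypothetical.

```python
def rle_encode(row: bytes) -> bytes:
    """Encode a row of 8-bit pixels as (run length, value) byte pairs.

    Simplified illustrative scheme, not Microsoft's RLE format.
    Runs are capped at 255 so the count fits in one byte.
    """
    out = bytearray()
    i = 0
    while i < len(row):
        value = row[i]
        run = 1
        while i + run < len(row) and row[i + run] == value and run < 255:
            run += 1
        out += bytes((run, value))
        i += run
    return bytes(out)


def rle_decode(data: bytes) -> bytes:
    """Expand (run length, value) byte pairs back into pixels."""
    out = bytearray()
    for count, value in zip(data[0::2], data[1::2]):
        out += bytes([value]) * count
    return bytes(out)


# Hypothetical 1024-pixel display row: black (index 0) with one
# 40-pixel-wide symbol drawn in palette index 2.
row = bytes(500) + bytes([2]) * 40 + bytes(484)
encoded = rle_encode(row)

assert rle_decode(encoded) == row   # lossless round trip
print(len(row), len(encoded))       # prints "1024 10"
```

Long runs of a single color collapse to a few bytes, and decoding restores every pixel exactly, which is the property the lossless cockpit-display recording relies on.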

Software for capturing the video is written using the Foresight Imaging Idea v1.71 software-development kit (SDK). This SDK allows the use of six different pixel-format types. Whereas 16-bit RGB (5-5-5) pixel data constitute the MJPEG-encoded stream, an 8-bit pixel type is used for RLE.
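As a rough illustration of what a 16-bit RGB (5-5-5) pixel looks like, the Python sketch below packs three 8-bit channels into 15 bits. The bit order shown (red in the high bits, top bit unused) is the common layout and is an assumption here; the article does not describe how the Idea SDK arranges the bits, and the function names are hypothetical.

```python
def pack_rgb555(r: int, g: int, b: int) -> int:
    """Pack 8-bit R, G, B into one 16-bit word, 5 bits per channel.

    Assumed layout: bit 15 unused, bits 14-10 red, 9-5 green, 4-0 blue.
    The three low-order bits of each channel are discarded.
    """
    return ((r >> 3) << 10) | ((g >> 3) << 5) | (b >> 3)


def unpack_rgb555(pixel: int) -> tuple[int, int, int]:
    """Expand a 5-5-5 pixel back to approximate 8-bit channels."""
    def expand(c5: int) -> int:
        # Replicate the top bits so 31 maps to 255 and 0 maps to 0.
        return (c5 << 3) | (c5 >> 2)

    r5 = (pixel >> 10) & 0x1F
    g5 = (pixel >> 5) & 0x1F
    b5 = pixel & 0x1F
    return expand(r5), expand(g5), expand(b5)


print(hex(pack_rgb555(255, 0, 0)))   # prints "0x7c00"
print(unpack_rgb555(0x7C00))         # prints "(255, 0, 0)"
```

Fully saturated primary and secondary colors survive this round trip unchanged, while intermediate shades lose their three low-order bits per channel.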

Because synchronization is critical, starting or pausing the frame-grabber-based recorder must occur with minimal latency. Over a 30-minute recording period, with several commands given to start and stop the recorders intermittently, the total frame count varies by no more than two or three frames across the five tracks recorded from one cockpit.
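To put these figures in perspective, the short Python calculation below works out the nominal frame count, the worst-case timing spread, and the approximate size of one track. It assumes a full 30 minutes of continuous recording at 10 frames/s and takes 1 kbit as 1000 bits; the variable and function names are hypothetical.

```python
FRAME_RATE_FPS = 10        # recording rate stated in the article
DURATION_S = 30 * 60       # typical exercise length, in seconds
MAX_SPREAD_FRAMES = 3      # worst-case frame-count difference between tracks

# Nominal frames per track, assuming uninterrupted recording.
total_frames = FRAME_RATE_FPS * DURATION_S

# Worst-case spread expressed as time and as a share of the recording.
spread_seconds = MAX_SPREAD_FRAMES / FRAME_RATE_FPS
spread_percent = 100 * MAX_SPREAD_FRAMES / total_frames


def track_size_mbytes(rate_kbits_per_s: float, duration_s: int = DURATION_S) -> float:
    """Approximate track size in megabytes (1 kbit = 1000 bits, 1 MB = 10**6 bytes)."""
    return rate_kbits_per_s * 1000 * duration_s / 8 / 1e6


print(total_frames)                                     # prints "18000"
print(spread_seconds)                                   # prints "0.3"
print(round(spread_percent, 3))                         # prints "0.017"
print(track_size_mbytes(300), track_size_mbytes(400))   # prints "67.5 90.0"
```

Under these assumptions, a three-frame spread corresponds to about 0.3 s across roughly 18,000 frames, and each video track occupies on the order of 70 to 90 Mbytes.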