Smart Systems and Software

Software support remains key for successful smart camera deployment in machine-vision applications

Andrew Wilson, Editor

By integrating CCD or CMOS sensors with field-programmable gate arrays (FPGAs), digital signal processors (DSPs), CPUs, I/Os, and networking interfaces, smart camera vendors now offer system developers a way to deploy machine-vision systems at low cost. However, not all of these products are alike. While some target the low-end sensor replacement market, more sophisticated smart cameras integrate the functionality of a complete machine-vision system in a single package.

But what exactly constitutes a smart camera? Some may argue that every camera can be defined as smart since the processes of capturing images and transferring them to a host computer involve rather sophisticated electronics.

More generally, the accepted definition is a camera that can autonomously perform an operation on an image and produce a result, such as whether a part is good or bad. Performing this type of function, of course, demands more than capturing an image and transferring it to a host computer. It requires performing different types of imaging operations on the captured image data and extracting information from the results. However, it is how the image data are processed and the information extracted that defines the categories of smart cameras currently available and, at the same time, makes the definition of a smart camera so intangible.

To perform image-processing functions within smart cameras, vendors often partition these tasks over a number of different processors that may include processing elements embedded on the image sensor itself. AnaFocus, for example, takes this approach in its EYE-RIS 1.3 GigE camera, which is built around a 176 × 144-pixel monochrome sensor-processor that combines sensor-level image preprocessing with digital post-processing running on an FPGA. Programmed in C, the camera is offered with an application development kit that includes a set of image-processing libraries and can run at speeds up to 8000 frames/s.

Rather than use sensor-processor approaches coupled with FPGAs, most smart camera vendors perform point processing and neighborhood operations such as image thresholding and flat-field correction only within the camera's embedded FPGA. These operations are used to preprocess images for later interpretation; relatively few vendors allow system developers to program these devices because they often do not wish to provide the VHDL support required.
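The two preprocessing operations named above can be illustrated in software. What follows is only a minimal sketch in plain Python, not any vendor's FPGA implementation: the function names, the tiny 2 × 2 test images, and the choice to rescale by the mean gain are all assumptions made for illustration. Flat-field correction divides out per-pixel gain differences using a dark frame and a uniformly lit reference frame; thresholding then reduces each pixel to 0 or 1.

```python
def flat_field_correct(raw, dark, flat):
    """Correct each pixel as (raw - dark) / (flat - dark), rescaled by the mean gain.

    raw, dark, and flat are equally sized lists of rows of 8-bit pixel values.
    (Hypothetical helper; real cameras do this per pixel in hardware.)
    """
    rows, cols = len(raw), len(raw[0])
    gain = [[flat[r][c] - dark[r][c] for c in range(cols)] for r in range(rows)]
    mean_gain = sum(sum(row) for row in gain) / (rows * cols)
    corrected = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            g = gain[r][c]
            # Pixels with no usable gain are set to 0 rather than divided by zero.
            value = (raw[r][c] - dark[r][c]) * mean_gain / g if g > 0 else 0.0
            out_row.append(min(255, max(0, round(value))))
        corrected.append(out_row)
    return corrected


def threshold(image, level):
    """Point operation: 1 where the pixel is at or above level, else 0."""
    return [[1 if pixel >= level else 0 for pixel in row] for row in image]


# A uniform gray scene seen through optics whose right column is twice as bright.
dark = [[10, 10], [10, 10]]
flat = [[110, 210], [110, 210]]
raw = [[60, 110], [60, 110]]

corrected = flat_field_correct(raw, dark, flat)
print(threshold(raw, 100))       # [[0, 1], [0, 1]]: shading splits a uniform scene
print(threshold(corrected, 70))  # [[1, 1], [1, 1]]: uniform after correction
```

Both functions touch each pixel independently of its neighbors, which is why operations of this kind map naturally onto an FPGA placed directly behind the sensor.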

More often, these types of functions are supported by toolkits that allow the developer to configure the camera for the tasks. However, these approaches are more common in cameras that are not marketed as “smart” because the resulting image data has simply been preprocessed; no decision regarding the content of image data has been computed.

Image analysis

Performing more sophisticated image-analysis functions such as image segmentation, feature extraction, or interpretation requires global image-processing functions. To accomplish this, smart camera vendors are leveraging the power of DSPs and CPUs (often both) in conjunction with point and neighborhood operators partitioned onto FPGAs. The choice of which DSP, CPU, and FPGA to use in these cameras varies as much as the varieties of the smart cameras themselves (see “Embedded Intelligence,” Vision Systems Design, August 2009).
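To make the distinction concrete, the sketch below shows one simple form of segmentation and feature extraction followed by a good/bad decision: connected-component labeling of a binary image, with area and bounding box extracted per blob. It is a hedged illustration in plain Python, not the method of any camera named in this article; the function names, the 4-connectivity rule, and the pass criteria (`expected_count`, `min_area`) are assumptions.

```python
from collections import deque


def label_blobs(binary):
    """Find 4-connected regions of 1-pixels; return area and bounding box for each."""
    rows, cols = len(binary), len(binary[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs = []
    for r in range(rows):
        for c in range(cols):
            if binary[r][c] != 1 or seen[r][c]:
                continue
            # Breadth-first flood fill starting from an unvisited foreground pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            area = 0
            top, left, bottom, right = r, c, r, c
            while queue:
                y, x = queue.popleft()
                area += 1
                top, bottom = min(top, y), max(bottom, y)
                left, right = min(left, x), max(right, x)
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and binary[ny][nx] == 1 and not seen[ny][nx]):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            blobs.append({"area": area, "bbox": (top, left, bottom, right)})
    return blobs


def inspect(binary, expected_count, min_area):
    """Hypothetical decision rule: PASS if the number of large-enough blobs matches."""
    large = [b for b in label_blobs(binary) if b["area"] >= min_area]
    return "PASS" if len(large) == expected_count else "FAIL"


part = [
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 0, 1, 0],
    [0, 0, 0, 0, 1, 0],
    [0, 0, 0, 0, 0, 0],
]
print(label_blobs(part))
print(inspect(part, expected_count=2, min_area=2))  # PASS
print(inspect(part, expected_count=2, min_area=3))  # FAIL
```

Unlike a point or neighborhood operator, the flood fill may visit pixels anywhere in the frame before a single blob is complete, which suggests why this class of work tends to be assigned to a DSP or CPU rather than to the FPGA.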

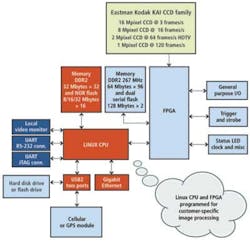

For its part, FastVision combines both a Xilinx Spartan FPGA and a Philips Nexperia 1502/1702 media processor in its 640 × 480-pixel FastCamera 34 smart camera; Imaging Solutions Group allows its Custom CCD Camera Platform to be configured with a variety of CCDs, FPGAs, and Linux-based CPUs (see Fig. 1).

Just as only a handful of vendors allow system developers to program the FPGAs within their cameras, only a few offer development toolkits with which to program embedded CPUs, DSPs, or FPGAs within smart cameras. Those that do, though, offer system integrators the advantage of customizing these smart cameras with proprietary in-house software, allowing the development of products targeted for niche machine-vision applications.

At VISION 2009, held in November in Stuttgart, Germany, FiberVision showed a 5-Mpixel smart camera based on a VCSBC4012 nano single-board camera from Vision Components specifically for detecting laser marking on the side of solar wafers (see “Smart camera inspects solar wafer codes,” Vision Systems Design, January 2010). Now, Vision Components itself has developed a near-infrared (NIR) smart camera that is sensitive to wavelengths up to 1100 nm to detect and identify defects such as micro cracks, shunts, and disconnected fingers in solar wafers. Featuring a 400-MHz DSP from Texas Instruments, the VC4067/NIR camera integrates a 2/3-in., 1280 × 960-pixel CCD sensor and runs at a maximum rate of 14 frames/s (see Fig. 2).

No programming required

Many system integrators do not have the expertise or desire to use such low-level development toolkits to program smart cameras to perform specific image-processing tasks. Rather, they wish to deploy these products in an expeditious fashion to perform a particular machine-vision task.

To address this larger market, most smart camera vendors offer their own machine-vision libraries in conjunction with camera offerings. Like smart cameras themselves, these libraries are offered in a number of forms, ranging from C-callable image-processing routines to graphical programming interfaces and point-and-click development environments.

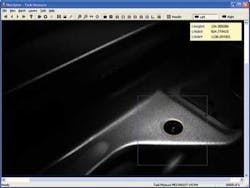

Interestingly, companies such as Matrox Imaging, National Instruments, Cognex, Microscan, and PPT Vision provide their system integrators with a number of different options to program these cameras. For its Intel Atom-powered Iris GT series of smart cameras, Matrox Imaging offers a range of cameras with sensors from Kodak and Sony with CCDs ranging from 640 × 480 to 1600 × 1200 pixels. To program the camera, the company offers an integrated development environment (IDE) known as Matrox Design Assistant that allows applications to be created by constructing a flowchart of video capture, analysis, location, measurement, reading, verification, communication, and I/O operations. For more sophisticated programmers, application development can be accomplished using Windows development tools in conjunction with the Matrox Imaging Library (MIL).

Deciding whether a specific smart camera could be useful for a particular dimensional gauging, OCR, pattern recognition, color analysis, or feature extraction task requires an understanding of the nature of the application and of the limitations of the hardware and software offered by each manufacturer. Although many software packages offered with smart cameras feature similar functionality, it is the hardware characteristics of the devices themselves that currently limit their applications.

While many smart cameras offer on-board image capture, processing, and industry-standard I/O, networking, and triggering capabilities, for example, most use sensors with pixel formats that range from 640 × 480 to 1600 × 1200, running at a maximum frame rate of approximately 60 frames/s. Therefore, for very high-speed, high-resolution applications such as web inspection or large-format document imaging, the use of these cameras may be limited.
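The scale of this limitation can be estimated with simple arithmetic. The sketch below, in plain Python, multiplies the pixel formats and frame rates quoted in this article to obtain pixel throughput, and compares them with a web-inspection requirement. The web figures (a 2-m-wide web moving at 5 m/s, inspected at 0.1-mm resolution) are purely hypothetical values chosen for illustration, as are the function names.

```python
def pixel_rate(width, height, frames_per_second):
    """Pixels per second produced by an area sensor."""
    return width * height * frames_per_second


def web_pixel_rate(web_width_um, web_speed_um_per_s, resolution_um):
    """Pixels per second needed to image a moving web at a given resolution.

    All lengths are in micrometers so the arithmetic stays in integers.
    """
    pixels_across = web_width_um // resolution_um
    lines_per_second = web_speed_um_per_s // resolution_um
    return pixels_across * lines_per_second


# Formats and frame rates quoted in the article.
vga_rate = pixel_rate(640, 480, 60)              # 18,432,000 pixels/s
uxga_rate = pixel_rate(1600, 1200, 60)           # 115,200,000 pixels/s
sensor_processor_rate = pixel_rate(176, 144, 8000)  # 202,752,000 pixels/s

# Hypothetical web: 2 m wide, 5 m/s, 0.1 mm (100 um) per pixel.
web_rate = web_pixel_rate(2_000_000, 5_000_000, 100)  # 1,000,000,000 pixels/s

for name, rate in [("640 x 480 @ 60", vga_rate),
                   ("1600 x 1200 @ 60", uxga_rate),
                   ("176 x 144 @ 8000", sensor_processor_rate),
                   ("hypothetical web", web_rate)]:
    print(f"{name:>18}: {rate / 1e6:8.1f} Mpixels/s")
```

Under these assumed web parameters, the required throughput is more than eight times that of a 1600 × 1200 sensor running at 60 frames/s, before any processing is taken into account.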

Since the requirement to lower cost and increase functionality results in these cameras being offered as standalone products, most smart cameras are limited to a range of inspection tasks, most notably those found in the industrial automation market. These factors have led smart camera vendors to offer ranges of products that target different applications within these markets.

At the low-cost end of the smart camera spectrum, so-called smart sensors can now perform functions such as color analysis, parts presence, and traditional barcode scanning tasks. Companies such as Banner Engineering, Balluff, and Cognex have taken advantage of this by introducing products with increased functionality. To allow these smart sensors to be rapidly deployed, these companies offer easily configurable tools that require no programming to locate, count, find, and measure objects within the sensor's field of view.

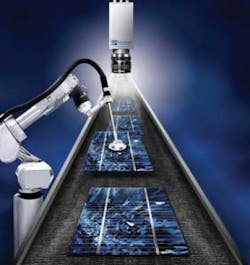

To broaden the reach of machine-vision applications, these companies are adding more sophisticated tools to their libraries. Recently, Cognex added 3D-Locate software to its software library; it uses multiple sets of 2-D features found by the company's PatMax geometric pattern-matching tool to determine an object's 3-D orientation (see Fig. 3).

FIGURE 3. To broaden the reach of machine-vision applications, companies such as Cognex are adding more sophisticated tools to their libraries. The company's 3D-Locate software uses multiple sets of 2-D features found by the company's PatMax geometric pattern-matching tool to determine an object's 3-D orientation.

Hardware and software

Today, the largest sector of the smart camera market is dominated by companies that offer their own hardware and software. By deploying these camera systems, developers are limited to the functionality offered by the hardware and software of each individual product.

Still, once committed to a particular vendor, system integrators are often reluctant to choose an alternative vendor because of the learning curve associated with configuring multiple software packages. For this reason, most system houses develop machine-vision systems using only a few different smart cameras.

This situation may change in the future with the introduction of lower-cost smart cameras that resemble PC-based imaging systems. Although at present slightly more expensive than embedded smart camera systems, systems from companies such as Sony, Leutron Vision, and FiberVision are already leveraging software products from third parties such as NI, MVTec Software, and Neurocheck to offer developers a wider choice of programming tools.

As one of the first to recognize this trend, Cognex has formed an Acquisition Alliance designed to establish cooperative sales and marketing efforts that leverage the company's VisionPro software into other vendors' smart camera products. Should this trend continue, the current conundrum regarding which smart camera is specialized for a particular machine-vision application will not need to be addressed. Although this will result in more choices for the system integrator, differentiation of smart cameras from various vendors will become more difficult and result in further price reductions as vendors compete.

Company Info

AnaFocus

Seville, Spain

www.anafocus.com

Balluff

Florence, KY, USA

www.balluff.com

Banner Engineering

Minneapolis, MN, USA

www.bannerengineering.com

Basler

Ahrensburg, Germany

www.baslerweb.com

Cognex

Natick, MA, USA

www.cognex.com

DALSA

Waterloo, ON, Canada

www.dalsa.com

FastVision

Nashua, NH, USA

www.fast-vision.com

FiberVision

Würselen, Germany

www.caminax.com

Imaging Solutions Group

Fairport, NY, USA

www.isgchips.com

JAI

Copenhagen, Denmark

www.jai.com

Leutron Vision

Glattbrugg, Switzerland

www.leutron.com

Matrox Imaging

Dorval, QC, Canada

www.matrox.com/imaging

Microscan Systems

Renton, WA, USA

www.microscan.com

MVTec Software

Munich, Germany

www.mvtec.com

National Instruments

Austin, TX, USA

www.ni.com

Neurocheck

Remseck, Germany

www.neurocheck.com

PPT Vision

Bloomington, MN, USA

www.pptvision.com

Sony

Park Ridge, NJ, USA

www.sony.com/smart

Vision Components

Ettlingen, Germany

www.vision-components.com