NVIDIA unveils details on mobile processor designed for autonomous vehicles

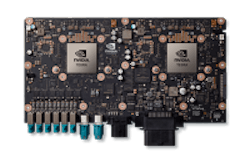

Earlier this year, NVIDIA introduced its NVIDIA DRIVE PX 2 platform (pictured), which combines deep learning, sensor fusion, and surround vision for autonomous vehicles. Now, NVIDIA has provided more details on Parker, the system on a chip (SoC) that powers it.

Parker is built around a 256-core GPU based on NVIDIA’s Pascal architecture, paired with two Denver 2.0 64-bit CPU cores and four 64-bit ARM Cortex-A57 cores. The SoC delivers up to 1.5 teraflops of performance for deep learning-based self-driving AI cockpit systems. According to NVIDIA, the Denver 2.0 CPU is a seven-way superscalar processor that supports the ARMv8 instruction set, implements an improved dynamic code optimization algorithm, and adds low-power retention states for better energy efficiency.

The device’s new 256-core Pascal GPU runs deep learning inference algorithms for self-driving capabilities and offers the raw graphics performance and features to power multiple high-resolution displays, such as cockpit instrument displays and in-vehicle infotainment panels. Parker-based autonomous cars can be continually updated with newer algorithms and information by working in concert with Pascal-based supercomputers in the cloud.

Parker can be used as a single unit or integrated into more complex designs, such as NVIDIA DRIVE PX 2, which employs two Parker chips along with two discrete Pascal GPUs. DRIVE PX 2 can perform 24 trillion deep learning operations per second, enough to run the most complex deep learning-based inference algorithms.

Additionally, Parker is designed to support both decode and encode of video streams up to 4K resolution at 60 fps, which will enable automakers to use higher resolution in-vehicle cameras for accurate object detection, and 4K display panels to enhance in-vehicle entertainment experiences.
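To give a sense of scale for the 4K at 60 fps figure, the following is a rough back-of-the-envelope sketch of the uncompressed pixel rate of one such stream. It assumes "4K" means UHD (3840 x 2160) and uses 12 bits per pixel (8-bit YUV 4:2:0) as an illustrative format; neither value is stated in NVIDIA's announcement, and the function names are hypothetical.

```python
# Back-of-the-envelope estimate of raw (uncompressed) video throughput.
# Assumptions, not from the article: "4K" = UHD 3840x2160, and
# 12 bits per pixel (8-bit YUV 4:2:0) as an example pixel format.

WIDTH = 3840
HEIGHT = 2160
FPS = 60
BITS_PER_PIXEL = 12


def pixels_per_second(width: int, height: int, fps: int) -> int:
    """Number of pixels that must be processed each second."""
    return width * height * fps


def raw_bitrate_gbps(width: int, height: int, fps: int, bits_per_pixel: int) -> float:
    """Uncompressed bit rate in gigabits per second (1 Gb = 1e9 bits)."""
    return pixels_per_second(width, height, fps) * bits_per_pixel / 1e9


if __name__ == "__main__":
    rate = pixels_per_second(WIDTH, HEIGHT, FPS)
    gbps = raw_bitrate_gbps(WIDTH, HEIGHT, FPS, BITS_PER_PIXEL)
    print(f"Pixels per second: {rate:,}")
    print(f"Raw bit rate: {gbps:.2f} Gb/s")
```

Under these assumptions, a single stream comes to roughly half a billion pixels per second, which illustrates why hardware encode and decode support matters for multi-camera vehicles.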

About the Author

James Carroll

Former VSD Editor James Carroll joined the team in 2013. Carroll covered machine vision and imaging from numerous angles, including application stories, industry news, market updates, and new products. In addition to writing and editing articles, Carroll managed the Innovators Awards program and webcasts.