Artificial intelligence processors enable deep learning at the edge

CEVA, Inc.’s NeuPro line of artificial intelligence (AI) processors for deep learning inference at the edge is designed for "smart and connected edge device vendors looking for a streamlined way to quickly take advantage of the significant possibilities that deep neural network technologies offer."

The new self-contained AI processors are designed to run deep neural networks on-device and range from 2 tera operations per second (TOPS) for the entry-level processor to 12.5 TOPS for the most advanced configuration, according to CEVA.

"It’s abundantly clear that AI applications are trending toward processing at the edge, rather than relying on services from the cloud," said Ilan Yona, vice president and general manager of the Vision Business Unit at CEVA. "The computational power required along with the low power constraints for edge processing, calls for specialized processors rather than using CPUs, GPUs or DSPs. We designed the NeuPro processors to reduce the high barriers-to-entry into the AI space in terms of both architecture and software. Our customers now have an optimized and cost-effective standard AI platform that can be utilized for a multitude of AI-based workloads and applications."

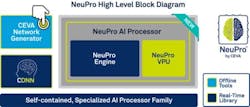

The NeuPro architecture combines hardware- and software-based engines into a complete, scalable, and expandable AI solution. The family consists of four AI processors, each offering a different level of parallel processing:

- NP500, the smallest processor, includes 512 multiply-accumulate (MAC) units and targets IoT, wearables and cameras

- NP1000 includes 1024 MAC units and targets mid-range smartphones, ADAS, industrial applications and AR/VR headsets

- NP2000 includes 2048 MAC units and targets high-end smartphones, surveillance, robots and drones

- NP4000 includes 4096 MAC units for high-performance edge processing in enterprise surveillance and autonomous driving
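Peak throughput for a MAC array is commonly estimated as MAC units × 2 operations (one multiply, one add) × clock rate. The Python sketch below applies that rule to the four MAC counts listed above. The 1 GHz clock is a hypothetical placeholder: the article does not give the clock rates or the op-counting convention behind CEVA's quoted 2 to 12.5 TOPS range, so the results are illustrative only.

```python
# Peak-throughput estimate for the four NeuPro configurations.
# Assumptions (not from CEVA): each MAC counts as 2 operations
# (one multiply + one add) per cycle, and the clock rate is a
# hypothetical placeholder value.

MAC_UNITS = {
    "NP500": 512,
    "NP1000": 1024,
    "NP2000": 2048,
    "NP4000": 4096,
}

OPS_PER_MAC = 2  # multiply + accumulate counted as two operations


def peak_tops(mac_units: int, clock_hz: float) -> float:
    """Return peak tera-operations per second for a MAC array."""
    return mac_units * OPS_PER_MAC * clock_hz / 1e12


if __name__ == "__main__":
    clock_hz = 1.0e9  # hypothetical 1 GHz clock
    for name, macs in MAC_UNITS.items():
        print(f"{name}: {peak_tops(macs, clock_hz):.3f} TOPS at 1 GHz")
```

At the assumed 1 GHz, the estimate scales linearly from 1.024 TOPS (NP500) to 8.192 TOPS (NP4000); at a fixed clock, doubling the MAC count doubles peak throughput.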

Each processor consists of the NeuPro engine and the NeuPro vector processing unit (VPU). The NeuPro engine contains hardwired implementations of neural network layers such as convolutional, fully connected, pooling, and activation layers, according to CEVA. The NeuPro VPU is a programmable vector DSP that runs the CEVA deep neural network (CDNN) software and provides software-based support for new advances in AI workloads.
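The split between the fixed-function NeuPro engine and the programmable VPU can be pictured as a per-layer routing decision. The Python sketch below is a toy model under stated assumptions: the layer names, the `assign_units` helper, and the rule that any layer without hardwired support falls back to the VPU are hypothetical illustrations, not CEVA's CDNN interface.

```python
# Toy model of the NeuPro engine / VPU division of labor.
# The layer names and the routing rule are illustrative assumptions;
# this is not CEVA's CDNN API.

from typing import Dict, List

# Layer types the article lists as hardwired in the NeuPro engine.
HARDWIRED_LAYERS = {"convolution", "fully_connected", "pooling", "activation"}


def assign_units(layers: List[str]) -> Dict[str, List[str]]:
    """Route each layer to the fixed-function engine if it is hardwired,
    otherwise to the programmable vector DSP (VPU)."""
    plan: Dict[str, List[str]] = {"engine": [], "vpu": []}
    for layer in layers:
        unit = "engine" if layer in HARDWIRED_LAYERS else "vpu"
        plan[unit].append(layer)
    return plan


if __name__ == "__main__":
    # "custom_attention" stands in for a newer layer type with no hardwired support.
    network = ["convolution", "activation", "pooling", "custom_attention", "fully_connected"]
    print(assign_units(network))
```

Running it places the convolution, activation, pooling, and fully connected layers on the engine and sends the hypothetical `custom_attention` layer to the VPU, mirroring the article's point that the VPU provides software support for workloads the hardwired engine does not cover.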

NeuPro also supports both 8-bit and 16-bit neural networks, with the choice of precision made in real time. According to CEVA, the processors’ MAC units achieve better than 90% utilization when running, while the overall processor design substantially reduces DDR bandwidth, lowering power consumption for any AI application.
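Two of these figures lend themselves to quick arithmetic: utilization scales sustained throughput, and precision sets how many bytes of weights must move from memory. The Python sketch below is generic and does not describe CEVA's own bandwidth-reduction techniques, which the article does not detail; the 8 TOPS peak and the 3×3, 256-to-256-channel convolution layer are hypothetical inputs.

```python
# Back-of-the-envelope effects of MAC utilization and weight precision.
# Assumptions: the peak throughput and layer shape below are hypothetical;
# only the 90% utilization figure and the 8/16-bit options come from CEVA.


def effective_tops(peak_tops: float, utilization: float) -> float:
    """Sustained throughput when only a fraction of MAC cycles do useful work."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return peak_tops * utilization


def conv_weight_count(kernel: int, in_ch: int, out_ch: int) -> int:
    """Number of weights in a square 2-D convolution layer (bias ignored)."""
    return kernel * kernel * in_ch * out_ch


def weight_bytes(num_weights: int, bits: int) -> int:
    """Bytes needed to fetch a layer's weights at 8-bit or 16-bit precision."""
    if bits not in (8, 16):
        raise ValueError("only 8-bit and 16-bit precision are modeled")
    return num_weights * bits // 8


if __name__ == "__main__":
    print(f"sustained: {effective_tops(8.0, 0.90):.1f} TOPS")  # hypothetical 8 TOPS peak

    n = conv_weight_count(kernel=3, in_ch=256, out_ch=256)  # hypothetical layer
    for bits in (8, 16):
        print(f"{bits}-bit weights: {weight_bytes(n, bits):,} bytes")
```

With those inputs it prints a sustained 7.2 TOPS at 90% utilization and shows that 8-bit weights need half the bytes of 16-bit weights (589,824 versus 1,179,648 bytes for the example layer), one generic reason lower precision eases memory traffic.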

CEVA will offer the NeuPro hardware engine as a convolutional neural network accelerator. NeuPro will be available for licensing to select customers in the second quarter of 2018 and for general licensing in the third quarter of 2018.


Share your vision-related news by contacting James Carroll, Senior Web Editor, Vision Systems Design


About the Author

James Carroll

Former VSD Editor James Carroll joined the team in 2013. Carroll covered machine vision and imaging from numerous angles, including application stories, industry news, market updates, and new products. In addition to writing and editing articles, Carroll managed the Innovators Awards program and webcasts.