Autonomous robot successfully completes highly organized product packaging

While automation has been successfully applied in warehouses for inventory management and item storage and retrieval, boxing items for shipping largely remains the province of human employees. Researchers from the Computer Science Department at Rutgers University seek to change that.

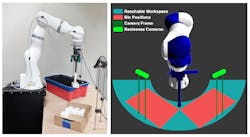

According to their study, “Towards Robust Product Packing with a Minimalistic End-Effector” (bit.ly/VSD-RPP), the solution lies in a new system that integrates RGB-D camera data, a single robot arm that can push objects with a vacuum-based suction cup “finger,” and three new placement software routines.

The challenge the researchers investigated was packing multiple items tightly into a box. The experiments used an LBR iiwa 14 R820 robot arm from KUKA equipped with a suction-based end-effector and two Intel RealSense SR300 depth cameras. The MoveIt! robotics manipulation platform handled motion planning, and Cartesian control software guided the robot’s arm.

In the experiment, the robot picked randomly placed cuboid objects from a source bin and packed them tightly into an empty target bin, which should result in grid-pattern packing. To make tight packing possible, the researchers designed three parametrized routines (called primitives in robotics) and integrated them into general sense/pick/transfer routines: the ability to topple objects, a robust placement routine, and corrective packing.
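To make the grid-pattern target concrete, here is a minimal sketch of how the slot centers for identical cuboids could be laid out in a bin. The function name `grid_slots`, its parameters, and the assumption of identical, axis-aligned objects are illustrative only and are not taken from the study.

```python
from typing import List, Tuple


def grid_slots(bin_w: int, bin_d: int, obj_w: int, obj_d: int) -> List[Tuple[float, float]]:
    """Return (x, y) centers of grid cells for identical cuboids in a bin.

    Hypothetical helper: dimensions are whole millimetres so that the
    floor division below is exact. The origin is one corner of the bin.
    """
    if obj_w <= 0 or obj_d <= 0:
        raise ValueError("object dimensions must be positive")
    cols = bin_w // obj_w  # objects that fit side by side along x
    rows = bin_d // obj_d  # objects that fit along y
    return [
        (obj_w * (c + 0.5), obj_d * (r + 0.5))
        for r in range(rows)
        for c in range(cols)
    ]


# A 300 mm x 200 mm bin holds a 3 x 2 grid of 100 mm x 100 mm objects.
slots = grid_slots(300, 200, 100, 100)
```

Each returned center is a placement target; a packing is "tight" in this sketch when every object ends up inside its own cell.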

If the system cannot predict a grasping point for any object in the source bin that would allow the item to be smoothly deposited in the target bin, the toppling routine is activated. The system picks up an object, locates a flat, empty space in the source bin, and topples the object into that space. Once the object is toppled, the system re-analyzes the object's position and looks for a viable grasp point.
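The toppling trigger and the search for a flat, empty space can be sketched as follows. This is an illustration under stated assumptions, not the researchers' implementation: the source bin is represented as a hypothetical 2D heightmap (metres above the bin floor), and `should_topple`, `find_flat_empty_region`, and the tolerance value are invented for this example.

```python
from typing import List, Optional, Tuple


def should_topple(feasible_grasps_per_object: List[int]) -> bool:
    """Topple only when objects remain and none has a feasible grasp."""
    return len(feasible_grasps_per_object) > 0 and all(
        n == 0 for n in feasible_grasps_per_object
    )


def find_flat_empty_region(
    heightmap: List[List[float]],
    win_rows: int,
    win_cols: int,
    floor: float = 0.0,
    tol: float = 0.005,
) -> Optional[Tuple[int, int]]:
    """Return the top-left cell of the first window lying at floor height.

    A window counts as flat and empty when every cell is within `tol`
    of `floor`. Returns None when no such window exists.
    """
    rows = len(heightmap)
    cols = len(heightmap[0]) if rows else 0
    for r in range(rows - win_rows + 1):
        for c in range(cols - win_cols + 1):
            if all(
                abs(heightmap[r + i][c + j] - floor) <= tol
                for i in range(win_rows)
                for j in range(win_cols)
            ):
                return (r, c)
    return None


# One object (height 0.1 m) occupies the top-left 2 x 2 cells.
bin_map = [
    [0.1, 0.1, 0.0, 0.0],
    [0.1, 0.1, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
region = find_flat_empty_region(bin_map, 2, 2)
```

A brute-force window scan is enough for a small bin; a real system would derive the heightmap from the depth cameras and size the window to the toppled object's footprint.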

Finally, if required, the corrective packing routine is initiated. If the top of a placed object is not perpendicular to support surfaces such as the walls of the box or the sides of objects already in the box (for example, because it is leaning against another object), the system computes an optimal direction in which the robot nudges the object until it is properly aligned. If an object is not within the desired footprint (a grid, in the case of cuboid objects like those used in the experiment), the robot pulls the object until it fits within the footprint.
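The two checks described above can be approximated with simple geometry. The sketch below is one possible reading, not the study's method: it treats misalignment as the tilt of the object's top-surface normal away from vertical, and it treats the footprint as an axis-aligned grid cell. The names `tilt_degrees` and `footprint_correction` are hypothetical.

```python
import math
from typing import Tuple

Vec2 = Tuple[float, float]
Vec3 = Tuple[float, float, float]


def tilt_degrees(top_normal: Vec3) -> float:
    """Angle between the top-surface normal and the vertical (z) axis."""
    nx, ny, nz = top_normal
    length = math.sqrt(nx * nx + ny * ny + nz * nz)
    if length == 0.0:
        raise ValueError("normal must be non-zero")
    # Clamp to guard against rounding slightly outside [0, 1].
    cos_t = max(0.0, min(1.0, abs(nz) / length))
    return math.degrees(math.acos(cos_t))


def footprint_correction(obj_min: Vec2, obj_max: Vec2,
                         cell_min: Vec2, cell_max: Vec2) -> Vec2:
    """Per-axis shift that brings the object's bounding box into the cell.

    Assumes the object is no larger than the cell on either axis.
    """
    def shift(lo: float, hi: float, c_lo: float, c_hi: float) -> float:
        if lo < c_lo:
            return c_lo - lo   # move in the positive direction
        if hi > c_hi:
            return c_hi - hi   # move in the negative direction
        return 0.0

    return (
        shift(obj_min[0], obj_max[0], cell_min[0], cell_max[0]),
        shift(obj_min[1], obj_max[1], cell_min[1], cell_max[1]),
    )


# An object overhanging its cell by 10 mm on the low-x side.
dx, dy = footprint_correction((90, 0), (190, 100), (100, 0), (200, 100))
```

A tilt above some threshold would trigger a nudge, and a non-zero correction would trigger a move toward the cell; both thresholds are left to the caller here.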

Success was measured in part by the percentage of the target bin's volume left unoccupied, i.e. by how tightly the objects were packed. Only 0.04% of available volume was unoccupied when the toppling, placement, and corrective routines were run. In experiments where all three of the new routines were removed from the pipeline, 49.55% of the available volume was unoccupied, demonstrating the effectiveness of the new routines.
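As a rough illustration of the metric, the unoccupied share can be computed from the available volume and the volumes of the packed objects. The article does not describe how the researchers measured it, so the function below is an assumption, and the numbers in the usage line are made up rather than taken from the study.

```python
from typing import Iterable


def unoccupied_percent(available_volume: float, object_volumes: Iterable[float]) -> float:
    """Percentage of the available volume not filled by packed objects."""
    if available_volume <= 0:
        raise ValueError("available volume must be positive")
    used = sum(object_volumes)
    return 100.0 * (available_volume - used) / available_volume


# Illustrative values only: two 250 cm^3 objects in 1000 cm^3 of space.
example = unoccupied_percent(1000.0, [250.0, 250.0])
```

Lower values mean tighter packing, which is how the two reported figures should be compared.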

About the Author

Dennis Scimeca

Dennis Scimeca is a veteran technology journalist with expertise in interactive entertainment and virtual reality. At Vision Systems Design, Dennis covered machine vision and image processing with an eye toward leading-edge technologies and practical applications for making a better world. Currently, he is the senior editor for technology at IndustryWeek, a partner publication to Vision Systems Design.