Automated pick-and-place application differentiates between thousands of SKUs

Integrator Vertical AIT (Katy, TX, USA; www.verticalait.com) was contracted to design a pick-and-place application for a manufacturer of generic connectors used in industries such as oil and gas, aerospace, and utilities. The manufacturer wanted a system that could process a wide variety of parts without pausing the line for retooling or recalibration.

There were three primary challenges in designing the application. First, the raw number of different parts: the manufacturer has over a thousand SKUs in its inventory. The application therefore needed flexibility. Second, the connectors are relatively small. A robot would require good dexterity to pick them up.

Third, the parts would not lie flat if they were dumped into a pile because some of the connectors have screw heads and others do not. Singling out individual parts would therefore present a challenge, and the customer did not want to invest in relatively expensive 3D imaging technology. The application needed to incorporate a method for separating the parts prior to delivering them to the picking area.

Once the robot picked a connector, it would need to place the connector into a fixture for a proprietary treatment process and remove the connector from the fixture when the process had finished. Allen Biehle, co-founder and CEO of Vertical AIT, says the required motion resembles holding a bolt perpendicular to the ground by its end, dipping the bolt into a pipe, and then lifting the bolt out of the pipe.

Before the application was installed, employees picked up the connectors with tweezers or fingers, sometimes with the aid of magnifying glasses or headband-mounted magnifiers, and placed them into the fixture. Biehle says that in some cases the employees were picking up a 3 mm screw and putting it into a fixture with clearance of only a few thousandths of an inch.

The treatment process also involved environmental hazards. The manufacturer therefore wanted the automation system not only to increase productivity but also to promote worker safety. Because of space limitations, however, the manufacturer did not want a traditional work cell with cages and fences. It wanted a collaborative solution that allowed employees to fill feeders with parts for processing and remove bins of completed parts while the application continued to operate.

To fulfill the client’s specifications, Vertical AIT designed an application that features a vibratory feeder; a Genie Nano 5G A-CAM-G3-GM10-M4060-model camera, GEVA 400 computing platform, and Sherlock software from Teledyne DALSA (Waterloo, ON, Canada; www.teledynedalsa.com); LED illumination; a UR5e model cobot (Figure 2) from Universal Robots (Odense, Denmark; www.universal-robots.com); a custom 3D-printed tray to hold the parts during the picking process; and a patent-pending gripper for the robot.

The application begins with an employee dumping a bag of parts into the vibratory feeder that separates the parts and pushes them toward a slide that deposits the parts onto the custom 3D-printed tray. The custom tray features slopes around its edges to prevent parts from sliding off and a textured surface to help arrest the motion of the parts once they hit the tray.

The Genie Nano 5G M4060 camera (Figure 3), which features an 8.9 MPixel monochrome Sony (Brooklands, Surrey, UK; www.image-sensing-solutions.eu) IMX255 image sensor with 3.45 µm pixel size, 5GigE interface, and 26 mm C-mount lens, is fixed-mounted above the picking tray with a 24-in. working distance. Vertical AIT chose this model for its high resolution, which allows it to image features as small as half a millimeter. A lower-resolution model produced a 10% error rate for determining precise part dimensions.
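As a rough check on why this resolution matters, the sketch below estimates how large one sensor pixel appears on the part plane. It assumes an ideal thin lens and treats the 24-in. working distance as the lens-to-object distance; neither assumption comes from Vertical AIT, and the function name is illustrative only.

```python
def object_pixel_size_mm(pixel_um: float, focal_mm: float, distance_mm: float) -> float:
    """Size of one sensor pixel projected onto the part plane (thin-lens model)."""
    # Thin-lens magnification for an object at distance_mm from the lens.
    magnification = focal_mm / (distance_mm - focal_mm)
    return (pixel_um / 1000.0) / magnification


WORKING_DISTANCE_MM = 24 * 25.4  # 24 in. converted to millimeters

# Figures from the article: 3.45 um pixels, 26 mm lens, 24 in. working distance.
mm_per_pixel = object_pixel_size_mm(3.45, 26.0, WORKING_DISTANCE_MM)
pixels_across_feature = 0.5 / mm_per_pixel  # pixels spanning a 0.5 mm feature

print(f"{mm_per_pixel:.3f} mm/pixel, {pixels_across_feature:.1f} pixels across 0.5 mm")
```

Under these assumptions a half-millimeter feature spans only about six pixels, which suggests why a sensor with fewer or coarser pixels could struggle to measure part dimensions reliably.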

In an early iteration of the application, the camera was mounted on the robot arm. This added too much time to the total process. The robot had to get into position to capture an image, wait for image processing and determination of the correct path for the robot, and then make the pick. The application ideally would have another part ready for a pick after the robot finished placing the previous part.

Vertical AIT trained Teledyne DALSA’s Sherlock image processing software to recognize part categories defined by features and size. Because all the connectors are cylindrical, the software can locate the center point of any connector after determining the correct category.

To create a part category, first the Genie Nano camera captures an image of a connector placed in the center of the pick tray. Choosing appropriate edge detection and precision parameters in Sherlock makes the edges of the part clearly discernible.

Next, an employee manually chooses a pick location at the appropriate position on the connector to make sure the pick location isn’t located too close to any collar on the connector. The employee then saves the model in the GEVA 400’s memory as a new part category.

During a day and a half of training and troubleshooting following installation of the application at the manufacturer’s factory, Vertical AIT created two categories to demonstrate the process, after which factory employees took over. Twenty connectors were used to create 10 categories. Once the employees had the process down, they were able to create a new category in about five minutes.

Biehle says Vertical AIT hasn’t heard from the manufacturer since late 2020 when it installed the application (a good thing to an integrator), and he would expect that the manufacturer would have created many more categories by now.

The parts properly singulate 95% of the time after landing on the pick tray, says Biehle. In the other 5% of cases, force feedback sensors in the robot gripper detect the problem as a collision error, registering contact with an object prior to the gripper reaching the intended pick point. If this happens, the robot makes a sweeping motion across the top of the connector the robot intended to pick. This pushes the stacked connectors apart.
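The recovery behavior can be sketched as a small retry loop. `SimulatedRobot` and its methods are hypothetical stand-ins, not the Universal Robots API, and the article does not say what happens after the sweep; retrying the pick directly is an assumption made here for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class SimulatedRobot:
    """Hypothetical stand-in for the cobot and its force-sensing gripper."""
    stacked: bool                       # True if another part rests on the target
    log: List[str] = field(default_factory=list)

    def descend_to_pick(self) -> bool:
        """Return True if the pick point was reached without early contact."""
        self.log.append("descend")
        return not self.stacked

    def sweep_across(self) -> None:
        """Sweep over the target part to push stacked parts apart."""
        self.log.append("sweep")
        self.stacked = False

    def close_gripper(self) -> None:
        self.log.append("grip")


def pick_with_sweep(robot: SimulatedRobot, max_sweeps: int = 2) -> bool:
    """Try to pick; on a collision error, sweep and retry up to max_sweeps times."""
    sweeps = 0
    while True:
        if robot.descend_to_pick():
            robot.close_gripper()
            return True
        if sweeps == max_sweeps:
            return False                # give up and leave the part for a later cycle
        robot.sweep_across()
        sweeps += 1


robot = SimulatedRobot(stacked=True)
print(pick_with_sweep(robot), robot.log)
```

A production cell would more likely capture a fresh image after the sweep, since the parts will have moved; the sketch omits that step to stay short.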

If the camera detects no parts in the picking tray, the GEVA 400, via a digital output connection, signals the vibratory feeder to start. Once parts move down the slide and onto the picking tray, the camera registers the presence of the parts, and the feeder switches off.
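The feeder handshake amounts to a simple on/off rule driven by what the camera sees. The sketch below is one way to express it, assuming the vision system reports a part count for each image; `run_feeder_control` and `set_feeder_output` are hypothetical names, not part of the GEVA 400 or Sherlock interfaces.

```python
from typing import Callable, Iterable, List


def run_feeder_control(part_counts: Iterable[int],
                       set_feeder_output: Callable[[bool], None]) -> None:
    """Drive the feeder's digital output from a stream of per-image part counts."""
    feeder_on = False
    for count in part_counts:
        want_on = (count == 0)          # run the feeder only while the tray is empty
        if want_on != feeder_on:        # write the output only when the state changes
            set_feeder_output(want_on)
            feeder_on = want_on


# Example: the tray empties on the third image and refills on the fifth.
events: List[bool] = []
run_feeder_control([3, 1, 0, 0, 2, 1], events.append)
print(events)  # [True, False]
```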

The camera captures an image of a connector on the tray; the Sherlock software analyzes the image to determine the proper part category and sends, via Modbus TCP, the appropriate coordinates and a start command to the robot; and the robot makes the pick.
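To make the Modbus TCP step concrete, the sketch below builds a standard "Write Multiple Registers" request (function code 0x10) carrying pick coordinates and a start flag. The register address, the tenths-of-a-millimeter scaling, the register layout, and the function names are all assumptions for illustration; the article does not describe the actual register map or which device initiates the exchange.

```python
import struct
from typing import List


def build_write_registers_frame(transaction_id: int, unit_id: int,
                                start_address: int, values: List[int]) -> bytes:
    """Build a Modbus TCP 'Write Multiple Registers' (0x10) request frame."""
    quantity = len(values)
    # PDU: function code, start address, register count, byte count, register data.
    pdu = struct.pack(">BHHB", 0x10, start_address, quantity, 2 * quantity)
    pdu += struct.pack(f">{quantity}H", *values)
    # MBAP header: transaction id, protocol id (0), length of unit id + PDU, unit id.
    mbap = struct.pack(">HHHB", transaction_id, 0, len(pdu) + 1, unit_id)
    return mbap + pdu


def encode_pick(x_mm: float, y_mm: float, category_id: int) -> List[int]:
    """Hypothetical layout: x and y in 0.1 mm units, category id, start flag.

    Registers are unsigned 16-bit, so coordinates must be non-negative here.
    """
    return [round(x_mm * 10), round(y_mm * 10), category_id, 1]


# Address 128 is an assumed starting register, not one taken from the article.
frame = build_write_registers_frame(1, 1, 128, encode_pick(41.5, 102.3, 7))
print(len(frame), frame.hex())
```

Sending the frame would only require writing these bytes to a TCP socket on port 502, the standard Modbus TCP port, and reading the response.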

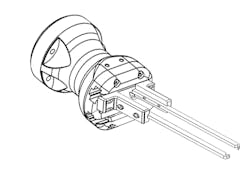

The gripper (Figure 4) features a patent-pending groove system that ensures the connector always slides into position between the gripper arms perpendicular to the fixture, no matter the precise orientation of the part when the gripper makes the pick.

In addition to determining the center point for the pick, the part categories determine the insertion depth for that connector, or the height at which the gripper should rest while holding the connector in the fixture during the treatment process. Generally, the longer the connector, the higher the gripper rests relative to the fixture while the treatment takes place.

If the force feedback sensors detect any resistance prior to the gripper reaching the proper insertion depth for that category of connector, this indicates incorrect alignment with the fixture. In this case, the robot drops the part back into the vibratory feeder for reprocessing.
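The insertion check can be sketched as a per-category depth lookup followed by a simple decision. The `PartCategory` record, its field names, and the depth value are hypothetical; the article does not describe how Sherlock or the robot program stores this data.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PartCategory:
    """Hypothetical record for one trained part category."""
    name: str
    insertion_depth_mm: float   # how far the gripper descends toward the fixture


def after_insertion_attempt(category: PartCategory,
                            contact_depth_mm: Optional[float]) -> str:
    """Decide the next step; contact_depth_mm is None if no resistance was sensed."""
    if contact_depth_mm is not None and contact_depth_mm < category.insertion_depth_mm:
        # Resistance before the target depth indicates misalignment with the fixture.
        return "return_to_feeder"
    return "run_treatment"


demo = PartCategory(name="demo-connector", insertion_depth_mm=12.0)
print(after_insertion_attempt(demo, 5.0))   # return_to_feeder
print(after_insertion_attempt(demo, None))  # run_treatment
```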

If the process goes smoothly, and the connector successfully slides into the fixture for the treatment process, the robot deposits the finished connector into an output bin. When the output bin fills up, an employee empties the finished parts into a plastic bag and returns the empty bin. The pick-and-place cycle takes around 15 seconds, says Biehle, which gives the employee plenty of time to put an empty bin in place while system operation continues.

According to Biehle, the pick-and-place application reaches break-even after about 3,000 operating hours. The application has also freed employees for different duties at the factory.

About the Author

Dennis Scimeca

Dennis Scimeca is a veteran technology journalist with expertise in interactive entertainment and virtual reality. At Vision Systems Design, Dennis covered machine vision and image processing with an eye toward leading-edge technologies and practical applications for making a better world. Currently, he is the senior editor for technology at IndustryWeek, a partner publication to Vision Systems Design.