Vision-guided robots pack candy

Off-the-shelf components inspect and pack fragile products.

By R. Winn Hardin, Contributing Editor

In past years at Spangler Candy Company, approximately 15 workers stood around a collection conveyor to inspect individually plastic-wrapped candy canes for shape and breakage and to pack them by the dozen into cardboard “cradles” to be displayed on store shelves. “We needed to get an edge on production and labor costs,” explains Spangler engineer Dave Bauer. “Candy canes are such a commodity item that we needed the boost to keep production in the USA. We knew we’d have to do it with technology.”

Bauer decided that automation was the key to inspecting and packing the candy product. After his initial research, he determined that the best way to significantly reduce labor costs while protecting workers from repetitive tasks was to build 12 vision-guided robot workcells, each based on an Adept Technology AdeptOne four-axis servo-driven SCARA robot with a fifth-axis option, working with the Adept advanced gray-scale vision system (see Fig. 1).

“The only way we could do this project so we could afford it was to do it in-house,” Bauer says. “And the only way I could integrate it so I wouldn’t have to worry about interfacing the systems was to use a vision system and robot from the same company. In the Adept vision system, the vision brain is built into the robot controller, and that has made my life easier.”

Lighting Scenarios

“Because the candy is white and red, the first question was, what are we going to do for a background?” Bauer explains. After considerable testing, the old conveyor material was swapped for a black material with overhead lighting to produce the best contrast in a gray-scale image. Binary would be too limited to accommodate a candy cane with two colors plus background, according to Bauer, while a color vision system would be too costly and complex for the application.

At Adept’s application test lab, engineers tried multiple lighting scenarios, including various LED lights, structured lights, and traditional halogen lights. A pair of halogen spotlights, similar to track lighting used in homes, turned out to work best. “Because there aren’t many windows in the facility and the ambient light has steady intensity and direction, we were able to go with a standard overhead spotlight, the kind that is easily replaced,” Bauer says.

Prior to automation, three conveyors fed a central packing area where 12 to 15 workers visually inspected the candy canes and then packed them into cradles before putting full cradles on a series of cross conveyors to boxing and palletizing areas. The automation plan called for replacing 12 of the workers with AdeptOne robotic arms, which mimic a human arm in reach (800 mm) and are capable of picking and placing candy canes in excess of the 50-per-minute throughput necessary to pack all of the candy canes coming down the conveyor. The robots could work faster, Bauer says, but the candy is fragile and excessive movement can cause it to break.

To assist with identifying individual candy canes for pick and place, four robots were placed on each of the three main conveyors. A single laborer stands at the end of each conveyor and separates candy canes that failed inspection from candy canes that reached the end because they were grouped together on the conveyor. Grouped canes are an automatic pass condition for the robots, which reduces breakage and keeps throughput high.

Labor savings

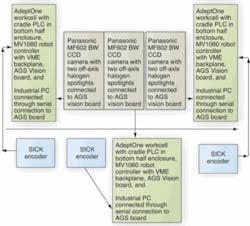

Each robot workcell includes the robot arm with an MV1060 robot controller that has a VME backplane with 44 24-V LVDS inputs and 40 24-V LVDS outputs; a SICK optical encoder to track the conveyor position; an AGS vision board with on-board memory buffer and image-processing engine; a pair of halogen lights; a Panasonic MF602 gray-scale CCD camera; and a Spangler-built cradle feeder PLC (see Fig. 2). “I liked the Panasonic camera because the dip switches were easy to access and it was easy to choose different modes for the camera, shutter speeds worked well with the application, and the cameras worked right out of the box without any problems,” Bauer says.

The cameras are located 25 in. above the conveyor and 35 in. upstream from the robot arm to give the vision system time to perform its three inspections and determine the position and orientation of the candy on the conveyor before passing that information along to the robot controller. Before packing, the vision system and robot controller are calibrated through the Adept AIM VisionWare user interface, which calls V+ calibration routines that use data from the SICK optical encoder attached to the conveyor drive shaft. The operator controls the workcells through the AIM interface running on an industrial PC. The PC is also used to view images from the vision system during operation (see Fig. 3).
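The role of the conveyor encoder in handing a part from the camera to the robot can be illustrated with a short sketch. This is not Adept V+ code. The names `BeltCalibration` and `pick_position_mm`, the belt scale factor, and the frame conventions are assumptions made for illustration; only the 35-in. (889-mm) camera-to-robot offset comes from the installation described above.

```python
from dataclasses import dataclass


@dataclass
class BeltCalibration:
    # Belt travel per encoder count. Hypothetical value; in practice it
    # would come out of the calibration step.
    mm_per_count: float
    # Distance along the belt from the camera frame origin to the robot
    # frame origin (35 in. = 889 mm in the article).
    camera_to_robot_mm: float


def pick_position_mm(x_at_capture_mm, y_mm, counts_at_capture, counts_now, cal):
    """Estimate where a part seen by the camera is now, in robot coordinates.

    x runs along the belt in the direction of travel; y runs across the belt
    and does not change as the belt moves.
    """
    travel_mm = (counts_now - counts_at_capture) * cal.mm_per_count
    x_robot_mm = x_at_capture_mm + travel_mm - cal.camera_to_robot_mm
    return x_robot_mm, y_mm


cal = BeltCalibration(mm_per_count=0.5, camera_to_robot_mm=889.0)
# The belt has advanced 1778 counts (889 mm) since the image was captured.
print(pick_position_mm(10.0, 50.0, 1000, 2778, cal))
```

Because the encoder count is latched at image capture and read again at pick time, the estimate does not depend on the belt running at constant speed.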

As candy comes down the conveyor, the MF602 camera captures continuous video of the moving belt. When threshold levels indicate the possible presence of a candy cane, the vision system uses blob analysis to make a general area measurement to see if the object is one candy cane or more than one grouped together. If it is only one candy cane, the vision system inspects the product; if it is more than one piece of candy, the system allows the grouping to pass through to the laborer at the end of the conveyor for packing.
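The threshold-then-area decision can be sketched in a few lines. The example below is a generic connected-component pass in plain Python, not the Adept vision board's implementation; the function names, the threshold of 128, and the area limits are hypothetical values chosen for a toy image.

```python
from collections import deque


def find_blob_areas(image, threshold):
    """Return the pixel area of each 4-connected region brighter than threshold."""
    rows, cols = len(image), len(image[0])
    seen = [[False] * cols for _ in range(rows)]
    areas = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or image[r][c] <= threshold:
                continue
            # Breadth-first flood fill over one bright region.
            queue = deque([(r, c)])
            seen[r][c] = True
            area = 0
            while queue:
                y, x = queue.popleft()
                area += 1
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (0 <= ny < rows and 0 <= nx < cols
                            and not seen[ny][nx] and image[ny][nx] > threshold):
                        seen[ny][nx] = True
                        queue.append((ny, nx))
            areas.append(area)
    return areas


def classify_blob(area, single_min, single_max):
    """Label a blob by area: too small to matter, one cane, or a group."""
    if area < single_min:
        return "ignore"
    if area <= single_max:
        return "single"
    return "group"


# Toy gray-scale image: bright candy on a black belt.
image = [
    [0, 0, 0, 0, 0, 0],
    [0, 200, 200, 200, 0, 0],
    [0, 0, 0, 0, 0, 0],
    [0, 180, 180, 180, 180, 0],
    [0, 180, 180, 180, 0, 0],
]
for area in find_blob_areas(image, threshold=128):
    print(area, classify_blob(area, single_min=2, single_max=4))
```

A "group" result corresponds to the pass-through case described above: the robot skips the blob and it continues to the end of the conveyor.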

If the area measurement indicates a single piece of candy, edge-detection algorithms locate the outer edges of the candy cane and measure the length of the cane for accuracy. The vision system determines the centroid of the crook, checks that diameter for consistency, and then determines the angle between the centroid and the other end of the cane. This angle information is used for an inspection check and to determine the orientation of the candy cane for the robot arm. The orientation information and the location on the conveyor, based on data from the optical encoder, are passed across the VME backplane to the Motorola 68060 industrial processor in the robot controller and converted into movement controls for the AdeptOne robot arm.
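The geometry behind the orientation measurement is simple enough to sketch. The snippet below assumes the crook has already been segmented into a list of pixel coordinates and that the far end of the cane is known from edge detection; the function names and the tolerance check are illustrative assumptions, not the routines used in the Adept system.

```python
import math


def centroid(points):
    """Mean (x, y) of a list of pixel coordinates."""
    n = len(points)
    return (sum(x for x, _ in points) / n, sum(y for _, y in points) / n)


def orientation_deg(crook_centroid, straight_end):
    """Angle of the line from the crook centroid to the straight end.

    Measured from the +x image axis; with image rows increasing downward,
    positive angles turn toward the bottom of the image.
    """
    dx = straight_end[0] - crook_centroid[0]
    dy = straight_end[1] - crook_centroid[1]
    return math.degrees(math.atan2(dy, dx))


def within_tolerance(measured, nominal, tolerance):
    """Generic pass/fail check usable for cane length or crook diameter."""
    return abs(measured - nominal) <= tolerance


crook_pixels = [(0, 0), (2, 0), (2, 2), (0, 2)]   # hypothetical crook region
c = centroid(crook_pixels)
print(c, orientation_deg(c, (1, 11)))
```

Using `atan2` rather than a plain arctangent keeps the full 360-degree range, which matters here because a cane pointing left must be distinguished from one pointing right before the gripper rotates to match it.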

Although pick-up locations and orientations for each piece of candy change, placement locations are taught to the robot before operation, refined during calibration, and remain constant through packaging. Spangler chose the fifth-axis option on the AdeptOne robot arms to ease the final rotation of the end-of-arm tooling prior to inserting the candy cane in the specially designed cardboard cradles.

Connected to the robot controller through one of the 24-V LVDS I/O connections is the Spangler-built PLC, which controls the feeder that holds cradles for the robot to fill and carries them away after filling. Handshakes between the PLC and robot controller pause the workcell until a full cradle is carried away and an empty cradle takes its place.
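The handshake logic can be modeled as two cooperating objects sharing a single ready flag. This is a simplified simulation under stated assumptions: the class and method names are invented, a real cell would exchange the signals over discrete I/O lines, and the count of 12 canes per cradle is taken from the by-the-dozen packing described at the start of the article.

```python
class CradleFeeder:
    """Stand-in for the PLC side: owns the 'cradle ready' signal."""

    def __init__(self):
        self.cradle_ready = True
        self.swaps = 0

    def request_swap(self):
        # Robot reports a full cradle; drop 'ready' until it is replaced.
        self.cradle_ready = False

    def finish_swap(self):
        # Full cradle carried away and an empty one is in position.
        self.swaps += 1
        self.cradle_ready = True


class Workcell:
    """Stand-in for the robot side: places canes only while a cradle is ready."""

    CANES_PER_CRADLE = 12

    def __init__(self, feeder):
        self.feeder = feeder
        self.in_cradle = 0

    def try_place(self):
        if not self.feeder.cradle_ready:
            return False  # paused, waiting on the feeder
        self.in_cradle += 1
        if self.in_cradle == self.CANES_PER_CRADLE:
            self.feeder.request_swap()
            self.in_cradle = 0
        return True


feeder = CradleFeeder()
cell = Workcell(feeder)
placed = [cell.try_place() for _ in range(13)]
print(placed.count(True), "placed before the pause")
feeder.finish_swap()
print("resumed:", cell.try_place())
```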

Spangler’s automation system has evolved in recent years from one vision system supporting two robotic arms to one vision system per arm, a result of the falling cost of vision systems and the better performance afforded by one-to-one vision-to-robot support. Adept has also moved to a VME hybrid controller system en route to a fully open system and away from proprietary connections, according to Adept Midwest regional manager Rick Pelton.

Company Info

Adept Technology

Livermore, CA, USA

www.adept.com

Panasonic Vision Systems

Secaucus, NJ, USA

www.panasonic.com/visionsystems

SICK Inc.

Minneapolis, MN, USA

www.sickusa.com

Spangler Candy Company

Bryan, OH, USA

www.spanglercandy.com