Infrared technology targets industrial automation

IR cameras are used in industrial-plant monitoring and, increasingly, in industrial automation.

By Andrew Wilson, Editor

British astronomer Sir William Herschel is credited with the discovery of infrared (IR) radiation in 1800. In his first experiment, Herschel exposed a liquid-in-glass thermometer to different colors of the spectrum. Finding that the highest temperature lay just beyond the red end of the spectrum, Herschel christened his newly found energy "calorific rays," now known as infrared radiation.

Two centuries later, IR imagers and cameras are finding uses in applications ranging from missile guidance and tracking to plant monitoring and machine-vision automation systems. Invisible to the human eye, IR energy can be divided into three spectral regions (near-, mid-, and far-IR), all with wavelengths longer than those of visible light. Although the boundaries between these regions are not precisely defined, the wavelength ranges are approximately 0.7 to 5 µm (near-IR), 5 to 40 µm (mid-IR), and 40 to 350 µm (far-IR).

However, do not expect today's commercially available IR detectors or cameras to span such a broad range of wavelengths. Rather, they are specified as covering narrower bands between approximately 1 and 20 µm. Many manufacturers use the terms near-, mid-, and far-IR loosely, often describing a camera that operates at 9 µm as using a far-IR sensor, even though 9 µm falls within the mid-IR range given above.
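To make the naming issue concrete, the Python sketch below classifies a wavelength using the approximate boundaries quoted in this article. The table `IR_BANDS` and the function `ir_band` are hypothetical names, and the boundaries are the rough ones given here, not a formal standard.

```python
# Approximate IR band boundaries (µm) as quoted in this article; other
# sources draw the lines differently, so treat these as illustrative.
IR_BANDS = [
    ("near-IR", 0.7, 5.0),
    ("mid-IR", 5.0, 40.0),
    ("far-IR", 40.0, 350.0),
]


def ir_band(wavelength_um: float) -> str:
    """Return the band name for a wavelength under the approximate scheme above."""
    for name, low, high in IR_BANDS:
        # Lower bound inclusive, upper bound exclusive, so 5.0 µm counts as mid-IR.
        if low <= wavelength_um < high:
            return name
    return "outside the IR ranges listed"


# A camera working at 9 µm falls in the mid-IR under this scheme, not the far-IR.
print(ir_band(9.0))
print(ir_band(1.5))
```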

Absolute measurement

For the system developer considering an IR camera for process-monitoring applications, the choice of detector will be both manufacturer- and application-specific. Because of this, the systems integrator must understand what is being measured and how.

Perhaps one of the largest misconceptions is that an IR camera directly measures the temperature of an object. This misconception stems from Planck's law, which implies that all objects with a temperature above absolute zero emit IR radiation and that the higher the temperature, the higher the emitted intensity. Planck's law, however, describes only ideal blackbodies, which absorb 100% of incident radiation and emit the maximum possible intensity at a given temperature. Real objects emit less, so the ratio of an object's emitted intensity to that of a blackbody at the same temperature must be taken into account. This ratio, known as emissivity, measures how well a material absorbs and emits IR energy, and it affects how images are interpreted.
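The effect of emissivity can be sketched numerically. The Python example below evaluates Planck's law at a single wavelength, scales the result by an emissivity to model a real (gray) surface, and then inverts Planck's law to find the temperature a camera would report if it assumed an emissivity of 1. It is a simplified illustration, assuming a single 10-µm wavelength and ignoring reflected and atmospheric radiation; the function names are hypothetical.

```python
import math

H = 6.62607015e-34   # Planck constant, J*s
C = 2.99792458e8     # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K


def planck_radiance(wavelength_m: float, temp_k: float) -> float:
    """Blackbody spectral radiance, W / (m^2 * sr * m)."""
    x = H * C / (wavelength_m * K_B * temp_k)
    return (2.0 * H * C**2 / wavelength_m**5) / math.expm1(x)


def brightness_temperature(wavelength_m: float, radiance: float) -> float:
    """Invert Planck's law: the blackbody temperature that emits `radiance`."""
    a = H * C / (wavelength_m * K_B)
    b = 2.0 * H * C**2 / wavelength_m**5
    return a / math.log1p(b / radiance)


wavelength = 10e-6      # 10 µm, assumed single-wavelength measurement
true_temp = 350.0       # K
for emissivity in (1.0, 0.95, 0.3):
    # A gray surface emits emissivity times the blackbody radiance.
    measured = emissivity * planck_radiance(wavelength, true_temp)
    # A camera that assumes emissivity = 1 reports this temperature instead:
    apparent = brightness_temperature(wavelength, measured)
    print(f"emissivity {emissivity:.2f}: apparent {apparent:.1f} K")
```

Only the emissivity of 1 returns the true 350 K; lower emissivities make the same object read colder.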

In the design of its MP50 linescan process imager, Raytek (Santa Cruz, CA, USA) incorporates a reference blackbody for continuous calibration (see Fig. 1). Targeted at continuous-sheet and web-based processes, the scanner operates at 48 lines/s and is available in several versions covering spectral ranges suited to examining plastics, glass, and metals. Other manufacturers offer blackbodies as accessories for calibrating their cameras externally.

Because people cannot see IR radiation, the images captured by IR detectors and cameras must first be processed and pseudocolored into images that can be viewed. In these images, highly reflective materials may appear different from less-reflective materials, even when both are at the same temperature. This is because highly reflective materials emit less radiation of their own and instead reflect the radiation of the objects around them; in cooler surroundings, they may therefore appear "colder" than less-reflective materials at the same temperature.
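A simple broadband model shows why a reflective part can look colder. The sketch below assumes an opaque surface (so its reflectivity is 1 minus its emissivity) and a camera that responds to total radiation per the Stefan-Boltzmann law and assumes an emissivity of 1. Real cameras integrate over a limited band, so this is a rough illustration only; the function name and temperatures are hypothetical.

```python
def apparent_temperature(true_k: float, surroundings_k: float, emissivity: float) -> float:
    """Temperature reported by a broadband camera that assumes emissivity = 1.

    An opaque surface emits emissivity * sigma * T^4 and reflects
    (1 - emissivity) * sigma * T_surr^4 from its surroundings; sigma cancels.
    """
    if not 0.0 <= emissivity <= 1.0:
        raise ValueError("emissivity must lie between 0 and 1")
    total = emissivity * true_k**4 + (1.0 - emissivity) * surroundings_k**4
    return total ** 0.25


# Two parts at the same 330 K in a 290 K room (illustrative values).
painted = apparent_temperature(330.0, 290.0, emissivity=0.95)
polished_metal = apparent_temperature(330.0, 290.0, emissivity=0.1)
print(f"painted: {painted:.1f} K, polished metal: {polished_metal:.1f} K")
```

In surroundings at the same temperature as the part, the reflected and emitted terms combine to the true temperature, which is why the effect depends on the environment.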

Material properties

When considering whether to use IR technology for a particular application, therefore, the properties of the materials being viewed must be known if the image is to be interpreted properly. In printed-circuit-board analysis, for example, differences in the emissivity of different metals can be used to discern faults in the board. However, if materials have similar emissivities, it may be difficult to discern any differences in the image.

In many applications, including target tracking, this does not pose a problem. In heat-seeking missiles, for example, the contrast between the aluminium-alloy body of a rocket and the hot exhaust emerging from its boosters is so great that discerning the two is relatively simple. In other applications, the task may be more complex.

Infrared cameras use a number of different detector types that can be broadly classified as either photon or thermal detectors. In photon detectors, absorbed IR radiation excites electrons across the bandgap of materials such as mercury cadmium telluride (HgCdTe; detecting IR in the 3- to 5-µm and 8- to 12-µm ranges) and indium antimonide (InSb; detecting IR in the 3- to 5-µm range). This generates a charge that can be measured directly and read out for preprocessing.
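Why the material sets the spectral range can be shown with the standard cutoff relation: a photon is absorbed only if its energy exceeds the bandgap, so the longest detectable wavelength is h*c divided by the bandgap energy. The sketch below uses h*c of about 1.23984 eV*µm; the InSb bandgap of roughly 0.23 eV at about 77 K is an approximate, commonly quoted value, and the function names are hypothetical. Because the bandgap of HgCdTe can be tuned through its cadmium fraction, the same relation also gives the gap needed for a chosen cutoff.

```python
# lambda_c [µm] = h*c / E_g, with h*c ≈ 1.23984 eV*µm.
HC_EV_UM = 1.23984


def cutoff_wavelength_um(bandgap_ev: float) -> float:
    """Longest wavelength (µm) whose photons can bridge a bandgap of bandgap_ev."""
    return HC_EV_UM / bandgap_ev


def bandgap_for_cutoff_ev(cutoff_um: float) -> float:
    """Bandgap (eV) needed for a detector to respond out to cutoff_um."""
    return HC_EV_UM / cutoff_um


# InSb at about 77 K has a bandgap of roughly 0.23 eV (approximate value).
print(f"InSb cutoff: {cutoff_wavelength_um(0.23):.1f} µm")
# An HgCdTe alloy tuned to respond out to 12 µm needs a gap of about:
print(f"Gap for 12 µm: {bandgap_for_cutoff_ev(12.0):.3f} eV")
```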

Rather than generating charge through bandgap transitions, thermal detectors absorb the IR radiation, which raises the temperature of one or more membrane-isolated temperature-sensing elements on the device. Unlike most photon detectors, thermal detectors can be operated at room temperature, although they are generally less sensitive and have longer response times.
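The slower response of thermal detectors follows from a common first-order thermal model: a sensing element with heat capacity C, linked to its surroundings by thermal conductance G, heats up with time constant tau = C / G. The sketch below computes the temperature rise after a step of absorbed power. The parameter values are illustrative assumptions, not specifications of any detector mentioned here.

```python
import math


def bolometer_temperature_rise(power_w: float, conductance_w_per_k: float,
                               heat_capacity_j_per_k: float, t_s: float) -> float:
    """Temperature rise of a first-order thermal detector after a step of absorbed power.

    Solves C dT/dt = P - G*T with T(0) = 0, giving
    dT(t) = (P / G) * (1 - exp(-t / tau)), where tau = C / G.
    """
    tau = heat_capacity_j_per_k / conductance_w_per_k
    return (power_w / conductance_w_per_k) * (1.0 - math.exp(-t_s / tau))


# Illustrative (assumed) values: G = 1e-7 W/K, C = 1e-9 J/K, so tau = 10 ms.
P, G, C_TH = 1e-9, 1e-7, 1e-9
for t_ms in (1, 10, 30, 50):
    rise = bolometer_temperature_rise(P, G, C_TH, t_ms / 1000.0)
    print(f"t = {t_ms:2d} ms: dT = {rise * 1000:.2f} mK")
```

The model also shows the design trade-off: lowering G increases the steady-state rise P / G (better sensitivity) but lengthens tau.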

To create a two-dimensional IR image, camera vendors incorporate focal-plane arrays (FPAs), also known as staring arrays, into their cameras. These detectors are similar in concept to CCDs in that they consist of arrays of pixels, in formats ranging from as small as 2 x 2 to 640 x 512 and larger, often with more than 8 bits of dynamic range.

Incorporating a thermal detector in the form of an amorphous silicon or vanadium oxide (VOx) microbolometer, the Eye-R320B from Opgal (Karmiel, Israel) features a 320 x 240 FPA. With a spectral range of 8 to 12 µm, the camera also offers automatic gain correction, remote RS422 programmability, and CCIR or RS170 output. The company also offers embeddable IR camera modules that can accept 640 x 480 detectors from several different manufacturers.

Discerning features

Because visible light has a shorter wavelength than IR radiation, a visible imaging system can resolve features within an image at higher resolution. For this reason, ultraviolet (UV) radiation, which the human eye also cannot perceive, is used to detect submicron defects in semiconductor wafers. Because UV light has a still shorter wavelength (higher frequency), the spatial resolution of the optical system is also higher, allowing greater detail to be captured.

Unfortunately, the opposite is true of IR radiation. Because its wavelength is longer than that of visible light, an IR system will resolve fewer line pairs/millimeter than a visible system, all other system parameters being equal. Indeed, it is this diffraction-limited nature of optics that leads to the large pixel sizes of IR imagers. And, of course, an IR imager with a 320 x 240 format and a 30-µm pixel pitch will have a die considerably larger than that of a 320 x 240 CCD with a 6-µm pixel pitch. This larger die size for any given format is another reason IR imagers are more expensive than visible imagers.
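The die-size comparison can be checked with a little arithmetic. The sketch below computes only the active pixel area of each 320 x 240 array; it ignores readout circuitry, bond pads, and other die overhead, so it indicates the scaling rather than actual die dimensions. The function name is hypothetical.

```python
def array_area_mm2(cols: int, rows: int, pitch_um: float) -> float:
    """Active area of a pixel array in mm^2 (ignores readout circuitry and bond pads)."""
    width_mm = cols * pitch_um / 1000.0
    height_mm = rows * pitch_um / 1000.0
    return width_mm * height_mm


ir_area = array_area_mm2(320, 240, 30.0)   # 9.6 mm x 7.2 mm
ccd_area = array_area_mm2(320, 240, 6.0)   # 1.92 mm x 1.44 mm
print(f"IR: {ir_area:.2f} mm^2, CCD: {ccd_area:.2f} mm^2, ratio {ir_area / ccd_area:.0f}x")
```

A 5x larger pitch gives a 25x larger active area, since area scales with the square of the pitch.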

In many visible machine-vision applications, it is necessary to determine the minimum spatial resolution required by the system, and the same applies when deciding whether an IR detector can be used in such an application. For visible systems, this is done using test charts with alternating white and black lines. If, for example, the required resolution were 125 line pairs/mm, then the period of those line pairs would be 8 µm. From the Nyquist criterion (at least two samples per period), the most efficient way to sample this signal is with a 4-µm pixel pitch. A smaller pitch will not add new information, and a larger pitch will result in aliasing errors.
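The Nyquist calculation in the 125-line-pair/mm example reduces to two steps: convert line pairs/mm to a period in micrometers, then allow two pixels per period. A minimal sketch, with a hypothetical function name:

```python
def nyquist_pixel_pitch_um(line_pairs_per_mm: float) -> float:
    """Largest pixel pitch (µm) that samples a line-pair pattern at the Nyquist rate."""
    period_um = 1000.0 / line_pairs_per_mm   # one black + one white line
    return period_um / 2.0                    # two samples per period


print(nyquist_pixel_pitch_um(125))  # 4.0
```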

In such optical systems, the pixel pitch p at the limit of resolution is given by the diffraction-limited equation

$$p = \frac{1.22\,\lambda\,(f/\#)}{2} = 0.61\,\lambda\,(f/\#)$$

where λ is the wavelength and f/# equals the focal length/aperture ratio of the lens. Thus, a pixel pitch of 2.68 µm is needed to resolve 550-nm visible light at f/8. In an IR system with a wavelength of 5 µm and the same focal length/aperture ratio, the required pixel pitch will be approximately 25 µm, or nine times larger. With a 25-µm pixel pitch, the finest pattern that can be resolved has a 50-µm period, which equates to approximately 20 line pairs/mm at f/8.
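The visible and IR figures above can be reproduced with a short calculation. This sketch assumes the diffraction-limited relation p = 0.61 * lambda * (f/#), that is, half the Rayleigh radius 1.22 * lambda * (f/#), which yields the 2.68-µm figure for 550 nm at f/8. The function names are hypothetical.

```python
def diffraction_limited_pitch_um(wavelength_um: float, f_number: float) -> float:
    """Pixel pitch at the diffraction limit: half the Rayleigh radius 1.22 * lambda * f/#."""
    return 0.61 * wavelength_um * f_number


def resolvable_lp_per_mm(pitch_um: float) -> float:
    """Line pairs/mm resolvable when two pixels span one line-pair period."""
    return 1000.0 / (2.0 * pitch_um)


visible = diffraction_limited_pitch_um(0.55, 8)   # about 2.68 µm
infrared = diffraction_limited_pitch_um(5.0, 8)   # about 24.4 µm
print(f"visible {visible:.2f} µm, IR {infrared:.1f} µm, ratio {infrared / visible:.1f}")
print(f"25-µm pitch resolves about {resolvable_lp_per_mm(25.0):.0f} lp/mm")
```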

Fortunately, most camera manufacturers specify these parameters. The Stinger IR camera from Ircon (Niles, IL, USA), for example, is specified with an uncooled 320 x 240 FPA, spectral options of 5 µm, 8 µm, and 8 to 14 µm, a detector element size of 51 x 51 µm, and an f/1.4 lens (see Fig. 2). The company's literature states that targets as small as 0.017 in. can be measured with the camera, a figure that can be confirmed with some simple mathematics.

To increase resolution, some manufacturers use lenses with larger numerical apertures (smaller f-numbers). Because ordinary optical glass is opaque at these longer IR wavelengths, such lenses are usually fabricated from exotic materials such as zinc selenide (ZnSe) or germanium (Ge), adding to the cost of the camera.

Like visible solid-state cameras, IR cameras are generally offered with both linescan and area-array formats. While linescan cameras are useful in IR web inspection, area-array cameras capture two-dimensional images. Camera outputs are also similar to those of visible cameras: generally either standard NTSC/PAL analog video or digital interfaces such as FireWire and USB.

Future developments

Historically, acceptance of IR techniques in machine-vision systems has been held back by the cost of IR systems compared with their visible counterparts and by the lack of an easy way to combine the benefits of both wavelength bands in low-cost systems. In the past few years, however, the cost of IR imaging has been lowered by the introduction of smart IR cameras that include on-board detectors, processors, embedded software, and standard interfaces. And, recognizing the benefits of a combined visible/IR approach, manufacturers are now starting to introduce more sophisticated imagers that can simultaneously capture visible and near-IR images.

Recently, Indigo Systems (Goleta, CA, USA) announced a new method for processing indium gallium arsenide (InGaAs) to enhance its short-wavelength response. The resulting material, VisGaAs, has a broad spectral response that enables both near-IR and visible imaging on the same photodetector. According to the company, test results indicate that VisGaAs can operate from 0.4 to 1.7 µm. To test the detector, the company mounted a 320 x 256 FPA onto its Phoenix camera-head platform and imaged a hot soldering gun in front of a computer monitor (see Fig. 3). The results show that a standard InGaAs camera detects hardly any radiation from the CRT monitor, while the VisGaAs-based imager clearly detects both the soldering gun and the monitor.

Camera and frame grabber team up to combat SARS

To restrict the spread of severe acute respiratory syndrome (SARS), Land Instruments International (Sheffield, UK; www.landinst.com) has developed a PC-based system that detects elevated body temperatures in large numbers of people. Because individuals with SARS typically have a fever, and therefore an above-normal skin temperature, infrared cameras can be used to screen for people who may have the illness.

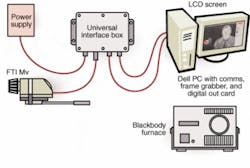

Land Instruments' Human Body Temperature Monitoring System (HBTMS) uses the company's FTI Mv thermal imager, which has an array of 160 × 120 pixels, to capture a thermographic image of a person's body (typically the face) at a distance of 2 to 3 m. The captured data are then compared with a 988 blackbody furnace calibration source from Isothermal Technology (Isotech, Southport, UK; www.isotech.co.uk). Permanently positioned in the imager's field of view, this calibrated temperature reference source is set at 38°C and provides a reference area in the live image. The imager is then adjusted to maintain this reference area at a fixed radiance value of 200.
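How a fixed in-scene reference can hold readings steady is sketched below. The article does not describe how the HBTMS performs its adjustment; this example assumes a simple offset correction (a single reference point can correct only one parameter), and the function `normalize_to_reference`, the frame layout, and the pixel values are all hypothetical.

```python
REFERENCE_LEVEL = 200  # fixed radiance value the reference area is held at


def normalize_to_reference(frame, ref_rows, ref_cols, target=REFERENCE_LEVEL):
    """Shift a frame so the mean of the reference region equals `target`.

    frame: list of rows (lists of numbers); ref_rows/ref_cols: range objects
    bounding the blackbody's image. A single reference can only fix one
    parameter, so this sketch corrects offset drift only.
    """
    ref_values = [frame[r][c] for r in ref_rows for c in ref_cols]
    offset = target - sum(ref_values) / len(ref_values)
    return [[value + offset for value in row] for row in frame]


# Tiny 3 x 4 example: the blackbody occupies the top-left 2 x 2 pixels and
# currently reads 195 because of drift.
raw = [
    [195, 195, 150, 160],
    [195, 195, 170, 210],
    [140, 145, 150, 155],
]
corrected = normalize_to_reference(raw, range(0, 2), range(0, 2))
print(corrected[1][3])  # 215.0
```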

To capture images from the FTI Mv, the camera is coupled to a Universal Interface Box (UIB), which drives the imager and allows image capture and imager control from a PC up to 1000 m away. The UIB then transmits video and RS422 control signals to the PC. IR images are carried from the UIB as an analog video signal and digitized by a PC-based MV 510 frame grabber from MuTech (Billerica, MA, USA; www.mutech.com), which transfers the digital data to PC memory or to a VGA display. Because the board offers programmable gain and offset control, the incoming video signal can be adjusted to span the board's full digitization range.
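The purpose of programmable gain and offset can be illustrated with a generic calculation: subtract the signal's minimum level, then scale its span to fill the digitizer's input range so that all codes are used. This is not the MV 510's programming interface; the function names, the 1-V full scale, the 8-bit depth, and the 0.25- to 0.75-V signal span are all assumptions for illustration.

```python
def gain_and_offset(signal_min_v, signal_max_v, adc_full_scale_v=1.0):
    """Gain and offset that map [signal_min_v, signal_max_v] onto [0, adc_full_scale_v].

    The conditioned signal is v_out = gain * (v_in - offset).
    """
    if signal_max_v <= signal_min_v:
        raise ValueError("signal_max_v must exceed signal_min_v")
    gain = adc_full_scale_v / (signal_max_v - signal_min_v)
    return gain, signal_min_v


def digitize(v_in, gain, offset, adc_full_scale_v=1.0, bits=8):
    """Quantize a conditioned voltage to an unsigned code, clipping at the rails."""
    max_code = (1 << bits) - 1
    v_out = gain * (v_in - offset)
    code = round(v_out / adc_full_scale_v * max_code)
    return min(max(code, 0), max_code)


# Assumed video span: the camera's signal occupies only 0.25 to 0.75 V.
g, off = gain_and_offset(0.25, 0.75)
print([digitize(v, g, off) for v in (0.25, 0.5, 0.75, 0.9)])
```

Without the gain stage, a 0.5-V signal span would use only about half of the 256 available codes.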

Once an image has been acquired, it is analyzed by Land's image-processing software, which displays the images, triggers alarms via a digital output card, and records images to disk. Any pixel in the monitored area with a radiance greater than the set threshold triggers an alarm output. To highlight individuals who may have the illness, the image is displayed in a monochrome palette, with any pixel in the scene whose radiance exceeds the threshold highlighted in red.
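The alarm and display logic described above can be sketched as follows. This is not Land's software; it is a minimal illustration, assuming a frame already normalized to radiance values on a 0 to 255 scale, a rectangular monitored region, and hypothetical names such as `screen_frame`. The alarm considers only pixels inside the monitored region, while the red highlight is applied across the whole scene.

```python
RED = (255, 0, 0)


def screen_frame(frame, threshold, roi_rows, roi_cols):
    """Return (alarm, rgb_image) for a normalized monochrome frame.

    Pixels above `threshold` are painted red; all others are shown in grey.
    The alarm fires only if an over-threshold pixel lies inside the ROI.
    """
    alarm = any(frame[r][c] > threshold for r in roi_rows for c in roi_cols)
    rgb = []
    for row in frame:
        out_row = []
        for value in row:
            if value > threshold:
                out_row.append(RED)
            else:
                level = int(min(max(value, 0), 255))  # clamp to 8-bit grey
                out_row.append((level, level, level))
        rgb.append(out_row)
    return alarm, rgb


frame = [
    [120, 130, 140],
    [125, 215, 150],
    [118, 122, 128],
]
alarm, image = screen_frame(frame, threshold=210, roi_rows=range(0, 3), roi_cols=range(0, 3))
print(alarm, image[1][1])  # True (255, 0, 0)
```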
