Horizons in Vision

President

Vision Systems International Consultancy

Yardley, PA; www.imagelabs.com/vsi/

The North American and worldwide markets for machine vision appear to have peaked in 2000 and have been in decline ever since. The principal factor in the decline has been the overall reduction in capital spending in the semiconductor and electronics industries, the major user industries for machine-vision technology. In 2002 this was further exacerbated by the decline in capital spending throughout manufacturing industries.

Significantly, while this decline was taking place, machine-vision technology was improving in terms of price/performance, and the underlying infrastructure was making the application of the technology easier and less expensive. Consequently, the opportunities for machine vision are greater than ever, as buyer justification is easier when based on return on investment, productivity, and quality gains. Unlike years ago, when the components used in machine vision were essentially "borrowed" from other applications, today users find catalog components with features that make them especially well suited to machine-vision applications. With the cameras available today, timing issues have all but disappeared. With special telecentric optical arrangements, magnification errors have been eliminated. Off-the-shelf diffuse coaxial lighting arrangements have taken much of the challenge out of illuminating a scene uniformly.

Although multidisciplinary skills are still required to understand how to select the appropriate complement of components for a machine-vision application, at least the components are available. Today these multidisciplinary skills are resident in the hundreds of merchant system integrators of one type or another. Applications also have been aided by the availability of "canned" software packages offered by many vendors. These bundle specific suites of algorithms to perform specific tasks: pattern matching, alignment, optical character recognition and verification, 2-D/1-D symbol reading, liquid-crystal-display inspection, light-emitting-diode inspection, blister-pack inspection, and empty-cavity inspection, among others.

Products such as smart cameras (cameras with self-contained image-processing and analysis capabilities) and self-contained vision sensors with tethered cameras have altered the competitive landscape. With prices that result in total hardware costs of less than $15,000 and performance consistent with high-end systems of only a few years ago, these products are permeating the general-purpose market.

Further infiltrating the market are digital cameras based on the FireWire (IEEE 1394) and Camera Link interfaces, which, by feeding a stream of digitized video data into the PC bus, make cost-effective host-based processing possible. Further market penetration can be anticipated as CMOS imagers improve and replace CCD imagers as the imager of choice for many machine-vision applications. All these lower-cost machine-vision options mean that many more units must be sold just for the market to remain the same size. The flip side, of course, is that at lower prices adoption is much easier to justify, and the opportunity exists to make widespread use of machine vision throughout manufacturing lines and operations.

While the electronics and semiconductor industries will remain the major users of machine vision, one generic application that is driving the adoption of machine-vision-based 2-D symbol readers is traceability. Besides the semiconductor and electronics industries, lot and part traceability is important to the aerospace, automotive, pharmaceutical, food, and medical-device industries. Wherever product security and product recall are possible, the benefits of lot traceability can be substantial by reducing recalls to only specific lots.

In some of these same industries, security concerns are demanding better access controls and monitoring of computer-based systems. Early machine-vision systems lack the compute power to add the functions required to respond to these initiatives. As a result, some regulations coming out of agencies (such as the US FDA, USDA, and so forth) will require companies to replace older systems with newer ones. In many cases, companies should be considering doing this anyway. Older systems can no longer be supported because parts are no longer available, and newer systems will provide vastly improved performance, given the gains in processing power achieved in recent years. In short, there should be an emerging replacement market.

All in all, the future for the machine-vision market looks good. Capital spending cannot be put on hold indefinitely. One of the easiest purchases to justify is one that will yield both productivity and quality gains. Machine vision is definitely one such technology. The semiconductor industry is making significant changes in its production practices as it migrates to ever-finer linewidths and ever-larger wafers. These changes require new equipment, and virtually every piece of manufacturing equipment in the semiconductor industry requires machine vision. The higher value of the final wafer demands increased in-process inspection to guarantee yield and quality. Packaging changes in the semiconductor industry are also demanding new machine-vision-based inspection capabilities.

A key challenge, however, is the migration of both the semiconductor and electronics industries largely to Asia-Pacific countries—most recently to China. Accordingly, the machine-vision market in North America will inevitably suffer as these two major consuming markets continue to migrate. The North American machine-vision industry servicing these two industries, however, will thrive.

THE FUTURE OF HIGH-PERFORMANCE MACHINE VISION

By Joseph Sgro

Chief Executive Officer

Alacron Inc.

Nashua, NH; www.alacron.com

An intriguing new trend in machine vision emerged in early 2002. Around that time, several customers in the inspection and semiconductor industries asked Alacron to integrate either multiple, fast, large CCD arrays or high-frame-rate CMOS sensors with data rates in the 500- to 1000-Mbyte/s range into real-time systems. These requirements opened a two-tiered approach to machine-vision platforms. The first, or native, approach uses Pentium-based computing with a "basic" (that is, nonaccelerated) frame grabber. The other, or accelerated, approach speeds up the data processing prior to transfer into the PC by means of faster-operating frame grabbers or cameras. Users usually prefer the native approach because it provides a system with ease of programming, speed sufficient for real-time processing, and economy.

The native approach is feasible for the lower end of the frame-grabber market (see Vision Systems Design Camera Link Special Report, May 2002, p. S4). That end of the market probably will migrate to USB 2.0 or IEEE 1394 (FireWire) interfaces because of adequate performance, widespread availability, and low- or no-cost motherboard options. These interfaces achieve the advantages listed above for data rates within the 0- to 40-Mbyte/s range. These rates are adequate for much of the machine-vision market and are within the realistic throughput for a single or dual Intel-based Pentium solution.

The native approach may generate problems, however, if a user needs more-intensive processing or significantly increased sensor data rates (which are approaching or exceeding 1 Gbyte/s). The native approach to this problem is to buy an SMD Pentium box with an adequate throughput bus and an adequate basic frame grabber. While this approach may seem to be the most direct solution, it probably is not the cheapest, fastest, or easiest to deploy in the high-performance machine-vision environment.

Native processing

Understanding the scalability of native processing requires an examination of the two different memory schemes that are commercially available: shared private memory (cluster memory) and shared global memory (global memory or SMD). In the cluster memory approach, every processor has local memory in which to operate. One example is a stack of PCs linked by a 100-Mbit/s or a gigabit Ethernet connection; an embedded example is Coreco Imaging's Mamba Series. The performance and unit cost of this approach generally is linear for a reasonable number of units, for example, fewer than ten.

The global memory scheme is inherent in commercial server and workstation units, which come with a support chip that shares memory among four to eight processors. The cost of these units often rises superlinearly with the number of processors, whereas their performance rises only sublinearly. This effect can be demonstrated by using the Intel Graphics Suite to benchmark the scalability of two- and four-processor, global-memory architectures. The extrapolation to eight processors is straightforward because the eight-processor solution is not faster than the cluster of two SMD, four-processor units.

The Intel Fusion Chipset supplies this global memory architecture. For the shared two-processor model, a performance increment of 1.7 units is obtained (that is, the time to perform two threads of the Intel library was 1.7 times the uniprocessor model). For the four-processor configuration running four threads, the result was 39/25 times four times the uniprocessor time. This leads to a scalability factor of 85% for the two-processor model (that is, two processors have a throughput of 1.7) and 65% for the four-processor model (that is, four processors have a throughput of 2.6 processors).

To ascertain the advantages of the approaches, differences were determined in the performance of near-future microprocessor and FPGA offerings, cost of implementation, and power consumption relative to throughput. As discussed, the scalability of the shared-memory native solution is approximately 85% for two processors and 65% for four processors for the SMD approach. The cluster-memory approach usually is linear or nearly so with processing units because there is no interprocessor contention for memory, and splitting the I/O streams does not unduly burden a processor with I/O that it does not need.
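The scalability percentages follow directly from the throughput figures quoted above (1.7 uniprocessor equivalents for two processors, 2.6 for four). The following is a minimal sketch of that arithmetic; the function name `scaling_efficiency` is illustrative and not taken from any benchmark suite.

```python
def scaling_efficiency(throughput: float, processors: int) -> float:
    """Throughput per processor, where 1.0 means perfectly linear scaling.

    `throughput` is expressed in uniprocessor equivalents.
    """
    if processors < 1:
        raise ValueError("processors must be at least 1")
    return throughput / processors


# Throughput figures quoted in the text for the shared-global-memory systems.
measured = {2: 1.7, 4: 2.6}

for n, throughput in measured.items():
    efficiency = scaling_efficiency(throughput, n)
    print(f"{n} processors: {efficiency:.0%} of linear scaling")
```

A cluster-memory system that scales linearly would report a value close to 100% for every processor count in the same calculation.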

Solution comparisons

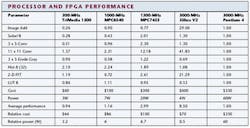

To measure relative performance for imaging, a suite of routines commonly used in imaging to generate performance ratios was selected. Near-future, state-of-the-art processors from Intel, Philips, and Motorola were compared, as well as field-programmable gate arrays (FPGAs) from Xilinx. These processors and FPGAs represent the various solutions vendors currently are using or will probably use to handle high-data-rate or compute-intensive applications. The table lists such processors and FPGAs and their imaging performance, cost, and power relative to the benchmark Intel Pentium 4 processor. The larger the number is for the speed indicated in the table, the faster the performance of the processor is relative to the Pentium 4.

The native solution is both feasible and desirable for data rates and camera applications that can be handled by one or two Pentium 4s, which is usually true for data rates in the 40-Mbyte/s range. However, when data rates reach 80 Mbytes/s or greater, or when real-time processing becomes intensive, an embedded or FPGA approach offers a more cost-effective and efficient solution than a native one. The FPGA solution is optimal in a smart or embedded-processor camera because most preprocessing of images generally requires only a limited repertoire of fixed-point processing.
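As a rough illustration of these thresholds, the sketch below estimates a sensor's raw data rate and compares it with the 40- and 80-Mbyte/s figures mentioned above. The function names, the "borderline" label for rates between the two figures, and the example sensor formats are assumptions made for illustration; the text does not say how to treat the range in between, and real systems also depend on how much processing each pixel needs.

```python
def data_rate_mbytes(width: int, height: int, frames_per_s: float,
                     bytes_per_pixel: float = 1.0) -> float:
    """Raw sensor data rate in Mbytes/s (taking 1 Mbyte as 10**6 bytes)."""
    return width * height * bytes_per_pixel * frames_per_s / 1e6


def suggested_approach(rate_mbytes: float) -> str:
    """Classify a data rate against the thresholds quoted in the text."""
    if rate_mbytes <= 40:
        return "native"        # within reach of one or two host processors
    if rate_mbytes >= 80:
        return "accelerated"   # embedded or FPGA preprocessing favored
    return "borderline"        # assumption: the text leaves this range open


# Hypothetical sensors, 8 bits per pixel.
standard = data_rate_mbytes(640, 480, 30)      # about 9.2 Mbytes/s
fast = data_rate_mbytes(1024, 1024, 500)       # about 524 Mbytes/s

print(f"{standard:.1f} Mbytes/s -> {suggested_approach(standard)}")
print(f"{fast:.1f} Mbytes/s -> {suggested_approach(fast)}")
```

The second example lands in the 500- to 1000-Mbyte/s range described earlier for fast CMOS sensors, well beyond what the native approach is said to handle.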

The recent introduction of hybrid FPGAs with embedded processors, such as the Xilinx Virtex-II Pro, may significantly simplify the choices for a manufacturer, who can modify the mix of cells and processors as needed in either the camera or the frame grabber to meet the needs of a particular application. Therefore, individual customization using the hybrid solution may be the preferred approach in the near future.

With respect to cost, the accelerated solution is more economical if more than two Pentium 4s are needed to handle the data flow, which generally is the case for high-performance machine vision. Moreover, most native solutions are incapable of sufficient throughput to handle the required data rates at the high end, even in a cluster or SMD native environment, because of the prohibitive cost of real-time processing.

VISION IMAGING MOVES OUTDOORS

By Bernd Hoefflinger

Managing Director

Institute for Microelectronics Stuttgart

Stuttgart, Germany; www.ims-chips.de

The industrial machine-vision/image-processing market continues to grow in Europe. In fact, Germany saw a growth of 16% in 2001, and the market is expected to climb to €1.1 billion by 2004, an aggregate annual growth of more than 12%. Almost all of this industrial imaging market involves applications under controlled indoor-lighting conditions on the factory floor.

However, the advanced technical capabilities of high-dynamic-range cameras are also moving industrial electronic vision to outdoor uses. Here, however, lighting is totally uncontrolled, and it is, in fact, a compelling problem because of intense sunlight during the day and extreme darkness during the night. Moreover, commercial nighttime activities usually mandate the use of high-intensity and blinding spotlight sources.

To overcome outdoor lighting problems, fully automatic digital cameras are providing a dynamic range of 120 dB and a sensitivity of better than 1/10 lux for direct connectivity to TV monitors. They can optimize scene brightness and contrast continuously for recorded scenes. Their capabilities include no white saturation, no background problems, high and constant contrast resolution, well-resolved shadows, and maximum image information contents.
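To put the 120-dB figure in perspective, the sketch below converts a dynamic range in decibels to the ratio between the brightest and darkest resolvable intensities. It assumes the 20·log10 convention commonly used for image sensors; the text does not state which convention the quoted figure uses, and the function names are illustrative.

```python
import math


def db_to_ratio(db: float) -> float:
    """Intensity ratio for a dynamic range in dB (assumes 20*log10 convention)."""
    return 10 ** (db / 20)


def ratio_to_db(ratio: float) -> float:
    """Dynamic range in dB for a given intensity ratio (same assumption)."""
    return 20 * math.log10(ratio)


ratio = db_to_ratio(120)
# Bits needed to cover that range with a purely linear encoding.
linear_bits = math.ceil(math.log2(ratio))

print(f"120 dB corresponds to a ratio of about {ratio:,.0f}:1")
print(f"A linear encoding of that range needs about {linear_bits} bits")
```

Under this convention, 120 dB corresponds to a brightness ratio of a million to one, which is why a single exposure can hold both a spotlight and a deep shadow without saturating.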

For example, consider the challenging 24/7 visual requirements at an airport, with its chaotic mix of shiny moving aircraft, flashing vehicles, and spotty illumination on the taxi field and at the gates. Savings of millions of dollars can be achieved at airports through more precise and effective aircraft movements and by avoiding injuries to operators, damage to vehicles, and collisions between aircraft and docking gear.

Volker Gengenbach, working with Honeywell Airport Systems (Wedel, Germany), has developed a camera-guided aircraft-docking system, which won the prestigious Fraunhofer Prize 2001. The aircraft vision docking system uses a high-dynamic-range camera. This camera observes a particular gate area day and night with constant aperture and is totally free of any saturated or missing frames or dead times, which occur in other systems because of varying integration times and other necessary adjustments. As the aircraft rolls slowly to a stop at an arrival gate, the camera displays an image on a monitor that is mounted on the gate wall facing the pilot of the incoming aircraft. The monitor shows the pilot the distance in meters to the prescribed aircraft-stopping point. With this setup, the aircraft is brought to a stop at the proper point so that the jetway can be moved exactly to the aircraft's departure door. The camera-guided aircraft-docking system operates under all weather and lighting conditions, day and night. Several airports around the world have already installed this system.

Another outdoor vision challenge and opportunity is driver safety, especially during nighttime hours. This application calls for high technical performance at the low cost of consumer electronics. The European automotive industry continues to make progress on this front, and camera-based systems are being demonstrated in dark environments, such as tunnels. Wide-spectrum, high-speed camera imaging, with the scene illuminated by permanent near-IR high-beam automobile headlights, can detect pedestrians who are invisible to the human eye at distances beyond 100 m. At the same time, oncoming and nearby cars with high beams are recorded in the same image without saturation or blinding effects, with all cars and pedestrians within the same dark underground tunnel. The car-installed, high-dynamic-range camera provides 640 × 480-pixel resolution at 40 frames/s and images the light spectrum from visible to IR.

The automotive vision market includes vehicle guidance, collision avoidance, and intelligent headlights. By 2010, the outdoor vehicle imaging market is projected to exceed the industrial machine-vision market.

SYNERGIES TO IMPROVE SYSTEMS INTEGRATION

By Christof Zollitsch

Chief Technical Officer

Stemmer Imaging GmbH

Puchheim, Germany; www.stemmer-imaging.de

Currently, the machine-vision/image-processing industry is debating the pros and cons of smart cameras versus PC-based machine-vision systems. Smart cameras are all-in-one units compared to the modular concept of PCs. Smart cameras impress designers with their point-and-click capability, which provides easy inspection, test, and operational procedures, ease of integration into the manufacturing environment, and reasonable price. On the other hand, the flexibility of PC-based solutions is superior in terms of camera interfaces, scalability of computational power, and wide acceptance across broad applications. However, price does become an issue when several cameras are needed.

A recent analysis of vision systems concludes that the two most important factors in selecting a system design are the application requirements and the vision skills of the systems integrator. The same considerations emerge when choosing between software libraries and a graphical user interface (GUI) as the method of application programming. Of course, using a GUI dramatically reduces the time needed to solve an application problem. But if a systems integrator can't solve the application problem and there is no mechanism to implement additional functionality, even the easiest development environment does not help at all.

On the other hand, there is no value to flexibility and its potential to solve application problems if it cannot be implemented. The same conclusion is reached as before: application requirements and vision skills drive the design decision about which vision technique to use. Both factors result in the same design questions. How can the customer benefit most? What is the most appropriate approach to implement a machine-vision system? How can the customer, with minimum effort, gain the benefits of all methods?

Synergies are the best answer to these questions. On a daily basis, systems integrators are confronted with a variety of design demands. These demands clash with their company's efforts to develop standard products instead of multiple-purpose ones. The market demonstrates how difficult this task is: to standardize as much as possible but also to be as flexible as possible.

Consequently, the forced choice between smart cameras and PC-based vision systems is a false one, as is the forced choice between software libraries and graphical user interfaces. Users do not want to decide between flexibility and processing speed or between ease of use and development speed. The more interconnection possibilities and synergies available among these different development platforms, the more opportunities emerge for customers to reap benefits.

The design solution to all these problems is software. Future software developments will meld the advantages of different hardware platforms and software environments together. All of the various strategies provide design merit, and they offer more advantages if they share some common synergies.