Miniature cameras and microdisplays empower the handicapped

Lawrence J. Curran

Contributing Editor

Handicapped children with neuromuscular diseases are acquiring the ability to express themselves audibly, type out commands, and activate small appliances with the assistance of a headband-mounted miniature video camera and microdisplay, a custom image-processing chip, and a standard personal computer (PC) equipped with custom software. This integrated vision system has reached the prototype stage at Children's Hospital in Boston, MA. The results to date are encouraging, according to Dr. Howard Shane, the hospital's director of speech services, chief scientist at Assistive Technology Inc. (Newton, MA), and an associate professor at Harvard Medical School (Cambridge, MA). His specialty centers on providing communication skills for children with cerebral palsy and other debilitating disorders.

Cerebral palsy is usually attributed to brain damage that occurred at or before birth and is characterized by muscular impairment. In addition, the disease is often accompanied by speech and learning difficulties. But at Children's Hospital, children afflicted with the disease are learning to communicate, after training, using an innovative, head-wearable, miniature camera-display system, even if they have little or no use of their hands.

The vision-system prototype was developed by ISCAN Inc. (Burlington, MA), a manufacturer of video-based, eye-movement-monitoring equipment, in conjunction with Assistive Technology. Rikki Razdan, ISCAN president, says his company has been working for four years to incorporate eye-tracking technology into a wearable system that enables impaired children to structure messages and initiate commands. Assistive Technology developed a specialized word-processing program, called Look and Select, which is used in one version of the prototype. After the two companies connected, they initially produced a large, floor-mounted camera prototype, which has since been miniaturized into an eye-goggle arrangement.

Using the miniature vision system, a patient with a severe spinal cord injury--who is unable to move arms or legs--can still type, dial a telephone, watch television, and control simple electrical appliances by eye-fixating on PC-monitor icons for a prescribed time. The system can also be linked to a speech synthesizer, thereby enabling a patient to trigger a sound-assembled message stored in the system by eye-fixating on a 'Speak' icon.

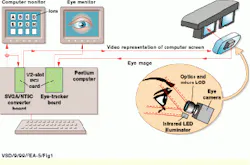

Head-mounted hardware

"We've been working iteratively with Dr. Shane and his department, and the latest version uses our lightweight system mounted in an eye-goggle arrangement," Razdan says. ISCAN calls the goggle unit HMEIS, or Head-Mounted Eye-Imaging System. It comprises a miniature video camera, an optical assembly that includes a micro liquid-crystal display (LCD), and an infrared illuminator designed to capture high-contrast eye-initiated images suitable for processing by a custom image-processing chip. "The [patient] fit has to be snug enough to tolerate possible spastic head movements," Razdan explains. In addition to the HMEIS, other essential system components include an RK-726PCI pupil/corneal-reflection-tracking processor subsystem, an SVGA-to-NTSC signal-converter board, and special software (see Fig. 1).

The RK-726PCI processor uses a two-dimensional (2-D), real-time digital image-processing chip. It resides on a PCI card that occupies a half-slot in a Dell Computer Corp. (Round Rock, TX) PC. During operation, the image-processing chip accepts images obtained from the ISCAN miniature black-and-white video camera located on the headband-mounted display. This image processor tracks the position of the patient's pupil and corneal reflection (see Fig. 2).

The patient's eye is illuminated by an infrared light-emitting diode (LED) in the eye-imaging optics subassembly, which is mounted to the video camera by a bayonet adapter. The adjustable LED illuminator can be moved by a special ball-joint assembly. The camera views the patient's eye through the head-mounted display optics. The micro-LCD display, which replicates the icons presented on the PC monitor, is integrated into the optics subassembly. Replication is accomplished using the SVGA-to-NTSC signal converter, designated as the TView Gold PCI from Focus Enhancements Inc. (Wilmington, MA). "The subject sees an NTSC representation of the computer screen in the display," Razdan says.

The system software residing in the PC host calibrates the patient to the viewed screen. The screen shows the appropriate icons for patient-computer interaction when the patient looks at designated areas in the microdisplay.

Eye technology

The ISCAN eye-tracking technology is based on the automatic recognition and tracking of the eye's dark pupil. "Under low-level infrared [IR] illumination, the eye pupil appears as a dark hole, or sink, to the ambient IR when viewed by the video camera," Razdan explains. The video camera is linked to the eye-image-processing circuit board installed in the PC.
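As a rough illustration of the dark-pupil idea, the sketch below thresholds a grayscale frame and takes the centroid of the dark pixels as the pupil position. It is not ISCAN's algorithm, which runs on a custom chip and is not described in detail here; the function name, the threshold value, and the toy frame are all assumptions made for illustration.

```python
def dark_pupil_centroid(image, threshold=40):
    """Return the (x, y) centroid of pixels darker than `threshold`, or None.

    `image` is a list of rows of grayscale values (0 = black, 255 = white).
    The threshold of 40 is an arbitrary illustrative value.
    """
    sum_x = sum_y = count = 0
    for y, row in enumerate(image):
        for x, value in enumerate(row):
            if value < threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count == 0:
        return None  # no dark region found in this frame
    return (sum_x / count, sum_y / count)


# Toy 5x5 frame: bright background (200) with a dark 2x2 "pupil" (10).
frame = [[200] * 5 for _ in range(5)]
for y in (1, 2):
    for x in (2, 3):
        frame[y][x] = 10

print(dark_pupil_centroid(frame))  # (2.5, 1.5)
```

A real tracker would also have to reject eyelashes, shadows, and other dark regions; this sketch ignores them.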

The eye-tracking image processor--a custom chip designed by ISCAN--recognizes and tracks both the position of the dark pupil and the bright reflection of the IR light source from the cornea--the curved surface in front of the eye's pupil. "The difference between the dark pupil and the bright corneal reflection indicates the eye's position in relation to the viewed scene, even if there are small head movements with respect to the fixed eye-imaging camera," Razdan says. The most basic configuration of the system can tolerate head movements of ±1 inch.
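The reason the pupil-minus-reflection difference tolerates small head movements can be shown with a short sketch. It assumes, as a simplification, that a small head shift moves both features by the same amount in the camera image; the function name and coordinates are hypothetical.

```python
def pupil_cr_vector(pupil, corneal_reflection):
    """Return the (dx, dy) offset of the pupil center from the corneal reflection."""
    return (pupil[0] - corneal_reflection[0],
            pupil[1] - corneal_reflection[1])


# Illustrative image coordinates in pixels (made-up values).
pupil = (120.0, 80.0)
reflection = (110.0, 85.0)
before = pupil_cr_vector(pupil, reflection)

# Simulate a small head shift that moves both features by the same amount.
shift = (3.0, -2.0)
moved_pupil = (pupil[0] + shift[0], pupil[1] + shift[1])
moved_reflection = (reflection[0] + shift[0], reflection[1] + shift[1])
after = pupil_cr_vector(moved_pupil, moved_reflection)

print(before, after)  # both are (10.0, -5.0)
```

Because the common shift cancels in the subtraction, only a rotation of the eye itself changes the vector under this simplified model.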

The PC acquires data corresponding to the position of the pupil and corneal reflection at 60 Hz. It then computes the eye's line of sight in conjunction with the host software. "The calibration procedure requires 15-20 seconds, during which time the patient wearing the headband-mounted goggles sequentially fixates on five points within the camera's field of view," Razdan says. Those five points are used to construct and store a computer model of the unique geometry of the patient's eye, which is then correlated to the display being viewed during later eye tracking.
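The article does not say what form ISCAN's eye model takes. As one plausible sketch of a five-point calibration, the code below fits a least-squares affine map from measured pupil-to-reflection vectors to known screen positions. The affine model, the function names, and all sample values are assumptions, not the vendor's method.

```python
def solve3(matrix, rhs):
    """Solve a 3x3 linear system by Gauss-Jordan elimination with pivoting."""
    a = [row[:] + [b] for row, b in zip(matrix, rhs)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(a[r][col]))
        if abs(a[pivot][col]) < 1e-12:
            raise ValueError("calibration points are degenerate")
        a[col], a[pivot] = a[pivot], a[col]
        for r in range(3):
            if r != col:
                factor = a[r][col] / a[col][col]
                a[r] = [x - factor * y for x, y in zip(a[r], a[col])]
    return [a[i][3] / a[i][i] for i in range(3)]


def fit_affine(vectors, targets):
    """Fit target = c0 + c1*vx + c2*vy per screen axis via normal equations."""
    rows = [(1.0, vx, vy) for vx, vy in vectors]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    coeffs = []
    for axis in range(2):
        atb = [sum(r[i] * t[axis] for r, t in zip(rows, targets))
               for i in range(3)]
        coeffs.append(solve3(ata, atb))
    return coeffs


def apply_affine(coeffs, vector):
    """Map a pupil-to-reflection vector to an estimated screen position."""
    vx, vy = vector
    return tuple(c[0] + c[1] * vx + c[2] * vy for c in coeffs)


# Five calibration fixations: screen center plus four off-center points.
# Eye vectors and screen targets are made-up illustrative values.
vectors = [(0, 0), (-10, -5), (10, -5), (-10, 5), (10, 5)]
targets = [(320, 240), (120, 90), (520, 90), (120, 390), (520, 390)]
model = fit_affine(vectors, targets)

# After calibration, each new sample is mapped to a point of regard.
point = apply_affine(model, (5, 2))
print(point)  # approximately (420, 300) for this synthetic data
```

At the 60-Hz sample rate cited above, `apply_affine` would run once per sample in such a scheme; a real eye model would likely need nonlinear terms as well.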

The calibrated eye point of regard is used to highlight the selected icon and may be displayed as a cursor superimposed over a video image of the scene viewed by the patient. This step graphically indicates the area of interest to an accuracy of better than 1° over a 40°-60° central field of view.

The pivotal Assistive Technology application software in the system is an icon-based program developed to assist severely disabled persons. The software includes representations of as many as 48 icons on the PC monitor; any icon can be selected by means of eye fixation.
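Once a point of regard is available in screen coordinates, deciding which icon it falls on can be a simple grid lookup. The sketch below assumes an 8 x 6 arrangement (48 cells) on a 640 x 480 screen; the article gives only the maximum icon count, so the layout, the screen size, and the function name are hypothetical.

```python
COLS, ROWS = 8, 6               # assumed layout: 8 x 6 = 48 icon cells
SCREEN_W, SCREEN_H = 640, 480   # assumed screen size in pixels


def icon_at(x, y):
    """Return the index (0-47) of the icon cell containing (x, y), or None."""
    if not (0 <= x < SCREEN_W and 0 <= y < SCREEN_H):
        return None  # gaze is off-screen
    col = int(x * COLS // SCREEN_W)
    row = int(y * ROWS // SCREEN_H)
    return row * COLS + col


print(icon_at(0, 0))       # 0  (top-left cell)
print(icon_at(320, 240))   # 28 (row 3, column 4)
print(icon_at(639, 479))   # 47 (bottom-right cell)
print(icon_at(-5, 100))    # None
```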

Using the system

To initiate operation, the patient wearing the head-mounted system stares at an icon within the microdisplay (see Fig. 3). The corresponding icon on the PC screen immediately changes color, which indicates to the patient or system operator that the icon has been initially selected. After the patient's adjustable fixation time has elapsed, the icon's color changes again, and an audible tone is generated, indicating that the selection of that icon has been entered into the PC.

"The software is designed to allow a disabled patient to 'type' by selecting letters on a virtual keyboard and to dial a telephone with the aid of a communications card installed in the PC," Dr. Shane says. The patient can also focus on an icon of a speech synthesizer, which then sounds an assembled message. An on-screen television icon and icons for silicon-controlled-rectifier ac outlets have been added to enable entertainment options, as well as simple appliance-control functions. These functions might include turning light switches on and off, opening and closing curtains, and signaling a nurse or attendant.
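The fixation-based selection described above, in which an icon is highlighted at once and entered only after an adjustable dwell time, can be sketched as a small state machine. The class name, state labels, and default dwell time are hypothetical; only the 60-Hz sample rate comes from the article.

```python
class DwellSelector:
    """Turn a stream of gazed-at icons into highlight and select events."""

    def __init__(self, dwell_seconds=1.0, sample_rate_hz=60):
        # Number of consecutive samples on one icon needed to select it.
        self.required = max(1, int(round(dwell_seconds * sample_rate_hz)))
        self.current = None
        self.count = 0

    def update(self, icon):
        """Feed the icon under the gaze (or None); return (state, icon)."""
        if icon is None:
            self.current, self.count = None, 0
            return ("idle", None)
        if icon != self.current:
            # Gaze moved to a new icon: restart the dwell timer.
            self.current, self.count = icon, 0
        self.count += 1
        if self.count < self.required:
            return ("highlighted", icon)   # first color change
        if self.count == self.required:
            return ("selected", icon)      # second color change plus tone
        return ("held", icon)              # already entered; do not repeat


# A 0.05-second dwell at 60 Hz requires three consecutive samples.
selector = DwellSelector(dwell_seconds=0.05, sample_rate_hz=60)
states = [selector.update("Speak")[0] for _ in range(4)]
print(states)  # ['highlighted', 'highlighted', 'selected', 'held']
```

Returning a separate "held" state after the selection fires is a design choice here, so that a patient who keeps looking at the same icon does not trigger it repeatedly.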

The Look and Select software "is a full-blown word-processing program" that presents letters and functions on the monitor, which are replicated on the LCD, Dr. Shane says. The software allows a patient wearing the goggles to make icon selections, thereby producing messages on the virtual keyboard. The software incorporates macros that enable word-processing functions such as erasing and backspacing to speed the typing task.

Dr. Shane has ambitious plans for follow-on vision systems. "Our goal is to provide full computer usage to children, including word processing, Internet access, and greater communication capabilities," he says. These advancements are more challenging, however. Dr. Shane reports that since his department shifted from the dedicated goggle-based Look and Select model to a mouse-driven implementation by writing a software driver, the task has become "much more complicated. But our experience indicates that it certainly can be done." He also wants to trim the cost of the goggle-based prototype, which he estimates to be about $15,000. "We want to reduce it to $10,000 or less to make the system practicable," he concludes.

FIGURE 1. The eye-goggle-based Head-Mounted Eye-Imaging System comprises a miniature video (eye) camera, an optics assembly, a micro liquid-crystal display (LCD), and an infrared (IR) illuminator. A video representation of the icon-based computer monitor is sent by the Pentium computer to the eye goggles for micro-LCD viewing by the patient. At the same time, an image of the patient's eye is sent from the camera mounted on the eye goggles to the computer to enable the image processor on the RK-726PCI board to track eye movements and fixations. Another monitor shows images of the patient's eye as tracked by the image processor. Characters or icons displayed on the computer monitor can be selected by the patient, who sees their SVGA-to-NTSC-converted representations on the micro-LCD.

FIGURE 2. The ISCAN eye-tracking image-processor chip recognizes and tracks both the position of the dark pupil and the bright reflection of the IR light source from the cornea--the curved surface in front of the eye's pupil. The difference between the dark pupil and the bright corneal reflection indicates the eye's position in relation to the viewed scene, even if there are small head movements of ±1 inch with respect to the fixed eye-imaging camera.

FIGURE 3. Using a recent version of the Head-Mounted Eye-Imaging System, a patient with a severe spinal cord injury--who is unable to move arms or legs--can still type, dial a telephone, watch television, and control simple electrical appliances by eye-fixating on PC-monitor icons for a prescribed time. The system can also be linked to a speech synthesizer, thereby enabling a patient to trigger a sound-assembled message stored in the system computer by eye-fixating on a 'Speak' icon.

Company Information

Assistive Technology Inc.

Newton, MA 02459

(617) 641-9000

Fax: (617) 641-9191

Web: www.assistivetech.com

Children's Hospital

Boston, MA 02115

(617) 355-6466

Fax: (617) 355-6822

E-mail: [email protected]. harvard.edu

Dell Computer Corp.

Round Rock, TX 78682

(800) 426-5150

Fax: (512) 728-3653

Web: www.dell.com

Focus Enhancements Inc.

Wilmington, MA 01887

(978) 988-5888

Fax: (978) 988-7555

Web: www.focusinfo.com

ISCAN Inc.

Burlington, MA 01803

(781) 273-4455

Fax: (781) 273-0076

Web: www.iscaninc.com