Research Institute Creates Framework and AI Tools for Autonomous Industrial Robots

San Antonio, TX, USA—The Southwest Research Institute (SwRI), a nonprofit R&D organization focusing on fundamental and applied engineering and research, has introduced an image reconstruction framework and AI-based classification tools that allow industrial robots to visually scan and reconstruct an object and then perform tasks on it.

The solution “intelligently classifies regions and textures of part surfaces in various stages of work,” says Matt Robinson, robotics R&D manager at SwRI (San Antonio, TX, USA; www.swri.org). It can be applied to grinding, painting, polishing, cleaning, welding, sealing, and other industrial processes.
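The article does not describe the model behind SwRI's classification tools. To make "classifying regions of part surfaces" concrete, here is a deliberately simple stand-in: a nearest-centroid rule that gives each mesh vertex a surface-state label based on its color. The label names, reference colors, and function names are all hypothetical. A production system would more likely use a trained model on richer features.

```python
from typing import Dict, List, Sequence, Tuple

RGB = Tuple[float, float, float]  # color channels normalized to [0, 1]

# Hypothetical reference colors for each surface state (not from SwRI).
REFERENCE_COLORS: Dict[str, RGB] = {
    "primer": (0.55, 0.60, 0.50),
    "bare_metal": (0.75, 0.75, 0.78),
    "sanded": (0.90, 0.90, 0.88),
}


def squared_distance(a: RGB, b: RGB) -> float:
    """Squared Euclidean distance between two colors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def classify_vertices(colors: Sequence[RGB],
                      centroids: Dict[str, RGB] = REFERENCE_COLORS) -> List[str]:
    """Label each vertex with the surface state whose reference color is closest."""
    return [min(centroids, key=lambda name: squared_distance(c, centroids[name]))
            for c in colors]


def vertices_with_label(labels: Sequence[str], target: str) -> List[int]:
    """Indices of vertices carrying a given label, e.g. regions still to be sanded."""
    return [i for i, label in enumerate(labels) if label == target]


if __name__ == "__main__":
    vertex_colors = [(0.56, 0.61, 0.50), (0.91, 0.89, 0.88), (0.74, 0.76, 0.79)]
    labels = classify_vertices(vertex_colors)
    print(labels)
    print(vertices_with_label(labels, "primer"))
```

The selected vertex indices are the kind of region a toolpath planner could then be restricted to.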

In traditional robot programming, an expert in computer-aided design (CAD) designs the work, sometimes including robotic processing information, but this approach can be slow and inflexible. To address these limitations, SwRI built its approach on Scan-N-Plan, an open-source set of tools that use 3D scanning techniques “to generate part geometry and location in real time,” according to a brochure from the ROS-Industrial Consortium-Americas (San Antonio, TX, USA; https://rosindustrial.org), which manages the project. The engineers also used ROS 2, a suite of open-source middleware tools maintained by the nonprofit Open Robotics (Mountain View, CA, USA; www.openrobotics.org).

The SwRI approach relies on an image reconstruction framework that uses the 3D data processing library Open3D to create high-fidelity mesh maps of objects. The framework is an update of an earlier version that proved difficult to set up and produced inaccuracies at the edges of the mesh, according to a blog post on the ROS-Industrial Consortium's website.
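The article does not say how the framework fuses many camera frames into one mesh. A widely used technique, which Open3D supports through its TSDF volume classes (for example `ScalableTSDFVolume`), is truncated signed distance function (TSDF) fusion: each voxel keeps a weighted running average of its signed distance to the observed surface, and the surface lies where that average crosses zero. The pure-Python sketch below shows only the update rule for the voxels along a single camera ray. The class name, voxel spacing, and truncation distance are illustrative assumptions, and this is not SwRI's code.

```python
from typing import List, Optional


class RayTSDF:
    """Weighted TSDF values for voxels sampled along one camera ray.

    Illustrative only: a real volume stores a 3D voxel grid and integrates
    every pixel of every posed depth frame.
    """

    def __init__(self, voxel_depths: List[float], trunc: float) -> None:
        self.voxel_depths = voxel_depths       # depth (m) of each voxel center along the ray
        self.trunc = trunc                     # truncation distance (m)
        self.tsdf = [1.0] * len(voxel_depths)  # normalized signed distance in [-1, 1]
        self.weight = [0.0] * len(voxel_depths)

    def integrate(self, measured_depth: float, w_new: float = 1.0) -> None:
        """Fuse one depth measurement into the voxels along the ray."""
        for i, z in enumerate(self.voxel_depths):
            sdf = measured_depth - z           # positive in front of the surface
            if sdf < -self.trunc:
                continue                       # far behind the surface: not observed
            d = min(1.0, sdf / self.trunc)     # truncate and normalize
            w = self.weight[i]
            self.tsdf[i] = (w * self.tsdf[i] + w_new * d) / (w + w_new)
            self.weight[i] = w + w_new

    def surface_depth(self) -> Optional[float]:
        """Depth of the first positive-to-negative zero crossing, by linear interpolation."""
        for i in range(len(self.voxel_depths) - 1):
            a, b = self.tsdf[i], self.tsdf[i + 1]
            if self.weight[i] > 0 and self.weight[i + 1] > 0 and a > 0 >= b:
                z0, z1 = self.voxel_depths[i], self.voxel_depths[i + 1]
                return z0 + a / (a - b) * (z1 - z0)
        return None


if __name__ == "__main__":
    ray = RayTSDF([0.40 + 0.01 * i for i in range(21)], trunc=0.05)
    ray.integrate(0.50)  # two noisy observations of the same surface point
    ray.integrate(0.52)
    print(ray.surface_depth())  # fused surface lies midway, near 0.51 m
```

Averaging many observations this way smooths sensor noise. In a full volume, a mesh is then extracted from the zero level set, typically with marching cubes.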

With the framework, the first step is to scan a part with a robot-mounted RGB-D camera. “We calibrate the camera’s location, so we know precisely where it is in space at all points,” explains Tyler Marr, research engineer at SwRI. The software then builds the 3D mesh from the color and depth images combined with the camera’s position data.
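The article does not detail the math, but combining depth images with a calibrated camera position generally comes down to two steps. First, each depth pixel is back-projected into a 3D point in the camera frame using a pinhole model. Second, that point is transformed into the robot's base frame using the camera pose, which is typically the robot's flange pose composed with a hand-eye calibration result. The sketch below assumes a distortion-free pinhole camera; the function names, intrinsics, and poses are made-up illustrative values, not D455 specifications or SwRI code.

```python
from typing import List, Sequence, Tuple

Point3 = Tuple[float, float, float]
Matrix4 = List[List[float]]  # 4x4 homogeneous transform, row-major


def deproject(u: float, v: float, depth_m: float,
              fx: float, fy: float, cx: float, cy: float) -> Point3:
    """Back-project pixel (u, v) with metric depth into the camera frame (pinhole, no distortion)."""
    return ((u - cx) * depth_m / fx, (v - cy) * depth_m / fy, depth_m)


def compose(a: Sequence[Sequence[float]], b: Sequence[Sequence[float]]) -> Matrix4:
    """Matrix product a @ b of two 4x4 transforms."""
    return [[sum(a[r][k] * b[k][c] for k in range(4)) for c in range(4)] for r in range(4)]


def transform(t: Sequence[Sequence[float]], p: Point3) -> Point3:
    """Apply a 4x4 homogeneous transform to a 3D point."""
    x, y, z = p
    return tuple(t[r][0] * x + t[r][1] * y + t[r][2] * z + t[r][3] for r in range(3))


def translation(x: float, y: float, z: float) -> Matrix4:
    """Pure-translation transform (no rotation), for the example only."""
    return [[1.0, 0.0, 0.0, x], [0.0, 1.0, 0.0, y], [0.0, 0.0, 1.0, z], [0.0, 0.0, 0.0, 1.0]]


if __name__ == "__main__":
    # Hypothetical values: flange pose from the robot controller,
    # camera offset from a hand-eye calibration.
    base_T_flange = translation(0.5, 0.0, 1.0)
    flange_T_camera = translation(0.0, 0.0, 0.1)
    base_T_camera = compose(base_T_flange, flange_T_camera)

    p_cam = deproject(380.0, 240.0, 0.5, fx=600.0, fy=600.0, cx=320.0, cy=240.0)
    print(transform(base_T_camera, p_cam))
```

Real poses include rotation as well as translation; the same `compose` and `transform` functions handle that case unchanged once the rotation entries are filled in.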

Marr says the system is flexible and works with any 3D camera. “The requirement is that we have depth images,” he says, adding that the process can be adapted to color or grayscale images.

As the camera scans a part, a live feed of the process appears on a monitor, so human operators can direct the system to rescan areas, if necessary, before exporting the completed mesh.

Robinson says this approach would be particularly useful for organizations with a wide mix of tasks to automate.

Once the mesh is created, simple menus help users generate toolpaths for the robot’s work. These toolpaths are then converted into robot trajectories for the robot to execute, Marr explains. The software also lets an operator watch the robot’s work while it is in progress.
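The article does not describe how toolpaths are generated or converted into trajectories. As a rough illustration, sanding and painting toolpaths are often raster (back-and-forth) patterns, and the simplest conversion into a trajectory assigns a timestamp to each waypoint so the tool moves at constant speed. The sketch below works on a flat rectangular patch in 2D. A real planner would project the pattern onto the reconstructed mesh, add tool orientation, respect acceleration limits, and solve inverse kinematics. All function names and parameters here are hypothetical.

```python
import math
from typing import List, Tuple

Point2 = Tuple[float, float]


def raster_toolpath(width: float, height: float, spacing: float) -> List[Point2]:
    """Back-and-forth passes across a width x height patch, `spacing` apart."""
    if spacing <= 0:
        raise ValueError("spacing must be positive")
    n_passes = math.floor(height / spacing + 1e-9) + 1  # tolerance guards against round-off
    waypoints: List[Point2] = []
    for i in range(n_passes):
        y = i * spacing
        if i % 2 == 0:
            waypoints += [(0.0, y), (width, y)]  # left to right
        else:
            waypoints += [(width, y), (0.0, y)]  # right to left
    return waypoints


def time_parameterize(waypoints: List[Point2], speed: float) -> List[float]:
    """Timestamp each waypoint assuming constant tool speed (m/s) along straight segments."""
    if speed <= 0:
        raise ValueError("speed must be positive")
    times = [0.0]
    for (x0, y0), (x1, y1) in zip(waypoints, waypoints[1:]):
        times.append(times[-1] + math.hypot(x1 - x0, y1 - y0) / speed)
    return times


if __name__ == "__main__":
    path = raster_toolpath(width=0.2, height=0.1, spacing=0.05)
    for point, t in zip(path, time_parameterize(path, speed=0.1)):
        print(f"t={t:.2f}s  x={point[0]:.2f}  y={point[1]:.2f}")
```

For this 0.2 m by 0.1 m patch with 5 cm spacing, the path has three passes joined by two short step-overs, 0.7 m in total, so at 0.1 m/s it takes 7 s.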

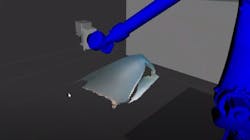

To show industrial companies how to use the reconstruction framework and classification tools, SwRI created a demonstration of a robot sanding airplane parts. In this application, SwRI’s engineers used the RealSense™ Depth Camera D455 from Intel (Santa Clara, CA, USA; www.intel.com). They also produced a video of the demonstration as an educational tool.

The demonstration software is open source. In addition, the ROS-Industrial Consortium plans to develop workshops and advanced training sessions, to be posted on its website, on using ROS 2 to automate sanding, painting, and other common industrial processes. The goal of the project, Robinson says, was “to provide a general framework to assist people in developing these sorts of solutions.”

About the Author

Linda Wilson

Editor in Chief

Linda Wilson joined the team at Vision Systems Design in 2022. She has more than 25 years of experience in B2B publishing and has written for numerous publications, including Modern Healthcare, InformationWeek, Computerworld, Health Data Management, and many others. Before joining VSD, she was the senior editor at Medical Laboratory Observer, a sister publication to VSD.