Robotic imaging orients credit cards

By Andrew Wilson, Editor

Robot-guided vision system directs precision positioning and movement of plastic credit-card sheets.

During the manufacture of credit cards, large laminated sheets are formed that comprise many individual cards. The high temperature and pressure of the lamination process produce thin sheets that measure approximately one by two feet and exhibit jagged edges. These sheets are then cut into numerous individual cards.

Prior to the cutting operation, the jagged edges of the sheets must be removed and smoothed. In the past, this operation consisted of a manual process that sheared the sheets along their perpendicular edges—a process that resulted in excessive scrap material. Moreover, because the process was manual, it was limited by the efficiency of the production technicians.

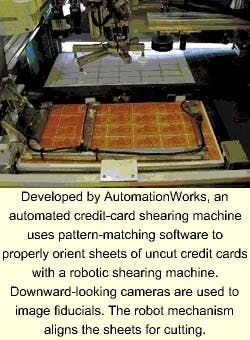

To correct these production problems and to double throughput to 1000 sheets per hour, AutomationWorks (San Jose, CA) has designed and built an automated industrial machine that combines machine-vision capabilities, precise servo-motion control, serial communications, and digital input and output signals (see photo). Downward-looking cameras image the graphical fiducials, and a robot mechanism aligns each sheet.

"Leveraging off-the-shelf software and hardware," says Jeffrey Long, senior project engineer at AutomationWorks, "has allowed us to create a graphical-user-interface (GUI) driven, network-enabled, machine-control application with utilities for machine calibration, parameter editing, and teaching."

Credit-card manufacturing

In the manufacture of credit cards, 12 x 25 x 0.3-in. sheets are printed with credit-card graphics in a 5 x 7 or 6 x 6 card-grid format prior to trimming. Graphics include fiducial marks that can be referenced by the vision system to verify the true alignment of the cards on the sheet, regardless of the quality or location of the sheet edges. The variety of card types and graphics requires a machine-vision system that is capable of recognizing fiducials of varying type, color, and location and of shearing sheet edges in different locations relative to those fiducials.

To shear sheets of plastic credit cards relative to these printed graphical fiducials, each incoming sheet must be precisely positioned between the shearing blades. This requires calculating the position of each randomly oriented sheet and robotically moving the sheet to a pre-established location for cutting.

To identify the variety of fiducial colors and types required and to interface these data with a four-axis robotic handler, Long and his colleagues chose LabVIEW and IMAQ Advanced Vision software from National Instruments (Austin, TX). "The software's open interconnectivity enabled a custom-configurable and adaptable application interface through which many different product types are supported," says Long.

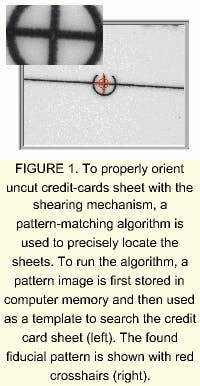

Using traditional machine-vision tools such as line, circle, and edge finders would have required extensive programming because of the variety of graphical fiducial types, colors, and locations. This would have made it difficult and costly for end users to add variations to support changing customer demands. "Fortunately," says Long, "image pattern-matching is integrated into IMAQ Vision." It provides fast searching of a gray-scale image for a small sub-image or pattern (see Fig. 1).

By using one tool capable of finding any fiducial, searching is made simpler. And, because all pattern-matching functions and examples needed for training new patterns are included with IMAQ Vision, end users can be provided with a simple interface for teaching new fiducials.
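The idea of searching a gray-scale image for a small taught pattern can be illustrated with a short sketch. The article does not describe how IMAQ Vision implements its search, so the Python below assumes plain normalized cross-correlation, a common textbook technique; the function name `ncc_match` and the tiny cross-shaped test pattern are hypothetical and chosen only for illustration.

```python
import math


def ncc_match(image, template):
    """Return (row, col, score) of the best normalized cross-correlation match.

    image and template are lists of rows of gray-scale values.
    Assumption: brute-force search, not the method used by IMAQ Vision.
    """
    th, tw = len(template), len(template[0])
    ih, iw = len(image), len(image[0])

    # Zero-mean version of the template and its norm, computed once.
    t_vals = [v for row in template for v in row]
    t_mean = sum(t_vals) / len(t_vals)
    t_dev = [v - t_mean for v in t_vals]
    t_norm = math.sqrt(sum(d * d for d in t_dev))

    best = (0, 0, -1.0)
    for r in range(ih - th + 1):
        for c in range(iw - tw + 1):
            w_vals = [image[r + i][c + j] for i in range(th) for j in range(tw)]
            w_mean = sum(w_vals) / len(w_vals)
            w_dev = [v - w_mean for v in w_vals]
            w_norm = math.sqrt(sum(d * d for d in w_dev))
            if t_norm == 0 or w_norm == 0:
                continue  # flat region: correlation is undefined
            score = sum(a * b for a, b in zip(t_dev, w_dev)) / (t_norm * w_norm)
            if score > best[2]:
                best = (r, c, score)
    return best


# Illustrative data: a cross-shaped "fiducial" placed at row 2, column 3.
template = [[0, 9, 0], [9, 9, 9], [0, 9, 0]]
image = [[0] * 7 for _ in range(6)]
for i in range(3):
    for j in range(3):
        image[2 + i][3 + j] = template[i][j]

row, col, score = ncc_match(image, template)
print(row, col, round(score, 3))
```

Because the score is normalized, the same routine can be reused with any taught pattern, which is the property that makes a single pattern-matching tool attractive when fiducial types vary from product to product.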

To allow process engineers to teach the location, orientation, and pixel scale of each camera and to teach the placement location of new sheet types relative to the shear, an interactive calibration routine was written in LabVIEW. During application run time, the sequence of registering a sheet and transporting it to the correct location for accurate shearing is accomplished in several different stages. First, with the sheet held by the picking mechanism of a robot, fiducials at opposite corners of the sheet are located in the robot camera's field of view. Simultaneously, the x-y-z location coordinates of the robot are latched from servo encoders. By knowing both the position of the robot and the fiducial locations of the credit card sheet, the fiducial locations relative to the robot origin can be calculated using the known calibrated camera locations.
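The coordinate calculation described above can be sketched in a few lines of Python. This is a minimal illustration, not the LabVIEW code used in the machine: it assumes each camera is calibrated by a planar position, a rotation angle, and a single pixel scale, and every name (`CameraCalibration`, `pixel_to_machine`, `fiducial_relative_to_robot`) and number is hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class CameraCalibration:
    x_in: float          # image origin in the machine frame, inches (assumed)
    y_in: float
    angle_rad: float     # rotation of image axes relative to machine axes
    in_per_pixel: float  # pixel scale taught during calibration


def pixel_to_machine(cal, px, py):
    """Convert a pixel location to machine-frame inches (scale, rotate, shift)."""
    dx = px * cal.in_per_pixel
    dy = py * cal.in_per_pixel
    c, s = math.cos(cal.angle_rad), math.sin(cal.angle_rad)
    return (cal.x_in + c * dx - s * dy, cal.y_in + s * dx + c * dy)


def fiducial_relative_to_robot(cal, px, py, robot_xy):
    """Fiducial position relative to the robot position latched from the encoders."""
    mx, my = pixel_to_machine(cal, px, py)
    return (mx - robot_xy[0], my - robot_xy[1])


# Illustrative values only.
cal = CameraCalibration(x_in=10.0, y_in=5.0, angle_rad=math.pi / 2, in_per_pixel=0.001)
rel = fiducial_relative_to_robot(cal, px=200, py=100, robot_xy=(8.0, 4.0))
print(rel)  # approximately (1.9, 1.2)
```

Latching the encoder position at the same instant the image is acquired matters here: the subtraction is only valid if `robot_xy` describes where the robot was when the fiducial was imaged.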

The location of the center of each sheet is then computed from the fiducial locations and the known geometrical relationship between the fiducials and the center of the sheet. The relative coordinate offset from the robot gripper to the center of the sheet is then calculated from the sheet and robot locations using LabVIEW. Last, the robot drives the sheet to the pre-established location, where the shear blades trim the sheet edge at the exact location taught at machine setup. According to Long, the automated system achieves a consistent repeatability of ±0.0008 in.
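As a rough sketch of this step, the Python below assumes the simplest possible geometry: the sheet center lies midway between the two opposite-corner fiducials, and sheet rotation is the difference between the measured and nominal angle of the line joining them. The article does not state the actual relationship used, so both assumptions, along with the function names and numbers, are hypothetical.

```python
import math


def sheet_pose(fid_a, fid_b, nominal_diagonal_angle_rad):
    """Return ((cx, cy), rotation_rad) from two opposite-corner fiducials.

    Assumption: the center is the midpoint of the fiducial pair.
    """
    cx = (fid_a[0] + fid_b[0]) / 2.0
    cy = (fid_a[1] + fid_b[1]) / 2.0
    measured = math.atan2(fid_b[1] - fid_a[1], fid_b[0] - fid_a[0])
    rotation = measured - nominal_diagonal_angle_rad
    # Wrap the result into the range -pi..pi.
    rotation = math.atan2(math.sin(rotation), math.cos(rotation))
    return (cx, cy), rotation


def gripper_to_center_offset(center, gripper_xy):
    """Offset from the robot gripper to the sheet center."""
    return (center[0] - gripper_xy[0], center[1] - gripper_xy[1])


# Illustrative values only: fiducials 24 in. apart in x and 10 in. apart in y.
nominal = math.atan2(10.0, 24.0)
center, rotation = sheet_pose((1.0, 1.0), (25.0, 11.0), nominal)
offset = gripper_to_center_offset(center, gripper_xy=(12.9, 6.05))
print(center, rotation, offset)
```

With the offset and rotation known, the robot target is the taught shear location corrected by those amounts, so the sheet arrives aligned no matter how it was picked up.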

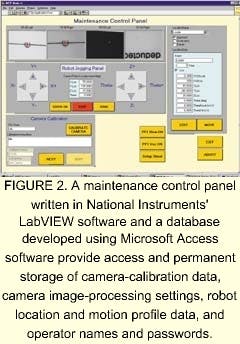

When an operator selects a credit-card recipe, all the data required to process that product are automatically retrieved and loaded. New fiducial patterns, robot locations, and camera settings can be added to the database as needed. Calibration routines and location-editing menus are used to access and modify data directly without the need for operator data entry (see Fig. 2).

In operation, a credit-card recipe is chosen by the test operator at batch initialization. It identifies which process operation sequence, camera choice, robot locations, and fiducial types are required to trim a specific product type.
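One way to picture a recipe is as a single record that bundles the items listed above. The sketch below is an assumption made for illustration; the article does not describe the database schema, and the field names, the in-memory `RECIPES` table, and the sample values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recipe:
    name: str
    operation_sequence: tuple  # ordered process steps
    camera_ids: tuple          # which cameras to use
    robot_locations: dict      # location name -> (x, y, z, theta)
    fiducial_patterns: tuple   # names of taught fiducial patterns


# Stand-in for the machine's database (assumed to be keyed by recipe name).
RECIPES = {}


def add_recipe(recipe):
    RECIPES[recipe.name] = recipe


def load_recipe(name):
    """Return every setting for a product in one lookup."""
    try:
        return RECIPES[name]
    except KeyError:
        raise KeyError(f"no recipe named {name!r}") from None


# Illustrative entry only.
add_recipe(Recipe(
    name="example-6x6",
    operation_sequence=("pick", "register", "shear", "place"),
    camera_ids=(1, 2),
    robot_locations={"shear": (12.0, 6.0, 0.5, 0.0)},
    fiducial_patterns=("cross",),
))
active = load_recipe("example-6x6")
print(active.camera_ids)
```

Keeping everything for a product in one record means selecting a recipe at batch initialization is the only operator action needed to switch products.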

According to Long, installation of the automated shearing system has indeed doubled throughput and increased trimming precision, which reduces scrap. Using LabVIEW enabled software engineers to implement the multitasking machine control, database access and maintenance, and GUI operator panels faster than would have been possible with callable vision-based routines.

Company Information

AutomationWorks

San Jose, CA 95127

Web: www.automationworks.com

National Instruments

Austin, TX 78759

Web: www.ni.com