Surgeons use graphics workstations to visualize the brain

By Lawrence H. Brown, Contributing Editor

First explored as a research tool in the mid-1980s, image-guided surgery is now giving physicians more precise ways to operate on the brain. Multiple-slice data sets, such as those acquired from magnetic resonance (MR), computed tomography (CT), or positron emission tomography (PET), present surgeons with multiplanar images and three-dimensional (3-D) reconstructed images of the brain. During preoperative analysis these images help physicians plan surgery carefully, and during operations they serve as visual and physical guides.

To help surgeons visualize which part of the brain they are operating on, ISG Technologies (Mississauga, Canada) has developed a system that correlates image data with positioning information from a physical probe. Known as the Viewing Wand, the image-guided system is now in use at the Montreal Neurological Institute and Hospital (MNI; Montreal, Canada) and 30 other hospitals worldwide.

"The Viewing Wand lets the surgeon check in relation to preoperative MR, CT, or PET data exactly which part of the brain is being explored," says Terry Peters, a research professor at MNI. "Thus, less exploratory surgery is required, which reduces patient trauma and speeds surgery." As a result, patients spend less time recovering after the operation and less time in the hospital.

Topographical maps

Surgical guidance is accomplished by registering the physical coordinates of the patient to the MR, CT, or PET image coordinates. Registration points on the nose and ears are recorded first during scanning and again before surgery. The two sets of coordinates are then matched with a least-squares-fit algorithm that minimizes the mean square distance between corresponding points on the patient and in the digitized images. Once this transformation is known, the position and orientation of the physical probe can be displayed on a computer with respect to tomographic or 3-D representations of the brain.
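The least-squares fit described above is the standard point-based rigid registration problem: find the rotation R and translation t that minimize the sum of |R p_i + t - q_i|^2 over corresponding landmarks p_i (on the patient) and q_i (in the images). The article does not say which solver the Viewing Wand uses. The sketch below assumes Horn's closed-form quaternion method, written in plain Python; the function names and all landmark coordinates are hypothetical.

```python
import math

def _jacobi_eigen(a, max_sweeps=50):
    """Eigen-decompose a small symmetric matrix with cyclic Jacobi rotations.
    Returns (eigenvalues, V); column j of V is the eigenvector for eigenvalue j."""
    n = len(a)
    a = [row[:] for row in a]
    v = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    scale = sum(x * x for row in a for x in row)
    for _ in range(max_sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off <= 1e-30 * scale:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                # Rotation angle chosen so that entry (p, q) becomes zero.
                theta = (a[q][q] - a[p][p]) / (2.0 * a[p][q])
                t = math.copysign(1.0, theta) / (abs(theta) + math.sqrt(theta * theta + 1.0))
                c = 1.0 / math.sqrt(t * t + 1.0)
                s = t * c
                for k in range(n):  # A <- A P
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # A <- P^T A
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
                for k in range(n):  # V <- V P accumulates the eigenvectors
                    vkp, vkq = v[k][p], v[k][q]
                    v[k][p], v[k][q] = c * vkp - s * vkq, s * vkp + c * vkq
    return [a[i][i] for i in range(n)], v

def transform(R, t, p):
    """Apply the rigid transform p -> R p + t."""
    return [sum(R[i][k] * p[k] for k in range(3)) + t[i] for i in range(3)]

def rigid_fit(src, dst):
    """Least-squares rigid fit (Horn's quaternion method): R, t minimizing
    the sum over i of |R src_i + t - dst_i|^2."""
    n = len(src)
    sc = [sum(p[i] for p in src) / n for i in range(3)]
    dc = [sum(q[i] for q in dst) / n for i in range(3)]
    S = [[0.0] * 3 for _ in range(3)]  # cross-covariance of the centered points
    for p, q in zip(src, dst):
        for a in range(3):
            for b in range(3):
                S[a][b] += (p[a] - sc[a]) * (q[b] - dc[b])
    (sxx, sxy, sxz), (syx, syy, syz), (szx, szy, szz) = S
    N = [
        [sxx + syy + szz, syz - szy, szx - sxz, sxy - syx],
        [syz - szy, sxx - syy - szz, sxy + syx, szx + sxz],
        [szx - sxz, sxy + syx, -sxx + syy - szz, syz + szy],
        [sxy - syx, szx + sxz, syz + szy, -sxx - syy + szz],
    ]
    vals, vecs = _jacobi_eigen(N)
    j = max(range(4), key=lambda k: vals[k])     # largest eigenvalue
    w, x, y, z = (vecs[i][j] for i in range(4))  # its unit eigenvector = quaternion
    R = [
        [1 - 2 * (y * y + z * z), 2 * (x * y - w * z), 2 * (x * z + w * y)],
        [2 * (x * y + w * z), 1 - 2 * (x * x + z * z), 2 * (y * z - w * x)],
        [2 * (x * z - w * y), 2 * (y * z + w * x), 1 - 2 * (x * x + y * y)],
    ]
    t = [dc[i] - sum(R[i][k] * sc[k] for k in range(3)) for i in range(3)]
    return R, t

def rms_error(R, t, src, dst):
    """Root-mean-square distance between transformed src points and dst."""
    sq = [sum((a - b) ** 2 for a, b in zip(transform(R, t, p), q)) for p, q in zip(src, dst)]
    return math.sqrt(sum(sq) / len(sq))

# Hypothetical landmarks touched with the probe (mm): nasion, nose tip, left ear, right ear
patient_pts = [(0.0, 90.0, 0.0), (0.0, 100.0, -30.0), (-75.0, 0.0, -20.0), (75.0, 0.0, -20.0)]
# Simulated scan coordinates of the same landmarks: a 30-degree turn about z plus a shift
ang = math.radians(30.0)
R_true = [[math.cos(ang), -math.sin(ang), 0.0], [math.sin(ang), math.cos(ang), 0.0], [0.0, 0.0, 1.0]]
t_true = [10.0, -5.0, 20.0]
image_pts = [transform(R_true, t_true, p) for p in patient_pts]

R, t = rigid_fit(patient_pts, image_pts)
print("rms residual (mm):", rms_error(R, t, patient_pts, image_pts))
tip_in_image = transform(R, t, (0.0, 95.0, -15.0))  # hypothetical probe-tip reading
```

With noise-free landmarks the residual is essentially zero; with real, noisy landmark readings it becomes the quantity the least-squares fit minimizes, and `transform(R, t, ...)` maps each new probe reading into image space.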

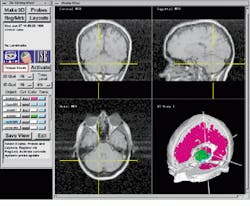

In the Viewing Wand system, a hand-held probe from Faro (Lake Mary, FL) is attached to the operating table by a mechanically linked arm (see Fig. 1). Transducers in each of the arm's six joints collect positional and directional vector information and send it to an IBM 386 PC, where a DSP-based add-in board calculates the probe's rotational and translational data. The resulting positional information is sent over an RS-232 serial link to a Hewlett-Packard (Palo Alto, CA) 715/100 graphics workstation, which simultaneously displays axial, coronal, and sagittal 2-D images and reconstructed 3-D images (see Fig. 2). The workstation runs UNIX and is configured with 96 Mbytes of RAM and a 2-Gbyte disk for image storage.
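Turning six joint readings into a probe position is a forward-kinematics chain: each joint contributes a rotation and each arm segment a fixed offset. The sketch below is not Faro's algorithm. It assumes a simplified arm whose joints turn about principal axes, with hypothetical link lengths in the `ARM` table, and returns the tip position and pointing direction in the table-fixed base frame.

```python
import math

def rot(axis, angle):
    """3x3 rotation matrix about a principal axis ('x', 'y' or 'z')."""
    c, s = math.cos(angle), math.sin(angle)
    if axis == "x":
        return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]
    if axis == "y":
        return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]
    if axis == "z":
        return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    raise ValueError(f"unknown axis {axis!r}")

def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def matvec(a, v):
    return [sum(a[i][k] * v[k] for k in range(3)) for i in range(3)]

# Hypothetical arm geometry: for each of the six joints, the axis it turns about
# and the fixed link offset (mm) from that joint to the next, in the joint's own frame.
ARM = [
    ("z", (0.0, 0.0, 150.0)),
    ("y", (0.0, 0.0, 300.0)),
    ("y", (0.0, 0.0, 300.0)),
    ("z", (0.0, 0.0, 100.0)),
    ("y", (0.0, 0.0, 80.0)),
    ("z", (0.0, 0.0, 120.0)),  # the last link ends at the probe tip
]

def probe_pose(joint_angles, arm=ARM):
    """Chain the joint rotations and link offsets (forward kinematics).

    joint_angles are in radians, one per joint. Returns (tip_position,
    pointing_direction) in the base frame fixed to the operating table.
    """
    if len(joint_angles) != len(arm):
        raise ValueError("need one angle per joint")
    rotation = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    position = [0.0, 0.0, 0.0]
    for (axis, link), angle in zip(arm, joint_angles):
        rotation = matmul(rotation, rot(axis, angle))  # orientation after this joint
        step = matvec(rotation, link)                  # link offset in base coordinates
        position = [position[i] + step[i] for i in range(3)]
    direction = [rotation[i][2] for i in range(3)]     # probe axis = local z axis
    return position, direction

# Example: bend the second joint by 90 degrees, all others straight
tip, axis = probe_pose([0.0, math.pi / 2, 0.0, 0.0, 0.0, 0.0])
```

In a full system the tip computed this way would still be in table coordinates; applying the patient-to-image registration transform is what places it on the MR, CT, or PET display.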

MRI and PET

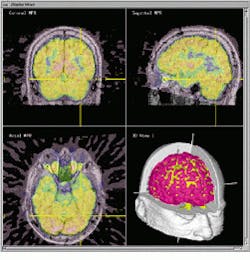

Positron emission tomography yields functional information that can be important in determining areas of low metabolic activity or activity related to a particular brain function (see Fig. 3). Because of this, research teams at the MNI have combined MR and PET data on the Viewing Wand system to better understand the metabolic activity of the brain.

Orthogonal slices of combined MR and PET images are displayed together with a 3-D rendering of object surfaces derived from the original MRI and PET data. In each slice, PET activity is indicated by a color scale superimposed on the underlying gray-scale MRI, and the operator interactively controls the relative contribution of each data set to the final image. The position of the surgical probe relative to each data set is indicated by a cross-hair cursor on each image and by a representation of the probe in the 3-D image.
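The operator-controlled weighting of MRI against PET can be expressed as per-pixel alpha blending of a gray value with a color-coded PET value. This is an assumed technique, not a description of the MNI software; the color ramp in `pet_color` and the normalization to the slice maximum are hypothetical choices.

```python
def pet_color(v):
    """Hypothetical 'hot' color scale for v in [0, 1]: black -> red -> yellow -> white."""
    v = min(max(v, 0.0), 1.0)
    return (min(1.0, 3.0 * v), min(1.0, max(0.0, 3.0 * v - 1.0)), max(0.0, 3.0 * v - 2.0))

def fuse_pixel(mri, pet, pet_max, alpha):
    """Blend one gray-scale MRI pixel (0-255) with its color-coded PET value.

    alpha is the operator-controlled weight: 0 shows MRI only, 1 shows PET only.
    Returns an (R, G, B) tuple of integers in 0-255.
    """
    gray = mri / 255.0
    color = pet_color(pet / pet_max)
    return tuple(round(255.0 * ((1.0 - alpha) * gray + alpha * c)) for c in color)

def fuse_slice(mri_slice, pet_slice, alpha):
    """Fuse two co-registered slices of equal size, given as lists of rows."""
    pet_max = max(max(row) for row in pet_slice) or 1.0  # avoid dividing by zero
    return [
        [fuse_pixel(m, p, pet_max, alpha) for m, p in zip(mri_row, pet_row)]
        for mri_row, pet_row in zip(mri_slice, pet_slice)
    ]

mri = [[100, 200], [0, 50]]
pet = [[0, 10], [5, 0]]
half_and_half = fuse_slice(mri, pet, 0.5)
```

Sliding `alpha` from 0 to 1 and re-running `fuse_slice` reproduces the kind of interactive control described above, with anatomy dominating at one end and metabolic activity at the other.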

Stereoscopic views

When true depth perception is required, for example to localize brain structures around a tumor that lies both at the base of the brain and against the spinal cord, stereoscopic techniques provide enhanced visualization. Although volume-rendered 3-D medical images give the viewer an impression of depth, such displays are often ambiguous in ways that only a stereoscopic representation can resolve.

To eliminate this ambiguity, a Crystal Eyes stereo display system from StereoGraphics (San Rafael, CA) is integrated with the graphics workstation. The system alternately displays left- and right-eye views at 60 images per second per eye. The surgeon wears glasses with active liquid-crystal shutters, synchronized to the display by an infrared beam, so that each image is presented only to the appropriate eye. The three-dimensional objects representing anatomical structures are generated by segmenting MRI or CT data.
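The article does not describe the segmentation method. One common approach, assumed here, is seeded region growing: start from a voxel inside the structure and collect every 6-connected neighbor whose intensity falls within a window. The intensity limits and the toy volume are hypothetical, and a surface-extraction step such as marching cubes (not shown) would then turn the voxel set into a renderable surface.

```python
from collections import deque

def region_grow(volume, seed, low, high):
    """Collect voxels 6-connected to seed whose intensity lies in [low, high].

    volume is indexed volume[z][y][x]; returns a set of (z, y, x) indices.
    """
    nz, ny, nx = len(volume), len(volume[0]), len(volume[0][0])
    seed = tuple(seed)
    z0, y0, x0 = seed
    if not low <= volume[z0][y0][x0] <= high:
        return set()  # the seed itself is outside the intensity window
    region = {seed}
    queue = deque([seed])
    neighbors = ((1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1))
    while queue:  # breadth-first flood fill
        z, y, x = queue.popleft()
        for dz, dy, dx in neighbors:
            nz_, ny_, nx_ = z + dz, y + dy, x + dx
            if not (0 <= nz_ < nz and 0 <= ny_ < ny and 0 <= nx_ < nx):
                continue  # outside the volume
            n = (nz_, ny_, nx_)
            if n not in region and low <= volume[nz_][ny_][nx_] <= high:
                region.add(n)
                queue.append(n)
    return region

# Toy 3x3x3 volume: two touching bright voxels and one isolated bright voxel
vol = [[[0] * 3 for _ in range(3)] for _ in range(3)]
vol[1][1][1] = vol[1][1][2] = vol[2][2][2] = 100
structure = region_grow(vol, (1, 1, 1), 50, 200)
```

The isolated voxel is excluded because it shares no face with the seeded region, which is how connectivity keeps a segmented structure from absorbing unrelated tissue of similar intensity.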

Stereoscopic views are then obtained by surface-rendering these segmented objects from two viewpoints separated by an angle of between 2° and 7° (see Fig. 4). A 3-D representation of the probe is also displayed in the correct position and orientation. When displayed stereoscopically, these elements allow brain structures to be located unambiguously. This 3-D perspective provides an additional depth cue, and as computer hardware becomes more powerful, stereo visualization is likely to be used more often in such surgical procedures.
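Rendering a stereo pair amounts to drawing the same scene from two viewpoints that straddle the line of sight. The sketch below places the two viewpoints on a circle around the object, separated by the chosen angle about the vertical (y) axis; the viewing distance and coordinates are hypothetical, and each viewpoint would be handed to whatever surface renderer is in use.

```python
import math

def stereo_eye_positions(center, distance, separation_deg):
    """Left/right viewpoints at `distance` from `center`, separated by
    `separation_deg` degrees about the vertical (y) axis; both look at center."""
    half = math.radians(separation_deg) / 2.0
    cx, cy, cz = center
    left = (cx - distance * math.sin(half), cy, cz + distance * math.cos(half))
    right = (cx + distance * math.sin(half), cy, cz + distance * math.cos(half))
    return left, right

def angle_between_views(center, a, b):
    """Angle (degrees) subtended at center by viewpoints a and b."""
    va = [a[i] - center[i] for i in range(3)]
    vb = [b[i] - center[i] for i in range(3)]
    dot = sum(x * y for x, y in zip(va, vb))
    norm = math.sqrt(sum(x * x for x in va)) * math.sqrt(sum(x * x for x in vb))
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))

# The two extremes of the separation range quoted in the text
for sep in (2.0, 7.0):
    left, right = stereo_eye_positions((0.0, 0.0, 0.0), 500.0, sep)
    print(sep, left, right)
```

A larger separation exaggerates depth differences, while a smaller one is easier to fuse, which is one reason a range of angles rather than a single value is useful.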

MNI also plans to use the Viewing Wand system in spinal surgery. Although such three-dimensional systems are now in frequent use in operating rooms, they can also be used, in different configurations, for industrial inspection. Faro's probes, for example, are used with computer-aided-design data to perform accurate wear inspection of mechanical parts.

Noncontact measurement

Although Faro's surgical probe provides 3-D positional data with an accuracy of 1.5 mm rms, it is suitable only when the patient's head is held fixed in a rigid head clamp. To overcome this limitation, the Viewing Wand system can also be configured with a Polaris optical probe from Northern Digital (Waterloo, Ontario, Canada), which uses infrared LEDs tracked by two CCD cameras to localize anatomical structures and render data in three dimensions.
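With two calibrated cameras, each LED image defines a ray from a camera center, and the LED lies where the two rays meet, or nearly meet. The sketch below uses the midpoint of the shortest segment between the two lines, a simple triangulation method assumed for illustration; Northern Digital's actual algorithm is not described in the article, and the camera positions are hypothetical.

```python
import math

def _dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def triangulate(c1, d1, c2, d2):
    """Triangulate a point seen along two camera rays.

    c1, c2: camera centers; d1, d2: directions toward the LED (any length).
    The rays are treated as infinite lines. Returns (midpoint, gap): the midpoint
    of the shortest segment joining the lines and that segment's length.
    """
    w0 = [c1[i] - c2[i] for i in range(3)]
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b  # zero when the lines are parallel
    if denom <= 1e-12 * a * c:
        raise ValueError("camera rays are (nearly) parallel")
    s = (b * e - c * d) / denom  # parameter of the closest point on line 1
    u = (a * e - b * d) / denom  # parameter of the closest point on line 2
    p1 = [c1[i] + s * d1[i] for i in range(3)]
    p2 = [c2[i] + u * d2[i] for i in range(3)]
    midpoint = [(p1[i] + p2[i]) / 2.0 for i in range(3)]
    return midpoint, math.dist(p1, p2)

# Hypothetical setup: cameras 500 mm apart, LED about 1 m in front of them
cam_left, cam_right = (-250.0, 0.0, 0.0), (250.0, 0.0, 0.0)
led = (30.0, 40.0, 1000.0)
ray_left = [led[i] - cam_left[i] for i in range(3)]
ray_right = [led[i] - cam_right[i] for i in range(3)]
point, gap = triangulate(cam_left, ray_left, cam_right, ray_right)
```

With perfect measurements the two rays intersect and `gap` is zero; in practice, calibration and detection errors leave the rays slightly skew, and a large gap flags an unreliable reading.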

In the future, as the computational power of workstations increases, surgeons will be able to manipulate 3-D anatomical structures in real time. Better yet, the decreasing cost of medical imaging systems may mean that patient data can be collected in real time as the operation proceeds, so that subtle changes in anatomy during surgery can be seen and procedures adjusted accordingly.

FIGURE 1. By placing a hand-held probe inside a patient's brain during surgery, the physician can automatically retrieve cross sections and a three-dimensional reconstruction of the patient's brain.

FIGURE 2. Using the ISG Viewing Wand system during surgical procedures, physicians can examine coronal (top left), sagittal (top right), and axial (bottom left) MRIs of the patient's brain. Reformatted 2-D MRI slice data are displayed as a 3-D object in the lower right quadrant.

FIGURE 3. Merged MRI and PET data allow the surgeon to combine physical anatomical structures with metabolic activity. Surface rendering of segmented 3-D objects (bottom right) shows the location of the cortex (red) and PET activation level (yellow).

FIGURE 4. Stereo pairs of MRI image data are obtained by imaging the brain at 7° increments. They give the surgeon a sense of depth.