Vision captures web events

Event-capture system monitors paper-making process for breaks and defects.

By Kari K. Hilden, Brian Mock, and John Ens

Paper mills operate paper-making machinery that can be several hundred feet long and run paper at more than 1 mile/min. A significant problem arises when events such as paper breaks or major defects occur during production. New paper-machine technology has eliminated some of the causes of these problems, but higher speeds, wider sheets, and more complicated processes still produce events that are unacceptably frequent and expensive. Such events can be caused, for example, by dirt in the moisturizing fluids or by sheet instability resulting from hard-to-see wet wrinkles formed before drying.

Many problems can be solved with traditional problem-solving methods and analysis tools. A large number of paper-line disruptions, however, cannot be solved quickly or correctly by these methods. Such events often occur randomly, and the point along the process line where an event appears may not be where the problem originated.

Vision-based systems such as WebVision, developed by Papertech, continuously monitor in real time all of the critical locations from the beginning to the end of the paper-production process. When a line interruption occurs, digital video is automatically stored on hard disk, so the events leading up to and following the disruption can be fully analyzed. This information helps determine corrective steps and can be shared over both plantwide and corporatewide networks.

EVENT CAPTURE

Although full-motion video is very challenging to capture in a production environment, it often yields the information needed to solve the problem. Traditional VCR-based camera systems have been used since the mid-1980s to record critical areas upstream of and near the event. These systems had several limitations, including poor resolution, inability to locate cameras inside the process, low frame rates, inability to synchronize cameras, and multiple-camera setups that were difficult to operate and maintain. In addition, they could not withstand the harsh paper-machine environment.

In the WebVision event-capture system, cameras are mounted in enclosures along the paper-production process and send video to the event-capture computers over coaxial cable or fiber (see photo). The cameras can be positioned up to 1000 ft from the event-capture computers without significant video-signal loss. The video is digitized, compressed, and written continuously to storage, with the oldest video overwritten as new video arrives. This creates a video buffer whose length is set by how much pre-event and post-event video is required for later review and analysis.
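As a rough sizing illustration, the buffer must hold the pre-event and post-event intervals at the camera's field rate. The 60 fields/s figure is the TM-200NIR rate given later in this article; the 10-s pre-event interval, 5-s post-event interval, and 30-kB average compressed field size are hypothetical values chosen only to show the arithmetic.

```latex
N_{\text{fields}} = (T_{\text{pre}} + T_{\text{post}})\, f
  = (10\ \text{s} + 5\ \text{s}) \times 60\ \text{fields/s} = 900\ \text{fields}

S_{\text{buffer}} \approx N_{\text{fields}}\, s_{\text{field}}
  = 900 \times 30\ \text{kB} = 27\ \text{MB per camera}
```

Because the buffer size scales linearly with both the review window and the field rate, doubling either one doubles the storage each camera needs.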

Buffering stops when the event-capture system receives a dry-contact signal from the paper process-control system, generated when a fault or stoppage occurs on the paper line. The system can also trigger itself when its real-time analysis tools detect an undesired change in the sheet or in a visible machine-process condition. The video is saved to a temporary video library, and the system automatically resets to capture additional events. In this way, the system collects only video that has problem-solving value. The number of stored events is limited only by the size of the video-storage hard drives. All cameras are synchronized, so during review a process disruption seen on one camera can be automatically tracked on all other cameras.
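The trigger-and-reset behavior described above can be sketched as a fixed-length ring buffer that keeps recording for a set number of post-event frames after a trigger, then saves a snapshot and re-arms. This is a minimal, hypothetical illustration: the class name `EventBuffer`, the frame counts, and the choice to count the trigger frame as the first post-event frame are assumptions, not Papertech's implementation.

```python
from collections import deque


class EventBuffer:
    """Hypothetical pre-/post-event buffer for one camera.

    A deque with maxlen drops the oldest frame automatically as each new
    frame arrives. After trigger(), recording continues for post_frames
    frames (the frame arriving at the trigger counts as the first), then a
    copy of the buffer is saved as an event and the buffer re-arms.
    """

    def __init__(self, pre_frames: int, post_frames: int) -> None:
        if pre_frames < 0 or post_frames < 1:
            raise ValueError("need pre_frames >= 0 and post_frames >= 1")
        self.post_frames = post_frames
        self.ring = deque(maxlen=pre_frames + post_frames)
        self.remaining = None  # post-event frames still to record, or None
        self.events = []       # saved events, each a list of frames

    def trigger(self) -> None:
        # E.g. a dry-contact signal or a real-time analysis alarm.
        # A second trigger while an event is being completed is ignored.
        if self.remaining is None:
            self.remaining = self.post_frames

    def push(self, frame) -> None:
        self.ring.append(frame)
        if self.remaining is not None:
            self.remaining -= 1
            if self.remaining == 0:
                # Post-event interval complete: save the event and re-arm.
                self.events.append(list(self.ring))
                self.remaining = None


buf = EventBuffer(pre_frames=3, post_frames=2)
for i in range(10):
    if i == 6:
        buf.trigger()
    buf.push(i)
print(buf.events)  # → [[3, 4, 5, 6, 7]]
```

Frames 3 to 5 are the pre-event video and frames 6 and 7 the post-event video; frames 8 and 9 go into the re-armed buffer for the next event.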

To accomplish the event-capture task, WebVision uses a distributed architecture consisting of three types of processes: multiple capture-module processes; one server process; and several client processes. The capture-module and client processes usually run on separate PCs. The server process usually runs on one of the client PCs. The processes communicate with each other over Ethernet links. For a small portable system with only one or two cameras, all the processes can run on one computer (see Fig. 1).

Up to 32 cameras are connected to capture modules, usually one capture module per camera. The capture modules compress the images and also perform real-time processing; they are controlled by the WebVision server. Any number of WebVision clients can connect to the WebVision server. These client PCs run the WebVision user interface.
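To make the division of labor concrete, here is an in-process sketch of a server that registers capture modules and fans a trigger out to all of them. It is hypothetical throughout: WebVision's processes talk over Ethernet, and the class names, the shared event ID, and the `save_event` method are assumptions made for illustration. Only the 32-camera limit comes from the article.

```python
from dataclasses import dataclass, field

MAX_CAMERAS = 32  # limit stated in the article


@dataclass
class CaptureModule:
    """Stand-in for one capture-module process (normally on its own PC)."""
    camera_id: int
    saved_events: list = field(default_factory=list)

    def save_event(self, event_id: int) -> None:
        # A real module would freeze its rotating buffer into a video file.
        self.saved_events.append(event_id)


class Server:
    """Hypothetical server that controls all capture modules."""

    def __init__(self) -> None:
        self.modules = {}
        self.next_event_id = 1

    def register(self, module: CaptureModule) -> None:
        if len(self.modules) >= MAX_CAMERAS:
            raise RuntimeError("this sketch allows at most 32 cameras")
        self.modules[module.camera_id] = module

    def on_trigger(self) -> int:
        # One event ID shared by every camera, so the per-camera video
        # files can be grouped into a single event for synchronized review.
        event_id = self.next_event_id
        self.next_event_id += 1
        for module in self.modules.values():
            module.save_event(event_id)
        return event_id


server = Server()
for cam in range(4):
    server.register(CaptureModule(cam))
print(server.on_trigger())  # → 1
```

Giving every camera the same event ID is one simple way to tie the separate per-camera files together; the real system's mechanism is not described in the article.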

The proprietary WebVision software is written in Microsoft Visual C++ and Visual Basic. The core software consists of about 100,000 lines of C and C++ code, and the user interface is written in about 50,000 lines of Visual Basic code.

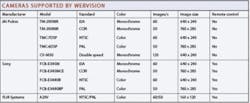

WebVision uses a range of analog cameras from FLIR Systems, JAI Pulnix, and Sony, which can be connected to the capture modules by coaxial cable or by fiber with AM transceivers (see table on p. 34). Cameras such as the JAI Pulnix TM-200NIR are typically configured to provide up to 60 fields/s. The system can also be configured to accept other, nonstandard analog video signals; for example, the JAI Pulnix CV-M30 yields 120 fields/s.
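A quick calculation shows why field rate matters. Taking the article's figure of 1 mile/min as the sheet speed, the sheet advances the following distance between consecutive video fields at the two field rates mentioned above:

```latex
v = \frac{1609.344\ \text{m}}{60\ \text{s}} \approx 26.82\ \text{m/s}

\Delta x = \frac{v}{f}: \qquad
\Delta x_{60} \approx \frac{26.82}{60} \approx 0.447\ \text{m}, \qquad
\Delta x_{120} \approx \frac{26.82}{120} \approx 0.224\ \text{m}
```

Doubling the field rate halves the sheet travel between fields, so a defect appears in more consecutive fields (or in any field at all, if the camera's view along the machine direction is shorter than \(\Delta x\)).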

To view the paper-making line, a camera enclosure and a separate, specially designed lighting enclosure are mounted on a bracket above the line. The protective enclosures allow the camera and lens to withstand continuous operating temperatures above 110°C.

Capture resolution is a critical factor on the paper machine, and Papertech developed specialized hardware to protect the camera assembly and keep the lens clean. In addition, traditional lighting systems cannot withstand the paper-machine environment, stay clean, and deliver enough concentrated light energy to maintain the shutter speeds and depth of field needed to capture the small defects that may cause a break. Papertech therefore developed a compact, focusable, high-frequency (20-kHz) lighting system based on a 150-W xenon bulb. The system uses a sheet of compressed air to cool and clean the enclosure and keep dirt from reaching the lens.
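The need for short shutter times, and therefore concentrated light, follows from motion blur: during an exposure of length \(t_{\text{exp}}\) the sheet moves a distance \(b = v\,t_{\text{exp}}\). Using the same 1 mile/min (about 26.82 m/s) sheet speed and two hypothetical exposure times:

```latex
b = v\, t_{\text{exp}}

t_{\text{exp}} = 100\ \mu\text{s}: \quad b \approx 26.82 \times 10^{-4}\ \text{m} \approx 2.68\ \text{mm}

t_{\text{exp}} = 20\ \mu\text{s}: \quad b \approx 26.82 \times 2 \times 10^{-5}\ \text{m} \approx 0.54\ \text{mm}
```

Blur shrinks in proportion to exposure time, but so does the light collected per field, which is why a bright, focused source is needed to image millimeter-scale defects at full machine speed.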

THREADED LOGIC

For each capture module, WebVision supports several frame grabbers from Integral Technologies, including the FlashBus MV, Spectrim, MX-132, and MX-332. The interface to each frame grabber is handled by a separate DLL, so adapting to a new frame grabber does not require any changes to the main WebVision code.
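The one-DLL-per-frame-grabber design is a plug-in pattern: the capture code depends only on a common interface, and each board gets its own adapter. The Python sketch below shows the same idea with an abstract base class and a registry. It is an analogy only; the `FrameGrabber` interface, its `open`/`grab` methods, and the `"simulated"` driver are hypothetical and do not reflect the actual DLL interface.

```python
from abc import ABC, abstractmethod


class FrameGrabber(ABC):
    """Hypothetical interface that every frame-grabber adapter implements."""

    @abstractmethod
    def open(self, channel: int) -> None: ...

    @abstractmethod
    def grab(self) -> bytes: ...


_REGISTRY = {}


def register(name):
    """Class decorator recording an adapter under a configuration name."""
    def wrap(cls):
        _REGISTRY[name] = cls
        return cls
    return wrap


def load_grabber(name: str) -> FrameGrabber:
    # Analogous to loading the driver DLL named in a configuration file:
    # the calling code never refers to a concrete adapter class.
    return _REGISTRY[name]()


@register("simulated")
class SimulatedGrabber(FrameGrabber):
    """Test adapter that returns a blank 4 x 4, 8-bit image."""

    def open(self, channel: int) -> None:
        self.channel = channel

    def grab(self) -> bytes:
        return bytes(16)


grabber = load_grabber("simulated")
grabber.open(0)
print(len(grabber.grab()))  # → 16
```

Supporting a new board then means adding one new adapter, leaving the main capture code untouched.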

The frame-grabber driver continuously writes the images to a rotating buffer of uncompressed images. The Channel Manager, which runs on a high-priority thread, monitors this rotating buffer and dispenses image pointers to three other functions, each running on its own thread: compression, real-time analysis, and formation analysis (see Fig. 2).
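The fan-out pattern can be sketched with Python threads and queues. Object references stand in for image pointers: each frame is placed in a rotating buffer once and every consumer thread receives a reference to it, with no pixel copying. This is a simplified, hypothetical model; the function names are invented, Python's `threading` module cannot set thread priorities, and the unbounded queues here would need limits in a real system.

```python
import queue
import threading

STOP = object()  # sentinel telling a consumer thread to exit


def consumer(name, inbox, results):
    """Generic worker (compression, real-time analysis, or formation)."""
    while True:
        frame = inbox.get()
        if frame is STOP:
            break
        # Placeholder work: record which frame this thread received.
        results[name].append(frame["seq"])


def channel_manager(frames, inboxes, ring_size=4):
    """Place each frame in a rotating buffer and hand a reference to every
    consumer. A consumer's reference keeps its frame alive even after the
    ring slot is reused, so slow consumers never see overwritten data."""
    ring = [None] * ring_size
    for seq, pixels in enumerate(frames):
        slot = seq % ring_size
        ring[slot] = {"seq": seq, "pixels": pixels}
        for inbox in inboxes.values():
            inbox.put(ring[slot])
    for inbox in inboxes.values():
        inbox.put(STOP)


names = ["compression", "analysis", "formation"]
inboxes = {n: queue.Queue() for n in names}
results = {n: [] for n in names}
threads = [threading.Thread(target=consumer, args=(n, inboxes[n], results))
           for n in names]
for t in threads:
    t.start()
channel_manager([bytes(16)] * 10, inboxes)
for t in threads:
    t.join()
print(results["compression"])  # → [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
```

Each consumer gets every frame in order, and a slow consumer (for example, compression) does not block the others because each one has its own queue.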

The images are compressed individually using the Intel JPEG compression library, which is optimized for the Streaming SIMD Extensions (SSE) instruction set of the Pentium 4 processor. The compressed images are written both to a rotating storage buffer on the hard drive and to a rotating storage buffer in RAM. The rotating storage buffer on the hard drive can be very large.

When a video download is requested, the rotating buffer on the hard drive is simply renamed as a video file, and a new rotating buffer is created on the hard drive. A video download is therefore almost instantaneous. The rotating storage buffer in RAM is used for storing partial videos. When the server requests a partial video download, a separate thread is created that writes compressed images from RAM to a new video file on the hard drive. Meanwhile, the compression thread keeps running so that no images are lost.
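Renaming instead of copying is what makes the download nearly instantaneous: on the same file system, a rename changes only directory metadata, whatever the file size. The sketch below shows the idea with a hypothetical `DiskBuffer` class and a length-prefixed frame layout (both assumptions). For brevity it only appends; a real rotating buffer would also wrap around and overwrite the oldest frames once it reached its size limit.

```python
import os
import tempfile


class DiskBuffer:
    """Hypothetical on-disk frame buffer for one camera (no wrap-around)."""

    def __init__(self, directory: str) -> None:
        self.directory = directory
        self.path = os.path.join(directory, "ring.buf")
        self._fh = open(self.path, "ab")

    def write_frame(self, jpeg: bytes) -> None:
        # 4-byte little-endian length prefix so frames can be split later.
        self._fh.write(len(jpeg).to_bytes(4, "little") + jpeg)

    def download(self, video_name: str) -> str:
        """Turn the current buffer into a video file by renaming it."""
        self._fh.close()                      # flush before renaming
        target = os.path.join(self.directory, video_name)
        os.replace(self.path, target)         # rename: no data is copied
        self._fh = open(self.path, "ab")      # fresh, empty buffer
        return target

    def close(self) -> None:
        self._fh.close()


with tempfile.TemporaryDirectory() as d:
    buf = DiskBuffer(d)
    buf.write_frame(b"\xff\xd8fake-jpeg")
    video = buf.download("event_0001_cam01.vid")
    print(os.path.getsize(video))  # → 15 (4-byte prefix + 11-byte frame)
    buf.close()
```

The partial-video path in the text differs: it copies frames out of RAM on a separate thread, because the on-disk buffer has not been closed at that point.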

The real-time analysis runs on a separate thread that continuously requests image pointers from the Channel Manager. The algorithm is designed to find changes in the camera images: each image is compared with a reference image to determine whether any changes have occurred. Both the sensitivity and the minimum size of a reportable change can be adjusted.
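A generic reference-image change detector with the two adjustable parameters named above might look like the sketch below: `sensitivity` is the gray-level difference that counts as a change, and `min_size` is the smallest connected changed region worth reporting. The optional `mask` mimics the region-of-interest mask listed among the file's ancillary data. This is a standard technique shown for illustration, not the proprietary WebVision algorithm, and pure Python is used for clarity rather than speed.

```python
def detect_change(image, reference, sensitivity, min_size, mask=None):
    """Hypothetical reference-image change detector.

    image, reference: equal-sized 2-D lists of 8-bit gray levels.
    sensitivity: minimum absolute gray-level difference counted as change.
    min_size: minimum number of 4-connected changed pixels to report.
    mask: optional 2-D list of bools; pixels marked False are ignored.
    Returns the size of the largest changed region if it is at least
    min_size, otherwise 0.
    """
    rows, cols = len(image), len(image[0])
    changed = [[abs(image[r][c] - reference[r][c]) >= sensitivity
                and (mask is None or mask[r][c])
                for c in range(cols)] for r in range(rows)]
    seen = [[False] * cols for _ in range(rows)]
    largest = 0
    for r0 in range(rows):
        for c0 in range(cols):
            if not changed[r0][c0] or seen[r0][c0]:
                continue
            # Flood-fill one 4-connected region of changed pixels.
            stack, size = [(r0, c0)], 0
            seen[r0][c0] = True
            while stack:
                r, c = stack.pop()
                size += 1
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if (0 <= nr < rows and 0 <= nc < cols
                            and changed[nr][nc] and not seen[nr][nc]):
                        seen[nr][nc] = True
                        stack.append((nr, nc))
            largest = max(largest, size)
    return largest if largest >= min_size else 0


ref = [[100] * 5 for _ in range(5)]
img = [row[:] for row in ref]
for r, c in ((1, 1), (1, 2), (2, 1), (2, 2), (4, 4)):
    img[r][c] = 200  # a 2 x 2 "hole" plus one isolated noisy pixel
print(detect_change(img, ref, sensitivity=50, min_size=3))  # → 4
```

Requiring a minimum region size is what keeps isolated noisy pixels, like the one at the corner here, from triggering an alarm.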

When a video is downloaded, the real-time analysis information is saved in the video as gray-scale information. When the video is opened, a one-dimensional graph is produced that depicts changes along the entire length of the video file.
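One simple way to produce such a one-dimensional graph is to reduce each frame to a single change score and then look for frames where the score jumps. In the sketch below, the score is the mean absolute gray-level difference from the reference image; that metric, the threshold, and the function names are assumptions, since the article does not say how WebVision encodes its gray-scale information.

```python
def change_profile(frames, reference):
    """Mean absolute gray-level difference of each frame from the reference.

    Returns one number per frame: a 1-D graph along the length of the video.
    """
    profile = []
    for frame in frames:
        total = count = 0
        for row_f, row_r in zip(frame, reference):
            for a, b in zip(row_f, row_r):
                total += abs(a - b)
                count += 1
        profile.append(total / count)
    return profile


def frames_above(profile, threshold):
    """Indices of frames whose change score exceeds the threshold."""
    return [i for i, score in enumerate(profile) if score > threshold]


reference = [[10, 10], [10, 10]]
frames = [reference, [[10, 10], [10, 50]], reference]
profile = change_profile(frames, reference)
print(profile)                   # → [0.0, 10.0, 0.0]
print(frames_above(profile, 5))  # → [1]
```

A reviewer can then jump straight to the flagged frames instead of scanning the whole video.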

All paper has natural variations in density caused by aggregates of fibers that accumulate into structures called “flocs.” These variations can be seen by looking at the paper with a light source placed behind it. The Formation Number measures the amount of density variation of the paper. A larger Formation Number means that the paper has a larger density variation. The formation analysis, licensed from Leading Edge Technologies, runs as a separate thread that continuously requests image pointers from the Channel Manager.
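The formation algorithm licensed from Leading Edge Technologies is not described in the article. As a rough, clearly substituted illustration of "amount of density variation," the sketch below computes the coefficient of variation (standard deviation divided by mean) of gray levels in a back-lit image, so a larger value means larger variation. It is a generic stand-in, not the licensed Formation Number. Practical formation measures usually also consider the spatial scale of the flocs, which this single statistic ignores.

```python
import statistics


def formation_index(pixels):
    """Coefficient of variation of transmitted-light gray levels.

    NOT the licensed Formation Number: a generic stand-in in which a larger
    value means larger density variation in a back-lit image.
    """
    values = [v for row in pixels for v in row]
    mean = statistics.fmean(values)
    if mean == 0:
        raise ValueError("image is completely dark")
    return statistics.pstdev(values) / mean


uniform = [[100, 100], [100, 100]]
flocky = [[90, 110], [110, 90]]
print(formation_index(uniform))  # → 0.0
print(formation_index(flocky))   # → 0.1
```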

WebVision videos are stored in a proprietary file format designed by Papertech. Each camera produces its own video file, and an event consists of the video files from several cameras. In addition to the compressed JPEG images, the file format contains ancillary data such as

• Data about when and where the video was recorded

• Gray-scale information, which gives a visual graph of changes in the video

• Region-of-interest mask

• Reference images from the real-time analysis

• Machine speed information for synchronization of different camera views

• User-added annotations for any image in the video file

The file format is based on tagged fields, so it can easily be expanded when more data must be stored in the video file. WebVision videos are initially stored in rotating storage on the hard drives of the capture modules; when a drive is full, the oldest videos are automatically deleted. Videos can also be moved to permanent storage on the host PC.
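Tagged fields are commonly implemented as tag-length-value (TLV) records, and the sketch below shows why that layout is easy to extend: a reader can skip any tag it does not recognize, because the length tells it how far to jump. The tag codes and the 2-byte tag / 4-byte length header are hypothetical; the actual WebVision layout is proprietary.

```python
import struct

# Hypothetical tag codes; the real WebVision tags are not published.
TAG_TIMESTAMP = 1
TAG_CAMERA_LOCATION = 2
TAG_MACHINE_SPEED = 3
TAG_JPEG_FRAME = 4

_HEADER = struct.Struct("<HI")  # little-endian: 2-byte tag, 4-byte length


def write_fields(fields):
    """Serialize (tag, payload_bytes) pairs into one byte string."""
    return b"".join(_HEADER.pack(tag, len(payload)) + payload
                    for tag, payload in fields)


def read_fields(data, known_tags=None):
    """Parse tagged fields, skipping tags not in known_tags.

    Skipping unknown tags lets an older reader open a newer file that
    carries extra kinds of data.
    """
    offset, fields = 0, []
    while offset < len(data):
        tag, length = _HEADER.unpack_from(data, offset)
        offset += _HEADER.size
        payload = data[offset:offset + length]
        if len(payload) != length:
            raise ValueError("truncated field")
        offset += length
        if known_tags is None or tag in known_tags:
            fields.append((tag, payload))
    return fields


blob = write_fields([
    (TAG_MACHINE_SPEED, struct.pack("<f", 1500.0)),
    (99, b"field added by a newer writer"),
    (TAG_JPEG_FRAME, b"\xff\xd8"),
])
print(read_fields(blob, known_tags={TAG_MACHINE_SPEED, TAG_JPEG_FRAME}))
```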

Because it analyzes the changes that take place from one image to the next, the system can rapidly locate a hole or other fault that has passed the cameras (see Fig. 3). The system can also scan all of the camera locations to show, at each one, the part of the sheet that later broke farther down the machine. This allows the operator to examine, for example, what the sheet looked like in the press section and find holes that resulted in the break in the dry end (see Fig. 4).
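Tracing a break back to upstream cameras relies on the machine-speed data stored in each video file. If the sheet moves at the machine speed, the delay between two cameras is their separation divided by that speed, which can be converted into a field offset. The sketch below assumes a single uniform speed and ignores draw (speed differences between machine sections) and sheet stretch; the function names and the 100-m, 1500-m/min example are hypothetical.

```python
def field_offset(distance_m, speed_m_per_min, fields_per_s):
    """Video fields between a point on the sheet passing an upstream camera
    and reaching a downstream camera distance_m farther along.

    Assumes uniform sheet speed (no draw or stretch between sections).
    """
    seconds = distance_m / (speed_m_per_min / 60.0)
    return round(seconds * fields_per_s)


def upstream_field(break_field, distance_m, speed_m_per_min, fields_per_s):
    """Field index at the upstream camera showing the sheet that later broke."""
    return break_field - field_offset(distance_m, speed_m_per_min, fields_per_s)


# Hypothetical: cameras 100 m apart, 1500 m/min (25 m/s), 60 fields/s.
print(field_offset(100, 1500, 60))          # → 240 (a 4-s delay)
print(upstream_field(1000, 100, 1500, 60))  # → 760
```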

Real-time analysis tools were developed to address two critical issues in paper manufacturing: defects that do not cause paper breaks but remain in the end product, and conditions on the paper machine that develop over time and will cause a paper break if left uncorrected. The real-time analysis tools identify sheet defects and visible machine conditions so that corrections can be made before a sheet break occurs. In addition, defects can be marked and removed before the paper is shipped to the customer.

The analysis tools include multilevel, image-enhanced zoom and a region-of-interest feature that lets the operator analyze any specific camera area for changes, both in real time and after the event. Minimizing all sources of paper-line disruptions increases operating efficiency and production rates.

Event-capture systems can be applied to randomly occurring process disruptions in any automated industrial process. Cameras are placed along the process, or multiple views of the same process are used, so that only the process disruption is captured. These systems are portable, require minimal setup time, and have an intuitive user interface. Triggered files can be reviewed at an operator review station, which might combine live video monitors with a review monitor, keyboard, and mouse. Depending on the network architecture, the files can be viewed and the system controlled remotely from any location.

KARI K. HILDEN is president and BRIAN MOCK is product manager at Papertech, North Vancouver, BC, Canada; www.papertech.ca. JOHN ENS is principal engineer of Intense Technologies, Richmond, BC, Canada; www.intense.ca.