Software guides robotic systems

Sophisticated algorithms add intelligence to automated manufacturing.

By R. Winn Hardin, Contributing Editor

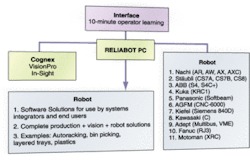

Until recently, tasks such as picking one part out of 20 similar parts, gripping the outer ring and inner circle of a gear and lifting it from a rack, or picking up one microchip from a pile were beyond robots unless designers included upstream fixtures to orient parts in a specific order or sorters to separate different models. Now, machine-vision systems are giving ‘sight’ to robots and flexibility to production lines. Where hard-fixture tools once ruled, vision-guided robots are making inroads in industries from electronics to food processing.

Small-part product verification for robot handling is found in electronic contract manufacturing, automotive subassembly manufacturing and other small-scale assemblies, tool-and-die operations, and processing organic materials such as food and lumber. To the integrator, most small- to medium-size assembly or material-handling operations only require a vision system to provide two-dimensional (2-D) offset to the robot. An offset is the x, y (and possibly z, or height) distance away from the robot’s zero point, determined during calibration and re-established periodically so that the vision system can correct for thermal expansion of the robot, wear of the end-of-arm tooling, and similar equipment degradation. By using a static calibration plate or reference point, the vision system can correct for drift in the robot’s ability to consistently return to a fixed position.
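The offset arithmetic can be sketched as follows. This is a minimal illustration, assuming a simple affine mapping between image pixels and robot x, y coordinates fitted from three non-collinear points on a calibration plate; the function names and example numbers are hypothetical, and commercial systems use their own (often nonlinear) calibration models. Re-running `fit_affine` on the static plate corresponds to the periodic re-calibration that absorbs thermal drift and tooling wear.

```python
def _det3(a):
    """Determinant of a 3x3 matrix given as nested lists."""
    return (a[0][0] * (a[1][1] * a[2][2] - a[1][2] * a[2][1])
            - a[0][1] * (a[1][0] * a[2][2] - a[1][2] * a[2][0])
            + a[0][2] * (a[1][0] * a[2][1] - a[1][1] * a[2][0]))


def _solve3(m, v):
    """Solve m @ x = v for a 3x3 system by Cramer's rule."""
    d = _det3(m)
    if abs(d) < 1e-12:
        raise ValueError("calibration points are collinear")
    result = []
    for col in range(3):
        mc = [row[:] for row in m]
        for r in range(3):
            mc[r][col] = v[r]
        result.append(_det3(mc) / d)
    return result


def fit_affine(pixel_pts, robot_pts):
    """Hypothetical calibration: fit robot = A @ pixel + t from three plate points."""
    m = [[u, v, 1.0] for u, v in pixel_pts]
    ax = _solve3(m, [x for x, _ in robot_pts])  # a11, a12, tx
    ay = _solve3(m, [y for _, y in robot_pts])  # a21, a22, ty
    return ax, ay


def pixel_to_robot(calib, u, v):
    """Map an image location (u, v) in pixels to robot x, y in millimeters."""
    ax, ay = calib
    return (ax[0] * u + ax[1] * v + ax[2], ay[0] * u + ay[1] * v + ay[2])


def offset(calib, part_px, nominal_xy):
    """2-D offset of the found part from the robot's taught (nominal) position."""
    x, y = pixel_to_robot(calib, *part_px)
    return (x - nominal_xy[0], y - nominal_xy[1])


# Example: 0.1 mm per pixel, image origin at robot (100, 200) mm.
calib = fit_affine([(0, 0), (100, 0), (0, 100)],
                   [(100.0, 200.0), (110.0, 200.0), (100.0, 210.0)])
print(offset(calib, (50, 30), (104.0, 203.0)))
```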

In these 2-D robot-guidance applications, small to medium parts typically enter the robot workcell lying flat on a conveyor. The vision sensor and lighting are both located above the part if the vision system uses reflected light, or on opposite sides of the part if the inspection uses transmitted light. The vision system acquires an image of the part on the conveyor and guides a robot arm, such as an Adept Cobra 600 SCARA robot or Epson EZ linear modules, to a point directly above the part to begin the operation.

For example, Serrature Meroni, a manufacturer of door and window accessories, recently used a Cognex In-Sight 1000 smart vision sensor to guide an Adept SCARA robot to insert a rotor into a door-lock mechanism. The machine-vision sensor is equipped with a 25-mm lens and automatically acquires the image of the lock rotor using a single input trigger and two outputs (see Fig. 1).

LED ring lighting provides soft, even illumination from all directions, emphasizing surface features on nonreflective parts and de-emphasizing contrast between part and background. Once the sensor captures the image, it analyzes it to determine the lock rotor’s orientation with respect to a reference angle. The In-Sight sensor sends the result to the Adept robot over an RS-232 serial line, and the robot mounts the rotor inside the lock with an angular measurement precision of 0.1°.
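One generic way to measure a part’s orientation against a reference angle is from the second-order moments of the segmented part. The sketch below assumes the part has already been thresholded into a list of pixel coordinates; it is not the In-Sight tool actually used at Serrature Meroni, and the `ROT,...` string sent over the serial line is a hypothetical message format. Moments give the principal axis only modulo 180°, so a rotationally symmetric part would need a feature-based method instead.

```python
import math


def blob_orientation_deg(pixels):
    """Principal-axis angle (degrees) of a segmented blob from central moments."""
    if not pixels:
        raise ValueError("no pixels in blob")
    n = len(pixels)
    cx = sum(x for x, _ in pixels) / n
    cy = sum(y for _, y in pixels) / n
    mu20 = sum((x - cx) ** 2 for x, _ in pixels)
    mu02 = sum((y - cy) ** 2 for _, y in pixels)
    mu11 = sum((x - cx) * (y - cy) for x, y in pixels)
    return math.degrees(0.5 * math.atan2(2.0 * mu11, mu20 - mu02))


def correction_deg(measured, reference):
    """Rotation relative to the reference; the axis is defined modulo 180 degrees,
    so the result is wrapped into [-90, 90)."""
    return (measured - reference + 90.0) % 180.0 - 90.0


def format_result(angle_deg):
    """Hypothetical ASCII message for the robot controller, 0.1-degree resolution."""
    return f"ROT,{angle_deg:+.1f}\r\n"


# A part whose pixels lie along a 45-degree diagonal, taught at 30 degrees.
part = [(i, i) for i in range(10)]
angle = blob_orientation_deg(part)
print(format_result(correction_deg(angle, 30.0)))
```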

Normalized correlation and geometric pattern-matching are both mainstream search algorithms: normalized correlation compares a stored template of pixels to the image until it finds a fit, while a geometric pattern search represents the stored pattern in groupings of features or vectors rather than as a pixel map. “Normalized correlation compensates for a set threshold in a gray-scale image,” explains Mark Sippel, principal product marketing manager for Cognex In-Sight products. “It wants to bring the average pixel intensity to the level it was during training.” If the changes in light are not uniform, however, Sippel suggests using a geometric pattern search. “Optimizing a geometric pattern search tends to be application-specific, but by going from an area model pattern match to an edge pattern match, you can increase the robustness of the system and take away a little instability [at the cost of additional processing power or throughput if processing capability remains constant].”
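A brute-force normalized-correlation search can be sketched in a few lines. Subtracting each window’s mean and dividing by its standard deviation makes the score insensitive to a uniform gain or offset in brightness, which is the compensation Sippel describes; a non-uniform lighting change breaks that assumption. The function names are illustrative, and production tools add image pyramids and subpixel refinement.

```python
import math


def ncc(patch, template):
    """Normalized correlation score in [-1, 1] between two equal-size 2-D lists."""
    a = [p for row in patch for p in row]
    b = [t for row in template for t in row]
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    num = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    da = math.sqrt(sum((x - ma) ** 2 for x in a))
    db = math.sqrt(sum((y - mb) ** 2 for y in b))
    if da == 0 or db == 0:
        return 0.0  # a flat window carries no pattern information
    return num / (da * db)


def search(image, template):
    """Slide the template over every position; return (best_score, (row, col))."""
    th, tw = len(template), len(template[0])
    best = (-2.0, None)
    for r in range(len(image) - th + 1):
        for c in range(len(image[0]) - tw + 1):
            patch = [row[c:c + tw] for row in image[r:r + th]]
            score = ncc(patch, template)
            if score > best[0]:
                best = (score, (r, c))
    return best


template = [[0, 10],
            [10, 0]]
# The same pattern appears at (1, 2), but twice as bright and offset by +50.
image = [[5, 5, 5, 5],
         [5, 5, 50, 70],
         [5, 5, 70, 50],
         [5, 5, 5, 5]]
print(search(image, template))
```

Because the match at (1, 2) is a scaled and shifted copy of the template, it still scores a perfect 1.0.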

WHEN 2-D ISN’T ENOUGH

There is an emerging trend toward using geometric pattern-matching to determine an object’s 3-D coordinates with a full six degrees of freedom (translation along three axes plus rotation about each axis). Promoted by companies such as Braintech and ISRA Vision Systems, these single-camera 3-D systems use pattern-search algorithms to identify features and proprietary algorithms to deduce the position of the object based on the distortion of those features and the relationship between them.
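The principle of deducing position from the relationship between features can be illustrated with a pinhole-camera model. This minimal sketch assumes a calibrated camera (focal length `f_px` and principal point `cx`, `cy` in pixels, all hypothetical values) and two features a known distance apart lying roughly parallel to the image plane; it recovers x, y, z and in-plane rotation only. Full six-degree-of-freedom pose needs more features and the vendors’ own proprietary algorithms.

```python
import math


def estimate_position(p1, p2, spacing_mm, f_px, cx, cy):
    """Estimate (x, y, z, roll_deg) of a feature pair from a single image.

    p1, p2: pixel coordinates of two features whose true spacing is spacing_mm.
    Pinhole model: apparent spacing shrinks in proportion to distance, so
    z = f * D / d. Assumes the feature pair is roughly parallel to the image plane.
    """
    d_px = math.dist(p1, p2)
    if d_px == 0:
        raise ValueError("features coincide in the image")
    z = f_px * spacing_mm / d_px
    u = (p1[0] + p2[0]) / 2.0  # midpoint between the features
    v = (p1[1] + p2[1]) / 2.0
    x = (u - cx) * z / f_px  # back-project the midpoint at depth z
    y = (v - cy) * z / f_px
    roll = math.degrees(math.atan2(p2[1] - p1[1], p2[0] - p1[0]))
    return x, y, z, roll


# Two holes 50 mm apart appear 100 pixels apart with f = 1000 pixels.
print(estimate_position((600, 400), (700, 400), 50.0, 1000.0, 640.0, 480.0))
```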

One advantage of this approach is the ability to mount the camera directly on the robot arm, which allows the vision system to get much closer to the work surface, or to view multiple locations on it, and to provide high-spatial-resolution robot coordinates. Mounting multiple cameras for stereovision, or cameras and laser light sources, on the robotic arm adds to the mass of the arm and takes away from the robot’s maximum payload, which can slow operations even when the payload is within the robot’s prescribed capability.

Although single-camera geometric pattern-matching-based 3-D robotic guidance can work with parts of all sizes, highly reflective parts can cause trouble, regardless of their size or whether the application needs 2- or 3-D offset information for robot guidance. Normalized correlation and geometric pattern searches need images with clearly defined visual features to make their calculations, and reflective surfaces often do not provide them. For such parts, the vision solution may require structured light, such as galvanometer-based laser line or grid scanners (see Vision Systems Design, April 2004, p. 23). Laser light is highly collimated, meaning that it does not spread out in all directions after leaving the source.

Using the Matrox Geometric Model Finder algorithm and image-processing boards, Sensor Control, along with German partner Severt Robotertechnik, created a vision-guided robotic sheet-metal cutter for German manufacturer Sefert Maschinenbau. Multiple cameras fixed to the ceiling look down on sheets as large as 1200 × 9500 mm. These cameras establish the rough position of the sheet to within 2 mm and provide coarse guidance to the robot for the next step.

The next step uses a removable end-of-arm tool, mounted on an articulated robot arm, that carries a second camera and a laser light source. This vision sensor scans critical holes and other features on the sheet metal with submillimeter accuracy and saves the data to guide the robot after the camera is removed. Although the camera is removed from the end effector automatically before cutting to protect the camera head, the enclosure has the added protection of its own air supply, which maintains positive pressure inside and keeps contaminants from the nearby cutting operation from fouling the camera head (see Fig. 2).

ORGANIC IMAGING

In food or lumber processing, where each uniquely shaped product is scanned before sorting, cutting, or further processing down the production line, the laser and camera are usually mounted above the product, within 15° of vertical, and pointed down toward the product and conveyor; placing the camera more than 15° off vertical introduces unwanted optical distortion into the image-processing system.

In organics processing, the conveyor surface represents a height of zero. As the product moves through the laser line projected on the conveyor’s surface, the line appears to the vision system camera to bulge, or even break in the case of an object with a steep pitch. By acquiring images of the laser line as the product passes through, the vision system deduces the height at each point along the line from the line’s displacement from its original position.
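Finding the laser line in each image column and measuring its shift from the baseline row can be sketched as follows. It assumes the laser runs horizontally across the image and that the line moves toward row 0 as height increases (the sign depends on the actual geometry), and it uses a hypothetical intensity threshold, `min_intensity`, to flag columns where the line is broken or missing.

```python
def line_displacement(image, baseline_row, min_intensity=50):
    """Per-column laser-line displacement in whole pixels.

    image: 2-D list of intensities (rows x columns).
    baseline_row: row where the line falls on the bare conveyor (height zero).
    Returns one entry per column: displacement in pixels, or None where the
    line is too dim to find (occlusion or a steep-pitched surface).
    """
    rows, cols = len(image), len(image[0])
    result = []
    for c in range(cols):
        column = [image[r][c] for r in range(rows)]
        peak = max(range(rows), key=lambda r: column[r])  # brightest row
        if column[peak] < min_intensity:
            result.append(None)
        else:
            result.append(baseline_row - peak)
    return result


frame = [[0, 0, 0, 0],
         [0, 0, 0, 200],
         [0, 220, 0, 0],
         [0, 0, 0, 0],
         [210, 0, 10, 0]]
print(line_displacement(frame, baseline_row=4))  # conveyor, raised, broken, higher
```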

To convert this displacement into height, the vision system is first calibrated using a reference block of known dimensions placed under the scanning laser line, establishing the relationship between laser-line displacement and the block’s known height. As the product moves along the conveyor, the vision system determines z, or height, from the laser line’s displacement from its baseline, and y (width across the conveyor) from the extent of the displaced portion of the line; x (position along the object’s length, in the direction of travel) is best provided by an encoder attached to the conveyor and fed into the image processor through standard analog or digital I/O.
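Assuming a linear relationship between displacement and height (a reasonable approximation over a small height range; real triangulation geometry is nonlinear), the reference-block calibration and the encoder-based x coordinate can be sketched as follows. The scale factors `mm_per_px_y` and `mm_per_count` are hypothetical and would come from the actual optics and encoder.

```python
def calibrate_z(ref_height_mm, ref_displacement_px):
    """Height per pixel of line displacement, from a reference block of known height."""
    if ref_displacement_px == 0:
        raise ValueError("reference block produced no displacement")
    return ref_height_mm / ref_displacement_px


def profile_to_points(displacements, mm_per_px_z, mm_per_px_y,
                      encoder_counts, mm_per_count):
    """Turn one laser profile into (x, y, z) points in millimeters.

    x: along the conveyor, from the encoder count when the image was captured.
    y: across the conveyor, from the image column index.
    z: height, from line displacement times the calibrated scale.
    Columns where no line was found (None) are skipped.
    """
    x = encoder_counts * mm_per_count
    points = []
    for col, d in enumerate(displacements):
        if d is None:
            continue
        points.append((x, col * mm_per_px_y, d * mm_per_px_z))
    return points


# A 20-mm reference block shifts the line by 40 pixels -> 0.5 mm per pixel.
scale_z = calibrate_z(20.0, 40)
print(profile_to_points([0, 2, None, 3], scale_z, 0.25, 1000, 0.1))
```

Calling `profile_to_points` once per encoder-triggered image builds up the product’s height map one profile at a time.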

According to Matrox’s Simon, integrators have several algorithm choices to identify the laser displacement and, therefore, height information. “They put a filter on the camera to sort out everything by the color of the laser light, then people typically want to find the center of the laser line,” he said. Matrox offers a high-speed ASIC on many of its image-processing boards to perform kernel convolutions/edge detection at speeds up to 2000 frames/s for 1k × 1k images. The kernel’s job is to identify the bright pixels that represent the center of the laser line in the image with subpixel accuracy, that is, finer than the 3- to 7-µm pixel size of standard CCD cameras.
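Simon does not specify the kernel Matrox uses, so the sketch below substitutes a common generic method, a center-of-gravity (intensity-weighted centroid) over a small window around the brightest pixel, to show how a subpixel line center is obtained from one image column. The window size `half_window` is an assumption.

```python
def subpixel_peak(column, half_window=2):
    """Subpixel row of the laser-line center in one image column.

    Takes the brightest pixel, then returns the intensity-weighted centroid of
    the pixels within half_window rows of it. Returns None if the window is dark.
    """
    peak = max(range(len(column)), key=lambda i: column[i])
    lo = max(0, peak - half_window)
    hi = min(len(column), peak + half_window + 1)
    weights = column[lo:hi]
    total = sum(weights)
    if total == 0:
        return None
    return sum(i * w for i, w in zip(range(lo, hi), weights)) / total


# A line whose true center falls halfway between rows 3 and 4.
print(subpixel_peak([0, 0, 10, 30, 30, 10, 0]))
```

In practice the filtered image is often background-subtracted or thresholded first, so that ambient light in the window does not pull the centroid off center.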

Although most geometric-pattern-matching algorithms are not designed to work on 3-D image maps, geometric pattern-matching can be used on a 3-D image if the height information is converted to pixel intensity and then the geometric search algorithm is applied to the resulting 2-D image for feature extraction. Simon suggests that this method can be used for product verification of a single product type coming down a mixed production line, which is often critical for robot guidance. Putting the product in an enclosure and allowing a small slit of white light to project onto the product could accomplish the same general setup, but white light is less collimated than laser light and so does not allow the same spatial precision, Simon says.
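Converting a height map to gray-scale intensity so that a 2-D pattern-matching tool can run on it might look like this. The height range `z_min` to `z_max` and the choice to render missing points as black (0) are assumptions for illustration.

```python
def height_to_gray(height_map, z_min, z_max):
    """Map heights (mm) to 8-bit intensities: z_min -> 0, z_max -> 255.

    Heights outside the range are clipped; missing points (None) become 0.
    """
    span = z_max - z_min
    if span <= 0:
        raise ValueError("z_max must exceed z_min")
    gray = []
    for row in height_map:
        out_row = []
        for z in row:
            if z is None:
                out_row.append(0)
                continue
            t = (min(max(z, z_min), z_max) - z_min) / span
            out_row.append(round(t * 255))
        gray.append(out_row)
    return gray


print(height_to_gray([[0.0, 2.0, 10.0, None, 12.0]], 0.0, 10.0))
```

The resulting 2-D image can then be handed to the same feature-extraction and pattern-search tools used on ordinary camera images.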

STEREO/LARGE-PART GUIDANCE

Despite the success of structured-light approaches, Adil Shafi, president of SHAFI Inc., believes “there’s a movement away from structured light. It’s still used when contours and reflections are a problem, but vision algorithms such as geometric pattern-matching have developed to a point where there’s not as much need for structured light.”

In addition to the single-camera 3-D vision system, stereovision using two cameras remains a widely used method for guiding robots, especially in large-assembly operations, where the items are typically too large to scan by laser at acceptable throughputs. In these cases, integrators typically mount a two-camera stereovision system at a fixed position near the robot with an unobstructed view of the work area.

“You’re far more likely to have problems with a moving camera than with a stationary camera,” explains Christopher Byrne, branch manager of CEC Controls. “It’s not just that you have to use more-expensive cable (to avoid wear during flex), but you have to do calibration routines more frequently.”

With stereovision, integrators apply geometric pattern-matching, edge-detection kernels, or other algorithms to locate the same feature in images from two cameras separated by a known distance. Stereovision systems provide 3-D robotic guidance with slightly less spatial resolution than laser scanners, but at higher throughputs. When subassemblies include smooth parts, such as car doors, or an integrator wants to improve the robustness of the stereovision system’s 3-D guidance by providing more data for analysis, integrators can use gratings in front of the lighting system to create gray-scale contours or shadowgraphs on the surface. This gives the vision system more data points, or visual features, to calculate a 3-D location.
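For two parallel, rectified cameras, the triangulation step reduces to depth from disparity, Z = f·B/d. The sketch below assumes focal length `f_px` in pixels, baseline `baseline_mm`, and principal point `cx`, `cy` (all hypothetical values). The second function shows why stereo resolution degrades with range: a one-pixel disparity error corresponds to a depth step of roughly Z²/(f·B).

```python
def triangulate(u_left, u_right, v, f_px, baseline_mm, cx, cy):
    """3-D point (mm, left-camera frame) from a feature matched in a rectified pair.

    u_left, u_right: column of the same feature in the left and right images.
    v: its row (identical in both images after rectification).
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the rig")
    z = f_px * baseline_mm / disparity
    x = (u_left - cx) * z / f_px
    y = (v - cy) * z / f_px
    return x, y, z


def depth_step(z_mm, f_px, baseline_mm, disparity_step_px=1.0):
    """Approximate depth change caused by a disparity error of disparity_step_px."""
    return z_mm ** 2 / (f_px * baseline_mm) * disparity_step_px


print(triangulate(500, 420, 300, 800.0, 300.0, 320.0, 240.0))
print(depth_step(3000.0, 800.0, 300.0))
```

With f = 800 pixels and a 300-mm baseline, a point 3 m away has a depth step of about 37.5 mm per pixel of disparity, which is why subpixel feature matching, longer baselines, and added surface texture (such as the grating patterns described above) matter for large-part guidance.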

Company Info

Adept Technology, Livermore, CA, USA www.adept.com

Braintech, North Vancouver, BC, Canada www.braintech.com

CEC Controls Company, Wixom, MI, USA www.ceccontrols.com

Cognex, Natick, MA, USA www.cognex.com

Epson Robotics USA, Carson, CA, USA www.robots.epson.com

ISRA Vision Systems, Darmstadt, Germany www.isravision.com

Matrox Imaging, Dorval, Quebec, Canada www.matrox.com/imaging

Sensor Control, Västerås, Sweden

Serrature Meroni, Nova Milanese, Italy www.serme.it

Severt Robotertechnik, Vreden, Germany www.severt-gmbh.de

SHAFI Inc., Brighton, MI, USA www.shafiinc.com
