June 2017 snapshots: 3D imaging, artificial intelligence, agricultural imaging, ADAS

Using 3D sensor technology for retail store traffic analytics

For retail stores that use camera-based traffic and conversion programs, success depends on acquiring accurate, trustworthy data. HeadCount Corporation (Edmonton, AB, Canada; www.headcount.com), a company that specializes in traffic counting and data optimization, believes that without confidence in the data, managers will question the traffic counts instead of applying the insights to improve performance.

"If you can't trust your traffic count data, nothing else matters," says Mark Ryski, CEO and Founder of HeadCount Corporation and author of Conversion: The Last Great Retail Metric. "It all starts with an accurate traffic count and getting that is not as easy as it sounds," he says. "In fact, it's not uncommon for even the largest, most sophisticated retailers to struggle with traffic count quality issues."

To obtain accurate counts in high-traffic indoor and outdoor locations, in areas with dynamic lighting, and in areas where precise count lines are required, HeadCount uses Brickstream 3D sensors (pictured) from FLIR Integrated Imaging Solutions, Inc. (Richmond, BC, Canada; www.ptgrey.com). Brickstream 3D sensors collect and store metrics in 1-minute intervals.

The stereo vision sensors feature two CMOS image sensors and two lenses and are used to capture 3D video. The height, direction, mass, and velocity of people and objects in the field of view are extracted and used to distinguish people, and groups moving together, from the background and from objects such as strollers and shopping carts. The 3D stereo images are processed within the device itself, eliminating the need for PC support.

HeadCount works with retailers that have traffic count data from existing systems, as well as retailers that are not yet counting. While the company considers itself 'technology agnostic,' it has stuck with the Brickstream sensors for its systems.

"We evaluate traffic counters based on three primary criteria: count accuracy, functionality and reliability and Brickstream is the only traffic counting solution that delivers on all three," said James Cummings, HeadCount's Vice President of Operations.

To be accurate, the sensors must account for a number of factors that can skew the numbers. Children, for example, can be excluded from the counts, which Brickstream does by using a height setting. The sensors also feature device management software tools, which enable sensor monitoring and troubleshooting, as well as traffic count audits.
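As a rough illustration of how a height setting and 1-minute intervals might combine, the following Python sketch filters hypothetical tracks and bins entries by minute. The `Track` record, the 1.2 m threshold, and the function name are assumptions made for this example; they are not Brickstream's data format or API.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Track:
    """Hypothetical record for one person crossing the count line."""
    timestamp_s: float  # seconds since counting started
    height_m: float     # estimated height from the stereo depth data
    direction: str      # "in" or "out"


# Assumed threshold for this sketch; a real deployment would tune it.
MIN_HEIGHT_M = 1.2


def entries_per_minute(tracks, min_height_m=MIN_HEIGHT_M):
    """Count inbound tracks at or above the height threshold per 1-minute bin."""
    counts = Counter()
    for track in tracks:
        if track.direction == "in" and track.height_m >= min_height_m:
            counts[int(track.timestamp_s // 60)] += 1
    return dict(counts)


tracks = [
    Track(5.0, 1.75, "in"),
    Track(12.0, 0.95, "in"),    # below the threshold, so not counted
    Track(40.0, 1.62, "out"),   # an exit, so not counted as an entry
    Track(61.0, 1.80, "in"),
    Track(119.9, 1.30, "in"),
]
print(entries_per_minute(tracks))  # {0: 1, 1: 2}
```

Keeping the threshold as a parameter makes it easy to audit how sensitive the counts are to the chosen height.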

Ryski advises retailers not to jump straight into new technologies, and instead to use something that is proven to provide accurate and reliable data.

"I'd advise all retailers to start with a solid foundation of store traffic and conversion analytics first before spending time and resources on more speculative analytic efforts."

Artificial intelligence comes to surveillance cameras with new collaboration

Video surveillance camera company Dahua Technology USA (Irvine, CA, USA; www.dahuasecurity.com) and Movidius (San Mateo, CA, USA; www.movidius.com), a company owned by Intel (Santa Clara, CA, USA; www.intel.com) that develops embedded vision technology, have announced a collaboration that will result in security cameras with advanced artificial intelligence (AI) capabilities for training the devices to gather, analyze, and retain information in a manner similar to the human brain.

Movidius' Myriad 2 Vision Processing Unit (VPU) technology will be integrated with a number of Dahua video surveillance cameras. The Myriad 2 VPU, which the company calls the industry's first "always-on vision processor," features an architecture comprised of a complete set of interfaces, a set of enhanced imaging/vision accelerators, a group of 12 specialized vector VLIW processors called SHAVEs, and an intelligent memory fabric that pulls together the processing resources to enable power-efficient processing.

"Deep learning is the fastest-growing field in Artificial Intelligence. It can enable computers to interpret large amounts of data in the form of images, sound, and text," said Mr. Zhu Jiangming, Executive Vice President of Dahua Technology. "With this sophisticated technology from Movidius, we can dramatically improve accuracy when analyzing video content, which will have a significant impact on the future of video surveillance."

Remi El-Ouazzane, Vice President of Intel New Technology Group and General Manager of Movidius, also commented.

"The new frontier for AI and machine learning is being able to deploy these technologies in real-world products. This means getting the power demand to a low enough level to be embedded right into the cameras themselves," he said. "The Myriad 2 VPU delivers a tremendous amount of compute for deep neural networks while requiring less than a single watt of power - allowing Dahua to create some of the smartest products to date."

Equipped with the Myriad 2 VPU, the Dahua 2 MP High Definition box camera will run the latest deep neural networks on the device itself, which enables new capabilities that include crowd density monitoring, stereoscopic vision, facial recognition, people counting, behavior analysis, and detection of illegally parked vehicles. For example, notes the press release, in the facial recognition category, a camera equipped with deep learning technology can detect gender, age range, and emotion even with the subject wearing glasses.

Drone measures plant stress and nutrient contents with multispectral camera

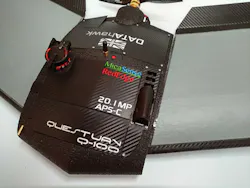

A partnership between vision-guided unmanned aerial vehicle (UAV) company QuestUAV (Amble, Northumberland, UK; www.questuav.com) and drone sensor and analytics company MicaSense (Seattle, WA, USA; www.micasense.com) has enabled the acquisition of multispectral images to monitor the health, stress, and nutrient content of crops.

Together with the MicaSense RedEdge multispectral camera, the Q-100 DATAhawk UAV is already being used worldwide in agricultural and fruit farming applications. The fixed-wing drone can cover more than a square mile in a single flight, providing high-quality multispectral data for crop monitoring and analysis in an automated workflow.

The RedEdge camera captures images in blue, green, red, red edge, and near-infrared, and its narrowband optical filters provide full imager resolution for each band. The camera features a global shutter image sensor for distortion-free results and a capture rate of 1 capture per second of all bands. The hand-launchable Q-100 DATAhawk is a rugged UAV that can fly for up to 55 minutes and features multiple landing options, including automatic and parachute landing, allowing for easy and safe operation in open and confined environments.

QuestUAV's flight planning software enables users to plan a flight mission from autonomous take-off, through site surveying at a specified height and image overlap, to autonomous landing. Images are acquired as soon as the UAV approaches survey height and starts flying the grid lines. Every second the sensor captures five images (one for each band) and writes them directly to the drone's internal SD card. The ground sampling distance at 400 ft. is 3.5 in., and geo-coordinates are written directly to each image's Exchangeable Image File Format (EXIF) metadata.
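The figures above lend themselves to quick mission arithmetic. The Python sketch below assumes that ground sampling distance scales linearly with altitude for a fixed camera and lens (a standard pinhole-camera approximation) and uses the quoted 3.5 in. at 400 ft. as its reference point. The function names are illustrative, and the image count is an upper bound, since capture only starts once the UAV reaches survey height.

```python
# Reference values quoted in the article.
REFERENCE_ALTITUDE_FT = 400.0
REFERENCE_GSD_IN = 3.5
BANDS = 5                  # blue, green, red, red edge, near-infrared
CAPTURES_PER_SECOND = 1    # one capture of all bands per second


def gsd_inches(altitude_ft):
    """Estimate ground sampling distance, assuming it scales linearly with altitude."""
    return REFERENCE_GSD_IN * altitude_ft / REFERENCE_ALTITUDE_FT


def max_images(flight_minutes):
    """Upper bound on images written to the SD card during a flight."""
    return int(flight_minutes * 60 * CAPTURES_PER_SECOND * BANDS)


print(gsd_inches(200.0))  # 1.75 (inches per pixel at 200 ft)
print(max_images(55))     # 16500 (for the quoted 55-minute endurance)
```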

Following flight, images can be directly uploaded from the SD card to MicaSense ATLAS for storage, photogrammetric processing, analysis, and presentation. Datasets are processed as soon as the upload is completed, and outputs are available within 24 hours. ATLAS is a cloud-based solution that enables users to automatically process multispectral data and extract multiple outputs such as orthomosaics, vegetation index maps (e.g. NDVI and NDRE) and Digital Surface Models (DSMs). Each layer is reflectance-calibrated and registered at the sub-pixel level, with the value for each pixel indicative of percent reflectance for that band, providing valuable information on crop health at all stages of growth, according to QuestUAV.
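The vegetation indices mentioned above are simple band ratios. As a minimal sketch using the standard definitions, NDVI = (NIR - Red) / (NIR + Red) and NDRE = (NIR - RedEdge) / (NIR + RedEdge), the Python below computes both for a single pixel. The reflectance values are made up for illustration, and this is not a description of how ATLAS computes its layers.

```python
def normalized_difference(a, b):
    """Return (a - b) / (a + b), or 0.0 when both inputs are zero."""
    total = a + b
    if total == 0:
        return 0.0
    return (a - b) / total


def ndvi(nir, red):
    """Normalized Difference Vegetation Index."""
    return normalized_difference(nir, red)


def ndre(nir, red_edge):
    """Normalized Difference Red Edge index."""
    return normalized_difference(nir, red_edge)


# Hypothetical reflectance values (fractions between 0 and 1) for one pixel.
pixel = {"red": 0.05, "red_edge": 0.25, "nir": 0.45}

print(round(ndvi(pixel["nir"], pixel["red"]), 3))       # 0.8
print(round(ndre(pixel["nir"], pixel["red_edge"]), 3))  # 0.286
```

Because both indices are ratios, they give the same result whether reflectance is expressed as a fraction or as a percentage, provided every band uses the same scale.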

Once processing is finished, all layers can be viewed in a secure web-based map interface. Datasets are organized in a 'Farms and Fields' structure. Users can create field boundaries online, and ATLAS automatically organizes the data into those boundaries. The map interface allows users to view RGB orthomosaics and index maps in a multi-layer stack, as well as to scout the field and share information with their farm management team.

With the map interface of ATLAS, users can view and analyze data from any online device, giving farmers and agronomists the ability to monitor crop status and plant health over time in order to detect patterns correlated with crop vigor, plant stress, and nutrient content.

FLIR brings thermal imaging to vehicles with Automotive Development Kit

FLIR Systems' (Wilsonville, OR, USA; www.flir.com) new Automotive Development Kit (ADK), which is based on the company's Boson thermal imaging camera core, is designed for the development of next-generation automotive thermal vision and advanced driver assistance systems (ADAS).

Thermal imagers are already integrated on vehicles from GM (Detroit, MI, USA; www.gm.com), Mercedes (Stuttgart, Germany; www.mercedes.com), Audi (Ingolstadt, Germany; www.audi.com), and BMW (Munich, Germany; www.bmw.com), and enable the capture of images well beyond the reach of a car's high beams, noted FLIR. With the new ADK, FLIR's Boson thermal core will enable the testing, development, and potential integration of a thermal imaging system in a vehicle, and will do so quickly and easily, according to the company.

Boson is an IP67-rated thermal sensor that measures just 21 x 21 x 26.7 mm, with the whole ADK measuring 38 x 38 x 42.5 mm. The thermal array is a 320 x 256 uncooled VOx microbolometer detector with a 12 μm pixel pitch. Featuring a spectral band of 8 to 14 μm, the camera can acquire images at up to 30 fps. FLIR's new ADK is plug-and-play, and its thermal data feeds directly into analytics over a standard USB connection, or through an optional NVIDIA (Santa Clara, CA, USA; www.nvidia.com) DRIVE PX 2 connection.

Additionally, the ADK can be added to ADAS to complement existing sensors. It offers a detection range of >100 m and a 24° or 34° field of view, and is available in three different hardware configurations.
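To get a feel for what those numbers mean at range, the Python sketch below estimates how much ground one pixel covers at 100 m. It assumes a simple pinhole model and that the quoted field of view is horizontal and spans the 320-pixel axis of the array; neither assumption is confirmed by FLIR, and the 0.5 m target width is a hypothetical stand-in for a pedestrian.

```python
import math

H_PIXELS = 320          # horizontal pixel count of the thermal array
PIXEL_PITCH_M = 12e-6   # 12 μm pixel pitch


def focal_length_m(hfov_deg):
    """Focal length implied by the horizontal field of view (pinhole model)."""
    sensor_width_m = H_PIXELS * PIXEL_PITCH_M
    return sensor_width_m / (2 * math.tan(math.radians(hfov_deg) / 2))


def pixel_footprint_m(hfov_deg, range_m):
    """Width of scene covered by one pixel at the given range."""
    return range_m * PIXEL_PITCH_M / focal_length_m(hfov_deg)


def pixels_on_target(target_width_m, hfov_deg, range_m):
    """Number of pixels a target of the given width spans at the given range."""
    return target_width_m / pixel_footprint_m(hfov_deg, range_m)


for hfov in (24.0, 34.0):
    footprint = pixel_footprint_m(hfov, 100.0)
    spanned = pixels_on_target(0.5, hfov, 100.0)
    print(f"{hfov:.0f} deg: {footprint:.3f} m per pixel, {spanned:.1f} px on a 0.5 m target")
```

Under these assumptions, the narrower 24° lens puts more pixels on a distant target (roughly 0.13 m per pixel at 100 m, versus roughly 0.19 m for the 34° lens), in exchange for a smaller view of the scene.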