Camera design: Localized encoding increases data throughput of GigE cameras

Unlike faster interface standards such as Camera Link, Camera Link HS and CoaXPress, the GigE Vision standard does not require a dedicated frame grabber. However, its practical data transmission rate of approximately 115 MBytes/s can limit the use of such cameras in high-speed machine vision applications.

In an effort to overcome this limitation, Teledyne DALSA (Waterloo, ON, Canada; www.teledynedalsa.com) has developed a localized relative encoding method, dubbed TurboDrive, which, by reducing the amount of data needed to describe an image, can increase the throughput of image data over the GigE Vision interface by between 120% and 235%. This allows cameras that employ the method to run at higher data rates.

"For instance," says Eric Carey, Program Director for Area Scan Machine Vision at Teledyne DALSA, "the Linea 4k GigE monochrome line-scan camera is normally limited to a 26kHz line rate because of the speed of the GigE Vision interface. Using the TurboDrive method, this line rate can reach 80kHz if highly uniform images are captured, the same rate as the Linea 4k Camera Link model. However, since the GigE Vision interface can be used, no frame grabber is required and longer camera-to-computer cables can be used."
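The arithmetic behind these line rates can be checked quickly. The sketch below assumes (these figures are not stated in the article) 4096 pixels per line, 8 bits per pixel and decimal megabytes. Under those assumptions, 26 kHz works out to about 106.5 MB/s, just under the ~115 MBytes/s link limit, while 80 kHz needs about 327.7 MB/s, so the encoded stream would have to be roughly 2.85 times smaller than the raw data to fit.

```python
# Back-of-the-envelope data rates for a line-scan camera.
# Assumptions (not from the article): 4096 pixels per line, 1 byte per pixel,
# and decimal megabytes (1 MB = 1,000,000 bytes).

PIXELS_PER_LINE = 4096
BYTES_PER_PIXEL = 1
GIGE_PRACTICAL_MB_S = 115.0  # practical GigE Vision throughput quoted in the article


def raw_data_rate_mb_s(line_rate_hz: float) -> float:
    """Uncompressed data rate in MB/s for a given line rate."""
    return PIXELS_PER_LINE * BYTES_PER_PIXEL * line_rate_hz / 1e6


def required_ratio(line_rate_hz: float, link_mb_s: float = GIGE_PRACTICAL_MB_S) -> float:
    """Compression ratio the encoded stream needs to fit the link (below 1 means it already fits)."""
    return raw_data_rate_mb_s(line_rate_hz) / link_mb_s


for rate in (26_000, 80_000):
    print(f"{rate / 1000:.0f} kHz: {raw_data_rate_mb_s(rate):.1f} MB/s raw, "
          f"ratio needed {required_ratio(rate):.2f}:1")
```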

The TurboDrive algorithm takes advantage of the fact that many images exhibit a high level of redundancy, with little pixel-to-pixel variation. In a completely white 8-bit image, for example, every pixel has the value 255. Because there is very little randomness in such an image, it is said to have low entropy (a measure of disorder) and is therefore easy to encode.
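To make "low entropy" concrete, here is a minimal sketch that computes first-order Shannon entropy from a pixel histogram. This is the standard textbook definition, not anything specific to TurboDrive. It gives a lower bound on the average bits per pixel for any lossless code that treats pixels as independent symbols: 0 bits for an all-white image and 8 bits for an image in which all 256 grey levels are equally common.

```python
import math
from collections import Counter
from typing import Sequence


def shannon_entropy_bits(pixels: Sequence[int]) -> float:
    """First-order Shannon entropy, in bits per pixel, of a sequence of pixel values."""
    counts = Counter(pixels)
    total = len(pixels)
    if total == 0:
        return 0.0
    # H = sum over values of p * log2(1/p), with p = count / total
    return sum((c / total) * math.log2(total / c) for c in counts.values())


white = [255] * (64 * 64)            # completely white 8-bit image, flattened
all_levels = list(range(256)) * 16   # every grey level equally likely

print(shannon_entropy_bits(white))       # 0.0 bits per pixel
print(shannon_entropy_bits(all_levels))  # 8.0 bits per pixel
```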

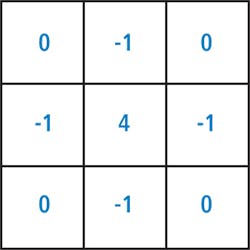

To exploit this redundancy, the TurboDrive algorithm determines the pixel-to-pixel variation in the image. For example, applying a 3 × 3 high-pass filter to a completely uniform (e.g. white) image results in every pixel of the filtered image having a value of zero.
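The zero result follows from the filter's coefficients summing to zero. The sketch below uses a common Laplacian-style 3 × 3 kernel. The article does not say which filter TurboDrive uses, so treat this kernel as an illustrative assumption. For simplicity, only interior pixels are filtered.

```python
from typing import List

Image = List[List[int]]

# Illustrative 3x3 high-pass kernel (assumption, not TurboDrive's filter).
# Its coefficients sum to zero, so any uniform region filters to zero.
KERNEL = [
    [-1, -1, -1],
    [-1,  8, -1],
    [-1, -1, -1],
]


def high_pass_3x3(img: Image) -> Image:
    """Convolve interior pixels with KERNEL; output size is (h - 2) x (w - 2)."""
    h, w = len(img), len(img[0])
    out: Image = []
    for y in range(1, h - 1):
        row = []
        for x in range(1, w - 1):
            acc = 0
            for dy in range(3):
                for dx in range(3):
                    acc += KERNEL[dy][dx] * img[y + dy - 1][x + dx - 1]
            row.append(acc)
        out.append(row)
    return out


white = [[255] * 6 for _ in range(6)]
print(high_pass_3x3(white))  # a 4 x 4 grid of zeros
```

A single bright pixel, by contrast, produces a non-zero response, which is the pixel-to-pixel variation the encoder actually has to spend bits on.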

Traditionally, using absolute encoding, transmitting this image over the GigE Vision interface would require 8 bits per pixel, since each pixel's value of 255 needs all 8 bits to represent. However, when a highly redundant image is filtered, most of the resulting values are zero or close to zero, so less data is required to encode the image.
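The saving depends on how small values are packed. As a hypothetical illustration (this packing format is an assumption, not TurboDrive's), the sketch below groups values into blocks of 8. Each block carries a 4-bit header giving its bit width, and each value in the block is stored in that many two's-complement bits. An all-zero block then costs only its header, compared with 64 bits under absolute 8-bit encoding.

```python
from typing import Sequence


def signed_bit_width(v: int) -> int:
    """Smallest two's-complement width holding v; 0 is given width 0 by this scheme's convention."""
    if v == 0:
        return 0
    # Magnitude bits (for negatives, of ~v = -v - 1) plus one sign bit
    return (v if v >= 0 else ~v).bit_length() + 1


def block_packed_bits(values: Sequence[int], block: int = 8, header_bits: int = 4) -> int:
    """Total bits under the hypothetical block scheme: header + width * count per block."""
    total = 0
    for i in range(0, len(values), block):
        chunk = values[i:i + block]
        width = max(signed_bit_width(v) for v in chunk)
        total += header_bits + width * len(chunk)
    return total


def absolute_bits(values: Sequence[int], bits_per_pixel: int = 8) -> int:
    """Bits needed when every pixel is sent at full depth."""
    return bits_per_pixel * len(values)


residuals = [0] * 64  # filtered uniform row
print(absolute_bits(residuals), block_packed_bits(residuals))  # 512 32
```

For noisy content the residuals can need 9 bits plus a header, so a practical encoder would presumably fall back to raw pixels when packing does not help.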

Although filtering reduces the amount of per-pixel data, the filtered image alone is not transmitted over the GigE interface. If it were, the decoder would have no way to determine the original pixel values. For this reason, the TurboDrive algorithm uses a known original value, or seed pixel, within the image and subtracts the filtered pixel values from this seed value. Encoding these pixel differences reduces the amount of data required to transmit a given image, since many pixels need fewer than 8 bits to encode.
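Why the seed matters can be shown with a generic difference (DPCM-style) coder. This is a textbook technique and only an assumption about the general idea. TurboDrive's actual filter and seed handling are proprietary. The first pixel of a row is sent as-is, and every later pixel is sent as the difference from its neighbour. Without the seed, the differences would pin down the row only up to an unknown constant offset.

```python
from itertools import accumulate
from typing import List, Tuple


def encode_row(row: List[int]) -> Tuple[int, List[int]]:
    """Return (seed, diffs): the first pixel, then successive pixel-to-pixel differences."""
    if not row:
        raise ValueError("row must contain at least one pixel")
    seed = row[0]
    diffs = [b - a for a, b in zip(row, row[1:])]
    return seed, diffs


def decode_row(seed: int, diffs: List[int]) -> List[int]:
    """Rebuild the row losslessly by accumulating the differences onto the seed."""
    return list(accumulate(diffs, initial=seed))  # 'initial' needs Python 3.8+


row = [200, 201, 201, 199, 255, 255]
seed, diffs = encode_row(row)
print(seed, diffs)                       # 200 [1, 0, -2, 56, 0]
print(decode_row(seed, diffs) == row)    # True
```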

To take advantage of the TurboDrive method, cameras such as the Linea GigE camera operate in burst mode, using on-board image buffers to acquire images faster than a traditional GigE camera could transmit them. As images are acquired, localized relative encoding is applied to the image data, and the additional information needed to decode it is added to the data packets sent over the GigE interface. Decoding is performed by Teledyne DALSA's GigE Vision driver and is transparent to the application source code.
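Burst operation can be reasoned about with a simple fill-rate model. In this model (an assumption; the article gives no buffer sizes or rates), the buffer grows at the encoded acquisition rate minus the link rate. A burst can therefore last buffer size / fill rate seconds, and indefinitely if the encoded stream fits the link. All parameter names and values here are hypothetical.

```python
import math


def burst_duration_s(acquired_mb_s: float, link_mb_s: float,
                     compression_ratio: float, buffer_mb: float) -> float:
    """Seconds until an on-board buffer overflows (math.inf if it never does).

    Hypothetical model: data enters at acquired_mb_s / compression_ratio and
    leaves at link_mb_s; the difference accumulates in the buffer.
    """
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    encoded_mb_s = acquired_mb_s / compression_ratio
    fill_mb_s = encoded_mb_s - link_mb_s
    if fill_mb_s <= 0:
        return math.inf  # encoded stream fits the link; no overflow
    return buffer_mb / fill_mb_s


# Illustrative figures only: 300 MB/s acquired, 100 MB/s link, 1000 MB buffer
print(burst_duration_s(300, 100, 1.0, 1000))  # 5.0 seconds without encoding
print(burst_duration_s(300, 100, 3.0, 1000))  # inf: 3:1 encoding fits the link
```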

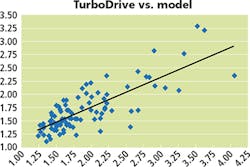

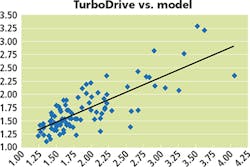

Teledyne DALSA's TurboDrive is a proprietary, patent-pending implementation of localized relative encoding. To demonstrate the capability of the technique, the company has compared its algorithm with a mathematical model based on known techniques. Using an Octave script, this model was compared with TurboDrive over a set of 98 images frequently found in machine vision applications. Using the graph shown on page 10, systems integrators can compare the performance of the mathematical model and the TurboDrive implementation to estimate the throughput increase that can be achieved in any particular application.
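The company's Octave model is not reproduced in the article. As a rough stand-in, and explicitly not that model, a common textbook estimate divides the raw bit depth by the first-order entropy of horizontal pixel differences. This treats the result as an optimistic upper bound on the throughput gain for a difference-based coder. The function names are hypothetical.

```python
import math
from collections import Counter
from typing import List, Sequence


def entropy_bits(values: Sequence[int]) -> float:
    """First-order Shannon entropy (bits per symbol) of a sequence."""
    n = len(values)
    if n == 0:
        return 0.0
    return sum((c / n) * math.log2(n / c) for c in Counter(values).values())


def estimated_gain(rows: List[List[int]], bits_per_pixel: int = 8) -> float:
    """Optimistic throughput gain: raw bit depth / entropy of horizontal differences.

    A perfectly uniform image returns math.inf; real encoders have fixed
    overheads (seeds, headers, packet framing) that cap the gain.
    """
    diffs = [b - a for row in rows for a, b in zip(row, row[1:])]
    h = entropy_bits(diffs)
    return math.inf if h == 0 else bits_per_pixel / h


rows = [[0, 1, 0, 1, 0]]           # differences alternate +1, -1: 1 bit each
print(estimated_gain(rows))        # 8.0
print(estimated_gain(rows) * 115)  # rough upper estimate in MB/s over GigE
```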