Wavelets and statistical analysis combine to index images

Andrew Wilson, Editor

In the near term, automated content-based image-retrieval systems are expected to speed image classification in medical imaging and military reconnaissance. However, automatically indexing and searching large image databases remains a challenging design problem for image processing and computer vision. Solving it requires vision systems that can automatically extract features from images, use statistical analysis to build classification models of those images, and index them with keywords.

At Pennsylvania State University (University Park, PA; www.psu.edu), assistant professor James Z. Wang has developed such an indexing system using wavelet-based transforms and statistical-analysis techniques. The resulting Automatic Linguistic Indexing of Pictures (ALIP) system builds a pictorial dictionary and then uses it for associating images with keywords. The system functions similarly to a human expert in annotating or classifying images.

Three major components make up the ALIP system: a feature-extraction process, a statistical-modeling process, and an indexing process that links keywords to images. A wavelet-based feature-extraction algorithm extracts features from known images and places them in a training database. Statistical-modeling techniques then analyze these features to build a statistical model, which is associated with a textual description and stored in a concept dictionary.

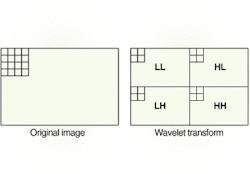

Using wavelet analysis, the horizontal and vertical frequency content of an image can be computed from a one-level wavelet transform. The resulting bands represent the Low horizontal and Low vertical frequencies (LL; top left), High horizontal and Low vertical frequencies (HL; top right), Low horizontal and High vertical frequencies (LH; bottom left), and High horizontal and High vertical frequencies (HH; bottom right) of the image. Feature vectors computed from these bands then feed a Hidden Markov Model, which automatically indexes images.

To build the ALIP system, six features of color images are extracted. Three of these features are the average color components in a block of 4 × 4 pixels in the image. The other three components are texture features that represent energy in high-frequency bands of wavelet transforms. To extract these features, a wavelet transform is applied to the luminance component of color images. After a one-level wavelet transform is performed, the 4 × 4 image block is decomposed into four frequency bands.

These bands represent the Low horizontal and Low vertical frequencies (LL), High horizontal and Low vertical frequencies (HL), Low horizontal and High vertical frequencies (LH), and High horizontal and High vertical frequencies (HH) of the image (see figure). The three texture features are then derived by computing the square root of the second-order moment of the wavelet coefficients in each of the HL, LH, and HH bands.
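The six-feature extraction described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the ALIP code: the article does not name the wavelet or the color space, so the sketch assumes a Haar wavelet, plain RGB averages, and Rec. 601 luminance weights. All function names (`luminance`, `haar_level1`, `texture_feature`, `block_features`) are hypothetical.

```python
import math


def luminance(pixel):
    """Luminance of an (R, G, B) pixel. Rec. 601 weights are an assumption."""
    r, g, b = pixel
    return 0.299 * r + 0.587 * g + 0.114 * b


def haar_level1(block):
    """One-level 2-D Haar transform of a square block with even side length.

    The Haar wavelet is an assumption; the article does not name the wavelet.
    Returns the four bands (LL, HL, LH, HH), each half the size of the input.
    """
    n = len(block)
    ll, hl, lh, hh = [], [], [], []
    for r in range(0, n, 2):
        ll_row, hl_row, lh_row, hh_row = [], [], [], []
        for c in range(0, n, 2):
            a, b = block[r][c], block[r][c + 1]
            d, e = block[r + 1][c], block[r + 1][c + 1]
            ll_row.append((a + b + d + e) / 2.0)  # low horizontal, low vertical
            hl_row.append((a - b + d - e) / 2.0)  # high horizontal, low vertical
            lh_row.append((a + b - d - e) / 2.0)  # low horizontal, high vertical
            hh_row.append((a - b - d + e) / 2.0)  # high horizontal, high vertical
        ll.append(ll_row)
        hl.append(hl_row)
        lh.append(lh_row)
        hh.append(hh_row)
    return ll, hl, lh, hh


def texture_feature(band):
    """Square root of the second-order moment (mean square) of a band."""
    coeffs = [c for row in band for c in row]
    return math.sqrt(sum(c * c for c in coeffs) / len(coeffs))


def block_features(rgb_block):
    """Six features for one 4 x 4 block: 3 average colors + 3 texture energies."""
    pixels = [p for row in rgb_block for p in row]
    avg_color = [sum(p[i] for p in pixels) / len(pixels) for i in range(3)]
    luma = [[luminance(p) for p in row] for row in rgb_block]
    _, hl, lh, hh = haar_level1(luma)
    return avg_color + [texture_feature(hl), texture_feature(lh), texture_feature(hh)]


# Toy input (not real image data): alternating black and white columns.
stripes = [[(0, 0, 0), (255, 255, 255)] * 2 for _ in range(4)]
features = block_features(stripes)
```

For this striped block, intensity changes only from column to column, so all of the texture energy lands in the HL band, while the LH and HH features are zero.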

After the features are extracted from the images at different resolutions, a process known as two-dimensional multiresolution Hidden Markov modeling (2-D MHMM) describes the statistical properties of the feature vectors and their spatial dependence. Hidden Markov Models were developed from research performed by the late Andrei Markov (1856-1922), a Russian mathematician known for studying a class of random processes now known as Markov chains.

A training sequence directs the HMM to adapt its parameters to observed training data. In one experiment, Wang trained ALIP with 24,000 photographs found on 600 CD-ROMs, with each CD-ROM collection assigned keywords to describe its content. After learning these images, the computer then automatically created a dictionary of concepts. Statistical modeling enabled ALIP to automatically index new or unlearned images with the linguistic terms of the dictionary.
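The dictionary-based indexing step can be illustrated with a deliberately simplified sketch. ALIP models each concept with a 2-D MHMM; the code below instead swaps in an independent (diagonal) Gaussian per concept as a stand-in, which ignores the spatial dependence and multiple resolutions the real model captures. The function names, the dictionary layout, and the toy feature values are all hypothetical.

```python
import math


def fit_gaussian(vectors):
    """Fit a per-dimension mean and variance (a stand-in for the 2-D MHMM)."""
    n, dim = len(vectors), len(vectors[0])
    mean = [sum(v[i] for v in vectors) / n for i in range(dim)]
    # A small floor keeps the variance positive for near-constant features.
    var = [max(sum((v[i] - mean[i]) ** 2 for v in vectors) / n, 1e-6)
           for i in range(dim)]
    return mean, var


def log_likelihood(vectors, model):
    """Log-likelihood of an image's feature vectors under one concept model."""
    mean, var = model
    total = 0.0
    for v in vectors:
        for x, m, s2 in zip(v, mean, var):
            total += -0.5 * (math.log(2.0 * math.pi * s2) + (x - m) ** 2 / s2)
    return total


def build_dictionary(training_sets):
    """Map each concept name to (keywords, fitted model)."""
    return {name: (keywords, fit_gaussian(vectors))
            for name, (keywords, vectors) in training_sets.items()}


def annotate(vectors, dictionary, top_k=1):
    """Return the keywords of the top_k concepts that best explain the image."""
    ranked = sorted(dictionary.values(),
                    key=lambda entry: log_likelihood(vectors, entry[1]),
                    reverse=True)
    keywords = []
    for words, _model in ranked[:top_k]:
        for word in words:
            if word not in keywords:
                keywords.append(word)
    return keywords


# Toy 2-D feature vectors, invented purely for illustration.
training_sets = {
    "sky": (["sky", "blue"], [[10.0, 1.0], [12.0, 2.0], [11.0, 1.5]]),
    "grass": (["grass", "green"], [[100.0, 50.0], [98.0, 52.0], [102.0, 49.0]]),
}
dictionary = build_dictionary(training_sets)
new_image = [[11.0, 1.4], [10.5, 1.8]]
labels = annotate(new_image, dictionary, top_k=1)
```

The point of the sketch is the overall flow: one statistical model per concept is trained from labeled examples, and a new image receives the keywords of the concepts whose models assign it the highest likelihood.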