September 2018 snapshots: Polarization imaging, deep learning camera from Amazon, vision-guided Army drones, and SWIR imaging.

United States Army to deploy personal reconnaissance drones from FLIR Systems

The United States Army will deploy vision-guided Black Hornet Personal Reconnaissance Systems (PRS) drones from FLIR (Wilsonville, OR, USA; www.flir.com) to support squad-level surveillance and reconnaissance capabilities in the Army’s first batch order for the Soldier Borne Sensor (SBS) program.

This deployment comes as part of a $2.6 million contract that the Army awarded FLIR for the newly released Black Hornet PRS, which the Army had tested and evaluated in both 2016 and 2017. According to a press release, the Army will continue its evaluation and consider a broader-scale rollout of the Black Hornet for full operational deployment within all infantry units.

Black Hornet PRS drones, according to FLIR, are the world’s smallest combat-proven nano-unmanned aerial system (UAS), and the next generation Black Hornet 3 adds the ability to navigate in GPS-denied environments. With its ability to provide real-time live motion video and snapshots back to the operator, the drone “provides the modern-day warrior with a game-changing piece of equipment for instant use on the battlefield,” and enables “immediate situational awareness for soldiers executing dismounted operations globally,” according to FLIR.

The UAS weighs 32 g, can fly 2 km at speeds of more than 21 km/h, and features a visible image sensor as well as a FLIR Lepton thermal imager, both of which offer live video and snapshots. Smaller than a dime, the Lepton is FLIR’s smallest thermal camera core and is available with either a 160 x 120 or 80 x 60 uncooled VOx microbolometer array that is sensitive in the longwave infrared range from 8 to 14 µm.

Black Hornet 3 UAVs also come with a controller and display, and are sold directly through FLIR to military, government agency, and law enforcement customers.

“The United States Army’s selection of FLIR to provide the Black Hornet PRS in this initial delivery of the Soldier Borne Sensor program represents a key opportunity to provide soldiers in every U.S. Army squad a critical advantage on the modern battlefield,” said James Cannon, President and CEO of FLIR Systems. “This contract demonstrates the strong demand for nano-drone technology, and will lead to broader deployment. We’re proud to provide the highly-differentiated Black Hornet PRS to help support the U.S. Government to achieve the objective of protecting its warfighters.”

To date, FLIR has delivered the Black Hornet Personal Reconnaissance Systems to 30 nations around the world.

Deep learning-enabled video camera launched by Amazon

First announced by Amazon (Seattle, WA, USA; www.amazon.com) at the re:Invent conference in November 2017, the Amazon Web Services (AWS) DeepLens video camera, which is designed to put deep learning technology in the hands of developers, is now shipping to customers.

DeepLens runs deep learning models directly on the device and is designed to provide developers with hands-on artificial intelligence technology. The device features a 4 MPixel camera that captures 1080p video, along with an Intel (Santa Clara, CA, USA; www.intel.com) Atom processor that provides more than 100 GFLOPS of compute power, which AWS says is enough to run tens of frames of incoming video through on-board deep learning models every second.
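To put the 100 GFLOPS figure in perspective, the short sketch below works out the arithmetic behind "tens of frames per second." It is only a back-of-the-envelope estimate: it assumes the quoted peak throughput is fully usable, which real workloads rarely achieve, and the 4 GFLOP per-frame model cost is a hypothetical value chosen for illustration, not a figure from AWS.

```python
# Back-of-the-envelope compute budget. Assumes the quoted peak throughput
# is fully usable, which real inference workloads rarely achieve.
PEAK_FLOPS = 100e9  # "more than 100 GFLOPS", as quoted in the article


def flops_per_frame(frames_per_second: float, peak_flops: float = PEAK_FLOPS) -> float:
    """Upper bound on floating-point operations available for each frame."""
    if frames_per_second <= 0:
        raise ValueError("frames_per_second must be positive")
    return peak_flops / frames_per_second


def max_frame_rate(model_flops: float, peak_flops: float = PEAK_FLOPS) -> float:
    """Upper bound on frames per second for a model costing model_flops per frame."""
    if model_flops <= 0:
        raise ValueError("model_flops must be positive")
    return peak_flops / model_flops


if __name__ == "__main__":
    for fps in (10, 30):
        print(f"{fps} fps -> {flops_per_frame(fps) / 1e9:.1f} GFLOP available per frame")
    # Hypothetical per-frame model cost, for illustration only.
    print(f"4 GFLOP model -> at most {max_frame_rate(4e9):.0f} fps")
```

Under these assumptions, a model that needs a few GFLOP per frame lands in the "tens of frames per second" range that AWS describes.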

AWS’ camera also has 8 GB of RAM, 16 GB of expandable memory, an Intel Gen9 graphics engine, Wi-Fi, and USB and micro HDMI ports. AWS DeepLens runs the Ubuntu 16.04 OS and is preloaded with AWS Greengrass Core and a device-optimized version of MXNet, with the flexibility to use other frameworks such as TensorFlow and Caffe. Additionally, the Intel clDNN library provides a set of deep learning primitives for computer vision and other AI workloads, according to Amazon.

AWS DeepLens, according to the company, “allows developers of all skill levels to get started with deep learning in less than 10 minutes by providing sample projects with practical, hands-on examples which can start running with a single click.”

The initial response to DeepLens was positive, according to Jeff Barr, Chief Evangelist for AWS.

“Educators, students, and developers signed up for hands-on sessions and started to build and train models right away,” he wrote. “Their enthusiasm continued throughout the preview period and into this year’s AWS Summit season, where we did our best to provide all interested parties with access to devices, tools, and training.”

DeepLens was also used in a few challenges and the HackTillDawn hackathon.

“I was fortunate enough to be able to attend the event and to help to choose the three winners. It was amazing to watch the teams, most with no previous machine learning or computer vision experience, dive right in and build interesting, sophisticated applications designed to enhance the attendee experience at large-scale music festivals,” Barr wrote of HackTillDawn.

Additionally, AWS held the AWS DeepLens Challenge, which tasked participants with building machine learning projects that utilized DeepLens. The submissions, according to Barr, were as diverse as they were interesting, with applications designed for children, adults, and animals. Details on all of the submissions, including demo videos and source code, are available on the AWS Community Projects page (http://bit.ly/VSD-AWS).

“From what I can tell, DeepLens has proven itself as an excellent learning vehicle. While speaking to the attendees at HackTillDawn, I learned that many of them were eager to get some hands-on experience that they could use to broaden their skillsets and to help them to progress in their careers,” wrote Barr.

SWIR camera-based vision system characterizes laser beams

When using lasers in applications such as welding and cutting of materials, medical applications, or surveying and ranging, it is important to measure the spatial intensity of the laser, as extensive use can lead to degradation and loss of efficiency. Machine vision technology, in the form of a beam profiler, can help to assess the “health” of a laser.

Various types of lasers are used in applications such as the ones mentioned above, including those in the 900 to 1800 nm (SWIR) range. To assess lasers in the SWIR range, Axiom Optics (Boston, MA, USA; www.axiomoptics.com) developed the CinCam InGaAs SWIR Beam Profiler, which is used to measure various characteristics of the laser, including beam profile or 2D power distribution, 2D beam size measurements, beam divergence, focusing ability of the laser (beam quality with M²), and pointing stability.
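As an illustration of how a 2D beam size measurement can be derived from a camera image, the sketch below computes the intensity-weighted centroid and the second-moment (D4σ) beam width of a pixel grid. This is a generic textbook calculation and makes no claim about how the CinCam system or its software performs the measurement; the function names `beam_moments` and `gaussian_beam` are hypothetical, and the input is assumed to be background-subtracted.

```python
import math


def beam_moments(image):
    """Return (cx, cy, d4sigma_x, d4sigma_y) in pixels for a 2D intensity grid.

    image is a list of rows of non-negative, background-subtracted intensities.
    """
    total = sum(sum(row) for row in image)
    if total <= 0:
        raise ValueError("image has no signal")
    # First moments: intensity-weighted centroid.
    cx = sum(x * v for row in image for x, v in enumerate(row)) / total
    cy = sum(y * v for y, row in enumerate(image) for v in row) / total
    # Second central moments: intensity-weighted variances along x and y.
    var_x = sum((x - cx) ** 2 * v for row in image for x, v in enumerate(row)) / total
    var_y = sum((y - cy) ** 2 * v for y, row in enumerate(image) for v in row) / total
    # D4sigma width is four times the standard deviation along each axis.
    return cx, cy, 4 * math.sqrt(var_x), 4 * math.sqrt(var_y)


def gaussian_beam(width, height, cx, cy, w):
    """Synthetic Gaussian beam with 1/e^2 radius w (in pixels), for testing."""
    return [[math.exp(-2 * ((x - cx) ** 2 + (y - cy) ** 2) / w ** 2)
             for x in range(width)] for y in range(height)]


if __name__ == "__main__":
    img = gaussian_beam(128, 128, cx=60.0, cy=70.0, w=10.0)
    cx, cy, dx, dy = beam_moments(img)
    print(f"centroid=({cx:.2f}, {cy:.2f}) px, D4sigma=({dx:.2f}, {dy:.2f}) px")
```

For an ideal Gaussian beam, the D4σ width equals twice the 1/e² radius, which makes the synthetic beam a convenient sanity check. Multiplying the pixel result by the sensor's pixel pitch converts it to physical units, and tracking the centroid over time gives a simple view of pointing stability.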

The CinCam InGaAs turnkey solution is available in three models: CinCam InGaAs 320, CinCam InGaAs 640, and the CinCam InGaAs 636. Between the three of them, according to the company, most, if not all, beam sizes can be profiled. Providing the SWIR-based vision within the system are SWIR cameras from Allied Vision (Stadtroda, Germany; www.alliedvision.com). The three Goldeye SWIR models used in the CinCam InGaAs models are the Goldeye G-008 TEC1 (320 x 256 resolution at 334 fps), Goldeye G-032 TEC1 (0.3 MPixels at 100 fps), and the Goldeye G-033 (0.3 MPixels at 301 fps).

These GigE cameras from Allied Vision are sensitive in the SWIR spectrum from 900 to 1700 nm and are based on InGaAs focal plane arrays. They offer fanless thermo-electric (TEC) sensor cooling (G-008 TEC1 and G-033 TEC1) or a nitrogen-filled cooling chamber that enables dual-stage thermo-electric cooling down to -60°C (G-032 TEC2). The G-008 TEC1 is used in the CinCam InGaAs 320, Axiom Optics’ “entry level” model in terms of pricing, while the Goldeye G-033 TEC1, used in the CinCam InGaAs 640, has the smallest pixel pitch, 15 µm, allowing the profiling of smaller beams and acquisition of additional beam details. The Goldeye G-032 TEC1 provides the largest active area, allowing profiling of large beams or multiple beams at once with the CinCam InGaAs 636.

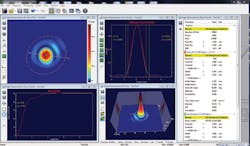

Axiom Optics also utilized RayCi software from Cinogy (Duderstadt, Germany; www.cinogy.com), which supports Windows (XP, Vista, Windows 7 and 8) and can simultaneously control several beam profiler cameras on a single computer. The software enables users to access standard settings and complete several laser beam characterizations, which are captured in detailed illustrations that ensure the “optimal visualization of measurement results during profiling.” Additionally, live data can be compared with previously stored data, since RayCi can analyze both simultaneously.

“The Goldeye is the perfect camera line for our system for multiple reasons,” Nick Lechocinski, Sales/3D sensor and camera specialist at Axiom Optics, explained. “The Goldeye’s three models address our customers’ varying requirements in terms of price and performance. Furthermore, the Goldeye has TEC1 cooling (thermo-electric cooling), which is mandatory for the quantitative measurements completed in laser beam profiling.”

Researchers deploy polarization camera for carbon fiber inspection

To avoid safety risks, quality assurance is essential in the production of parts made from carbon fiber reinforced polymers (CFRP). These components exhibit particularly high tensile strength in the direction of the fibers, so manufacturers must pay close attention to the direction in which the various plies are formed together during production. To inspect carbon fiber components, researchers from the Fraunhofer Institute for Integrated Circuits IIS (Erlangen, Germany; https://www.iis.fraunhofer.de/en.html) have deployed a polarization camera.

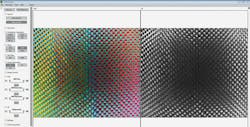

The right image shows the measured intensity; the left image shows the false-color polarization information, with color depicting the measured angles of the fibers.

Initially, the team developed a proprietary polarization camera called POLKA, which captures the orientation and position of the carbon fiber bundle in real time. The camera features a custom 640 x 480-pixel CMOS image sensor with on-chip polarizers and a frame rate of up to 25 fps, a pixel size of 6 µm, and Polka imaging software, which is used to calculate the degree of linear polarization.

Recently, however, Fraunhofer Institute for Integrated Circuits IIS showcased a carbon fiber inspection demonstration at Control 2018 in Stuttgart, Germany using an off-the-shelf Phoenix PHX050S-P polarization camera from LUCID Vision Labs (Richmond, BC, Canada; www.thinklucid.com).

The PHX050S-P camera features Sony’s (Tokyo, Japan; www.sony.com) IMX250MZR CMOS polarized image sensor, which is a 5 MPixel global shutter sensor with a 3.45 μm pixel and a frame rate of up to 24 fps. The sensor is based on the IMX250 Sony Pregius CMOS monochrome sensor with a polarizing filter added to each pixel. The sensor has four different directional polarizing filters (0°, 90°, 45°, and 135°) on every block of four pixels. LUCID Vision Labs’ GigE Vision camera, the company said, performs on-camera processing using the four directional filters and outputs both the intensity and polarization angle of each image pixel. Additionally, the camera features a transformable board layout that allows it to be tri-folded into a stacked camera, or unfolded into 90 or 180° orientations.

One of the unique properties of carbon fibers is that they polarize incident unpolarized light parallel to the direction of the fiber, so the Phoenix camera was used to capture the orientation and position of the carbon fiber bundle in real time to determine which way the fiber runs. The demonstration again used the Fraunhofer IIS Polka imaging software, which calculates the degree of linear polarization; this, in turn, directly indicates the direction of the fibers for each pixel. This, according to LUCID Vision Labs, is the only way to ensure the required stability of the component.
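To make the per-pixel computation more concrete, the sketch below derives intensity, degree of linear polarization (DoLP), and angle of linear polarization (AoLP) from the four filter orientations using the standard linear Stokes parameters. It is a generic illustration, not the Polka software or the camera's on-board processing; the function names are hypothetical, and ideal polarizers are assumed. In this standard formulation, the DoLP indicates how strongly the light is polarized, while the AoLP gives the orientation that would correspond to the fiber direction.

```python
import math


def linear_polarization(i0, i45, i90, i135):
    """Return (intensity, dolp, aolp_degrees) from four polarizer-channel intensities."""
    s0 = (i0 + i45 + i90 + i135) / 2.0  # total intensity
    s1 = i0 - i90                       # horizontal vs. vertical component
    s2 = i45 - i135                     # +45 deg vs. -45 deg component
    if s0 <= 0:
        return 0.0, 0.0, 0.0            # no signal: polarization is undefined
    dolp = math.hypot(s1, s2) / s0
    # AoLP is half the Stokes-vector angle, wrapped into [0, 180) degrees.
    aolp = (0.5 * math.degrees(math.atan2(s2, s1))) % 180.0
    return s0, dolp, aolp


def malus(intensity, light_angle_deg, filter_angle_deg):
    """Intensity of fully polarized light behind an ideal polarizer (Malus's law)."""
    delta = math.radians(light_angle_deg - filter_angle_deg)
    return intensity * math.cos(delta) ** 2


if __name__ == "__main__":
    # Simulate one 2 x 2 pixel block viewing fully polarized light at 30 degrees.
    channels = [malus(1.0, 30.0, a) for a in (0.0, 45.0, 90.0, 135.0)]
    s0, dolp, aolp = linear_polarization(*channels)
    print(f"intensity={s0:.3f}, DoLP={dolp:.3f}, AoLP={aolp:.1f} deg")
```

Mapping the AoLP of each pixel block to a color would yield a false-color orientation image of the kind shown in the figure above.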