Visual programming speeds robotics, vision growth

Andrew Wilson, Editor

Hidden away in a corner of the 2007 International Robots & Vision Show (Rosemont, IL, USA) was a very large company demonstrating a Windows-based environment that lets developers create robotics applications across a variety of hardware. The company, Microsoft (Redmond, WA, USA; msdn.microsoft.com/robotics), showed the latest version of Microsoft Robotics Studio, a software environment designed to make it easy for hobbyists, academics, and commercial developers to create robotics applications. It also demonstrated the environment’s Visual Programming Language (VPL), a graphical, dataflow-based programming model for developing robotics and robot-vision applications.

“Several challenges currently face the system integrator in developing these types of applications,” says Joseph Fernando, a senior program manager and architect with Microsoft’s robotics group. “These include integrating a heterogeneous collection of devices with varying requirements, configuring them in an environment that could be potentially highly distributed, asynchronously coordinating activity within these devices, and dynamically controlling the multiple components used in such systems.” Furthermore, each of these components must process its data concurrently.

“Currently, most robot manufacturers have their own programming languages and protocols that a system integrator must learn, and most applications are developed with tight coupling to the hardware,” says Fernando. “And there is neither a common programming model nor an environment between them. Once a program is developed it becomes difficult to integrate additional devices or replace existing devices that have higher performance characteristics. In addition, it is difficult to transfer the program to another similar system.

“The community asked for a common software environment in which the developers, as well as the wider industry, can abstract and represent these devices. This would enable interactions to be orchestrated with a higher degree of device agnosticism, allowing reuse and transportability and enabling multiple tasks to run concurrently on heterogeneous distributed environments.”

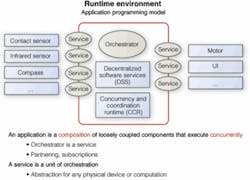

This is precisely what Fernando and his colleagues have achieved with Microsoft’s Robotics Studio. In essence, an application built using the software is a composition of loosely coupled components, called Services, that execute concurrently. At the heart of this model are two technologies: Decentralized Software Services (DSS) and the Concurrency and Coordination Runtime (CCR). CCR makes it simple to write asynchronous programs that can concurrently process inputs and outputs from multiple devices. DSS handles messaging between services, making it possible to write distributed applications.
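The CCR idea of feeding inputs from several devices to independent handlers can be sketched in Python. This is not the CCR API, which is a .NET library; here `asyncio` queues play the role of message ports, and the device names, readings, and delays are hypothetical. Each device posts readings at its own pace, and each receiver handles its own port's messages as they arrive without waiting on the other.

```python
import asyncio


async def device(name, port, readings, delay):
    """Hypothetical device: posts each reading to its own queue (its "port")."""
    for value in readings:
        await asyncio.sleep(delay)  # simulate sensor latency
        await port.put((name, value))
    await port.put((name, None))  # sentinel: this device has finished


async def receiver(port, log):
    """Handle messages from one port as they arrive, without blocking other ports."""
    while True:
        name, value = await port.get()
        if value is None:
            break
        log.append((name, value))


async def main():
    log = []
    laser_port, bumper_port = asyncio.Queue(), asyncio.Queue()
    # Run both devices and both receivers concurrently on one event loop.
    await asyncio.gather(
        device("laser", laser_port, [1.2, 0.9, 0.6], 0.01),
        device("bumper", bumper_port, [False, True], 0.015),
        receiver(laser_port, log),
        receiver(bumper_port, log),
    )
    return log


if __name__ == "__main__":
    for name, value in asyncio.run(main()):
        print(name, value)
```

Because each receiver awaits only its own queue, a slow device never stalls the handling of a faster one, which is the kind of asynchronous coordination CCR is meant to simplify.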

In this environment, the same programming model is used whether a program runs locally on a machine or is distributed across a network. Each device in a system is abstracted and represented as a service. “A service can represent any form of device, as well as computation, by abstracting the externally observable and modifiable properties into the service’s structured ‘State’ and defining messages, called ‘Operations’, that can act on this State,” says Fernando. “Our runtime environment architecture conforms to the Representational State Transfer (REST) model. Interaction with the system and robot services is achieved by sending and receiving [Operation] messages to and from the appropriate service.” Together, the State and Operations form the service contract, which is uniquely addressable.
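To make the State/Operations idea concrete, here is a minimal Python sketch of a service whose State is a small structured record and whose Operations arrive as messages. The `MotorService`, `MotorState`, and `Message` names, the address string, and the choice of `Get`, `Replace`, and `Update` as operation names are illustrative assumptions, not the actual DSS contract or API.

```python
from dataclasses import dataclass, replace
from typing import Any


@dataclass(frozen=True)
class MotorState:
    """Hypothetical structured State: the externally observable, modifiable properties."""
    power: float = 0.0
    enabled: bool = True


@dataclass
class Message:
    operation: str  # illustrative operation names: "Get", "Replace", "Update"
    body: Any = None


class MotorService:
    """Minimal sketch of a service: a State plus the Operations that act on it."""

    def __init__(self, address: str):
        self.address = address  # hypothetical unique address (a URI-like string)
        self.state = MotorState()

    def handle(self, msg: Message) -> MotorState:
        if msg.operation == "Get":  # read the whole State
            return self.state
        if msg.operation == "Replace":  # swap in a complete new State
            self.state = msg.body
            return self.state
        if msg.operation == "Update":  # change only the named fields
            self.state = replace(self.state, **msg.body)
            return self.state
        raise ValueError(f"unsupported operation: {msg.operation}")


if __name__ == "__main__":
    motor = MotorService("http://localhost/motor/left")
    motor.handle(Message("Update", {"power": 0.5}))
    print(motor.handle(Message("Get")))  # MotorState(power=0.5, enabled=True)
```

Clients never touch `state` directly; every read or change goes through a message, which is what lets the same interaction work whether the service is local or remote.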

A program can instantiate runtime instances of a service based on the contract it offers, retrieve or manipulate data produced by the service through the operations it exposes, or terminate it. These services are reusable across many different systems. “Further, services are composable,” says Fernando, “making it possible to build higher-order services that provide advanced functionality, such as ‘Differential Drive’ or ‘Ackermann steering’ built from lower-level services such as motor services that bind more closely with the hardware.”
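Composition can be sketched the same way: a hypothetical `DifferentialDrive` service exposes drive-level operations but is built only from two lower-level `Motor` services. The class and method names and the normalized -1.0 to 1.0 power range are assumptions for illustration; they are not Robotics Studio's generic differential drive contract.

```python
class Motor:
    """Hypothetical low-level motor service that binds closely to the hardware."""

    def __init__(self, name: str):
        self.name = name
        self.power = 0.0

    def set_power(self, power: float) -> None:
        # Clamp to an assumed normalized range of -1.0 (full reverse) to 1.0 (full forward).
        self.power = max(-1.0, min(1.0, power))


class DifferentialDrive:
    """Higher-order service composed solely of two lower-level motor services."""

    def __init__(self, left: Motor, right: Motor):
        self.left, self.right = left, right

    def set_drive_power(self, left_power: float, right_power: float) -> None:
        # Forward each wheel command to the corresponding motor service.
        self.left.set_power(left_power)
        self.right.set_power(right_power)

    def rotate(self, power: float) -> None:
        # Spin in place by driving the wheels in opposite directions;
        # with this sign convention, positive power turns the robot left.
        self.set_drive_power(-power, power)


if __name__ == "__main__":
    drive = DifferentialDrive(Motor("left"), Motor("right"))
    drive.rotate(0.3)
    print(drive.left.power, drive.right.power)  # -0.3 0.3
```

Code written against `DifferentialDrive` only asks for drive behavior; which motors sit underneath is decided when the drive is constructed.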

When a higher-order service is created, it can be instructed how to bind with lower-level services through a manifest. This facilitates separation of functionality and breaks tight hardware coupling, increasing application reuse and transportability.
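The manifest's role can be illustrated with a small binding step. In Robotics Studio the manifest is an XML file read by the runtime; in this hedged Python sketch a plain dictionary stands in for it, and the contract names, the `channel` field, and both motor classes are hypothetical. The drive code is identical in both cases; only the manifest decides whether its partners are hardware or simulated motors.

```python
class HardwareMotor:
    """Hypothetical motor service bound to a physical drive (hardware I/O omitted)."""
    kind = "hardware"

    def __init__(self, channel: int):
        self.channel, self.power = channel, 0.0

    def set_power(self, power: float) -> None:
        self.power = power  # a real service would command the motor controller here


class SimulatedMotor:
    """Hypothetical motor service backed by a simulator (physics omitted)."""
    kind = "simulated"

    def __init__(self, channel: int):
        self.channel, self.power = channel, 0.0

    def set_power(self, power: float) -> None:
        self.power = power  # a real service would update the simulated wheel here


# Registry from contract name to implementation; names are illustrative only.
CONTRACTS = {"motor/hardware": HardwareMotor, "motor/simulated": SimulatedMotor}


class DifferentialDrive:
    """Higher-order service: knows only that it has a left and a right motor."""

    def __init__(self, left, right):
        self.left, self.right = left, right

    def set_drive_power(self, left_power: float, right_power: float) -> None:
        self.left.set_power(left_power)
        self.right.set_power(right_power)


def build_drive(manifest: dict) -> DifferentialDrive:
    """Bind the drive's partners to whatever implementations the manifest names."""
    def bind(role: str):
        entry = manifest[role]
        return CONTRACTS[entry["contract"]](entry["channel"])
    return DifferentialDrive(bind("left"), bind("right"))


PHYSICAL = {"left": {"contract": "motor/hardware", "channel": 0},
            "right": {"contract": "motor/hardware", "channel": 1}}
SIMULATED = {"left": {"contract": "motor/simulated", "channel": 0},
             "right": {"contract": "motor/simulated", "channel": 1}}

if __name__ == "__main__":
    for manifest in (PHYSICAL, SIMULATED):
        drive = build_drive(manifest)
        drive.set_drive_power(0.4, 0.4)  # identical application code either way
        print(drive.left.kind, drive.left.power)
```

Swapping `PHYSICAL` for `SIMULATED` changes which classes are instantiated without touching `DifferentialDrive` or the code that calls it.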

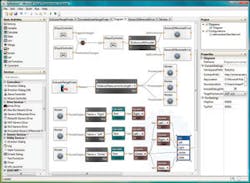

Microsoft also provides many tools with which to develop robotics applications. At the highest level is a visual programming environment in which service blocks are dragged and dropped onto a worksheet; linking blocks together defines the messages that pass between them. To develop services for Microsoft’s Robotics Studio, programmers can use several authoring languages, including those in Microsoft Visual Studio (C# and Visual Basic .NET), as well as scripting languages such as IronPython.

At the Robots & Vision Show, Fernando demonstrated how a program developed with the graphical programming environment can be run in multiple configurations when higher-order services are used. In the Visual Programming Language environment, he connected an Xbox 360 game controller to the generic differential drive. He then bound the generic differential drive to an iRobot Create platform by specifying a manifest and ran the program, which let him control the physical robot.
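The article does not say how the controller's stick position becomes wheel commands. One common approach for a differential drive, assumed here rather than taken from Robotics Studio, is “arcade” mixing: forward deflection drives both wheels, while sideways deflection adds to one wheel and subtracts from the other. The sketch assumes stick axes already normalized to -1.0 to 1.0 and an arbitrary 0.1 dead zone.

```python
from typing import Tuple


def apply_dead_zone(value: float, dead_zone: float = 0.1) -> float:
    """Treat small stick deflections around center as zero (threshold is assumed)."""
    return 0.0 if abs(value) < dead_zone else value


def stick_to_wheel_powers(x: float, y: float, dead_zone: float = 0.1) -> Tuple[float, float]:
    """Map a thumbstick position to (left, right) wheel powers.

    x: sideways deflection, -1.0 (full left) to 1.0 (full right)
    y: forward deflection, -1.0 (full back) to 1.0 (full forward)
    """
    x, y = apply_dead_zone(x, dead_zone), apply_dead_zone(y, dead_zone)
    left, right = y + x, y - x  # pushing right speeds the left wheel, turning right
    # Scale both wheels down together so neither exceeds full power,
    # preserving the ratio between them (and thus the turn radius).
    scale = max(1.0, abs(left), abs(right))
    return left / scale, right / scale


if __name__ == "__main__":
    for stick in [(0.0, 1.0), (1.0, 0.0), (0.5, 0.5), (0.05, -0.05)]:
        print(stick, "->", stick_to_wheel_powers(*stick))
```

The resulting pair is exactly what a generic drive-power operation needs, so the same mapping works whether the drive is bound to a physical or a simulated robot.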

Then the manifest was changed so that the program bound to a simulated iRobot Create, giving Fernando the ability to drive the robot within Microsoft Robotics Studio’s Simulation Visualization tool. The Simulation Visualization tool provides a 3-D rendering of environments, as well as objects in motion, with real-world physics provided through AGEIA PhysX technology. Going from the physical robot to the simulator required no changes to the code.

Fernando also demonstrated how a simple program could be developed to control a Pioneer P3-DX mobile robot (www.activrobots.com) equipped with a laser rangefinder from SICK (Minneapolis, MN, USA; www.sick.com). As the robot moves, the laser scanner detects objects in its path and the program determines which one is closest. Because the sensor’s 180° field of view is divided into three sections, the program can choose among three directions in which to move. Here, three instances of the same message, processed along three separate paths that are transparent to the user, compute the result. Because the P3-DX was mounted with simulated cameras, Fernando could also capture still images and video from their viewpoints within the simulator.
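The three-section decision can be sketched as follows. The sketch assumes the scan arrives as a list of ranges ordered from the robot's right (0°) to its left (180°), splits it into three roughly equal sectors, finds the nearest object in each, and drives forward if the center is clear, otherwise turning toward the side with more clearance. The 1 m `safe_distance` and the decision rule itself are assumptions; the article does not describe the demo program's exact logic.

```python
from typing import List, Sequence


def closest_per_sector(ranges: Sequence[float], sectors: int = 3) -> List[float]:
    """Split a scan into roughly equal angular sectors and return the nearest range in each."""
    n = len(ranges)
    if n < sectors:
        raise ValueError("need at least one reading per sector")
    # Integer boundaries guarantee every sector gets at least one reading.
    bounds = [i * n // sectors for i in range(sectors + 1)]
    return [min(ranges[bounds[i]:bounds[i + 1]]) for i in range(sectors)]


def choose_direction(ranges: Sequence[float], safe_distance: float = 1.0) -> str:
    """Pick "right", "forward", or "left" from a 180-degree scan.

    Assumes ranges[0] points to the robot's right and ranges[-1] to its left,
    and that ranges and safe_distance share a unit (meters here).
    """
    right, center, left = closest_per_sector(ranges, 3)
    if center >= safe_distance:
        return "forward"  # nothing close enough ahead to matter
    # Otherwise turn toward the side whose nearest object is farther away.
    return "left" if left > right else "right"


if __name__ == "__main__":
    scan = [2.0] * 60 + [0.5] * 60 + [5.0] * 61  # obstacle dead ahead, left side open
    print(choose_direction(scan))
```

The three per-sector minimums are independent of one another, so they could also be computed in parallel, loosely mirroring the three separate paths described above.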

Microsoft has determined that a key to the success of its Robotics Studio will be providing developers with services for the cameras, robots, sensors, and actuators needed to develop and configure a system. The company is currently working with more than 40 machine-vision and robot providers to supply such services. Two of the companies providing machine-vision software support for Robotics Studio are Braintech (Vancouver, BC, Canada; www.braintech.com), whose Volts-IQ package performs object tracking, and RoboRealm (www.roborealm.com), which offers software for computer vision, image processing, and robot vision.