The history of machine vision

Machine vision as a concept dates back as far as the 1930s, when Electronic Sorting Machines (then located in New Jersey) offered food sorters based on specific filters and photomultiplier detectors. Some of the most significant inventions and discoveries that led to the development of machine vision systems, however, date back much, much further.

To thoroughly chronicle this, wrote Andy Wilson in "Keystones of machine vision systems design," from the Vision Systems Design 200th anniversary issue in 2013, one could begin by highlighting the development of early Egyptian optical lens systems dating back to 700 BC, the introduction of punched paper cards in 1801 by Joseph Marie Jacquard that allowed a loom to weave intricate patterns automatically, or Maxwell's 1873 unified theory of electricity and magnetism.

Andy’s article takes a deep look back at the numerous disparate technologies that have paved the way for today’s off-the-shelf machine vision components.

Keystones of machine vision systems design

While it is true that machine vision systems have only been deployed for less than a century, some of the most significant inventions and discoveries that led to the development of such systems date back far longer. To thoroughly chronicle this, one could begin by highlighting the development of early Egyptian optical lens systems dating back to 700 BC, the introduction of punched paper cards in 1801 by Joseph Marie Jacquard that allowed a loom to weave intricate patterns automatically or Maxwell's 1873 unified theory of electricity and magnetism.

To encapsulate the history of optics, physics, chemistry, electronics, computer and mechanical design into a single article, however, would, of course, be a momentous task. So, rather than take this approach, this article will examine how the discoveries and inventions by early pioneers have impacted more recent inventions such as the development of solid-state cameras, machine vision algorithms, LED lighting and computer-based vision systems.

Capturing images

Although descriptions of the pinhole camera date back to as early as the 5th century BC, it was not until about 1800 that Thomas Wedgwood, the son of a famous English potter, attempted to capture images using paper or white leather treated with silver nitrate. Following this, Louis Daguerre and others demonstrated that a silver-plated copper plate exposed under iodine vapor would produce a coating of light-sensitive silver iodide on the surface, with the resultant fixed plate producing a replica of the scene.

Such developments of the mid-19th century were followed by others, notably those of Henry Fox Talbot in England, who showed that paper impregnated with silver chloride could be used to capture images. While this work would lead to the development of a multi-billion dollar photographic industry, it is interesting that, during the same time period, others were studying methods of capturing images electronically.

In 1857, Heinrich Geissler, a German physicist, developed a gas discharge tube filled with rarefied gases that would glow when a current was applied to the two metal electrodes at each end. Modifying this invention, Sir William Crookes discovered that streams of electrons could be projected towards the end of such a tube using a cathode-anode structure common in cathode ray tubes (CRTs).

In 1926, Alan Archibald Campbell-Swinton attempted to capture an image from such a tube by projecting an image onto a selenium-coated metal plate scanned by the CRT beam. Such experiments were commercialized by Philo Taylor Farnsworth, who in 1927 demonstrated a working version of such a video camera tube, known as an image dissector.

These developments were followed by the introduction of the image Orthicon and Vidicon by RCA in 1939 and the 1950s, and by Philips' Plumbicon, Hitachi's Saticon and Sony's Trinicon, all of which use similar principles. These camera tubes, developed originally for television applications, were the first to find their way into cameras developed for machine vision applications.

Needless to say, being tube-based, such cameras were not exactly purpose-built for rugged applications or those subject to high levels of EMI. This was to change when, in 1969, Willard Boyle and George E. Smith, working at AT&T Bell Labs, showed how charge could be shifted along the surface of a semiconductor in what was known as a "charge bubble device". Although they were later jointly awarded a Nobel Prize for the invention of the CCD concept, it was English physicist Michael Tompsett, a former researcher at the English Electric Valve Company (now e2V; Chelmsford, England; www.e2v.com), who, in 1971 while working at Bell Labs, showed how the CCD could be used as an imaging device.

Three years later, the late Bryce Bayer, while working for Kodak, showed how by applying a checkerboard filter of red, green, and blue to an array of pixels on an area CCD array, color images could be captured using the device.
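
The checkerboard idea can be illustrated with a short sketch. The code below is not taken from Bayer's work; it assumes an RGGB tile layout (red and green alternating on even rows, green and blue on odd rows), and the function names `bayer_channel` and `bayer_mosaic` are hypothetical. It simply shows that each pixel keeps a single color sample and that green samples occur twice as often as red or blue.

```python
# Illustrative sketch only: assumes an RGGB tile layout, which is one
# common arrangement of a red/green/blue checkerboard filter.
_CHANNEL_INDEX = {"R": 0, "G": 1, "B": 2}


def bayer_channel(y, x):
    """Return which color filter ("R", "G" or "B") covers pixel (y, x)."""
    if y % 2 == 0:
        return "R" if x % 2 == 0 else "G"
    return "G" if x % 2 == 0 else "B"


def bayer_mosaic(rgb_image):
    """Reduce an image of (r, g, b) tuples to one sample per pixel."""
    return [
        [pixel[_CHANNEL_INDEX[bayer_channel(y, x)]] for x, pixel in enumerate(row)]
        for y, row in enumerate(rgb_image)
    ]


if __name__ == "__main__":
    # A flat 2 x 2 test image in which every pixel is (10, 20, 30).
    flat = [[(10, 20, 30), (10, 20, 30)], [(10, 20, 30), (10, 20, 30)]]
    print(bayer_mosaic(flat))
```

A full-color image is then recovered by interpolating the two missing samples at each pixel from its neighbors, a step this sketch leaves out.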

While the CCD transfers collected charge from each pixel during readout and erases the image, scientists at General Electric in 1972 developed an X-Y array of addressable photosensitive elements known as a charge injection device (CID). Unlike the CCD, the charge collected is retained in each pixel after the image is read and only cleared when charge is "injected" into the substrate. Using this technology, the blooming and smearing artifacts associated with CCDs are eliminated. Cameras based around this technology were originally offered by CIDTEC, now part of Thermo Fisher Scientific (Waltham, MA; www.thermoscientific.com).

While the term active pixel image sensor or CMOS image sensor was not to emerge for two decades, previous work on such devices dates back as far as 1967 when Dr. Gene Weckler described such a device in his paper "Operation of pn junction photodetectors in a photon flux integrating mode," IEEE J. Solid-State Circuits (http://bit.ly/12SnC7O).

Despite this, CMOS imagers were not to become widely adopted for the next thirty years, due in part to the variability of the CMOS manufacturing process. Today, however, many manufacturers of active pixel image sensors widely tout the performance of such devices as comparable to that of CCDs.

Building such devices is expensive, however, and even with the emergence of "fabless" developers, just a handful of vendors currently offer CCD and CMOS imagers. Of these, perhaps the best known are Aptina (San Jose, CA, USA; www.aptina.com), CMOSIS (now ams Sensors Belgium; Antwerp, Belgium; http://cmosis.com), Sony Electronics (Park Ridge, NJ; www.sony.com) and Truesense Imaging (now ON Semiconductor; Phoenix, AZ, USA; www.onsemi.com), all of whom offer a variety of devices in multiple configurations.

While the list of imager vendors may be small, the emergence of such devices has spawned literally hundreds of camera companies worldwide. While many target low-cost applications such as webcams, others such as Basler (Ahrensburg, Germany; www.baslerweb.com), Imperx (Boca Raton, FL, USA; www.imperx.com) and JAI (San Jose, CA, USA; www.jai.com) are firmly focused on the machine vision and image processing markets, often incorporating on-board FPGAs into their products.

Lighting and illumination

Although Thomas Edison is widely credited with the invention of the first practical electric light, it was Alessandro Volta, the inventor of the forerunner of today's storage battery, who noticed that when wires were connected to the terminals of such devices, they would glow.

In 1812, using a large voltaic battery, Sir Humphry Davy demonstrated that an arc discharge would occur, and in 1860 Michael Faraday, an early associate of Davy's, demonstrated a lamp exhausted of air that used two carbon electrodes to produce light.

Building on these discoveries, Edison formed the Edison Electric Light Company in 1878 and demonstrated his version of an incandescent lamp just one year later. To extend the life of such incandescent lamps, Alexander Just and Franjo Hanaman developed and patented an electric bulb with a tungsten filament in 1904, while showing that lamps filled with an inert gas produce a higher luminosity than vacuum-based tubes.

Just as the invention of the incandescent lamp predates Edison, so too does that of the halogen lamp. As far back as 1882, chlorine was used to prevent blackening of the lamp and slow the thinning of the tungsten filament. However, it was not until Elmer Fridrich and Emmett Wiley, working for General Electric in Nela Park, Ohio, patented their version of the halogen lamp in 1955 that such illumination devices became practical.

Like the invention of the incandescent lamp, the origins of the fluorescent lamp date back to the mid-19th century when, in 1857, Heinrich Geissler, a German physicist, developed a gas discharge tube filled with rarefied gases that would glow when a current was applied to the two metal electrodes at each end. As well as leading to the invention of commercial fluorescent lamps, this discovery would form the basis of tube-based image capture devices in the 20th century (see "Capturing images").

In 1896, Daniel Moore, building on Geissler's discovery, developed a fluorescent lamp that used nitrogen gas and founded his own companies to market it. After these companies were purchased by General Electric, Moore went on to develop a miniature neon lamp.

While incandescent and fluorescent lamps became widely popular in the 20th century, it would be research in electroluminescence that would form the basis of the introduction of solid-state LED lighting. While electroluminescence was discovered by Henry Round working at Marconi Labs in 1907, it was Oleg Losev who, in pioneering work in the mid-1920s, observed light emission from zinc oxide and silicon carbide crystal rectifier diodes when a current was passed through them (see "The life and times of the LED," http://bit.ly/o7axVN).

Numerous papers published by Mr. Losev constitute the discovery of what is now known as the LED. As with many other such discoveries, it would be years before such ideas could be commercialized. Indeed, it would not be until 1962 that Dr. Nick Holonyak, while working at General Electric and experimenting with GaAsP, produced the world's first practical red LED. One decade later, Dr. M. George Craford, a former graduate student of Dr. Holonyak, invented the first yellow LED. Further advances followed with the development of blue and phosphor-based white LEDs.

For the machine vision industry, the development of such low-cost, long-life and rugged light sources has led to the formation of numerous lighting companies, including Advanced Illumination (Rochester, VT, USA; www.advancedillumination.com), CCS America (Burlington, MA, USA; www.ccsamerica.com), ProPhotonix (Salem, NH, USA; www.prophotonix.com) and Spectrum Illumination (Montague, MI, USA; www.spectrumillumination.com), that all offer LED lighting products in many different types of configurations.

Interface standards

The evolution of machine vision owes as much to the television and broadcast industry as it does to the development of digital computers. As programmable vacuum tube based computers were emerging in the early 1940s, engineers working on the National Television System Committee (NTSC) were formulating the first monochrome analog NTSC standard.

Adopted in 1941, this was modified in 1953 in what would become the RS-170a standard to incorporate color while remaining compatible with the monochrome standard. Today, RS-170 is still being used in numerous digital CCD and CMOS-based cameras and frame grabber boards, allowing 525-line images to be captured and transferred at 30 frames/s.

Just as open computer bus architectures led to the development of both analog and digital camera interface boards, television standards committees followed an alternative path introducing high-definition serial digital interfaces such as SDI and HD-SDI. Although primarily developed for broadcast equipment, these standards are also supported by computer interface boards allowing HDTV images to be transferred to host computers.

To allow these computers to be networked together, Ethernet, originally developed at Xerox PARC in the mid-1970s, was formally standardized in 1985 and has become the de facto standard for local area networks. At the same time, serial buses such as FireWire (IEEE 1394), under development since 1986 by Apple Computer, were widely adopted in the mid-1990s by many machine vision camera companies.

Like FireWire, the Universal Serial Bus (USB), introduced in a similar time frame by a consortium of companies including Intel and Compaq, was also to become widely adopted by both machine vision camera companies and manufacturers of interface boards.

When first introduced, however, these standards could not support the higher bandwidths of machine vision cameras and by their very nature were non-deterministic. Because no high-speed point-to-point interface formally existed, the Automated Imaging Association (Ann Arbor, MI) formed the Camera Link committee in the late 1990s. Led by companies such as Basler and JAI, the well-known Camera Link standard was introduced in October 2000 (http://bit.ly/1cgEdKH).

For some, however, even the 680 MByte/s data transfer rate was not sufficient to support the data rates demanded by (at the time) high-performance machine vision cameras, and it was Basler and others that, in 2004, by reassigning certain pins in the Camera Link specification, managed to attain an 850 MByte/s transfer rate.

Just as this point-to-point protocol was gaining hold, other technologies such as Gigabit Ethernet were emerging to challenge the distance limitations of the Camera Link protocol. In 2006, for example, Pleora Technologies (Kanata, ON, Canada; www.pleora.com) pioneered the introduction of the GigE Vision standard which, although primarily based on the Gigabit Ethernet standard, incorporated numerous additions such as how camera data could be more effectively streamed, how systems developers could control and configure devices and, perhaps more importantly, the GenICam generic programming interface (http://bit.ly/13TDlba) for different types of machine vision cameras.

At the same time, the limitations of the Camera Link interface were posing problems for systems integrators because even the extended 850 MByte/s interface required multiple connectors and still could not support emerging higher speed CMOS cameras. So it was that in 2008, a consortium of companies led by Active Silicon (Iver, United Kingdom; www.activesilicon.com), Adimec (Eindhoven, The Netherlands; www.adimec.com) and EqcoLogic (Brussels, Belgium; www.eqcologic.com) introduced the CoaXPress interface standard that, as its name implies, is a high-speed serial communications interface that allows 6.25 Gbit/s to be transferred over a single co-ax cable. To increase this speed further, multiple channels can be used.

Under development at the same time, and primarily led by Teledyne DALSA (Waterloo, ON, Canada; www.teledynedalsa.com), the Camera Link HS (CLHS) standard, supposedly the successor to the Camera Link standard, offers scalable bandwidths from 300 MBytes/s to 16 GBytes/s. At the time of writing, however, more companies have endorsed the CoaXPress standard than CLHS.

While high-speed standards such as CXP and CLHS support high-performance cameras, the emergence of the USB 3.0 standard in 2008 offered systems integrators a way to attain a maximum throughput of 400 MBytes/s, over 10 times faster than USB 2.0.
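
To see how these figures relate to a camera's requirements, the following sketch compares a camera's raw data rate with the peak rates quoted in this article. It is a back-of-envelope calculation, not a sizing tool: it assumes 1 MByte = 10^6 bytes, treats the 6.25 Gbit/s CoaXPress figure as a raw line rate, and ignores protocol and encoding overhead, so real usable throughput will be lower. The function names and the example camera are hypothetical.

```python
# Peak rates as quoted in the text, in MBytes/s (1 MByte = 10**6 bytes assumed).
INTERFACES_MBYTES_PER_S = {
    "Camera Link": 680.0,
    "Camera Link (extended)": 850.0,
    "CoaXPress (one channel)": 6.25e9 / 8 / 1e6,  # raw 6.25 Gbit/s line rate
    "USB 3.0": 400.0,
}


def camera_rate_mbytes_per_s(width, height, bits_per_pixel, frames_per_s):
    """Raw image data rate of a camera, ignoring blanking and overhead."""
    bytes_per_frame = width * height * bits_per_pixel / 8
    return bytes_per_frame * frames_per_s / 1e6


def interfaces_that_fit(rate_mbytes_per_s):
    """Names of interfaces whose quoted peak rate meets the required rate."""
    return [
        name
        for name, limit in INTERFACES_MBYTES_PER_S.items()
        if limit >= rate_mbytes_per_s
    ]


if __name__ == "__main__":
    # Hypothetical camera: 2048 x 2048 pixels, 8 bits/pixel, 100 frames/s.
    rate = camera_rate_mbytes_per_s(2048, 2048, 8, 100)
    print(round(rate, 1), interfaces_that_fit(rate))
```

For the hypothetical 2048 x 2048, 8-bit, 100 frames/s camera, the raw rate is roughly 419 MBytes/s, which on these figures exceeds the quoted USB 3.0 maximum but fits within the others.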

Like the original Gigabit Ethernet, the USB 3.0 standard was not well-suited to machine vision applications. Even so, FLIR (formerly Point Grey; Richmond, BC, Canada; www.ptgrey.com) was the first to introduce a camera for this interface, as early as 2009 (http://bit.ly/15gILiO). So it was that in January 2013, the USB Vision Technical Committee of the Automated Imaging Association announced the introduction of the USB 3.0 Vision standard, which builds on many of the advances of the GigE Vision standard, including device discovery, device control, event handling, and streaming data mechanisms. The standard will be supported by Point Grey and numerous others.

Computers and software

Although low-cost digital computers were not to emerge until the 1980s, research into the field of digital image processing dates back to the 1950s with pioneers such as Dr. Robert Nathan of JPL, who in 1959 helped develop imaging equipment to map the moon. In 1961, analog image data from the Ranger spacecraft was first converted to digital data using a video film converter and digitally processed by what NASA refers to as a "small" NCR 102D computer (http://1.usa.gov/162UGgI). In fact, it filled a room.

It was during the 1960s that many of the algorithms used in today's machine vision systems were developed. The pioneering work by Georges Matheron and Jean Serra on mathematical morphology in the 1960s led to the foundation of the Center of Mathematical Morphology at the École des Mines in Paris, France. Originally dealing with binary images, this work was later extended to include grey-scale images and the commonly known gradient, top-hat and watershed operators that are used in such software packages as Amerinex Applied Imaging's (Monroe Twp, NJ, USA; www.amerineximaging.com) Aphelion.
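
As a rough illustration of two of the operators named above, the sketch below computes a grey-scale morphological gradient (dilation minus erosion) and a white top-hat (image minus its opening). It is a minimal example under stated assumptions, not the implementation used in any particular package: it assumes a flat 3 x 3 structuring element, clips the window at the image border, and represents the image as a list of rows. All function names are hypothetical, and the watershed operator is not covered.

```python
def _window(img, y, x):
    """Pixel values in the 3 x 3 neighborhood of (y, x), clipped at borders."""
    h, w = len(img), len(img[0])
    return [
        img[j][i]
        for j in range(max(0, y - 1), min(h, y + 2))
        for i in range(max(0, x - 1), min(w, x + 2))
    ]


def _apply(img, reducer):
    """Replace every pixel by reducer() of its 3 x 3 neighborhood."""
    return [
        [reducer(_window(img, y, x)) for x in range(len(img[0]))]
        for y in range(len(img))
    ]


def erode(img):
    return _apply(img, min)  # grey-scale erosion: local minimum


def dilate(img):
    return _apply(img, max)  # grey-scale dilation: local maximum


def _subtract(a, b):
    return [[p - q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]


def gradient(img):
    """Morphological gradient: dilation minus erosion."""
    return _subtract(dilate(img), erode(img))


def top_hat(img):
    """White top-hat: the image minus its opening (erosion, then dilation)."""
    return _subtract(img, dilate(erode(img)))


if __name__ == "__main__":
    # A flat background of 10 with one bright pixel of 50 in the centre.
    image = [[10] * 5 for _ in range(5)]
    image[2][2] = 50
    for row in top_hat(image):
        print(row)
```

On this test image the top-hat isolates the single bright pixel, since it is smaller than the structuring element, while the gradient responds across the whole 3 x 3 region around it.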

In 1969, Professor Azriel Rosenfeld described many of the most commonly used algorithms of today in his book "Picture processing by computer" (Academic Press, 1969), and two years later, William K. Pratt and Harry C. Andrews founded the USC Signal and Image Processing Institute (SIPI), one of the first research organizations in the world dedicated to image processing.

During the 1970s, researchers at SIPI developed the basic theory of image processing and how it could be applied in image de-blurring, image coding and feature extraction. Indeed, early work on transform coding at SIPI now forms the basis of the JPEG and MPEG standards.

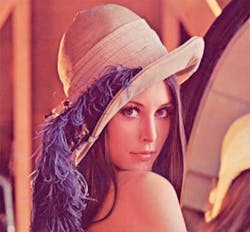

Tired of the standard test images used to compare the results of such algorithms, Dr. Alexander Sawchuk, now Leonard Silverman Chair Professor at the USC Viterbi School of Engineering (http://bit.ly/13vrXOB), then an assistant professor of electrical engineering at SIPI, digitized what was to become one of the most popular test images of the late 20th century.

Using a Muirhead wirephoto scanner, Dr. Sawchuk digitized the top third of Lena Soderberg, a Playboy centerfold from November 1972. Notable for its detail, color, high and low-frequency regions, the image became very popular in numerous research papers published in the 1970s. So popular, in fact, that in May 1997, Miss Soderberg was invited to attend the 50th Anniversary IS&T conference in Boston (http://bit.ly/1bfOTD). Needless to say, in today's rather more "politically correct" world, the image seems to have fallen out of favor!

Because of the lack of processing power offered by Von Neumann architectures, a number of companies introduced specialized image processing hardware in the mid-1980s. By incorporating proprietary and often parallel processing concepts, these machines were at once powerful and expensive.

Stand-alone systems by companies such as Vicom and Pixar were at the same time being challenged by modular hardware from companies such as Datacube, the developer of the first Q-bus frame grabber for Digital Equipment Corp (DEC) computers.

With the advent of PCs in the 1980s, board-level frame grabbers, processors and display controllers for the open architecture ISA bus began to emerge and, with them, software-callable libraries for image processing.

Today, with the emergence of the PC's PCI Express bus, off-the-shelf frame grabbers can be used to transfer images to the host PC at very high data rates using a number of different interfaces (see "Interface standards"). At the same time, the introduction of software packages from companies such as Microscan (now Omron Microscan; Renton, WA; www.microscan.com), Matrox Imaging (Dorval, QC, Canada; www.matrox.com), MVTec (Munich, Germany; www.mvtec.com), Teledyne DALSA, and Stemmer Imaging (Puchheim, Germany; www.stemmer-imaging.de) makes it increasingly easy to configure even the most sophisticated image processing and machine vision systems.