Next-Gen Machine Vision: Anomaly Detection for High-Speed, High-Mix Production Environments

Key Highlights

- The system is trained exclusively on ~200 conforming images, eliminating the need for defect samples during deployment.

- Deployment is rapid, typically completed within 45 minutes, significantly faster than traditional rule-based or supervised deep learning methods.

- It detects any deviation from normal appearance, enabling identification of unforeseen defect types such as contamination or process drift.

- It operates on existing camera hardware without specialized imaging equipment, which simplifies integration with current manufacturing systems.

- It achieves high throughput (>1,100 units/min) with real-world accuracy of ~99.5%, validated across more than 50 global manufacturing sites.

Machine vision inspection has traditionally depended on two foundational assumptions: (1) that defect examples can be collected in sufficient quantity, and (2) that product geometry and appearance remain stable enough for rule-based logic to function reliably over time. In practice, neither assumption holds consistently across modern manufacturing environments. New product variants, surface finish changes and the fundamental rarity of certain defect types create conditions under which conventional approaches either require extensive retraining cycles or fail entirely.

This article describes the technical architecture and deployment methodology of an anomaly-detection-based approach to AI visual inspection—one that inverts the conventional paradigm by training exclusively on conforming (good) product images, then flagging deviations from that learned distribution at inference time.

The Core Technical Challenge

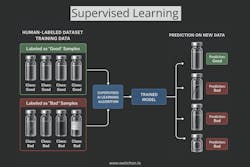

Traditional deep learning inspection systems use supervised classification: a convolutional neural network is trained on labeled images of both good and defective parts, and inference assigns each incoming image to a class. This approach achieves high accuracy when defect samples are plentiful and well represented. The practical constraint is data acquisition.

In many manufacturing contexts—particularly for low-volume, high-mix production or newly launched product lines—defect samples are scarce by definition. A plant that produces 1,000 units per day with a 0.1% defect rate generates approximately one defective part per day. Building a training dataset of several hundred labeled defect examples across multiple defect categories can take months, delaying deployment and leaving inspection gaps in the interim.
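
The arithmetic behind this estimate can be sketched in a few lines of Python. The production volume and defect rate come from the example above; the 300-sample target is only an assumed stand-in for "several hundred", and the function name is illustrative.

```python
import math


def days_to_collect(units_per_day: float, defect_rate: float, samples_needed: int) -> int:
    """Expected production days needed to observe `samples_needed` defective parts."""
    defects_per_day = units_per_day * defect_rate
    return math.ceil(samples_needed / defects_per_day)


# Figures from the text: 1,000 units/day at a 0.1% defect rate.
# The 300-sample target is a hypothetical value for "several hundred".
days = days_to_collect(1_000, 0.001, 300)
print(f"{days} production days (~{days / 30:.0f} months)")
```

At one expected defect per day, a 300-sample dataset takes roughly ten months of production to accumulate, before any split across defect categories is considered.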

A secondary challenge is defect taxonomy completeness. Supervised models generalize poorly to defect types not represented in training data. A model trained on surface scratches and voids will not reliably detect a novel contamination event or an out-of-tolerance dimensional variation unless those categories were anticipated and included in the training set.

Anomaly Detection: Technical Approach

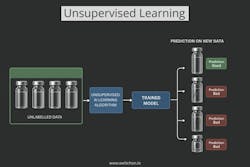

Anomaly detection reframes the problem. Rather than learning a discriminative boundary between good and defective classes, the model learns a generative or density-based representation of the conforming product distribution. At inference time, inputs that fall outside that learned distribution, or in regions of low probability density within it, are flagged as anomalous.
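
As a minimal sketch of the density-based idea, and not the production model described in this article, the following Python fits an independent Gaussian per feature dimension to conforming feature vectors and scores a new input by its root-mean-square z-score. The function names, the two-dimensional toy features and the independence assumption are all illustrative.

```python
import math
from statistics import mean, pstdev


def fit_normal_model(features):
    """Fit a per-dimension mean and standard deviation to conforming feature vectors."""
    columns = list(zip(*features))
    means = [mean(col) for col in columns]
    # Floor the standard deviation so a constant feature cannot cause division by zero.
    stds = [max(pstdev(col), 1e-6) for col in columns]
    return means, stds


def anomaly_score(x, model):
    """Root-mean-square z-score of x under the fitted model; higher means less likely."""
    means, stds = model
    squared_z = [((v - m) / s) ** 2 for v, m, s in zip(x, means, stds)]
    return math.sqrt(sum(squared_z) / len(squared_z))


# Toy conforming set: each row is a hypothetical 2-D feature vector for one good part.
conforming = [[0.50, 0.20], [0.52, 0.21], [0.48, 0.19], [0.51, 0.20], [0.49, 0.20]]
model = fit_normal_model(conforming)

typical = anomaly_score([0.50, 0.20], model)   # sits at the centre of the distribution
outlier = anomaly_score([0.80, 0.05], model)   # far from anything seen in training
print(f"typical={typical:.2f} outlier={outlier:.2f}")
```

Only conforming data enters `fit_normal_model`; the outlier receives a high score simply because it lies far from the learned distribution, not because the model has seen anything like it.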

Training on Conforming Images

The approach described here requires approximately 200 images of conforming product per inspection use case. These images are used to build an internal model of normal appearance, capturing expected surface texture, geometry, color distribution, and spatial relationships between features. No defect images are used to train the model; seeded-defect samples appear only in threshold validation.

This has a direct operational consequence: the system can be trained and deployed before any defective units have been observed on the line. Qualification of the inspection system does not depend on accumulating defect samples, which is particularly valuable during new product introduction or when transitioning between product generations.

Deviation Scoring and Thresholding

At inference time, each incoming image is compared against the learned conforming distribution. The system produces a deviation score for each image region, using learned feature maps that capture both global structure and local texture. Regions exceeding a configurable deviation threshold are marked for review.
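
Per-region scoring can be illustrated with a deliberately simplified sketch. The system described here scores regions using learned feature maps; the Python below substitutes mean tile intensity against a single conforming reference image purely for illustration, and all function names and values are assumptions.

```python
def patch_means(image, patch):
    """Mean intensity of each non-overlapping patch x patch tile, in row-major order."""
    rows, cols = len(image), len(image[0])
    means = []
    for r in range(0, rows, patch):
        row_means = []
        for c in range(0, cols, patch):
            tile = [image[i][j]
                    for i in range(r, min(r + patch, rows))
                    for j in range(c, min(c + patch, cols))]
            row_means.append(sum(tile) / len(tile))
        means.append(row_means)
    return means


def deviation_map(image, reference, patch):
    """Absolute per-region difference between an image and the conforming reference."""
    img, ref = patch_means(image, patch), patch_means(reference, patch)
    return [[abs(a - b) for a, b in zip(img_row, ref_row)]
            for img_row, ref_row in zip(img, ref)]


def flagged_regions(deviations, threshold):
    """(row, col) indices of regions whose deviation exceeds the threshold."""
    return [(r, c)
            for r, row in enumerate(deviations)
            for c, d in enumerate(row)
            if d > threshold]


# 4x4 grayscale toy images; the sample has a simulated blemish in the bottom-right tile.
reference = [[100] * 4 for _ in range(4)]
sample = [row[:] for row in reference]
sample[2][3] = 180
sample[3][3] = 180

deviations = deviation_map(sample, reference, patch=2)
print(flagged_regions(deviations, threshold=10.0))
```

The output is a list of region coordinates rather than a single pass/fail flag, which is what allows a flagged area to be localized for review.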

The threshold is set during validation using a set of known-good and seeded-defect samples. Because the model is not trained on defect classes, threshold calibration is the primary mechanism for tuning the tradeoff between false reject rate and defect escape rate. This calibration step is use-case specific and typically completed during the 45-minute deployment window.
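
One way to picture this calibration step, as a sketch under assumed data rather than the procedure used in deployment: given deviation scores for known-good and seeded-defect samples, choose the lowest threshold that keeps the false reject rate within a stated limit, then read off the resulting escape rate. The scores and the `calibrate_threshold` helper below are hypothetical.

```python
def calibrate_threshold(good_scores, defect_scores, max_false_reject_rate):
    """Return (threshold, false_reject_rate, escape_rate).

    Picks the lowest candidate threshold whose false reject rate on known-good
    samples is within the limit; a lower threshold lets fewer defects escape.
    A sample is rejected when its score is strictly greater than the threshold.
    """
    candidates = sorted(set(good_scores) | set(defect_scores))
    for threshold in candidates:
        false_rejects = sum(s > threshold for s in good_scores) / len(good_scores)
        if false_rejects <= max_false_reject_rate:
            escapes = sum(s <= threshold for s in defect_scores) / len(defect_scores)
            return threshold, false_rejects, escapes
    raise ValueError("no candidate threshold satisfies the false reject limit")


# Hypothetical validation scores; real values would come from the deployed model.
good = [0.10, 0.12, 0.15, 0.18, 0.20, 0.22, 0.25, 0.30, 0.35, 0.60]
seeded_defects = [0.55, 0.70, 0.90, 1.20]

lenient = calibrate_threshold(good, seeded_defects, max_false_reject_rate=0.10)
strict = calibrate_threshold(good, seeded_defects, max_false_reject_rate=0.0)
print(lenient)
print(strict)
```

Allowing a 10% false reject rate in this toy data catches every seeded defect, whereas insisting on zero false rejects pushes the threshold high enough that one of the four seeded defects escapes. That is the tradeoff the calibration step tunes.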

Hardware Integration

The system operates on existing camera hardware; no specialized imaging equipment is required. Integration points include PLC, MES, ERP and SCADA systems, enabling inline disposition decisions and traceability data capture without replacing existing infrastructure.

Deployment Methodology

The deployment process follows four sequential stages:

| 1. Capture | 2. Train | 3. Deploy | 4. Inspect |
| --- | --- | --- | --- |
| Acquire images from existing production-line cameras | ~200 conforming product images used to build the deviation model; no defect samples | Inspection model validated and live in approximately 45 minutes | System operates at 1,100+ units/min at ~99.5% real-world accuracy |

The elapsed time from image capture to a working inspection model is approximately 45 minutes for a new use case or SKU. Rule-based systems, by comparison, take days or weeks because inspection regions, thresholds and decision logic must be configured manually for each product variant. Typical supervised deep learning implementations also take days or weeks because a labeled dataset must be curated before training can begin.

Comparison with Conventional Inspection Approaches

The table below summarizes key technical and operational differences between anomaly-detection-based AI inspection, conventional supervised deep learning, and rule-based machine vision systems.

| Criteria | Anomaly Detection AI | Supervised Deep Learning AI | Rule-Based Vision System |
| --- | --- | --- | --- |
| Training data needed | ~200 conforming images only | Conforming + labeled defect samples | Conforming + defect samples for threshold calibration |
| Time to deploy (new SKU) | ~45 minutes | Days to weeks (dataset curation + training) | Days to weeks (manual rule configuration) |
| Handling novel defect types | Detects any deviation from normal distribution | Limited to trained defect classes | Detects only rules-specified anomalies |
| Throughput | 1,100+ units/min | Varies; typically limited by inference compute | Varies; typically limited by frame processing |
| Sensitivity to product change | Retrain on new conforming images; same workflow | Full dataset recollection and retraining | Full rule reconfiguration required |

The primary advantage of the anomaly detection approach is its generalization capability; because the model represents the entire conforming distribution rather than a discrete set of defect classes, it can flag defect types that were never anticipated during deployment. This is particularly relevant for contamination events, material substitution, and process drift, where the defect signature is not known in advance.

Industrial Application Areas

The anomaly detection approach is applicable across a range of inspection challenges where defect sample scarcity or product variety would limit conventional methods.

Automotive

Surface defect detection on cast and machined components, weld quality assessment, battery cell inspection, and bearing defect identification represent common use cases. The approach is well-suited to automotive environments where multiple part numbers share a common inspection station and rapid changeover is required.

Pharmaceutical and Life Sciences

Vial crack detection, fill level verification, cap and seal integrity, and label validation are representative applications. Pharmaceutical inspection is additionally subject to 21 CFR Part 11 requirements for electronic records and audit trails, which must be addressed at the system integration level.

Electronics

PCB defect detection, solder joint analysis, and missing component identification require sub-millimeter precision at full production speed. The anomaly detection approach handles the high visual complexity of PCB assemblies without requiring defect-class enumeration, which is impractical given the combinatorial variety of possible assembly failures.

Fast-Moving Consumer Goods (FMCG)

Packaging defect detection, seal integrity, fill consistency, and label alignment are common applications. The ability to deploy a new product model in less than one hour supports the high SKU velocity typical in FMCG manufacturing.

Accuracy and Performance Characterization

Reported real-world line accuracy across active deployments is approximately 99.5%. This figure is derived from production deployments rather than controlled laboratory conditions, which matters because real production environments introduce lighting variation, vibration, part-to-part dimensional variation, and handling marks that are absent from curated test datasets.

Throughput of 1,100+ units per minute is achieved on existing camera hardware, with no compute infrastructure beyond what inference itself requires. Latency characteristics are use-case dependent and are validated during the deployment window.
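
To put the throughput figure in latency terms, the short Python sketch below converts units per minute into a per-unit time budget. It assumes a single inspection lane in which each unit is processed before the next arrives, which is an illustrative simplification; the function name is hypothetical.

```python
def per_unit_budget_ms(units_per_minute: float) -> float:
    """Time available per unit, in milliseconds, for a single sequential lane."""
    return 60_000.0 / units_per_minute


budget = per_unit_budget_ms(1_100)
print(f"{budget:.1f} ms per unit")  # prints "54.5 ms per unit"
```

Under that assumption, image acquisition, inference and the disposition signal together must fit within roughly 55 ms per unit at 1,100 units per minute.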

Performance figures cited here reflect aggregate data from 50+ global plant deployments across automotive, pharmaceutical, electronics, and FMCG sectors, covering more than one billion inspected products.

Compliance and Quality System Integration

AI inspection systems deployed in regulated manufacturing environments must satisfy documentation, traceability, and validation requirements independent of the underlying inspection algorithm. Relevant standards and certifications applicable to anomaly-detection-based inspection systems include:

| Standard / Certification | Scope | Applicability |
| --- | --- | --- |
| SOC 2 | Data security, availability and processing integrity | All enterprise deployments |
| ISO 9001:2015 | Quality management system standard | Global manufacturing quality baseline |
| CE Certification | European conformity for products in the EU market | European plant deployments |
| 21 CFR Part 11 | FDA regulation for electronic records and electronic signatures | Pharmaceutical and life sciences lines |

Operational and Economic Impact

Manufacturers implementing AI visual inspection typically realize return on investment within 9 to 12 months, driven by reductions across three cost categories: defect escapes that reach the field (warranty claims, recalls, customer-facing quality failures); inspection labor (replacing shift-dependent manual checking with continuous automated inspection at full line speed); and scrap and rework costs from defects caught late in the value stream.

The economic case for inspection automation is strongest where defect escape costs are high, where manual inspection throughput limits line speed, or where product variety makes rule-based system maintenance expensive. The anomaly-detection approach reduces the cost of system deployment and changeover relative to supervised methods, which affects the ROI calculation particularly for high-mix environments.
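
The payback calculation implied by these cost categories can be sketched as follows. Every monetary figure in the example is a hypothetical placeholder chosen only to make the arithmetic concrete; none is drawn from deployment data, and the function name is illustrative.

```python
def payback_months(upfront_cost: float,
                   monthly_escape_savings: float,
                   monthly_labor_savings: float,
                   monthly_scrap_savings: float,
                   monthly_running_cost: float = 0.0) -> float:
    """Months until cumulative net savings equal the upfront cost."""
    net_monthly = (monthly_escape_savings + monthly_labor_savings
                   + monthly_scrap_savings - monthly_running_cost)
    if net_monthly <= 0:
        raise ValueError("net monthly savings must be positive for payback to occur")
    return upfront_cost / net_monthly


# Hypothetical inputs, not real customer figures.
months = payback_months(
    upfront_cost=120_000,
    monthly_escape_savings=6_000,
    monthly_labor_savings=4_000,
    monthly_scrap_savings=3_000,
    monthly_running_cost=1_000,
)
print(f"payback in {months:.0f} months")
```

Because deployment and changeover costs sit in the numerator or the running cost, lowering them shortens the payback period directly, which is why the effect is most visible in high-mix environments.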

Conclusion

Anomaly-detection-based AI visual inspection offers a technically sound alternative to supervised deep learning and rule-based vision for manufacturing environments where defect sample scarcity, product variety or rapid changeover cycles make conventional approaches impractical. By training exclusively on conforming product images, the approach eliminates the dependency on defect sample collection that limits conventional deployment timelines, while preserving the ability to detect novel defect types that fall outside any predefined taxonomy.

The approach has been validated across more than 50 active global plant deployments in automotive, pharmaceutical, electronics and FMCG sectors, inspecting more than one billion products. Reported real-world line accuracy of approximately 99.5% at throughput rates exceeding 1,100 units per minute demonstrates viability at industrial scale. The 45-minute deployment window per new use case lets inspection coverage keep pace with product introduction cadences that would otherwise outrun the qualification of new inspection models.

About the Author

Bhuvan Yadav

Bhuvan Yadav is an intern at SwitchOn with hands-on experience across computer vision, machine learning, and industrial AI deployment. He has worked on the systems behind DeepInspect, SwitchOn's anomaly-detection platform validated across 50+ global manufacturing sites and over one billion inspected products.