INFRARED IMAGING: Vineyard monitoring system combines global positioning and NIR imaging

While airborne remote sensing systems have been used for a number of years to monitor the health of crops, they are unaffordable for small and medium-sized producers. In addition, the resolution attainable with airborne systems is often insufficient to detect significant variation in the health of specific crops such as vines.

Verónica Sáiz-Rubio and her advisor Francisco Rovira-Más at the Polytechnic University of Valencia (Valencia, Spain) have developed a ground-based machine-vision system that combines infrared (IR) imaging and a global positioning system (GPS) that promises to reduce the cost of crop monitoring.

Mounted on a tractor from John Deere (Moline, IL, USA), the system consists of a Gigabit Ethernet camera from JAI (Copenhagen, Denmark) that is interfaced to a laptop computer located in the cabin. To select a particular view, the camera was equipped with either an 8- or 25-mm focal-length lens fitted with two bandpass filters: one centered at 324 nm in the ultraviolet (UV) band and the other centered at 880 nm in the IR band.

To assign a location to each image as it is captured, the tractor was also fitted with a Star Fire switch differential GPS receiver supplied by John Deere that provided a static positional accuracy of approximately 75 cm and a pass-to-pass accuracy of ±33 cm (SF1 signals).

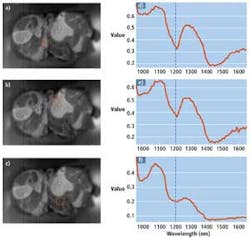

A dynamic threshold was applied to each of the captured images to simplify the estimation of vegetation within each image. Since the vigorous vines in the image are enhanced by the filter, there is a sharp drop in the histogram profile in the vicinity of the optimal threshold.

By computing and applying this threshold to the images, the total number of pixels classified as vigorous vegetation can be found. Dividing this number by the total number of pixels in the image (its resolution) then provides the percentage of vegetation for each image. The combination of the dynamic thresholds, the percentage of vegetation, and the global position of the system is then used to create a vigor map that plots distance against the percentage of vegetation (see Fig. 1).
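The thresholding and vegetation-percentage steps can be sketched in a few lines of Python. This is a minimal illustration rather than the researchers' implementation: the article does not name the thresholding algorithm, so Otsu's method is used here as a stand-in that picks a threshold between the two main histogram groups. The function names, the 8-bit grayscale input, and the assumption that each frame already carries a travelled distance in meters derived from GPS are all hypothetical.

```python
from typing import List, Sequence, Tuple

Image = Sequence[Sequence[int]]  # rows of 8-bit grayscale values (assumed format)


def histogram(image: Image, levels: int = 256) -> List[int]:
    """Count how many pixels fall on each gray level."""
    hist = [0] * levels
    for row in image:
        for value in row:
            hist[value] += 1
    return hist


def otsu_threshold(hist: Sequence[int]) -> int:
    """Stand-in dynamic threshold (Otsu): maximize between-class variance."""
    total = sum(hist)
    sum_all = sum(level * count for level, count in enumerate(hist))
    weight_bg = 0
    sum_bg = 0.0
    best_t, best_var = 0, -1.0
    for t, count in enumerate(hist):
        weight_bg += count
        if weight_bg == 0:
            continue  # no background pixels yet
        weight_fg = total - weight_bg
        if weight_fg == 0:
            break  # no foreground pixels left
        sum_bg += t * count
        mean_bg = sum_bg / weight_bg
        mean_fg = (sum_all - sum_bg) / weight_fg
        var_between = weight_bg * weight_fg * (mean_bg - mean_fg) ** 2
        if var_between > best_var:
            best_var, best_t = var_between, t
    return best_t


def vegetation_percentage(image: Image) -> float:
    """Percentage of pixels brighter than the per-image threshold."""
    threshold = otsu_threshold(histogram(image))
    total_pixels = sum(len(row) for row in image)
    vegetation = sum(1 for row in image for v in row if v > threshold)
    return 100.0 * vegetation / total_pixels


def vigor_profile(frames: Sequence[Tuple[float, Image]]) -> List[Tuple[float, float]]:
    """Pair each frame's distance (m, assumed GPS-derived) with its vegetation %."""
    return [(dist, vegetation_percentage(img)) for dist, img in frames]


if __name__ == "__main__":
    # Toy frame: 6 dark background pixels and 4 bright vegetation pixels.
    frame = [[20] * 6 + [200] * 4]
    print(vigor_profile([(0.0, frame)]))
```

Because the threshold is recomputed for every frame, the classification adapts to changes in illumination along the row. A uniform frame has no meaningful threshold, so real data would need a guard for that case.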

In tests carried out between July and September 2011 at the El Ardal Winery near Requena, Spain, the system was capable of segmenting near-infrared (NIR) filtered images by separating vegetation from a background composed of sky, soil, trellis wires, and vine trunks (see Fig. 2). According to Sáiz-Rubio, while the segmentation algorithm found correct thresholds in most of the NIR images, difficulties occurred in the UV band because of light reflections caused by the sky. Nevertheless, the use of UV, thermal IR, or multispectral techniques may prove useful in future systems.

Vigor maps generated with the system provide a comparative analysis of the health of the vines. A manual assessment of plant health will later be correlated with the captured data to make useful predictions regarding grape yield.

At VISION 2011, Sáiz-Rubio and Rovira-Más were awarded third place in the 2011 Higher Education Grant Program run by Edmund Optics (Barrington, NJ, USA), receiving a prize of €2000 worth of Edmund Optics products. Starting this month, the company is accepting applications for its 2012 Higher Education Grant Program awards.