NOVEL BLIND deconvolution techniques RESTORE BLURRED IMAGES

NOVEL BLIND deconvolution techniques RESTORE BLURRED IMAGES

By Andrew Wilson, Editor at Large

Images acquired from scientific, medical, and military applications are often degraded and require image-restoration techniques to highlight specific details. In addition, this degradation generally appears in the form of image blurring, where the image may appear out of

focus. If the cause of this blurring is well known, then deconvolution techniques such as inverse filtering can be used to deblur the image.

In real-world imaging systems, however, the cause of the blurring is not known, and only assumptions can be made about both the cause and the resultant image. In such cases, the cause of the blur and the image itself must be estimated using partial information about the imaging system. Several scientists and researchers are using this process, known as blind-image deconvolution, in attempts to automatically deblur images obtained from space telescopes, scanning-electron-microscope (SEM) images, and microscopy samples.

Blind deconvolution method

In most imaging systems, obtained images can be defined as the two-dimensional convolution of the true image with a linear shift-invariant blur or point-spread function (PSF). Mathematically, this can be represented as

g (x, y) = f (x, y) * h (x, y) + n (x, y)

where g (x, y) is the degraded observed image, f ( x, y) is the true image, h (x, y) is the PSF, n (x, y) is noise, and * is the convolution operator.

If the point-spread function is known or can be estimated, then standard deconvolution methods can be used to estimate the original image. However, in many situations, both the PSF and the information about the original image are unknown.

"The problem of blind deconvolution, extracting both the original image and the PSF from only the measurement of their convolution, may at first seem a futile task," says David Biggs of the department of electrical and electronic engineering at the University of Auckland (Auckland, New Zealand). Indeed, even when any noise factor associated with the imaging system is not considered, there are an infinite number of solutions for f (x, y) and h (x, y). However, if certain constraints, such as non-negativity and finite support are applied to both the original image and the PSF, then the set of solutions can be greatly reduced.

A highly popular method for determining the degraded image is iterative blind deconvolution (IBD) using conjugate gradient algorithms. In the conjugate gradient method, the fast-Fourier transform (FFT) is used to estimate the PSF of the image using the FFT of the degraded image and the image estimate. In this approach, both the image and the PSF are restored in an iterative way. "After a random estimate is made for the true image," says Dimitrios Hatzinakos of the department of electrical and computer engineering at the University of Toronto (Toronto, Ontario, Canada), "the algorithm alternates between the image and Fourier domains enforcing known constraints in each."

A constraint that can be applied to the image is that of finite support, a criterion that does not allow the image to exist outside a certain boundary. If the image estimate does appear outside this boundary, nonzero pixels outside the region are replaced with background pixel values. Negative-value pixels within this region can also be replaced with zero.

Maximum likelihood

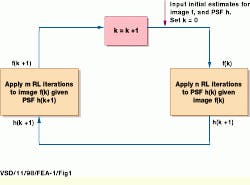

Another approach to IBD uses maximum-likelihood algorithms instead of conjugate gradients. Figure 1 shows a generic implementation of this method. In operation, the initial estimates for the image and the PSF are first determined. Then, using an iterative algorithm, one variable is fixed while the other is updated. A popular algorithm to perform this iteration, the Richardson-Lucy method, was developed independently by both William H. Richardson in 1972 1 and L. B. Lucy in 1974.2

"Because the Richardson-Lucy method is a maximum-likelihood approach used when the image has been contaminated by noise with Poisson statistics, it is especially useful in optical imaging," says Biggs. At the University of Auckland, Biggs and Mark Andrews have used IBD to deconvolve noisy 3-D microscopy specimens labeled with a fluorescent marker. In such applications, light is focused on a particular plane and the object is imaged with a CCD camera. However, out-of-focus light passing through planes above and below the imaging plane result in a PSF that causes blurring. To gain a better estimate of the PSF, many fluorescence microscope systems image fluorescent beads at a number of imaging planes and then compute the PSF of the system.

To highlight the benefits of the Richardson-Lucy IBD method, Biggs and Andrews used a 3-D dataset of a nerve axon obtained from Vaytek (Fairfield, IA) that was composed of 24 serial sections. "After 130 Richardson-Lucy iterations were performed, the algorithm successfully extracted the core of the PSF," says Biggs (see Fig. 2). However, some of the original conic features remained. "Here," says Biggs, "improving the result would only be possible using information about the imaging system, which was unavailable to us." Biggs and Andrews have also used the Richardson-Lucy IBD method to deconvolve noisy images from both scanning electron microscopes and the Hubble Space Telescope.

At the Lund Observatory (Lund, Sweden), Peter Linde and his colleagues have also used the Richardson-Lucy IBD method to deconvolve a set of crowded stellar field images. "Although crowded-field photometry has for the last decade opened up new possibilities for studying many classes of astronomical objects," says Linde, "one of the limitations is the difficulty in detecting overlapping stellar images." To do so, Linde performed a series of tests to evaluate the effects of deconvolution on crowded fields. In his implementation, Linde used a test image that was deconvolved using 100 iterations. At every iteration, a separate image frame was produced and a digital movie constructed to closely inspect the iteration process.

"During the 100 iterations, the resolution increased from 2.5 to 0.9 arc sec," says Linde, "but most of the increase was seen during the first 20 iterations." Although more stars could be seen after the 20 iterations, other artifacts such as background flux distribution also occurred (see Fig. 3). To estimate the impact of this deconvolution on star detection, Linde used computer-based search detection techniques to quantitatively determine the number of stars in the original and deconvolved images. While the Richardson-Lucy IBD method did allow more stars to be detected, increasing the deconvolution procedure beyond 20 iterations forces the PSF function on random structures, allowing artifacts to appear as stars.

Poor convergence

"Although the IBD algorithm is popular for its low computational complexity," says Hatzinakos, "the algorithm is sensitive to initial image estimates and has poor convergence properties." Because of this, other algorithms, such as the non-negativity and support-constrained, recursive inverse filtering (NAS-RIF) algorithm, have been developed.

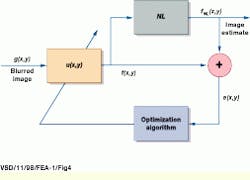

In the NAS-RIF method, developed by Deepa Kundur and Hatzinakos in 1996,3 the observed image g (x, y) is input to a variable finite response filter (FIR) represented as u (x, y). The output of this filter, f (x, y), then represents an estimate of the true image f (x, y) and is passed through a non-linear filter that sets any component of the image that is either outside the finite support or is negative to zero (see Fig. 4). The difference between this result, f NL (x, y) and the estimate of the true image, f (x, y), is then used as an error signal to update the variable filter, u (x, y).

"Unlike the IBD algorithm," says Hatzinakos, "the NAS-RIF algorithm is stable and well-behaved and converges faster for larger images." Better still, because the number of filter parameters is usually smaller than the filter size, the NAS-RIF method requires fewer computations than the IBD method.

At the Department of Computer Science of Wake Forest University (Winston-Salem, NC), Robert Plemmons and his colleagues have used a variation of the NAS-RIF algorithm in the restoration of ground-based telescope data. The variation incorporates new mathematical techniques to produce higher-resolution image restorations. To test the algorithm, Plemmons and his colleagues used a 256 ¥ 256 image of a ocean reconnaissance satellite obtained from the US Air Force Phillips Laboratory at Kirtland Air Force Base (Albuquerque, NM; see Fig. 5a). To produce a degraded image of the satellite, a computer-simulation algorithm was used that produced an image as it would be seen from a ground-based telescope.

"In astronomy applications," says Plemmons, "the empirical estimates of the PSF can sometimes be estimated by imaging a relatively bright, isolated point source such as a natural guide star." Using such a guide star degraded in a similar way to the satellite image produces an image with increased blur and noise and a degraded PSF (see Fig. 5b). Using the knowledge of the PSF degradation, Plemmons and his colleagues applied their variation of the NAS-RIF algorithm to the satellite image (see Fig. 5c and 5d). "As can be seen, use of both the guide-star image and a good estimate of support were useful in the deconvolution of the satellite image," says Plemmons.

In cases where the PSFs of image-processing systems are not known, IBD can prove effective. However, although the algorithm works well in many cases, in others it may fail to converge, and results must be monitored at each iteration to find the best image. Indeed, because IBD is so sensitive to the estimate of the PSF, it may be that, where possible, IBD is best used in conjunction with methods where partial information about both the PSF and the blurred image is known.

references

1. W. H. Richardson, J. Opt. Soc. Am. 62(1), 55 (January 1972).

2. L. B. Lucy, The Astronomical J. 79(6), 745 (June 1974).

3. D. Kundurand and D. Hatzinakos, "Blind image deconvolution: an algorithmic approach to practical image restoration," IEEE Signal Processing Magazine (May 1996).

FIGURE 1. In maximum-likelihood-based iterative blind deconvolution, the initial estimates for the image and the point-spread function are first determined. Then, using an iterative algorithm, one variable is fixed while the other is updated.

FIGURE 2. After 130 Richardson-Lucy iterations were performed on this 3-D dataset of nerve axons, the core of the point-spread function was extracted, and the image displayed as maximum intensity projections in the x-y, x-z, and y-z planes.

FIGURE 3. In crowded-field photometry, a key limitation is the detection of overlapping stellar images. After 100 iterations using the Richardson-Lucy method, the image of this crowded starfield increased the resolution from 2.5 to 0.9 arcsec, and more stars could be seen.

FIGURE 4. Using non-negativity and support-constrained, recursive inverse filtering (NAS-RIF), the observed image is input to a variable finite response filter (FIR). The output, representing an estimate of the true image is then passed through a non-linear filter that sets any component of the image that is either outside the finite support or is negative to zero. The difference between this result and the estimate of the true image is then used as an error signal to update the variable filter.

FIGURE 5. Using a variation of the NAS-RIF algorithm, researchers at Wake Forest University have shown that estimates of the point-spread function from natural guide stars can be used to deconvolve models of satellite images.