Choosing The Right Hardware Platform For Your Next Vision-Guided System

With the inclusion of machine learning and sensor fusion, embedded vision systems are evolving into a more intelligent class of ‘vision-guided’ autonomous systems across a wide range of applications. In ADAS, forward-looking camera technology is evolving into autonomous driving solutions. In factory robotics, more and more collaborative robots (or ‘cobots’) are working alongside their human colleagues. Medical imaging has been transformed by the introduction of automated medical diagnostics that can in some cases be faster and more accurate than the human eye.

These systems typically have three mandates:

- Systems need to think and respond immediately. This mandate demands far faster, more cohesive integration of sensing, processing, analyzing, deciding, communicating, and controlling functions. These systems must also be implemented very efficiently, which can be achieved by using the latest machine-learning inference techniques at 8 bits of precision and below, gaining both speed and efficiency with no loss of system-level accuracy.

- Given the very rapid development pace of neural networks and the rapid evolution of sensors, the flexibility to upgrade embedded-vision systems through hardware/software reconfigurability is a real competitive advantage.

- Most of these new embedded-vision systems are now connected to the Internet in some way (IoT), but they also need to communicate with legacy systems and existing machines, to new machines that may be introduced in the future, and to the cloud.
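The first mandate refers to inference at 8 bits of precision and below. As a rough illustration of what that involves, the sketch below applies symmetric linear quantization to a handful of floating-point weights and measures the rounding error. The function names and sample weights are hypothetical, chosen only for this example; this is not the reVISION flow's quantizer, and real deployments typically calibrate scales per layer or per channel.

```python
def quantize_int8(values):
    """Symmetric linear quantization of floats to signed 8-bit integers.

    Illustrative only: one scale for the whole list, range [-127, 127].
    """
    max_abs = max(abs(v) for v in values)
    # Avoid dividing by zero when every value is 0.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized, scale):
    """Map 8-bit integers back to approximate float values."""
    return [q * scale for q in quantized]


# Hypothetical weights, not taken from any real network.
weights = [0.42, -1.27, 0.003, 0.88, -0.56]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print("scale:", scale)
print("max absolute error:", max_error)
```

Each weight is now stored in one byte instead of four, and the per-weight error is bounded by half the scale. Whether that error is acceptable at the system level depends on the network and has to be checked against the application's accuracy target.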

Xilinx devices and the Xilinx reVISION software-defined development flow uniquely support each of these three mandates with significant, concrete, and measurable advantages over alternatives. They enable the fastest response time from sensors through efficient inference and control; they deliver reconfigurability to support the latest neural networks, algorithms, and sensors; and they support any-to-any connectivity to the Internet (and to the cloud), to legacy networks, and to new machines.

Responsiveness – Lowest Latency from Sensor to Inference and Control

When comparing performance metrics against embedded GPUs and typical SoCs, Xilinx significantly beats the best of this group, Nvidia. Benchmarks of the Zynq SoC-targeted reVISION flow against the Nvidia Tegra X1 have shown up to 6x better images/second/watt in machine learning, 42x higher frames per second for computer vision processing, and 1/5 the latency (in milliseconds), which is critical for real-time applications. As shown in Figure 1, there is huge value in having a rapid and deterministic system response time. The example compares a car with Xilinx reVISION based on Zynq SoCs against a car with an Nvidia Tegra device, each identifying a potential collision and applying the brakes. At 65 mph, depending on how the Nvidia device is implemented, the Xilinx response time advantage translates to 5 to 33 feet of distance, which could easily become the difference between a safe stop and a collision.

Figure 1: Why Response Time Matters: Xilinx vs. Nvidia Tegra X1

- Xilinx: ZU9 running GoogLeNet @ batch = 1

- Nvidia TX1: 256 Cores; running GoogLeNet @ batch = 8

- Assuming the car was driving at 65 mph
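To see how a response-time difference becomes a distance at 65 mph, the following sketch does the unit conversion. It assumes the car holds a constant speed during the extra latency, and the function names are hypothetical. The article does not list the measured latencies behind the 5 to 33 feet range, so the second helper only works backward from those distances to the latency gap they would imply.

```python
FEET_PER_MILE = 5280
SECONDS_PER_HOUR = 3600


def feet_per_second(speed_mph):
    """Convert miles per hour to feet per second."""
    return speed_mph * FEET_PER_MILE / SECONDS_PER_HOUR


def distance_during_latency_ft(speed_mph, latency_s):
    """Distance covered at constant speed while the system is still reacting."""
    return feet_per_second(speed_mph) * latency_s


def latency_gap_for_distance_s(speed_mph, distance_ft):
    """Latency difference implied by a given difference in distance traveled."""
    return distance_ft / feet_per_second(speed_mph)


speed = 65  # mph, as in the figure notes
print(f"{speed} mph = {feet_per_second(speed):.1f} ft/s")
for feet in (5, 33):
    gap_ms = latency_gap_for_distance_s(speed, feet) * 1000
    print(f"{feet} ft corresponds to a latency gap of about {gap_ms:.0f} ms")
```

At 65 mph a car covers roughly 95 feet every second, so under this constant-speed assumption the quoted 5 to 33 feet corresponds to a latency gap of roughly 52 to 346 milliseconds.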

Reconfigurability for Latest Networks and Sensors

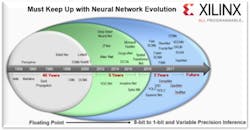

While response time is important, Xilinx solutions also provide unique advantages in reconfigurability. To deploy the best systems with state-of-the-art neural network and machine learning inference efficiency, engineers must be able to optimize both the software and the hardware throughout the product lifecycle. As shown in Figure 2, the last two years of advancements in machine learning have generated more technology than the advancements of the previous 45 years. Many new neural networks are emerging, along with new techniques that make deployment much more efficient. Whatever is specified today, or implemented tomorrow, needs to be ‘future proofed’ through hardware reconfigurability. Only Xilinx All Programmable devices offer this level of reconfigurability.

Figure 2: Why Reconfigurability Matters as Machine Learning Evolves

At the same time, there is a similar requirement for reconfigurability to manage the rapid evolution of sensor technology. The AI revolution has accelerated the development and evolution of sensor technologies across numerous categories. It has also resulted in a mandate for a new level of sensor fusion, combining multiple types of sensors in different combinations to create a full and complete view of the system’s environment and objects in that environment. As with machine learning, whatever sensor configuration is specified today, or implemented tomorrow, needs to be ‘future proofed’ through hardware reconfigurability. Again, only Xilinx All Programmable devices offer this level of reconfigurability.

Any-to-Any Connectivity and Sensor Interfaces

As shown in Figure 3, Zynq-based vision platforms offer robust any-to-any connectivity and sensor interfaces.

Zynq sensor and connectivity advantages include:

- Up to 12x more bandwidth relative to alternative SoCs currently on the market, including support for native 8K and custom resolutions.

- Significantly more high- and low-bandwidth sensor interfaces and channels, enabling highly differentiated combinations of sensors such as RADAR, LiDAR, accelerometers, and force-torque sensors.

- Industry-leading support for the latest data transfer and storage interfaces, easily reconfigured for future standards.

Bottom Line: Xilinx vs. Industry Alternatives

By combining the unique advantages of Zynq-based platforms with software-defined development environments equipped with libraries and support for industry-standard frameworks, Xilinx is positioned as the best choice for vision-based system development. Xilinx is the only option that addresses all three ‘mandates’ for intelligent applications where differentiation and time to market with the latest technologies are critical: responsiveness, reconfigurability, and any-to-any connectivity.

Download papers and learn more about how Xilinx is enabling the most responsive and reconfigurable vision systems in the reVISION Developer Zone.