Enhanced cameras detect targets in the UV spectrum

Andy Wilson, Editor-in-Chief

For more than a decade, detection systems that incorporate sound and vision technologies have been used to automatically determine the location of gunfire. In 2001, for example, the Los Angeles County Sheriff's Department (LASD) in California implemented a gunshot-location imaging system developed by SST that detects gunfire noise and provides imaging locality information to help law-enforcement officers respond rapidly to shooting incidents (see "Acoustics and imagery pinpoint gunfire site," Vision Systems Design, June 2001).

However, to more accurately determine the type of arms used, more sophisticated narrowband, wide-field-of-view (FOV) camera systems are required. As researchers from the United States Naval Research Laboratory have shown, such systems can be readily implemented by determining the spectral content of muzzle flashes (see "Infrared Detection and Geolocation of Gunfire and Ordnance Events from Ground and Air Platforms").

"Gunfire spectral signatures are not just limited to the infrared spectrum," says Jim Chen, president of Delta Commercial Vision. Indeed, research has shown that targets such as the muzzle flash of an AK-47 assault rifle are more effectively detected in the ultraviolet (UV) spectrum.

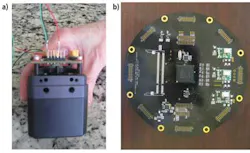

To develop an appropriate system for the United States Army, Delta Commercial Vision has designed a UV imaging system based around a custom UV camera and image processor (see Fig. 1). Rather than use a back-illuminated CCD imager to perform this detection, Chen opted for a UV-enhanced photomultiplier tube such as those from Photonis.

By directly coupling the 20-mm-diameter UV-sensitive coating on the photomultiplier to a fiber-optic taper from Edmund Optics, images are optically transferred to a 1/2-in., 1k × 1k-pixel CMOS imager from Aptina Imaging. To provide an interface to the image sensor, the glass of the imager was removed. Images are then transferred from the camera over a Camera Link Base interface at rates ranging from 40 to 120 frames/sec, depending on the type of sensor used.
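To put those frame rates in context, the raw data rate of a single camera can be estimated with a few lines of arithmetic. The sketch below is illustrative only: it assumes that "1k × 1k" means 1024 × 1024 pixels and that each pixel is transferred as 8 bits, neither of which is stated in the article. The 255-Mbyte/sec ceiling is the commonly quoted maximum for a Camera Link Base configuration (three 8-bit taps at 85 MHz).

```python
# Rough per-camera data-rate estimate.
# Assumptions (not from the article): 1024 x 1024 pixels, 8 bits per pixel.
CAMERA_LINK_BASE_MBYTES_PER_SEC = 255.0  # commonly quoted Base maximum


def data_rate_mbytes_per_sec(width, height, frames_per_sec, bytes_per_pixel=1):
    """Return the uncompressed image data rate in Mbytes/sec (10^6 bytes)."""
    return width * height * frames_per_sec * bytes_per_pixel / 1e6


for fps in (40, 120):
    rate = data_rate_mbytes_per_sec(1024, 1024, fps)
    fits = rate <= CAMERA_LINK_BASE_MBYTES_PER_SEC
    print(f"{fps} frames/sec: {rate:.1f} Mbytes/sec, fits Base link: {fits}")
```

Under these assumptions, even the 120-frames/sec case uses roughly half of the Base link's capacity, which leaves headroom for a higher bit depth.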

"To detect the UV frequencies associated with the AK-47 muzzle flash in daylight," says Chen, "solar UV energy above 300 nm must be eliminated." Chen developed a custom filter that blocks wavelengths from 300 to 700 nm. By blocking this energy, the UV spectral signature of the muzzle flash can be visualized.

For any camera-based system like this to be effective, it must capture as wide an area of the battlefield as possible. Therefore, eight cameras are mounted in a single housing to provide a 360° view of the surrounding area. Image data from these cameras are then processed by the system's onboard FPGA to determine the location of any muzzle flash that may occur (see Fig. 2).
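As a rough illustration of how a detection in one of the eight cameras might be turned into a bearing, the sketch below maps a camera index and pixel column to an azimuth. It is not Delta Commercial Vision's algorithm. It assumes that the cameras are evenly spaced, that each covers exactly 45° (360°/8) with no overlap, that images are 1024 pixels wide, and that pixel position maps linearly to angle; a real system would need a calibrated lens model. The function name `flash_azimuth_deg` is hypothetical.

```python
# Map a muzzle-flash detection to an azimuth around the housing.
# Assumptions (not from the article): evenly spaced cameras, 45-degree
# non-overlapping sectors, 1024-pixel-wide images, linear pixel-to-angle map.
NUM_CAMERAS = 8
SECTOR_DEG = 360.0 / NUM_CAMERAS  # 45 degrees per camera
IMAGE_WIDTH = 1024


def flash_azimuth_deg(camera_index, pixel_column, width=IMAGE_WIDTH):
    """Return the azimuth (0 to 360 degrees) of a flash seen by one camera.

    Camera 0 is taken to look along 0 degrees, camera 1 along 45 degrees,
    and so on around the housing.
    """
    if not 0 <= camera_index < NUM_CAMERAS:
        raise ValueError("camera_index out of range")
    if not 0 <= pixel_column < width:
        raise ValueError("pixel_column out of range")
    # Offset of the pixel centre from the image centre, as a fraction
    # of the image width in the range [-0.5, 0.5).
    fraction = (pixel_column + 0.5) / width - 0.5
    sector_centre = camera_index * SECTOR_DEG
    return (sector_centre + fraction * SECTOR_DEG) % 360.0


# A flash near the centre column of camera 2 lies at roughly 90 degrees.
print(round(flash_azimuth_deg(2, 512), 1))
```

The modulo operation keeps the result in the 0 to 360° range when a flash falls on the left side of camera 0's image, where the raw angle would otherwise be negative.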

Positional data from the camera system are then transferred over Ethernet to the system's host PC. Here, the image data are correlated with an array of acoustic data captured by omnidirectional acoustic sensors. The data can then be used to trigger automatic weapons systems mounted on High Mobility Multipurpose Wheeled Vehicles (HMMWVs).
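The article does not describe how the optical and acoustic data are correlated. One simple possibility, sketched here under stated assumptions, is to pair each optical flash with any acoustic event that arrives shortly afterward from a similar bearing. The sketch assumes that the acoustic array already yields a bearing estimate for each event; the `Detection` class, the `correlate` function, and the tolerance values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    time_s: float       # detection time in seconds
    bearing_deg: float  # estimated bearing, 0 to 360 degrees


def angular_difference_deg(a, b):
    """Smallest absolute angle between two bearings, handling wraparound."""
    diff = abs(a - b) % 360.0
    return min(diff, 360.0 - diff)


def correlate(flashes, sounds, max_delay_s=3.0, max_bearing_error_deg=10.0):
    """Pair optical flashes with acoustic events that plausibly match.

    Sound travels far more slowly than light, so a matching acoustic event
    must arrive at or after the flash, within max_delay_s (hypothetical
    tolerance), and from roughly the same direction.
    """
    pairs = []
    for flash in flashes:
        for sound in sounds:
            delay = sound.time_s - flash.time_s
            close_in_time = 0.0 <= delay <= max_delay_s
            close_in_bearing = (
                angular_difference_deg(flash.bearing_deg, sound.bearing_deg)
                <= max_bearing_error_deg
            )
            if close_in_time and close_in_bearing:
                pairs.append((flash, sound))
    return pairs


flashes = [Detection(10.0, 92.0)]
sounds = [Detection(10.8, 88.0), Detection(10.5, 270.0), Detection(9.0, 92.0)]
print(len(correlate(flashes, sounds)))
```

In this example only the first acoustic event matches: the second comes from the wrong direction, and the third precedes the flash.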

According to Chen, the system has already been tested in bright sunlit conditions and has been shown to identify muzzle flashes from AK-47 rifles at rates up to 40 frames/sec. While sophisticated narrowband, wide-FOV camera systems such as this can be deployed to detect specific types of arms, multispectral imaging systems that incorporate UV, visible, and infrared imagers will likely prove to be even more effective.