Infrared cameras enhance diagnostic medical imaging

Andy Wilson, Editor-in-Chief

Many machine-vision applications rely on visible-light cameras; however, more specialized applications demand imaging in the nonvisible spectrum. Those involved in developing military, medical, and spectroscopy systems may require infrared (IR) cameras that use different technologies to capture radiation from the shortwave-infrared (SWIR) to the longwave-infrared (LWIR) spectrum (see "The Infrared Choice," Vision Systems Design, April 2011).

To meet the needs of these niche applications, a camera must accurately measure radiation at specific wavelengths. Developing medical imaging systems, for example, demands an understanding of the spectral absorption and emission characteristics of specific tissue types. By leveraging these known characteristics, IR systems can discriminate between healthy live tissue and cancerous tumors.
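The article does not say how any particular system compares measured spectra with known tissue signatures. One common technique in spectral imaging is the spectral angle mapper, which treats each spectrum as a vector and assigns a pixel to the reference spectrum it points closest to. The sketch below is a minimal illustration under that assumption; the function names and any reference spectra you pass in are hypothetical placeholders, not measured tissue data.

```python
import math
from typing import Dict, Sequence


def spectral_angle(a: Sequence[float], b: Sequence[float]) -> float:
    """Angle in radians between two spectra sampled at the same wavelengths."""
    if len(a) != len(b):
        raise ValueError("spectra must be sampled at the same wavelengths")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("spectra must not be all zeros")
    # Clamp to [-1, 1] so floating-point round-off cannot push acos out of range.
    cos_theta = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cos_theta)


def classify_spectrum(pixel: Sequence[float], references: Dict[str, Sequence[float]]) -> str:
    """Return the label of the (hypothetical) reference spectrum closest in angle to the pixel."""
    return min(references, key=lambda label: spectral_angle(pixel, references[label]))
```

Because the angle ignores the overall length of each vector, a pixel that is uniformly brighter or dimmer than a reference still matches it, which reduces sensitivity to uneven illumination.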

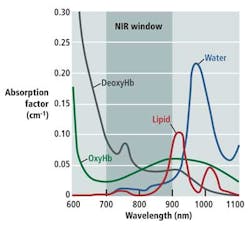

As Douglas Malchow, business development manager for industrial products at UTC Aerospace Systems, explains, many medical diagnostic tools take advantage of the optical, or therapeutic, window: the range of wavelengths in which light penetrates tissue most deeply. Between 700 and 900 nm, the combined absorption of oxygenated hemoglobin (HbO2), deoxygenated hemoglobin (Hb), and water (H2O) reaches a minimum, making the therapeutic window ideal for medical imaging (see figure).
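The reasoning behind the therapeutic window can be expressed as a simple calculation: add the absorption spectra of the main absorbers at each wavelength and find where the total is lowest. The sketch below assumes absorption coefficients sampled on a common wavelength grid, which a reader would take from published tables; no real spectra are included here, and the function names are hypothetical. Like the description above, it models absorption only and ignores scattering.

```python
from typing import Dict, List, Sequence, Tuple


def total_absorption(wavelengths_nm: Sequence[float],
                     absorbers: Dict[str, Sequence[float]]) -> List[float]:
    """Sum the absorption coefficients of all absorbers at each sampled wavelength."""
    for name, spectrum in absorbers.items():
        if len(spectrum) != len(wavelengths_nm):
            raise ValueError(f"{name} spectrum does not match the wavelength grid")
    return [sum(values) for values in zip(*absorbers.values())]


def optical_window(wavelengths_nm: Sequence[float],
                   absorbers: Dict[str, Sequence[float]]) -> Tuple[float, float]:
    """Return (wavelength of minimum combined absorption, that minimum value)."""
    totals = total_absorption(wavelengths_nm, absorbers)
    best = min(range(len(totals)), key=totals.__getitem__)
    return wavelengths_nm[best], totals[best]
```

A caller would pass a dictionary such as `{"HbO2": [...], "Hb": [...], "H2O": [...]}`, with each list holding coefficients in the same units at the same wavelengths.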

"[Near-infrared] NIR fluorescence imaging through deep tissue sections depends upon the low absorbance but high scattering properties of tissues in this wavelength range and enables deep tissue penetration," says Dr. Milton V. Marshall from the University of Texas Health Science Center. As Marshall points out, numerous imaging systems that leverage this therapeutic window are currently being evaluated in clinical trials (see "Near-Infrared Fluorescence Imaging in Humans with Indocyanine Green: A Review and Update" from The Open Surgical Oncology Journal, 2, 2010). These systems include the Photodynamic Eye (PDE) from Hamamatsu, the SPY Imaging System from Novadaq, IC-View from Pulsion Medical Systems, and the Fluorescence-Assisted Resection and Exploration (FLARE) System developed at Boston's Beth Israel Deaconess Medical Center.

Each of these systems requires that the patient first receive an intravenous injection of an NIR fluorophore such as indocyanine green (ICG). Because ICG absorbs light between 600 and 900 nm and fluoresces between 750 and 950 nm, the fluorophore can be located by illuminating tissue with NIR light at one wavelength and detecting the longer-wavelength fluorescence that the dye emits.
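The band logic in the paragraph above can be checked with a few lines of code: the excitation light must fall inside the dye's absorption band, and the detector should see only the part of the emission band that lies above the excitation wavelengths, so that reflected excitation light is not mistaken for fluorescence. This is a simplified sketch with hypothetical function names; it treats bands as ideal intervals, whereas a real emission filter would also need a guard band at the edge.

```python
from typing import Optional, Tuple

Band = Tuple[float, float]  # (low_nm, high_nm), treated as an ideal interval


def overlaps(a: Band, b: Band) -> bool:
    """True if the two wavelength intervals share any wavelength."""
    return a[0] <= b[1] and b[0] <= a[1]


def detection_band(absorption: Band, emission: Band, excitation: Band) -> Optional[Band]:
    """Portion of the emission band lying above the excitation band.

    Returns None if the dye cannot absorb the excitation light, or if no
    emission remains once the excitation wavelengths are blocked.
    """
    if not overlaps(excitation, absorption):
        return None
    low = max(emission[0], excitation[1])
    high = emission[1]
    return (low, high) if low < high else None
```

Using the ICG bands quoted above and the 750 to 810 nm excitation used with the PDE in the study described next, `detection_band((600, 900), (750, 950), (750, 810))` returns `(810, 950)`, a range that contains the 845 nm emission peak reported there.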

At Nara Medical University, Dr. Naoto Furukawa has used the technique to highlight lymphatic vessels and lymph nodes in the treatment of cervical cancer ("The usefulness of photodynamic eye for sentinel lymph node identification in patients with cervical cancer," Tumori, 96, 2010). Using the PDE from Hamamatsu, tissue was first irradiated with NIR light from 750 to 810 nm. The PDE then detected fluorescence from ICG bound to lipoprotein, which peaks at 845 nm, to produce real-time images. Lymph nodes that remained continuously bright were identified as sentinel lymph nodes (SLNs), the first nodes to receive lymphatic drainage from a tumor, which can then be examined to determine whether the cancer has spread.
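In the study, surgeons identified the continuously bright nodes by eye. A rough numerical analogue, offered only as an illustration, is to flag pixels whose fluorescence stays above a threshold in every frame of a sequence. The threshold value, the function name, and the assumption that the frames are already aligned (no patient or camera motion) are all hypothetical.

```python
from typing import List, Sequence

Frame = Sequence[Sequence[float]]  # 2-D grid of fluorescence intensities


def persistently_bright(frames: Sequence[Frame], threshold: float) -> List[List[bool]]:
    """Mark pixels whose intensity is at or above threshold in every frame.

    Assumes all frames have the same shape and are spatially registered.
    """
    if not frames:
        raise ValueError("need at least one frame")
    rows, cols = len(frames[0]), len(frames[0][0])
    return [
        [all(frame[r][c] >= threshold for frame in frames) for c in range(cols)]
        for r in range(rows)
    ]
```

Requiring brightness in every frame, rather than in a single frame, suppresses pixels that light up only briefly as the dye passes through lymphatic vessels.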

Hamamatsu recently upgraded the original PDE with the introduction of the pde-neo, which retains the fluorescence imaging capability of its predecessor and adds several new functions, including color and close-up imaging.

Discerning specific features during surgery is also the aim of the FLARE imaging system developed by Professor John Frangioni and his colleagues at the Beth Israel Deaconess Medical Center. The system lets the surgeon view a color video image together with two NIR channels that can be used to distinguish tumors from other tissues such as nerves and blood vessels.

In its original incarnation, the FLARE system used dichroic mirrors to direct light to a color video camera and two different NIR cameras. In this way, the FLARE imaging head was capable of simultaneous, real-time image acquisition from all three cameras. Using two NIR cameras made it possible to identify lymph nodes and to locate any SLNs that may be present. Adding pseudocolor allows each type of structure to be color coded: all nodes are displayed in red, SLNs and lymphatic channels in green, and regions where the two overlap appear yellow (see "The FLARE™ Intraoperative Near-Infrared Fluorescence Imaging System: A First-in-Human Clinical Trial in Breast Cancer Sentinel Lymph Node Mapping," Annals of Surgical Oncology, July 2009).
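The red/green/yellow scheme works because red and green light combine to yellow on an RGB display. The sketch below blends two normalized NIR channel intensities onto one color-video pixel to show this; which NIR channel drives which color, and the blending rule (stronger fluorescence hides more of the video pixel), are assumptions for illustration rather than the FLARE system's documented processing.

```python
from typing import Tuple

RGB = Tuple[int, int, int]


def pseudocolor_overlay(color: RGB, nir_red: float, nir_green: float) -> RGB:
    """Blend two NIR intensities (expected range 0..1) onto a color-video pixel.

    nir_red drives the red channel and nir_green the green channel, so a pixel
    that is bright in both channels is rendered yellow.
    """
    # Clip out-of-range intensities to [0, 1].
    nr = min(max(nir_red, 0.0), 1.0)
    ng = min(max(nir_green, 0.0), 1.0)
    # Hypothetical rule: the stronger channel sets how opaque the overlay is.
    alpha = max(nr, ng)
    overlay = (255 * nr, 255 * ng, 0.0)
    r, g, b = (round((1 - alpha) * c + alpha * o) for c, o in zip(color, overlay))
    return r, g, b
```

A pixel with no fluorescence keeps its video color, a pixel bright only in the "red" channel turns red, and a pixel saturated in both channels becomes `(255, 255, 0)`, or yellow.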

According to Frangioni, the first-generation FLARE system was large and expensive to build and had a heavy imaging head. Today, a smaller version known as the mini-FLARE performs the same task using custom optics from Qioptiq, an IMC-80F visible-light camera from IMI Technology Co. Ltd., dual-bandpass emission filters from Chroma Technology, and a model EC1020 camera from Prosilica, now part of Allied Vision Technologies (see "Toward Optimization of Imaging System and Lymphatic Tracer for Near-Infrared Fluorescent Sentinel Lymph Node Mapping in Breast Cancer," Annals of Surgical Oncology, March 2011).

While ICG is a useful marker for many medical imaging applications, Frangioni and his colleagues are currently examining ways to engineer NIR-fluorescent dyes that bind to specific cancer cells. Such dyes would be useful in breast cancer surgery, for example, where surgeons remove the tumor along with some of the normal tissue surrounding it; a tumor-specific dye could help show where the cancer ends. With such advances, IR-based fluorescence imaging systems are likely to become widespread in more specialized medical imaging applications.