Those who left the Automated Imaging Association's (AIA) 2013 Business Conference early missed what were perhaps two of the most interesting presentations. Both focused on object recognition and scene understanding, with the aim of highlighting the latest research on the algorithms used to perform such tasks.

The first presentation by Aude Oliva, PhD, principal research scientist with the Computer Science and Artificial Intelligence Lab (CSAIL) at the Massachusetts Institute of Technology (www.csail.mit.edu), was entitled "Frontiers in Computer Vision: From Human to Artificial Vision" and delighted the audience with a series of images designed to highlight the differences between human and machine perception.

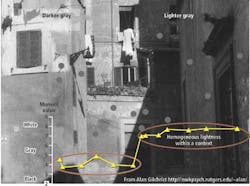

Oliva first presented the audience with an image from Professor Alan Gilchrist of Rutgers University (http://nwkpsych.rutgers.edu/~alan/) to show the limitations of human perception (see figure). Although the dots within the image all have the same gray value, the human visual system perceives them as varying in gray value depending on the context in which they are placed. Thus, the gray dots on the darker background appear lighter than the gray dots on the light background. While a computer could easily ascertain that these dots share the same gray value, the human visual system interprets gray values using contextual background information.
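The point that a computer can trivially confirm the dots are identical can be sketched in a few lines. The gray levels, patch size, and the `contrast_to_background` helper below are assumptions chosen for illustration; they are not taken from Gilchrist's image, and the contrast figure is only a crude stand-in for context, not a model of human vision.

```python
# Hypothetical 8-bit gray levels, chosen only for illustration.
DARK_BG, LIGHT_BG, DOT = 40, 215, 128

def make_patch(background, dot, size=9, radius=2):
    """Return a size x size grid of gray values with a round dot at the center."""
    c = size // 2
    return [
        [dot if (x - c) ** 2 + (y - c) ** 2 <= radius ** 2 else background
         for x in range(size)]
        for y in range(size)
    ]

def center_value(patch):
    """Gray value of the pixel at the center of the dot."""
    c = len(patch) // 2
    return patch[c][c]

def contrast_to_background(patch):
    """Dot value minus the corner (background) value: a crude context measure."""
    return center_value(patch) - patch[0][0]

dot_on_dark = make_patch(DARK_BG, DOT)
dot_on_light = make_patch(LIGHT_BG, DOT)

# A direct pixel comparison shows the two dots are the same gray.
same_gray = center_value(dot_on_dark) == center_value(dot_on_light)

# Relative to their surroundings, the dots differ in sign:
# brighter than the dark background, darker than the light one.
contrast_dark = contrast_to_background(dot_on_dark)
contrast_light = contrast_to_background(dot_on_light)
```

Here the absolute comparison says the dots match, while the background-relative value is positive in one patch and negative in the other, which loosely mirrors why the dots look different to a human observer.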

The perception of whether a particular scene matches another is also subject to imperfect human memory. When presented with a number of images of typical rooms in a house and then presented with similar, but not identical, images, many in the audience (including myself) believed that they had viewed the same scenes before.

Here again, researchers are using a number of different algorithms to classify whether images are similar. These classification algorithms make use of a number of different feature descriptors, including global visual features (such as GIST), the scale-invariant feature transform (SIFT), and histograms of oriented gradients (HOG). However, even when deploying such algorithms, Oliva showed that large-scale scene categorization could be performed only 50% as effectively as by human beings.
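To give a flavor of what a gradient-based descriptor computes, the following is a minimal sketch of a single-cell orientation histogram. It is a simplification, not the full HOG pipeline (which divides the image into cells and normalizes over overlapping blocks) and not anything shown in the presentation. The function name, the 9-bin layout, and the two toy edge images are assumptions made for illustration.

```python
import math

def orientation_histogram(img, n_bins=9):
    """Magnitude-weighted histogram of unsigned gradient orientations (0-180 deg)."""
    h, w = len(img), len(img[0])
    hist = [0.0] * n_bins
    bin_width = 180.0 / n_bins
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Central differences approximate the image gradient.
            gx = img[y][x + 1] - img[y][x - 1]
            gy = img[y + 1][x] - img[y - 1][x]
            mag = math.hypot(gx, gy)
            if mag == 0:
                continue  # flat region, no orientation to vote for
            angle = math.degrees(math.atan2(gy, gx)) % 180.0
            b = min(int(angle / bin_width), n_bins - 1)
            hist[b] += mag
    # L2-normalize so the descriptor is less sensitive to overall contrast.
    norm = math.sqrt(sum(v * v for v in hist)) or 1.0
    return [v / norm for v in hist]

# Toy 8x8 images: a vertical edge and a horizontal edge.
vertical_edge = [[0] * 4 + [255] * 4 for _ in range(8)]
horizontal_edge = [[0] * 8 for _ in range(4)] + [[255] * 8 for _ in range(4)]

hist_v = orientation_histogram(vertical_edge)
hist_h = orientation_histogram(horizontal_edge)
```

The vertical edge puts all of its weight in the bin around 0 degrees and the horizontal edge in the bin containing 90 degrees, so two images with different structure yield different descriptors that a classifier can then compare.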

Professor David Forsyth, PhD, of the University of Illinois at Urbana-Champaign (http://illinois.edu) took up the subject of image classification in his presentation entitled "Computer Vision: The Modern Approach," showing that classification accuracy improves as the number of training samples increases.

Environmental knowledge can also be used in many classification approaches, and Forsyth showed how it could make scene classification more efficient. In a typical street scene, for example, it is highly unlikely that a pedestrian or motor vehicle would be found atop a tree, or, in an ocean view, that a car would be located at sea.

However, as Forsyth pointed out, "people like weird images." To the audience's delight, an image of a lion riding a horse was shown as a typical (Photoshopped) example. Thus, although drawing on environmental knowledge may be useful in some applications, it is no panacea for image classification problems.
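Both sides of this argument, the benefit of environmental knowledge and its failure on unusual images, can be sketched by reweighting a classifier's appearance scores with a context prior. This is a hypothetical illustration rather than Forsyth's method: the `rescore` function, the labels, and every number below are assumptions.

```python
def rescore(appearance_scores, context_prior):
    """Multiply appearance scores by a context prior and renormalize to sum to 1."""
    weighted = {
        label: score * context_prior.get(label, 0.0)
        for label, score in appearance_scores.items()
    }
    total = sum(weighted.values())
    if total == 0:
        return dict(appearance_scores)  # prior rules out everything; fall back
    return {label: value / total for label, value in weighted.items()}

def best_label(scores):
    """Label with the highest score."""
    return max(scores, key=scores.get)

# Hypothetical prior: what is plausibly found atop a tree in a street scene.
TREETOP_PRIOR = {"bird": 0.90, "pedestrian": 0.05, "car": 0.05}
# Hypothetical ambiguous appearance scores for a blurry region in the tree.
ambiguous_region = {"bird": 0.40, "pedestrian": 0.45, "car": 0.15}

without_context = best_label(ambiguous_region)
with_context = best_label(rescore(ambiguous_region, TREETOP_PRIOR))

# Hypothetical prior: what is plausibly riding a horse.
RIDER_PRIOR = {"person": 0.98, "lion": 0.02}
# The "weird image": appearance evidence favors a lion.
weird_region = {"person": 0.30, "lion": 0.70}

weird_guess = best_label(rescore(weird_region, RIDER_PRIOR))
```

In the first case the prior corrects an ambiguous guess from "pedestrian" to "bird." In the second, the same mechanism overrides fairly strong appearance evidence and labels the lion a "person," which is exactly the kind of error a deliberately odd image provokes.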

But are image classification procedures that rely on algorithms such as SIFT a true model of how human beings perceive images? When asked, Forsyth replied that this was the realm of other researchers and that, for now, if an algorithm proved useful for a specific task, then it would be used. Copies of both Oliva's and Forsyth's presentations are available on the AIA web site at www.visiononline.org/events/event.cfm?id=114.
