Neural network standard streamlines machine learning tech development

Several neural network frameworks for deep learning exist, each offering distinct features and functionality. Transferring neural networks between frameworks, however, costs developers extra time and work. The Khronos Group, an open consortium of leading hardware and software companies creating advanced acceleration standards, has developed NNEF (Neural Network Exchange Format), an open, royalty-free standard that allows hardware manufacturers to reliably exchange trained neural networks between training frameworks and inference engines.

Neural networks are trained using a variety of frameworks and are then deployed on a similarly wide variety of inference engines, each of which has its own proprietary format. This diversity is highly desirable, but it is also where the problem lies. Developers must build proprietary importers and exporters to deploy networks across different inference engines. For the researchers, developers, and data scientists tasked with building, training, and deploying the networks, this is extra, unnecessary work.

The problem begins with the number of available training frameworks: Caffe, TensorFlow, Chainer, Theano, Caffe2, PyTorch, and more. While these offer developers different functionality and optimizations, each training framework also has its own format for the networks developed in it, making transfers between frameworks cumbersome and time-consuming.

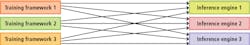

The inferencing stage has a similar set of issues. Converters are needed before a trained network can be deployed. As Figure 1 shows, every inference engine requires an importer for every training framework. This transfer process means extra work for developers, yet it adds nothing to the creation or implementation of deployed products and systems.
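The scale of that converter burden can be sketched with simple arithmetic. The short Python example below is only an illustration: the framework names come from the article, while the engine names and the count of four engines are hypothetical placeholders. It compares one importer per framework-engine pair with one exporter per framework plus one importer per engine, which is the arrangement a shared exchange format makes possible.

```python
# Frameworks named in the article.
frameworks = ["Caffe", "TensorFlow", "Chainer", "Theano", "Caffe2", "PyTorch"]
# Hypothetical placeholders: the article does not name specific inference engines.
engines = ["engine_a", "engine_b", "engine_c", "engine_d"]


def pairwise_converters(n_frameworks: int, n_engines: int) -> int:
    """Without a shared format: one importer per (framework, engine) pair."""
    return n_frameworks * n_engines


def shared_format_converters(n_frameworks: int, n_engines: int) -> int:
    """With a shared format: one exporter per framework, one importer per engine."""
    return n_frameworks + n_engines


pairwise = pairwise_converters(len(frameworks), len(engines))
shared = shared_format_converters(len(frameworks), len(engines))
print(f"pairwise converters: {pairwise}, shared-format converters: {shared}")
```

Under these assumed numbers the pairwise count grows as a product while the shared-format count grows as a sum, so the gap widens with each framework or engine added.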

With no standard means of transfer, developers are forced to spend time creating and maintaining converters for exporting and importing neural networks, and longer development time means wasted money, too. Perhaps more importantly, fragmentation also threatens innovation in the machine learning space. Time spent needlessly reformatting networks for import and export is time developers cannot dedicate to making real, tangible advances for machine learning in embedded vision and inferencing.

Although fragmentation is a complex problem, the solution is quite simple: a streamlined process for transferring trained neural networks to new inference engines, in effect a PDF for neural networks. In the same way that the Portable Document Format (PDF) has enabled easy transfer of documents, NNEF allows developers, researchers, and data scientists to easily transfer their networks from a training framework to an inference engine without having to spend extra time translating or adding exporters.

A universal transfer standard that is comprehensive, extensible, and well supported, and that all parts of the machine learning ecosystem can depend on, would cut down on time wasted on transfer and translation and, ultimately, empower the industry to move forward to reach real implementation goals.

By describing trained networks and their weights independently of training frameworks and inference engines, NNEF empowers data scientists and engineers to transfer trained networks from their chosen training framework into a variety of inference engines. This freedom of exchange, coupled with the savings in time and development costs, will help spur advancements in emerging machine learning tech by giving developers the much-needed time and means to focus on innovation, rather than busy work that adds no value.
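The idea of describing a network and its weights independently of any framework or engine can be illustrated with a toy example. The Python sketch below is not NNEF and does not mirror its syntax or file layout; the `NetworkDescription` and `Operation` classes, the JSON encoding, and the `export_network` and `import_network` helpers are all hypothetical names chosen for illustration. It shows only the general pattern: an exporter writes a neutral description, and an importer rebuilds it without knowing which framework produced it.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class Operation:
    """One node of the graph (hypothetical structure, not the NNEF schema)."""
    name: str
    inputs: list
    outputs: list
    attributes: dict = field(default_factory=dict)


@dataclass
class NetworkDescription:
    """Framework-neutral graph structure plus trained weights."""
    name: str
    inputs: list
    outputs: list
    operations: list
    weights: dict  # tensor name -> nested lists of floats


def export_network(net: NetworkDescription) -> str:
    """Exporter side: serialize the neutral description to text."""
    return json.dumps(asdict(net), sort_keys=True)


def import_network(text: str) -> NetworkDescription:
    """Importer side: rebuild the description from text alone."""
    raw = json.loads(text)
    raw["operations"] = [Operation(**op) for op in raw["operations"]]
    return NetworkDescription(**raw)


# A tiny one-layer network used purely as sample data.
net = NetworkDescription(
    name="tiny_classifier",
    inputs=["x"],
    outputs=["y"],
    operations=[
        Operation("linear", ["x", "w", "b"], ["z"]),
        Operation("relu", ["z"], ["y"]),
    ],
    weights={"w": [[0.5, -1.0], [2.0, 0.25]], "b": [0.0, 1.0]},
)

text = export_network(net)
restored = import_network(text)
print(restored.name, [op.name for op in restored.operations])
```

The design point is that `import_network` depends only on the neutral description, so a training framework needs one exporter and an inference engine needs one importer, regardless of how many tools sit on the other side.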

Khronos allows industry members to participate in the development of NNEF either as direct contributors or as advisers, making for a transparent standard that serves the needs of the whole industry.

Editor’s note: This article was contributed by Peter McGuinness, NNEF Working Group Chair, The Khronos Group (Beaverton, OR, USA; www.khronos.org).
