Machine Vision Adds Traceability to Packaging

Garth Gaddy

Some product cases are easier to label with barcodes than others. When a single type of produce with a uniform size, weight, and variety is packed into cases over a period of many hours, for example, the same barcode can be placed repeatedly on those cases.

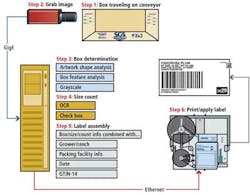

Stone fruit such as peaches, plums, and nectarines is different. Boxes moving down a production line vary randomly in case type and size, as well as in the size and grade of the produce they hold (see Fig. 1). The barcode labeling process must be as dynamic as the packaging line.

To enable produce to be tracked through the supply chain, a barcode must be dynamically created and affixed to each case of produce. This barcode contains information about the produce, its variety, relative size and packaging, the farm where it was produced, the packing company, and the date of packaging. Any automated system must therefore analyze the size of each box and the identifying marks placed on it by an operator to indicate the number and variety of produce within.

At New Leaf Produce, Mike Jost needed a system that would place a traceable barcode label on each case of the company's stone fruit. Jost approached Vision Sort to develop an in-line vision-based system that can identify the type of case, produce size, and number of products packed (see Fig. 2). The data are then used to generate GS1-128 barcode labels that are affixed to cases as they travel along a conveyor at approximately 60 boxes/min.

Smart vision

As a case of produce moves under the inspection system, its presence is detected by a photodetector from Banner Engineering, which triggers a pair of 12-in. LC300 LED strobe lights from Smart Vision Lights positioned at a 30° angle and a monochrome 2-Mpixel, 2/3-in. format, 50 frames/sec ace camera from Basler.

Images from the camera are transferred over a GigE interface to an Intel-based multicore PC. To identify the types of cases, Gaddy used the HALCON 11 vision software package from MVTec Software. Before the HALCON software can identify the boxes of produce, the system must be trained. Images of each type of box are captured as the boxes travel through the system, and shape model files are created by analyzing the physical dimensions of the box and the artwork printed on it (see Fig. 3). In practice, some boxes require as many as six shape models to be captured before one box can be distinguished from another.

Shape models of the cases are then compared with previously trained models to rank, or score, the potential matches that are found. All models that are found to have a score above a set minimum are then run through a scoring algorithm that ensures the correct case is identified by the software. Should two cases appear similar in all but color, a grayscale analysis is performed on the image to make the distinction between cases.
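The selection step described above can be sketched in a few lines of Python. This is only an illustration, not the Vision Sort implementation: the `Match` record, the `min_score` and `tie_margin` values, and the use of a single mean gray level as the tie-breaker are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Match:
    case_type: str    # hypothetical case-type name
    score: float      # shape-match score, assumed to lie in [0, 1]
    mean_gray: float  # mean gray level recorded when the case was trained


def pick_case(matches, observed_gray, min_score=0.7, tie_margin=0.02):
    """Return the most likely case type, or None if no match clears min_score."""
    # Keep only matches above the minimum score, best first.
    candidates = sorted(
        (m for m in matches if m.score >= min_score),
        key=lambda m: m.score,
        reverse=True,
    )
    if not candidates:
        return None
    best = candidates[0]
    # Matches scoring within tie_margin of the best are treated as having the
    # same shape, so the gray level of the image decides between them.
    tied = [m for m in candidates if best.score - m.score <= tie_margin]
    if len(tied) > 1:
        best = min(tied, key=lambda m: abs(m.mean_gray - observed_gray))
    return best.case_type
```

With two boxes that share the same artwork but differ in color, the shape scores will be nearly equal and the observed gray level settles the choice; a candidate below the minimum score is never considered.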

Once the case type has been identified, the location of marked checkboxes is analyzed to determine the number and size of the fruit in the case. Where the produce type has instead been indicated by a sticker or a manual stamp on the case, an optical character recognition (OCR) function reads the characters.
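One simple way to decide whether a checkbox has been marked is to count the dark pixels inside its known region. The sketch below assumes a grayscale image held as a list of rows and a table of regions per case type; the function name, the `dark_level` and `fill_fraction` thresholds, and the region names are hypothetical, and the article does not say which method the production system uses.

```python
def marked_checkboxes(image, regions, dark_level=128, fill_fraction=0.25):
    """Return the names of the regions that look marked.

    image   -- list of rows, each a list of gray values from 0 to 255
    regions -- mapping of name to (top, left, height, width) in pixels
    """
    marked = []
    for name, (top, left, height, width) in regions.items():
        pixels = [
            image[y][x]
            for y in range(top, top + height)
            for x in range(left, left + width)
        ]
        dark = sum(1 for p in pixels if p < dark_level)
        # A box counts as marked when enough of its area is dark ink.
        if dark / len(pixels) >= fill_fraction:
            marked.append(name)
    return marked
```

Because the regions are looked up only after the case type is known, each case design can place its checkboxes anywhere on the box.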

The system can search through more than 100 shape models and can classify the case type and determine which checkboxes have been checked by the packers in about 65 msec, after which a label is printed and affixed to the case. The total time taken to identify and affix a label to a case is approximately 0.5 sec.
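The figures quoted here and earlier in the article imply a comfortable timing margin. The short calculation below uses only those published numbers and assumes, for simplicity, that cases are handled strictly one at a time with no overlap between stages.

```python
BOXES_PER_MIN = 60     # conveyor rate quoted in the article
LABEL_CYCLE_S = 0.5    # total time to identify and label one case
VISION_S = 0.065       # time to classify the case and read the checkboxes

box_interval_s = 60 / BOXES_PER_MIN           # time available per case
headroom_s = box_interval_s - LABEL_CYCLE_S   # spare time per case
max_rate_per_min = 60 / LABEL_CYCLE_S         # rate at which headroom hits zero
vision_share = VISION_S / LABEL_CYCLE_S       # fraction of cycle spent on vision

print(f"{box_interval_s:.1f} s per case, {headroom_s:.1f} s headroom")
print(f"limit about {max_rate_per_min:.0f} cases/min, vision {vision_share:.0%}")
```

Under that assumption a case arrives about once per second, so the 0.5-sec cycle uses roughly half the available time, and image analysis accounts for a small part of the cycle.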

After the model of the case has been identified, a CLV620-0120 barcode reader from SICK reads a barcode sticker previously affixed to the back of the case to identify the packager of the produce. These data, together with the box identification data, are logged into a database and can be recalled later for productivity, report generation, or payroll purposes.

Once the case type, size, and number of items of produce have been identified, the data are used to create a barcode identifier label. The label data are transferred over an Ethernet link to an S84 print engine from Sato, which prints the barcode label; a 250 label applicator from IDTechnology then affixes it to the case.
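To illustrate what such a label might carry, the following sketch builds the human-readable form of a GS1-128 element string. The article does not state which GS1 Application Identifiers the system uses, so the choice of (01) GTIN, (13) packaging date, and (10) batch/lot is an assumption, as are the function names and sample values. The check digit follows the standard GS1 modulo-10 rule.

```python
from datetime import date


def gtin_check_digit(data_digits: str) -> int:
    """GS1 mod-10 check digit: weights 3, 1, 3, ... starting from the right."""
    total = 0
    for i, ch in enumerate(reversed(data_digits)):
        total += int(ch) * (3 if i % 2 == 0 else 1)
    return (10 - total % 10) % 10


def gs1_128_text(gtin_body: str, packed_on: date, lot: str) -> str:
    """Build the human-readable element string for a case label.

    gtin_body -- the 13 digits of a GTIN-14 before the check digit
    lot       -- hypothetical lot code (AI 10 is variable length, so it goes last)
    """
    if len(gtin_body) != 13 or not gtin_body.isdigit():
        raise ValueError("gtin_body must be exactly 13 digits")
    gtin14 = gtin_body + str(gtin_check_digit(gtin_body))
    return f"(01){gtin14}(13){packed_on:%y%m%d}(10){lot}"
```

The parentheses appear only in the printed text; in the symbol itself the printer or barcode library encodes the identifiers and any required FNC1 separators.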

System control

A graphical user interface (GUI) written in Microsoft Visual Studio C# enables the user to control all the parameters of the system from a single touch panel (see Fig. 4). The same software handles the interface to the vision inspection software, the label printing and application tasks, and the data logging, report generation, and alarm handling functions.

In addition to identifying the cases of produce and applying a barcode label, the system also maintains a Microsoft SQL relational database that contains parameters such as where the vision software should examine images for the checkboxes on each type of case and at what location the barcode should be placed. The software can also generate Excel files on demand, which contain pertinent reporting information such as system statistics, worker productivity, piece rate tallying, and box totals.
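A parameter store of this kind can be modeled with two small tables: one row per case type holding the label position, and one row per checkbox region. The sketch below uses Python's built-in `sqlite3` module purely as a stand-in for the Microsoft SQL database; the table names, column names, and sample values are invented for illustration.

```python
import sqlite3

SCHEMA = """
CREATE TABLE case_type (
    id         INTEGER PRIMARY KEY,
    name       TEXT UNIQUE NOT NULL,
    label_x_mm REAL NOT NULL,
    label_y_mm REAL NOT NULL
);
CREATE TABLE checkbox_region (
    case_type_id INTEGER NOT NULL REFERENCES case_type(id),
    meaning      TEXT NOT NULL,
    top_px       INTEGER NOT NULL,
    left_px      INTEGER NOT NULL,
    height_px    INTEGER NOT NULL,
    width_px     INTEGER NOT NULL
);
"""


def open_config_db(path=":memory:"):
    """Create the (hypothetical) configuration tables and return the connection."""
    conn = sqlite3.connect(path)
    conn.executescript(SCHEMA)
    return conn


def add_case_type(conn, name, label_xy_mm, regions):
    """Store a case type, its label position, and its checkbox regions."""
    cur = conn.execute(
        "INSERT INTO case_type (name, label_x_mm, label_y_mm) VALUES (?, ?, ?)",
        (name, *label_xy_mm),
    )
    conn.executemany(
        "INSERT INTO checkbox_region VALUES (?, ?, ?, ?, ?, ?)",
        [(cur.lastrowid, meaning, *box) for meaning, box in regions.items()],
    )
    conn.commit()


def lookup(conn, name):
    """Return ((label_x, label_y), {meaning: region}) or None if unknown."""
    row = conn.execute(
        "SELECT id, label_x_mm, label_y_mm FROM case_type WHERE name = ?",
        (name,),
    ).fetchone()
    if row is None:
        return None
    case_id, x, y = row
    regions = {
        meaning: (top, left, height, width)
        for meaning, top, left, height, width in conn.execute(
            "SELECT meaning, top_px, left_px, height_px, width_px "
            "FROM checkbox_region WHERE case_type_id = ?",
            (case_id,),
        )
    }
    return (x, y), regions
```

Keeping these parameters in a database rather than in code means a new case design can be added by inserting rows, without changing the inspection software.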

Custom Visual Studio C# software also monitors critical system parameters, such as whether the printer is low or out of labels. If these parameters exceed alarm levels, a text message is sent to maintenance personnel.
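The monitoring logic amounts to comparing readings against limits and notifying someone when a limit is crossed. The sketch below is a simplified Python illustration, not the C# software described here; the reading names, the limits, and the `notify` callback (which would wrap whatever text-message service is in use) are assumptions.

```python
def check_alarms(readings, minimums, notify):
    """Call notify(message) for every reading that has fallen below its minimum.

    readings -- mapping of parameter name to current value
    minimums -- mapping of parameter name to the lowest acceptable value
    notify   -- callable that delivers a message, e.g. an SMS gateway wrapper
    """
    raised = []
    for name, minimum in minimums.items():
        value = readings.get(name)
        # Missing readings are skipped here; a real system might alarm on them.
        if value is not None and value < minimum:
            notify(f"ALARM {name}: {value} (minimum {minimum})")
            raised.append(name)
    return raised
```

Passing the notifier in as a function keeps the threshold check independent of how the message is actually delivered.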

Because cases of many different sizes travel down the conveyor, the system must be able to position the barcode label at a specific location according to the type of case. A custom-built positioning system aligns each box vertically and horizontally in front of the label application tool so that the label can be affixed in exactly the right position.

Future models

Although the systems shipped so far use just one camera to identify and label the cases, future systems will employ two, or even three, cameras to further increase functionality. For example, a second camera will capture an image of the front of the cases as they move down the conveyor, enabling labels affixed to them by the packers to be automatically identified. A third camera placed in the hood of the vision system will capture images of the fruit in open cases. These images can then be analyzed to identify what type of produce the cases contain, obviating the need for packers to physically mark the checkboxes on the cases.

The Vision Sort system was developed to minimize the impact on the user's process and to be fast enough that the average packing operation needs only one system per packing line rather than several. Two of the current single-camera systems have been in use since June 2012 at New Leaf Produce. Additional units were installed later in the summer of 2012 at two other California grower-shipper facilities. Multiple-camera systems are expected to be shipped to customers during the course of 2013.

Garth Gaddy is president of Vision Sort (Reedley, CA, USA; http://visionsort.org).

Company Info

Banner Engineering

www.bannerengineering.com

Basler

www.baslerweb.com

IDTechnology

www.idtechnology.com

MVTec Software

www.mvtec.com

SICK

www.sick.com

Smart Vision Lights

www.smartvisionlights.com

Vision Sort

http://visionsort.org