Year In Review 2019: Wonders in vision systems design never cease

As in any tech-centric industry, new techniques and technologies in machine vision and image processing often create enthusiasm that morphs readily into hype. The line between hype and efficacy lies in successful implementation. Vision Systems Design, throughout 2019, has chronicled the space where the hype behind new technologies ends and the tally of useful applications begins.

Our recent Solutions in Vision 2020 global audience survey necessarily focused on some of the hottest vision technologies (deep learning, hyperspectral/multispectral imaging, polarization, embedded vision, 3D imaging, and computational imaging), on who is using them now, and on when vision professionals expect to be using them in the future. We have also covered these technologies throughout the year, both to demonstrate their current importance and to understand the directions in which they will continue to mature in the vision industry.

Deep learning

Deep learning, or teaching machines how to process and act upon information independently of human interaction (past the training phase), is a paradigm-changing technology in many fields. In the vision market, one of the earliest and now best-established uses of deep learning techniques is industrial inspection, for example in PCB inspection systems.

Deep learning techniques are rapidly being deployed in many other sectors of the vision world. Deep learning software is used to analyze satellite images of houses for solar power potential surveys. Local governments have used deep learning to catalog the locations of street signs.

Deep learning technology has advanced so far that it can be deployed on smartphones to provide personalized skin care routines or detect vision disorders in children, and can even allow off-the-shelf cameras to see around corners.

Hyperspectral/multispectral imaging

Hyperspectral and multispectral imaging have historically been deployed for astronomical studies and on orbiting satellites, for uses like general land surveys and monitoring climate change.

In recent years, hyperspectral/multispectral imaging has become a popular technology for agricultural applications like general crop health measurement and detecting diseased crops specifically. Advanced agricultural applications like digital plant phenotyping also utilize this technology.

The degree to which hyperspectral/multispectral imaging is being deployed outside these two fields, for uses like measuring consumer electronics displays, improving image quality for tumor surgeries, and monitoring nuclear fusion reactors, demonstrates the wide potential for this vision technology in the future.

Polarization

The usefulness of polarization in vision applications feels long-established, yet there are still applications for polarization that integrators may not be aware of, like reducing reflections, analyzing thin coatings, and detecting changes in slope.

With the advent of on-chip polarizers, and new possibilities for miniaturization that may bring polarization technology to smartphones, we can expect “the book” on polarization in the vision industry to see many new chapters written in the very near future.

Embedded vision

“Embedded vision” is a difficult technology to capture in a single definition. At its most basic, embedded vision technology is designed to operate on very small platforms, like drones. Industrial PCs designed for factory installations as standalone units also fit the definition of embedded technology.

In March, Kwabena Agyeman, President and Co-founder of OpenMV, told the story of how the development of a cheap serial camera module inspired the creation of a popular open-source embedded camera platform. OpenMV is just one of many open-source architectures for embedded vision. The growth of this sector in the vision industry is also aided by low-cost development tools.

Thanks to these efforts, the definition of “embedded vision” will continue to blur as more and more applications that could fall into this category are designed and implemented. In the case of embedded vision, an unclear definition could be taken as a sign of success!

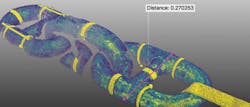

3D imaging

The rising ubiquity of 3D imaging is demonstrated by the cavalcade of varied 3D applications we’ve covered throughout 2019, like bonding wire inspection, harvesting sweet peppers, logistics e-fulfillment, improving the quality of arc welds, and trimming foam car interior parts.

3D laser scanners can be used to inspect underwater structures, make air travel safer by studying ice accretion on aircraft wings, and automatically profile ground vehicles.

The introduction of affordable 3D cameras like Intel RealSense, which allows waterborne drone swarms to communicate, and Microsoft’s Azure Kinect, which powers an innovative 4D tracking system, will allow integrators and engineers even more leeway to develop new vision systems that make use of this extremely useful technology.

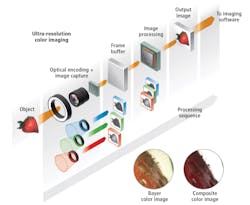

Computational imaging

Computational imaging often acts in a supporting role for other systems, as computational imaging is at heart a method for enhancing captured images, for instance merging images taken under different conditions. Photometric stereo, one computational imaging technique, can be used to enhance image contrast by combining images with varied directional illumination.
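To make the photometric stereo idea concrete, here is a minimal sketch in plain Python. It is an illustration only, not the method used by any system mentioned in this article. It assumes exactly three images of a matte (Lambertian) surface, each lit from a known direction; the function names `solve3` and `photometric_stereo` and the sample light directions are hypothetical. For each pixel it solves a small linear system to separate surface reflectance (albedo) from surface orientation (the normal), which is what lets subtle relief stand out from printed or colored features.

```python
import math


def solve3(a, b):
    """Solve the 3x3 linear system a @ x = b using Cramer's rule."""
    def det(m):
        return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
                - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
                + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

    d = det(a)
    if abs(d) < 1e-12:
        raise ValueError("light directions must not be coplanar")
    solution = []
    for col in range(3):
        # Replace one column of a with b, then take the determinant ratio.
        m = [row[:] for row in a]
        for row in range(3):
            m[row][col] = b[row]
        solution.append(det(m) / d)
    return solution


def photometric_stereo(images, lights):
    """Estimate per-pixel albedo and unit normals from three images.

    images: three 2D lists of intensities, all the same size.
    lights: three unit light-direction vectors, one per image (assumed known).
    Assumes a Lambertian surface with no shadows: I = albedo * dot(light, normal).
    """
    height, width = len(images[0]), len(images[0][0])
    albedo = [[0.0] * width for _ in range(height)]
    normals = [[(0.0, 0.0, 0.0)] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            intensities = [img[y][x] for img in images]
            g = solve3(lights, intensities)  # g = albedo * normal
            rho = math.sqrt(sum(v * v for v in g))
            albedo[y][x] = rho
            if rho > 0:
                normals[y][x] = tuple(v / rho for v in g)
    return albedo, normals


if __name__ == "__main__":
    # Hypothetical setup: one overhead light and two tilted lights.
    lights = [[0.0, 0.0, 1.0], [0.6, 0.0, 0.8], [0.0, 0.6, 0.8]]
    # A single-pixel "image" per light, synthesized for a flat surface.
    images = [[[0.5]], [[0.4]], [[0.4]]]
    print(photometric_stereo(images, lights))
```

Practical systems typically use more than three lights and a least-squares fit to cope with shadows and specular highlights; the three-light case is shown here only because it keeps the linear algebra small.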

Computational imaging algorithms allow researchers to take photographs at great distances using only a single photon emitted from a LiDAR system. Computationally created high dynamic range images can be used to measure paint coating thickness on steel products. Vision system developers may only have scratched the surface of what computational imaging can accomplish, and Vision Systems Design will keep watch on their progress in 2020 and beyond.